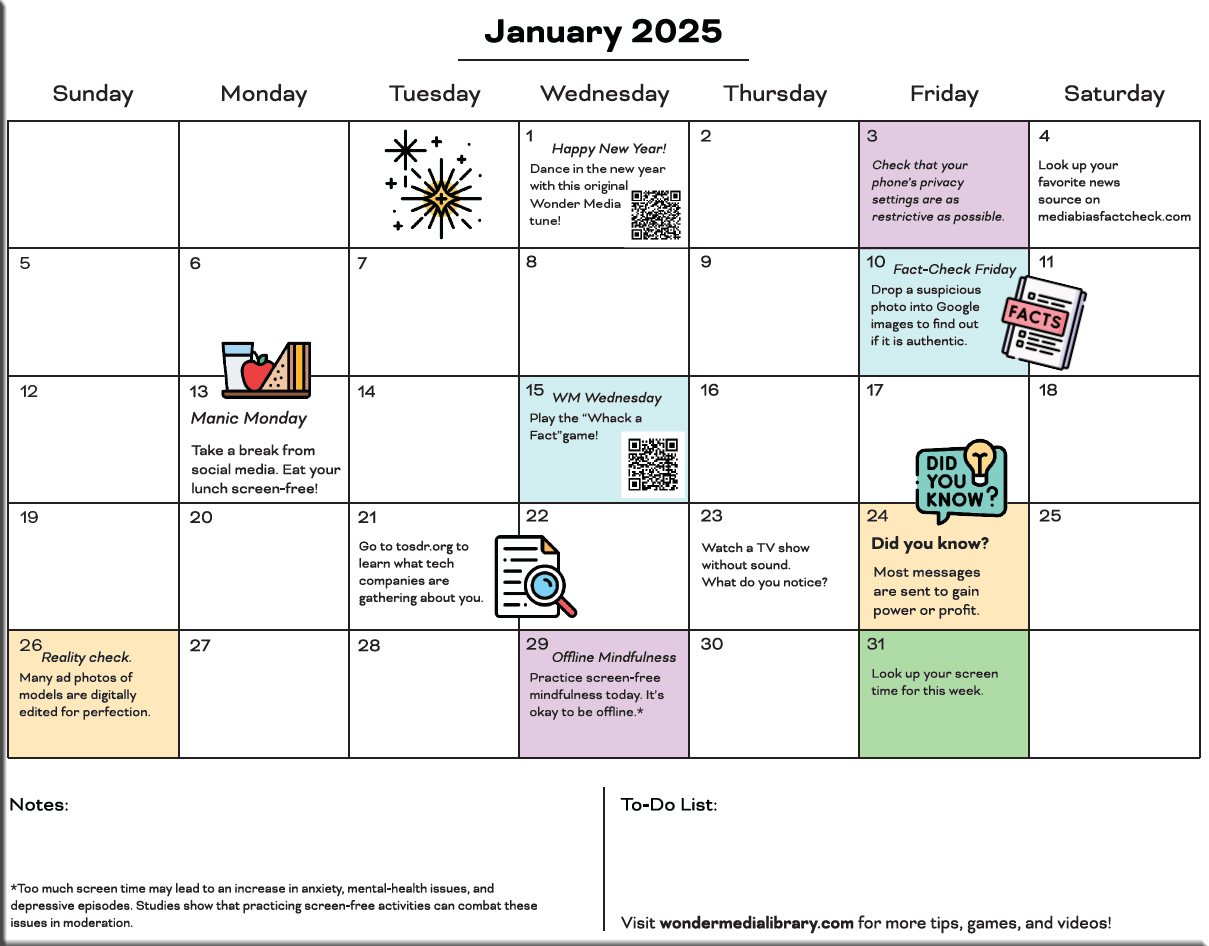

The state of AI in early 2024: Gen AI adoption spikes and starts to generate value — from mckinsey.com

As generative AI adoption accelerates, survey respondents report measurable benefits and increased mitigation of the risk of inaccuracy. A small group of high performers lead the way.

If 2023 was the year the world discovered generative AI (gen AI), 2024 is the year organizations truly began using—and deriving business value from—this new technology. In the latest McKinsey Global Survey on AI, 65 percent of respondents report that their organizations are regularly using gen AI, nearly double the percentage from our previous survey just ten months ago. Respondents’ expectations for gen AI’s impact remain as high as they were last year, with three-quarters predicting that gen AI will lead to significant or disruptive change in their industries in the years ahead.

Organizations are already seeing material benefits from gen AI use, reporting both cost decreases and revenue jumps in the business units deploying the technology. The survey also provides insights into the kinds of risks presented by gen AI—most notably, inaccuracy—as well as the emerging practices of top performers to mitigate those challenges and capture value.

What’s the future of AI? — from mckinsey.com

AI is here to stay. To outcompete in the future, organizations and individuals alike need to get familiar fast. This series of McKinsey Explainers dives deep into the seven technologies that are already shaping the years to come.

We’re in the midst of a revolution. Just as steam power, mechanized engines, and coal supply chains transformed the world in the 18th century, AI technology is currently changing the face of work, our economies, and society as we know it. We don’t know exactly what the future will look like. But we do know that these seven technologies will play a big role.

Generate an e-book in minutes with groqbook — from heatherbcooper.substack.com by Heather Cooper

Plus new Canva workflow tools, Perplexity Pages, and more

The new Canva

Canva announced “a whole new Canva” to improve workplace collaborative creation and a revamped platform to simplify its tools for anyone to use.

At Canva Create, several AI features were announced that enhance the design and content creation process:

- Magic Design: Upload an image and select a style to get a curated selection of personalized templates.

- Magic Write: An AI-powered copywriting assistant that can generate written content from a text prompt, useful for presentations and website copy.

- Magic Eraser: This feature can remove unwanted objects or backgrounds from images.

- Magic Edit: Users can swap an object with something else entirely using generative AI.

- Beat Sync: Automatically matches video footage to a soundtrack of your choice.

- Translate: Automatically translates text in designs to over 100 different languages.

Tools are the next big thing in AI — from link.wired.com by Will Knight

Things might get more interesting in business settings as AI companies start deploying so-called “AI agents,” which can take action by operating other software on a computer or via the internet.

Anthropic, a competitor to OpenAI, announced a major new product today that attempts to prove the thesis that tool use is needed for AI’s next leap in usefulness.