Miscommunication Leads AI-Based Hiring Tools Astray — from adigaskell.org

Nearly every Fortune 500 company now uses artificial intelligence (AI) to screen resumes and assess test scores to find the best talent. However, new research from the University of Florida suggests these AI tools might not be delivering the results hiring managers expect.

The problem stems from a simple miscommunication between humans and machines: AI thinks it’s picking someone to hire, but hiring managers only want a list of candidates to interview.

Without knowing about this next step, the AI might choose safe candidates. But if it knows there will be another round of screening, it might suggest different and potentially stronger candidates.

AI agents explained: Why OpenAI, Google and Microsoft are building smarter AI agents — from digit.in by Jayesh Shinde

In the last two years, the world has seen breakneck advancement in the Generative AI space, from text-to-text and text-to-image to text-to-video capabilities. All of that has been a stepping stone toward the next big AI breakthrough – AI agents. According to Bloomberg, OpenAI is preparing to launch its first autonomous AI agent, codenamed ‘Operator,’ as soon as January 2025.

Apparently, this OpenAI agent – or Operator, as it’s codenamed – is designed to perform complex tasks independently. By understanding user commands through voice or text, this AI agent will seemingly handle tasks such as controlling different applications on the computer, sending emails, and booking flights, among other things. That is work that ChatGPT, Copilot, Google Gemini or any other LLM-based chatbot just can’t do on its own.

2025: The year ‘invisible’ AI agents will integrate into enterprise hierarchies — from venturebeat.com by Taryn Plumb

In the enterprise of the future, human workers are expected to work closely alongside sophisticated teams of AI agents.

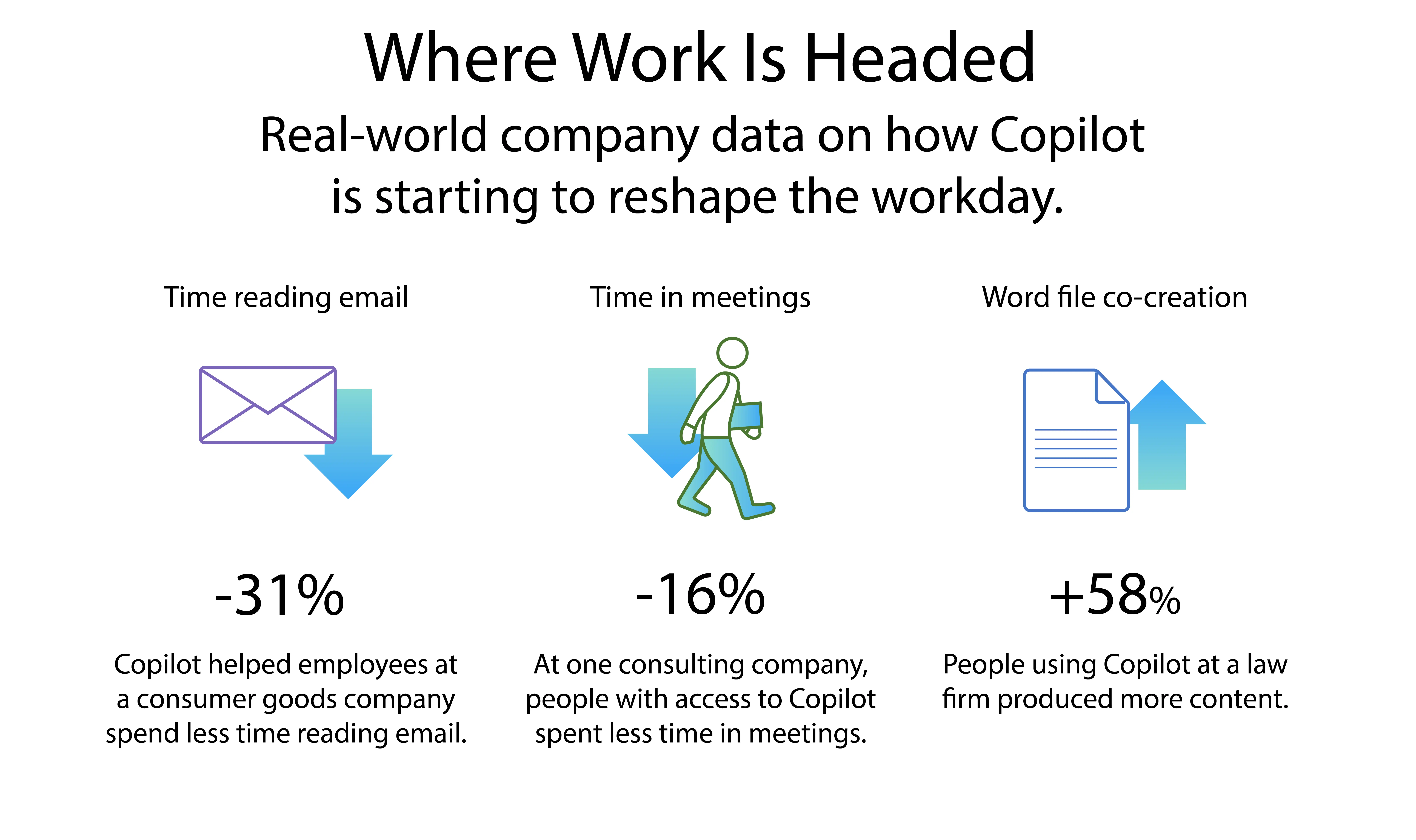

According to McKinsey, generative AI and other technologies have the potential to automate 60 to 70% of employees’ work. And, already, an estimated one-third of American workers are using AI in the workplace — oftentimes unbeknownst to their employers.

However, experts predict that 2025 will be the year that these so-called “invisible” AI agents begin to come out of the shadows and take more of an active role in enterprise operations.

“Agents will likely fit into enterprise workflows much like specialized members of any given team,” said Naveen Rao, VP of AI at Databricks and founder and former CEO of MosaicML.

State of AI Report 2024 Summary — from ai-supremacy.com by Michael Spencer

Part I: Consolidation, emergence and adoption.

Which AI Image Model Is the Best Speller? Let’s Find Out! — from whytryai.com by Daniel Nest

I test 7 image models to find those that can actually write.

- DALL-E 3 by OpenAI (via Microsoft Designer)

- FLUX1.1 [pro] by Black Forest Labs (via Glif)

- Ideogram 2.0 by Ideogram (via Ideogram)

- Imagen 3 by Google (via ImageFX)

- Midjourney 6.1 by Midjourney (via Midjourney)

- Recraft V3 by Recraft (via Recraft)

- Stable Diffusion 3.5 Large by Stability AI (via Hugging Face)

How to get started with AI agents (and do it right) — from venturebeat.com by Taryn Plumb

So how can enterprises choose when to adopt third-party models, open source tools or build custom, in-house fine-tuned models? Experts weigh in.

OpenAI, Google and Anthropic Are Struggling to Build More Advanced AI — from bloomberg.com (behind paywall)

Three of the leading artificial intelligence companies are seeing diminishing returns from their costly efforts to develop newer models.

OpenAI and others seek new path to smarter AI as current methods hit limitations — from reuters.com by Krystal Hu and Anna Tong

Summary

- AI companies face delays and challenges with training new large language models

- Some researchers are focusing on more time for inference in new models

- Shift could impact AI arms race for resources like chips and energy

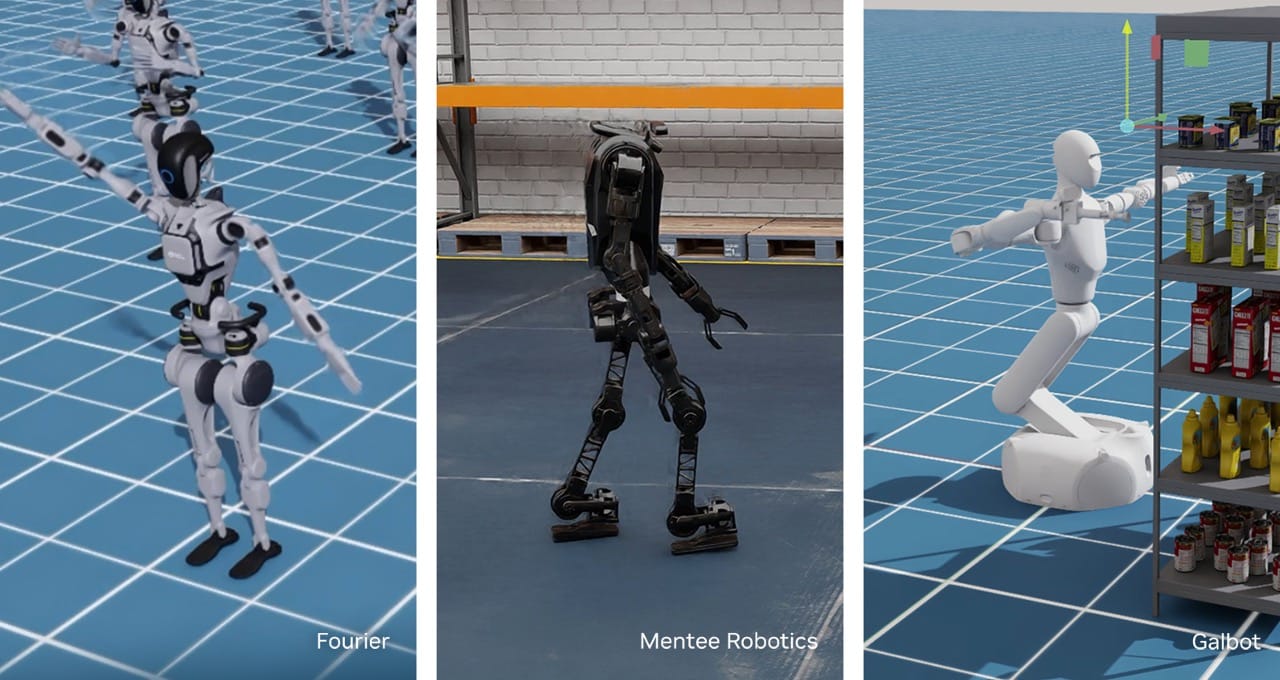

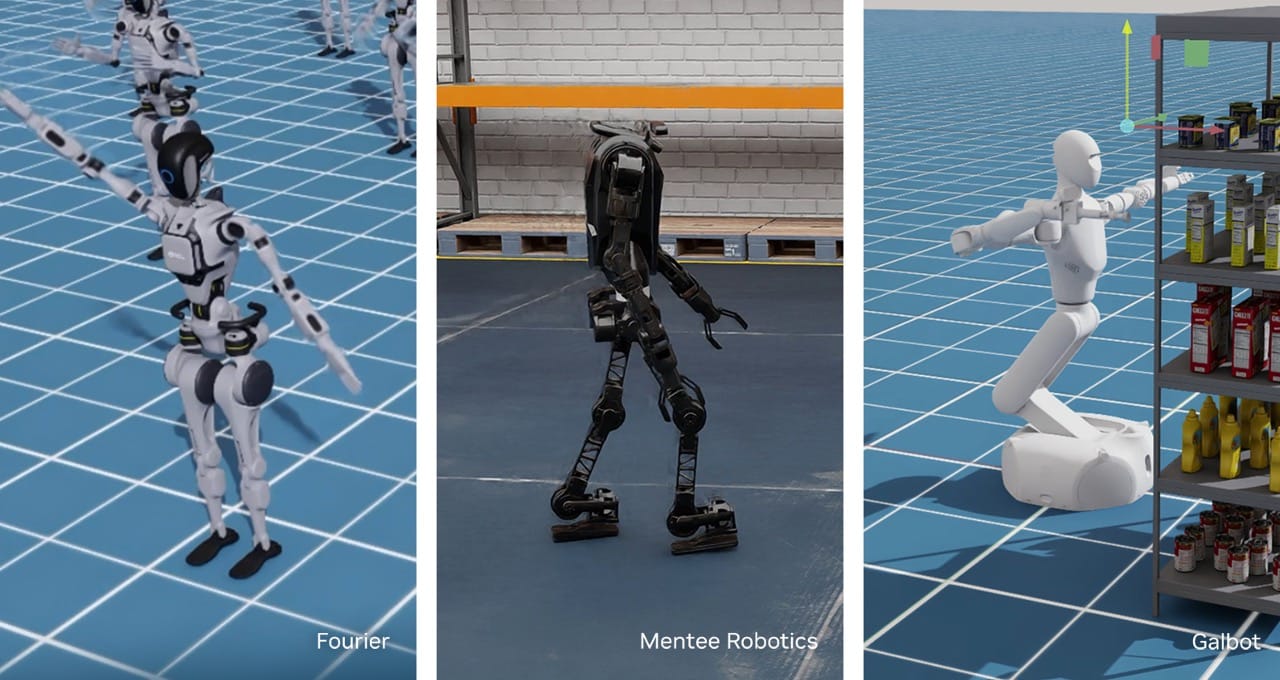

NVIDIA Advances Robot Learning and Humanoid Development With New AI and Simulation Tools — from blogs.nvidia.com by Spencer Huang

New Project GR00T workflows and AI world model development technologies to accelerate robot dexterity, control, manipulation and mobility.

How Generative AI is Revolutionizing Product Development — from intelligenthq.com

A recent report from McKinsey predicts that generative AI could unlock $2.6 trillion to $4.4 trillion in value annually within product development and innovation across various industries. This staggering figure highlights just how significantly generative AI is set to transform the landscape of product development. Generative AI app development is driving innovation by using advanced algorithms to generate new ideas, optimize designs, and personalize products at scale. It is also becoming a cornerstone of competitive advantage in today’s fast-paced market. As businesses look to stay ahead, understanding and integrating technologies like generative AI app development into product development processes is becoming more crucial than ever.

What are AI Agents: How To Create a Based AI Agent — from ccn.com by Lorena Nessi

Key Takeaways

- AI agents handle complex, autonomous tasks beyond simple commands, showcasing advanced decision-making and adaptability.

- The Based AI Agent template by Coinbase and Replit provides an easy starting point for developers to build blockchain-enabled AI agents.

- AI based agents specifically integrate with blockchain, supporting crypto wallets and transactions.

- Securing API keys in development is crucial to protect the agent from unauthorized access.

What are AI Agents and How Are They Used in Different Industries? — from rtinsights.com by Salvatore Salamone

AI agents enable companies to make smarter, faster, and more informed decisions. From predictive maintenance to real-time process optimization, these agents are delivering tangible benefits across industries.