Introducing Eureka Labs — “We are building a new kind of school that is AI native.” — by Andrej Karpathy, Previously Director of AI @ Tesla, founding team @ OpenAI

However, with recent progress in generative AI, this learning experience feels tractable. The teacher still designs the course materials, but they are supported, leveraged and scaled with an AI Teaching Assistant who is optimized to help guide the students through them. This Teacher + AI symbiosis could run an entire curriculum of courses on a common platform. If we are successful, it will be easy for anyone to learn anything, expanding education in both reach (a large number of people learning something) and extent (any one person learning a large amount of subjects, beyond what may be possible today unassisted).

Excited to share that I am starting an AI+Education company called Eureka Labs.

The announcement:

We are Eureka Labs and we are building a new kind of school that is AI native. How can we approach an ideal experience for learning something new? For example, in the case… pic.twitter.com/RHPkqdjB8R

— Andrej Karpathy (@karpathy) July 16, 2024

After Tesla and OpenAI, Andrej Karpathy’s startup aims to apply AI assistants to education — from techcrunch.com by Rebecca Bellan

Andrej Karpathy, former head of AI at Tesla and researcher at OpenAI, is launching Eureka Labs, an “AI native” education platform. In tech speak, that usually means built from the ground up with AI at its core. And while Eureka Labs’ AI ambitions are lofty, the company is starting with a more traditional approach to teaching.

San Francisco-based Eureka Labs, which Karpathy registered as an LLC in Delaware on June 21, aims to leverage recent progress in generative AI to create AI teaching assistants that can guide students through course materials.

What does it mean for students to be AI-ready? — from timeshighereducation.com by David Joyner

Not everyone wants to be a computer scientist, a software engineer or a machine learning developer. We owe it to our students to prepare them with a full range of AI skills for the world they will graduate into, writes David Joyner

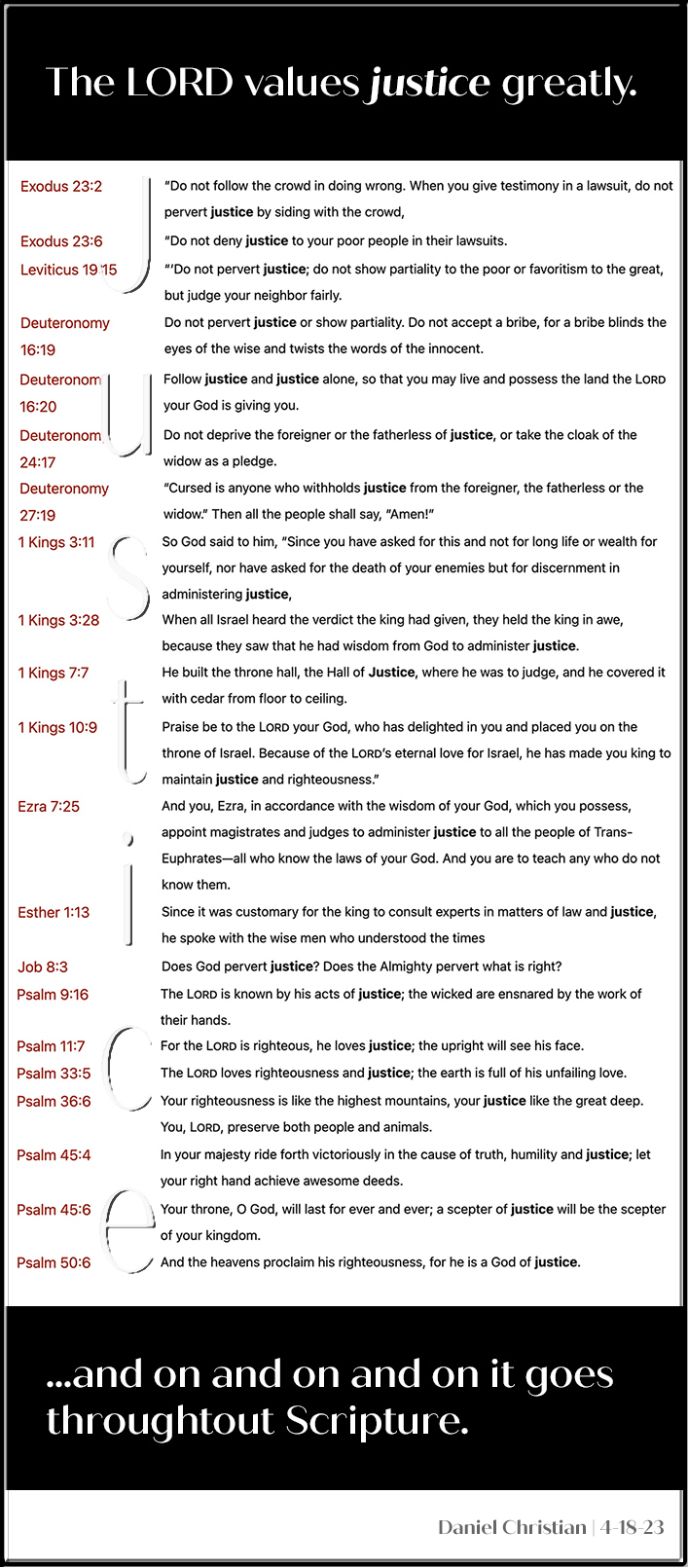

We owe it to our students to prepare them for this full range of AI skills, not merely the end points. The best way to fulfil this responsibility is to acknowledge and examine this new category of tools. More and more tools that students use daily – word processors, email, presentation software, development environments and more – have AI-based features. Practising with these tools is a valuable exercise for students, so we should not prohibit that behaviour. But at the same time, we do not have to just shrug our shoulders and accept however much AI assistance students feel like using.

Teachers say AI usage has surged since the school year started — from eschoolnews.com by Laura Ascione

Half of teachers report an increase in the use of AI and continue to seek professional learning

Fifty percent of educators reported an increase in AI usage, by both students and teachers, over the 2023–24 school year, according to The 2024 Educator AI Report: Perceptions, Practices, and Potential, from Imagine Learning, a digital curriculum solutions provider.

The report offers insight into how teachers’ perceptions of AI use in the classroom have evolved since the start of the 2023–24 school year.

OPINION: What teachers call AI cheating, leaders in the workforce might call progress — from hechingerreport.org by C. Edward Watson and Jose Antonio Bowen

Authors of a new guide explore what AI literacy might look like in a new era

Excerpt (emphasis DSC):

But this very ease has teachers wondering how we can keep our students motivated to do the hard work when there are so many new shortcuts. Learning goals, curriculums, courses and the way we grade assignments will all need to be reevaluated.

The new realities of work also must be considered. A shift in employers’ job postings rewards those with AI skills. Many companies report already adopting generative AI tools or anticipate incorporating them into their workflow in the near future.

A core tension has emerged: Many teachers want to keep AI out of our classrooms, but also know that future workplaces may demand AI literacy.

What we call cheating, business could see as efficiency and progress.

It is increasingly likely that using AI will emerge as an essential skill for students, regardless of their career ambitions, and that action is required of educational institutions as a result.

Teaching Writing With AI Without Replacing Thinking: 4 Tips — by Erik Ofgang

AI has a lot of potential for writing students, but we can’t let it replace the thinking parts of writing, says writing professor Steve Graham

Reconciling these two goals — having AI help students learn to write more efficiently without hijacking the cognitive benefits of writing — should be a key goal of educators. Finding the ideal balance will require more work from both researchers and classroom educators, but Graham shares some initial tips for doing this currently.

Why I ban AI use for writing assignments — from timeshighereducation.com by James Stacey Taylor

Students may see handwriting essays in class as a needlessly time-consuming approach to assignments, but I want them to learn how to engage with arguments, develop their own views and convey them effectively, writes James Stacey Taylor

Could they use AI to generate objections to the arguments they read? Of course. AI does a good job of summarising objections to Singer’s view. But I don’t want students to parrot others’ objections. I want them to think of objections themselves.

Could AI be useful for them in organising their exegesis of others’ views and their criticisms of them? Yes. But, again, part of what I want my students to learn is precisely what this outsources to the AI: how to organise their thoughts and communicate them effectively.

How AI Will Change Education — from digitalnative.tech by Rex Woodbury

Predicting Innovation in Education, from Personalized Learning to the Downfall of College

This week explores how AI will bleed into education, looking at three segments of education worth watching, then examining which business models will prevail.

- Personalized Learning and Tutoring

- Teacher Tools

- Alternatives to College

- Final Thoughts: Business Models and Why Education Matters

New Guidance from TeachAI and CSTA Emphasizes Computer Science Education More Important than Ever in an Age of AI — from csteachers.org by CSTA

The guidance features new survey data and insights from teachers and experts in computer science (CS) and AI, informing the future of CS education.

SEATTLE, WA – July 16, 2024 – Today, TeachAI, led by Code.org, ETS, the International Society for Technology in Education (ISTE), Khan Academy, and the World Economic Forum, launches a new initiative in partnership with the Computer Science Teachers Association (CSTA) to support and empower educators as they grapple with the growing opportunities and risks of AI in computer science (CS) education.

…

The briefs draw on early research and insights from CSTA members, organizations in the TeachAI advisory committee, and expert focus groups to address common misconceptions about AI and offer a balanced perspective on critical issues in CS education, including:

- Why is it Still Important for Students to Learn to Program?

- How Are Computer Science Educators Teaching With and About AI?

- How Can Students Become Critical Consumers and Responsible Creators of AI?