The Future of College in an AI World — from linkedin.com by Jeff Selingo

In today’s issue: The tension over AI in higher ed; application inflation continues and testing is back; what’s the future of the original classroom technology, the learning management system.

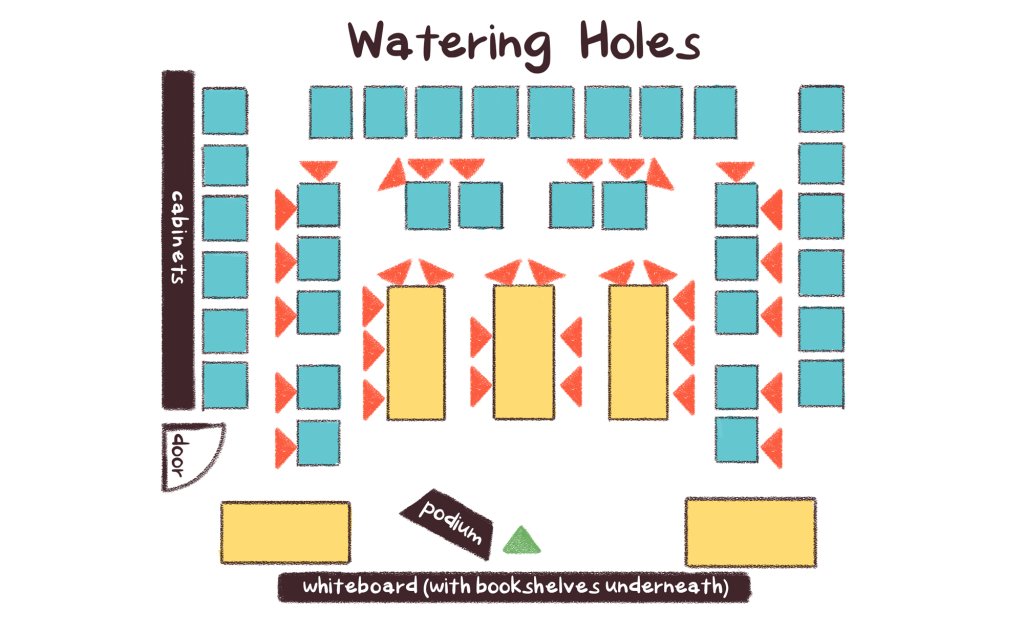

Hundreds of higher ed and industry leaders gathered Tuesday for a summit on AI and the future of learning at the University of Michigan.

Conversations like the one we had at Michigan this week are necessary, but the action rarely matches the ambition.

- We say the humanities are the operating system of an AI world, yet students and parents don’t believe it. They’re voting with their feet toward STEM, business, and narrowly tailored majors they believe will lead to a job.

- Meanwhile, colleges are quietly eliminating the very humanities degrees the panelists were championing, employers are cutting the entry rungs off the career ladder for new graduates, and as Podium Education co-founder Christopher Parrish reminded us yesterday, there’s a yawning gap between demand for experience and the internships that actually exist.

AI Music Generators: Teaching With These Catchy AI Tools — from techlearning.com by Erik Ofgang

AI music generators are getting better and better, and there are more applications in the classroom as a result.

Are All AI Music Generators More Or Less The Same?

No. After experimenting with a few different free ones, I found a wide range of quality with the same prompts.

Gemini is the only one I’d currently recommend. It’s user-friendly but limited and only creates 30-second clips. Other music generators could potentially outperform Gemini with prompt adjustments. The ones I tried did better with the instrumentals but struggled more with the lyrics, and that kind of defeated the purpose of the tool for me.

ChatDOC: Teaching With The AI Summarizing Tool — from techlearning.com by Erik Ofgang

ChatDOC lets users turn any PDF into an AI chatbot that can summarize the text, answer questions, and generate quizzes.

What Is ChatDOC?

ChatDOC is an AI designed to help users interact with PDFs of various types, be it research papers, short stories, or chapters from larger works. Users upload a PDF and then have the opportunity to “chat” with that document, that is, speak with a chatbot that bases its answers on the uploaded text.

ChatDOC can perform tasks such as providing a short summary, searching for specific terms, explaining the overall theme if it’s a work of literature, or unpacking the science in a research paper.

Other similar tools are out there, but ChatDOC is definitely one of the better PDF readers I’ve used. Its free version is quick and easy to use, and it delivers on its promise of providing an AI that can discuss a given document with users and even quiz them on it.

From AI access to workforce readiness — from chieflearningofficer.com by Johnny Hamilton, Amy Stratbucker, & Brad Bigelow

Is your workforce using the right tool with an outdated mindset and playbook? Why old playbooks fall short — and what learning leaders must do next.

The leadership opportunity

Organizations do not need to predict every future AI capability. They need systems that allow people to explore with curiosity, practice safely, reflect deeply and adapt continuously — starting with what they already have and extending as capabilities evolve.

For CLOs, this is a moment to lead from the center of change — designing workforce readiness that keeps pace with accelerating technology while making work more rewarding for employees and more valuable for the organization. That is how AI moves from the promise of transformation to demonstrated readiness and, ultimately, from promise to performance.

Addendums on 3/19/26:

How to Build Practice-Based Learning Activities with AI — from drphilippahardman.substack.com by Dr Philippa Hardman

Four evidence-based methods for designing, building & deploying active learning activities with your favourite LLM

Most L&D teams are using AI to make content faster. The real opportunity is using it as a practice engine.

The Synthesia 2026 AI in L&D Report found that the fastest-growing areas of planned AI adoption aren’t in content creation; they’re in assessments and simulations (36%), adaptive pathways (33%), and AI tutors (29%). In other words: L&D teams are starting to realise that the most powerful use of AI isn’t producing learning materials. It’s creating environments where learners actually practise.

And you can build these right now — no dev team, no custom platform, no code. Each method below includes a prompt you can paste into your preferred AI tool to generate a working interactive prototype: a self-contained practice activity with a briefing screen, a live AI interaction, and a debrief — all running in the browser, ready to share with stakeholders or deploy to learners.

OpenAI Adds Interactive Math and Science Learning Tools to ChatGPT — from campustechnology.com by Rhea Kelly

Key Takeaways

- ChatGPT adds interactive learning tools: OpenAI introduced interactive math and science visualizations that allow users to explore formulas, variables, and relationships in real time.

- The tool currently covers over 70 core math and science topics and is aimed initially at high school and college-level learners.

- Users can adjust variables, manipulate formulas, and immediately see how changes affect graphs and outcomes.