The Role of Faculty in the University of the Future — from er.educause.edu by Tanya Gamby, David Kil, Rachel Koblic, Paul LeBlanc, Mihnea Moldoveanu, and George Siemens

In the age of AI, the true future of higher education lies not in replacing faculty but in freeing them to do what only humans can—build meaningful relationships, cultivate wisdom, and guide students through the ethical and intellectual challenges machines cannot navigate.

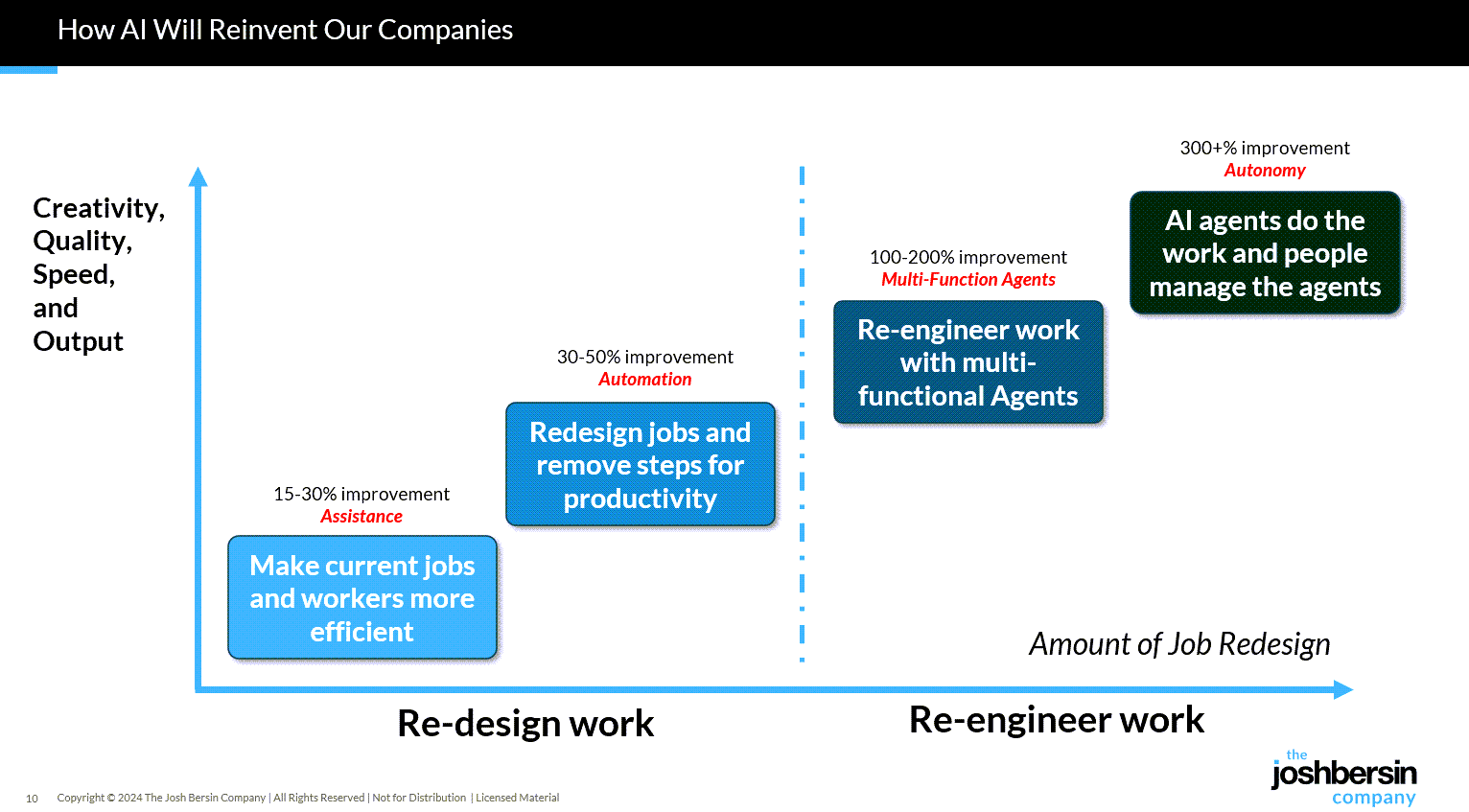

Today, the work of knowledge transfer is often done better, faster, with more precision, and more patiently by AI. These systems can provide nonjudgmental, individualized learning opportunities twenty-four hours a day, seven days a week. Think of AI as a “genius teaching assistant” who assumes much of the work of basic knowledge transfer, unlocking learning when students get stuck and providing real-time assessment. Such a genius TA would offer faculty dashboards that update student progress, flag those who are struggling, and recommend targeted interventions. These tasks free faculty to focus on building genuine relationships with students, using the classroom to foster human skills, and curating community. This may be the great gift of AI to education. But it requires a profound reimagining of faculty roles—perhaps the single biggest hurdle to reimagining higher education, and equally its greatest opportunity.

A concerned faculty member might hear all this and conclude they are becoming obsolete. The opposite is true. The evolution of faculty roles demands more—not less—of what makes a great teacher.

This means intervening in high-impact moments when the genius TA has not unlocked learning; curating class time to lift students from knowing material to applying it in contexts that require critical thinking, judgment, and discernment; and cultivating the human skills that will be most prized in the age of AI: effective communication, constructive dialogue, empathy, creativity, and professional disposition. Most importantly, it means building genuine relationships with students—that make them feel like they matter—the kind that fuels transformation.

From DSC:

A quick comment on one of the sentences in the article, which asserts:

Centers for teaching and learning, which have long supported faculty development at many institutions, will be among the busiest places on campus in the years ahead.

I would change the word “will” to “should”:

Centers for teaching and learning, which have long supported faculty development at many institutions, should be among the busiest places on campus in the years ahead.

For that statement to be true, centers for teaching and learning need to be well-versed in the tools and pedagogies involved, as well as in learning science. Those centers need to have credibility for faculty members to value their services. And that’s just it, isn’t it? Faculty members need to see those centers as having something that they lack, something that they need assistance with. Otherwise, if such centers are viewed as superfluous, nothing much will change.

Also, my experience has been that if those centers for teaching and learning sit within an IT group/department, they should be moved to the academic side of the house instead. Many faculty members don’t value people from IT enough to change how they teach, no matter how qualified those people are. They view those people as “IT” only.

You might also be interested in the other articles in that series:

- The AI Tsunami Is Here: Reinventing Education for the Age of AI

- Dialogue at Scale: AI, Soft Skills, and the Future of Assessment

- From Personalized to Precision Learning: Unlocking the Next Transformation in Higher Education

- The University, the Chatbot, and a Call for a New Mission for Higher Education