Gen AI Is Going Mainstream: Here’s What’s Coming Next — from joshbersin.com by Josh Bersin

I just completed nearly 60,000 miles of travel across Europe, Asia, and the Middle East meeting with hundreds of companies to discuss their AI strategies. While every company’s maturity is different, one thing is clear: AI as a business tool has arrived: it’s real and the use-cases are growing.

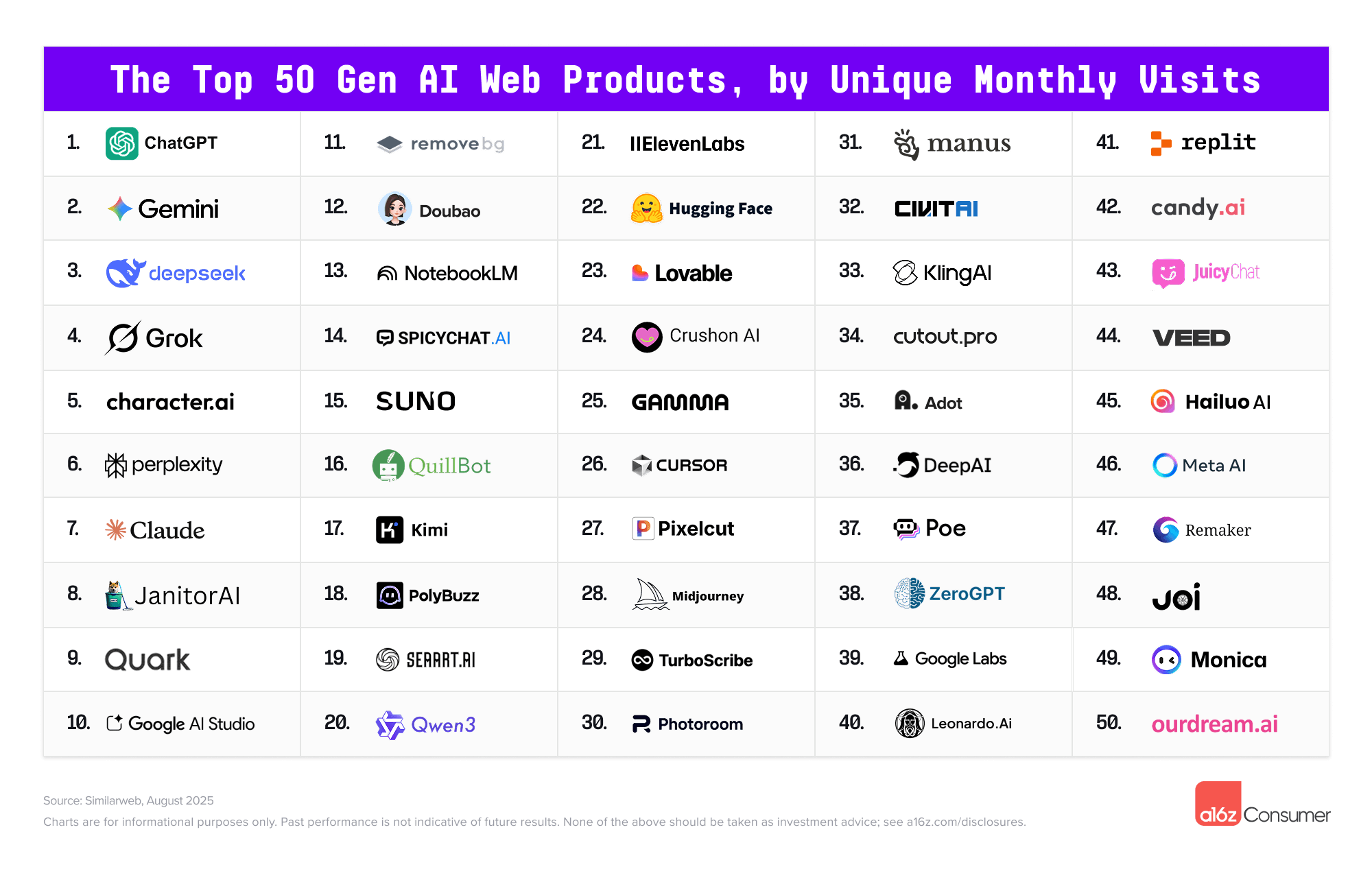

A new survey by Wharton shows that 46% of business leaders use Gen AI daily and 80% use it weekly. And among these users, 72% are measuring ROI and 74% report a positive return. HR, by the way, is the #3 department in use cases, only slightly behind IT and Finance.

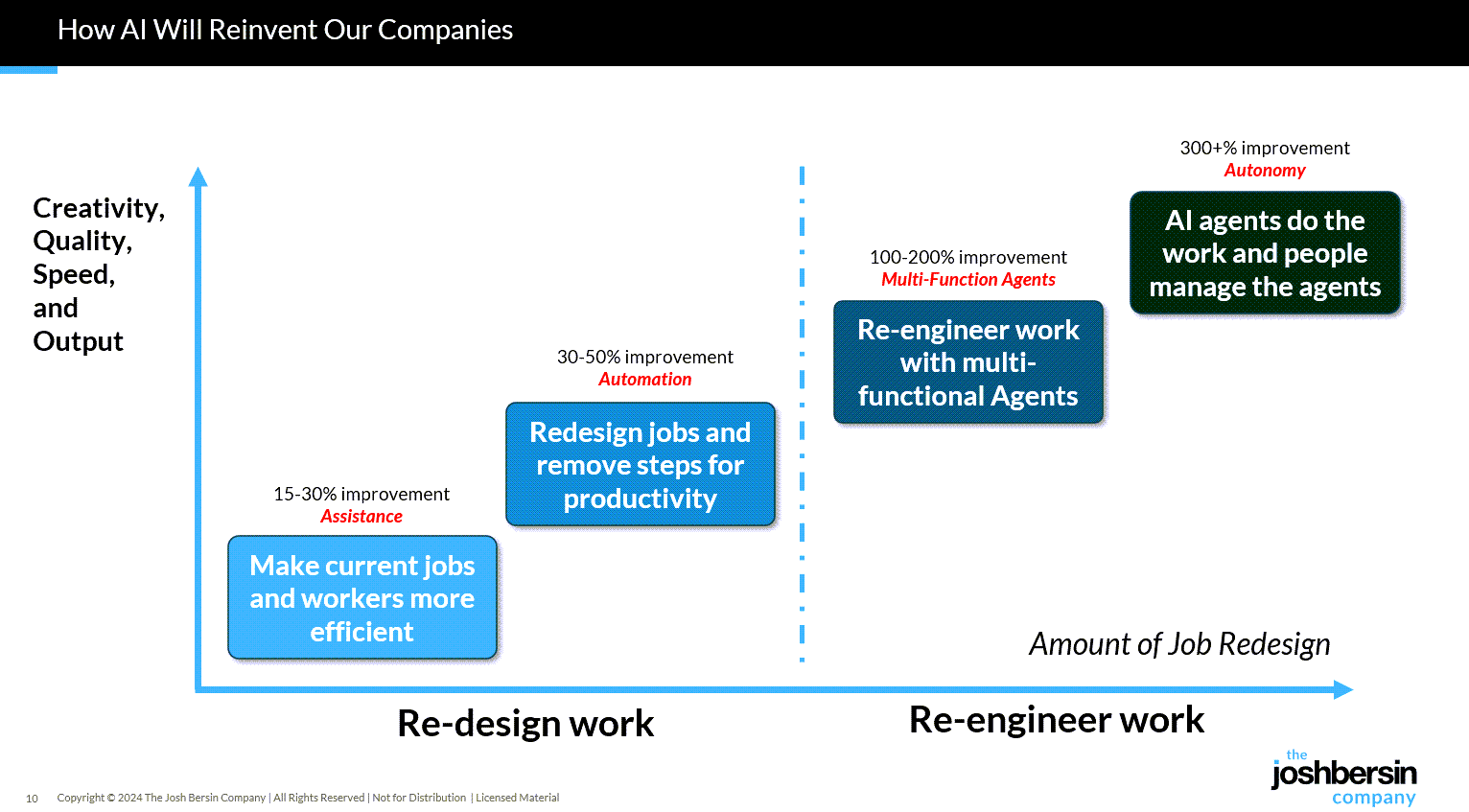

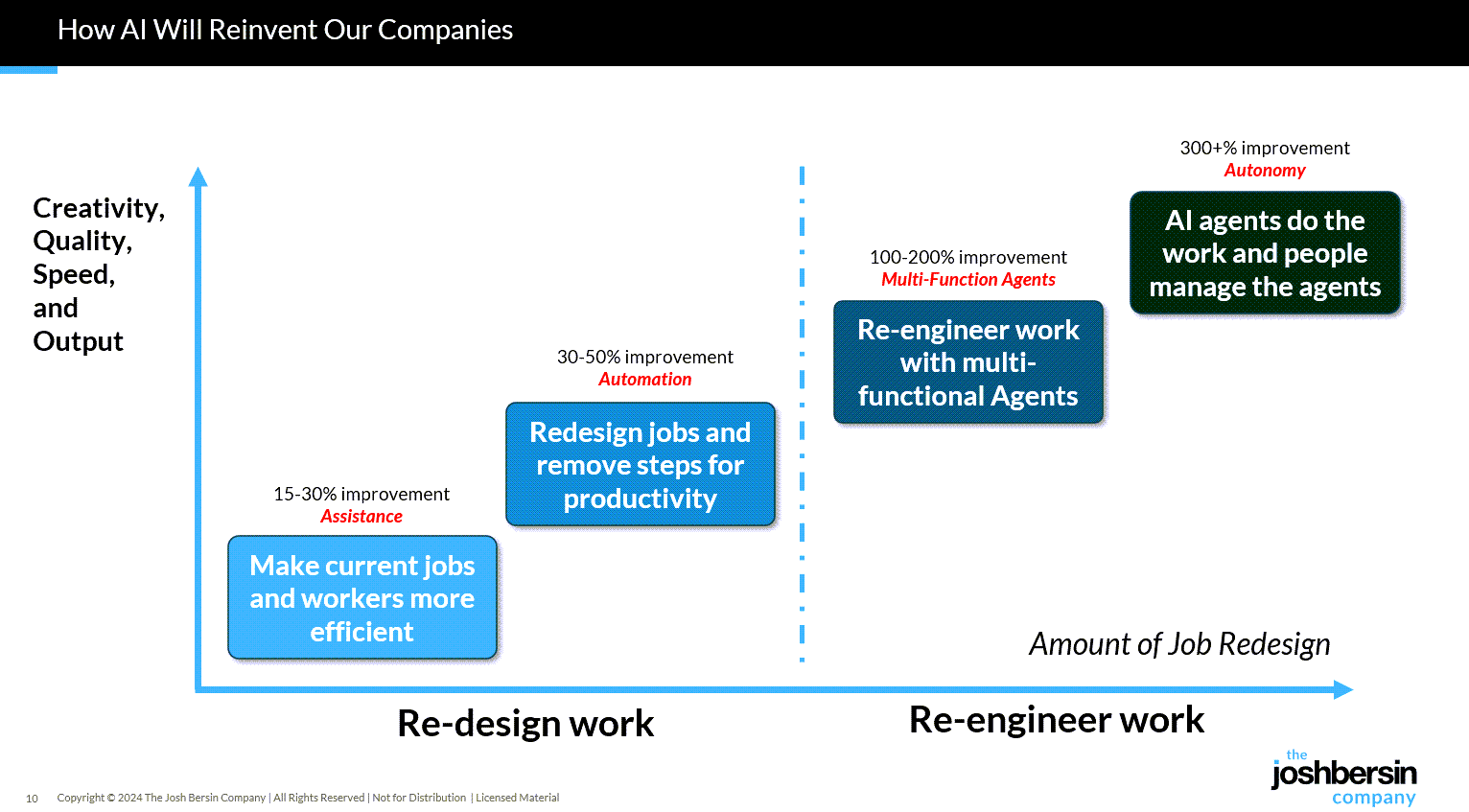

What are companies getting out of all this? Productivity. The #1 use case, by far, is what we call “stage 1” usage – individual productivity.


From DSC:

Josh writes: “Many of our large clients are now implementing AI-native learning systems and seeing 30-40% reduction in staff with vast improvements in workforce enablement.”

While I get the appeal (and ROI) from management’s and shareholders’ perspective, this represents a growing concern for employment and people’s ability to earn a living.

And while I highly respect Josh and his work through the years, I disagree that we’re over the problems with AI and how people are using it:

“Two years ago the NYT was trying to frighten us with stories of AI acting as a romance partner. Well those stories are over, and thanks to a $Trillion (literally) of capital investment in infrastructure, engineering, and power plants, this stuff is reasonably safe.”

Those stories are just beginning…they’re not close to being over.

“… imagine a world where there’s no separation between learning and assessment…” — from aiedusimplified.substack.com by Lance Eaton, Ph.D. and Tawnya Means

An interview with Tawnya Means

So let’s imagine a world where there’s no separation between learning and assessment: it’s ongoing. There’s always assessment, always learning, and they’re tied together. Then we can ask: what is the role of the human in that world? What is it that AI can’t do?

…

Imagine something like that in higher ed. There could be tutoring or skill-based work happening outside of class, and then relationship-based work happening inside of class, whether online, in person, or some hybrid mix.

The aspects of learning that don’t require relational context could be handled by AI, while the human parts remain intact. For example, I teach strategy and strategic management. I teach people how to talk with one another about the operation and function of a business. I can help students learn to be open to new ideas, recognize when someone pushes back out of fear of losing power, or draw from my own experience in leading a business and making future-oriented decisions.

But the technical parts such as the frameworks like SWOT analysis, the mechanics of comparing alternative viewpoints in a boardroom—those could be managed through simulations or reports that receive immediate feedback from AI. The relational aspects, the human mentoring, would still happen with me as their instructor.

Part 2 of their interview is here: