Proverbs 10:12

Hatred stirs up conflict, but love covers over all wrongs.

Proverbs 9:10

The fear of the Lord is the beginning of wisdom, and knowledge of the Holy One is understanding.

Hebrews 4:12

For the word of God is alive and active. Sharper than any double-edged sword, it penetrates even to dividing soul and spirit, joints and marrow; it judges the thoughts and attitudes of the heart.

Proverbs 11:1

The LORD detests dishonest scales, but accurate weights find favor with him.

From DSC:

As a relevant aside, the following article made me think about some of the reasons why the LORD used parables/stories to speak to the people:

The 2-Minute Story That Saved My Career: How Storytelling Can Be The Most Effective Form of Feedback — from techlearning.com by Michael Gaskell

By harnessing the power of story, school leaders can create a culture in which feedback is embraced as an essential ingredient for growth

The Storytelling Approach

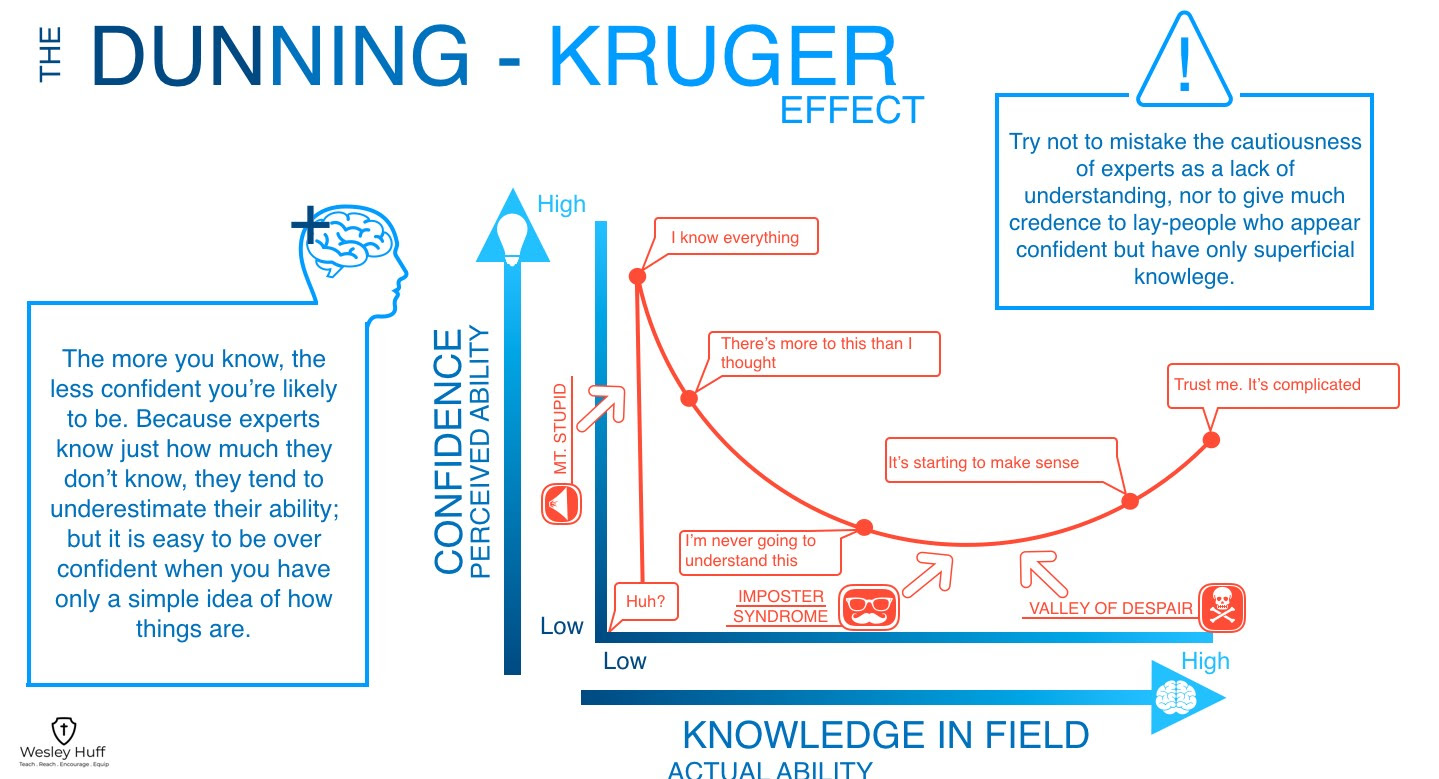

Sharing stories is effective because it seems to get around our defensiveness. When information is presented in a story form, people reason about it differently than if it were presented as a list of facts or a direct critique.

Here’s more on why it works, and then how to implement it successfully in your school leadership work.

1. Transportation and Distancing: Listening to a story pulls us out of a defensive mode (the “do I agree or do I disagree?” mindset) and into a thoughtful, observant framework. Being transported allows the individual to identify with others in a way that is different from experiencing the situation for themselves. It’s a third-person, objective mindset, a safe zone for people to evaluate a situation from.

2. Shifting Perspective: When individuals consider challenges from the perspective of someone who is not them, it dramatically alters their thinking. They gain the latitude and freedom to consider the available options without feeling personally attacked. That wise sage did this when he shared his story of struggling. By making it safe and helping me see him as having an objective, difficult experience, he let me take the perspective of a distanced other. It became easier to think about the situation in a wiser way and come up with a better solution.

3. Engaging Different Brain Systems: Fundamentally different pathways are triggered when processing stories compared to facts. Storytelling engages the brain's social relevance systems, those that help us understand what other people think and feel, such as empathy, another higher-order processing mechanism.