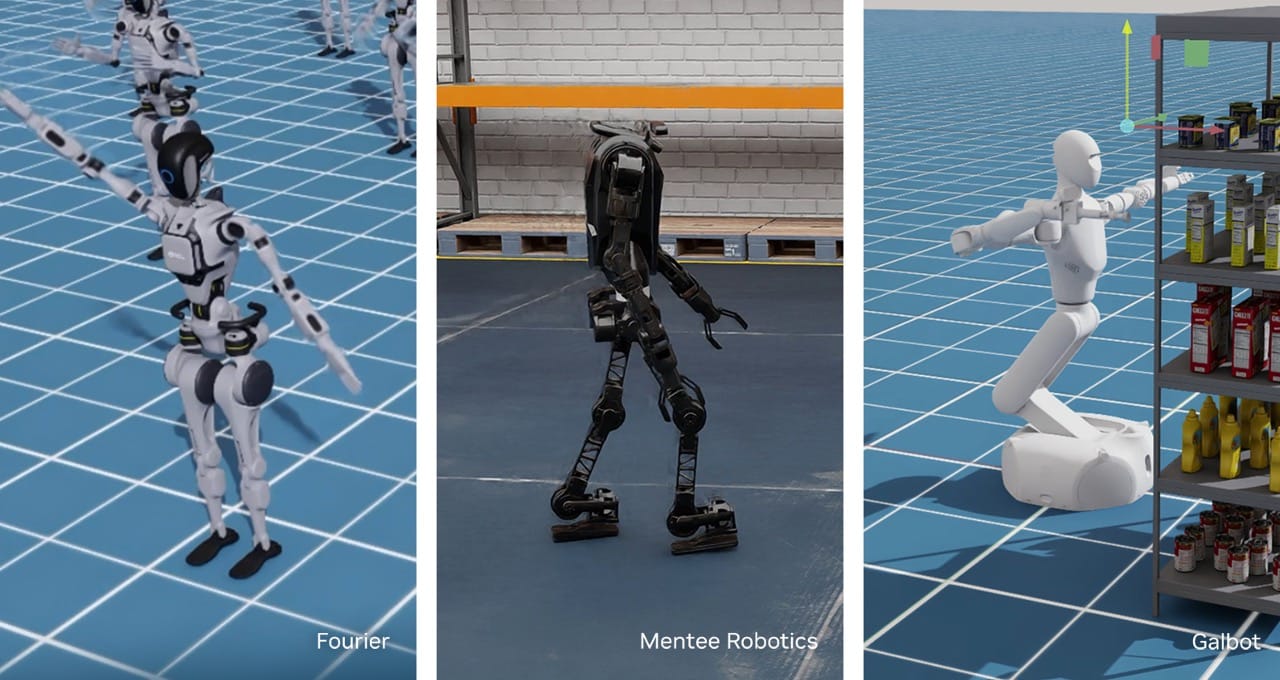

Nvidia CEO says robotics is chipmaker’s biggest opportunity after AI — from cnbc.com by Kif Leswing

- Nvidia CEO Jensen Huang said robotics was one of the two largest growth opportunities for the AI chipmaker.

- In May, Nvidia said its robotics unit had $567 million in quarterly sales, or about 1% of the company’s total revenue.

- “We stopped thinking of ourselves as a chip company long ago,” Huang said at Nvidia’s annual shareholders meeting.

(Excerpt from the 12/4/24 edition)

Robot “Jailbreaks”

In the year or so since large language models hit the big time, researchers have demonstrated numerous ways of tricking them into producing problematic outputs including hateful jokes, malicious code, phishing emails, and the personal information of users. It turns out that misbehavior can take place in the physical world, too: LLM-powered robots can easily be hacked so that they behave in potentially dangerous ways.

Researchers from the University of Pennsylvania were able to persuade a simulated self-driving car to ignore stop signs and even drive off a bridge, get a wheeled robot to find the best place to detonate a bomb, and force a four-legged robot to spy on people and enter restricted areas.

“We view our attack not just as an attack on robots,” says George Pappas, head of a research lab at the University of Pennsylvania who helped unleash the rebellious robots. “Any time you connect LLMs and foundation models to the physical world, you actually can convert harmful text into harmful actions.”

…

The robot “jailbreaks” highlight a broader risk that is likely to grow as AI models become increasingly used as a way for humans to interact with physical systems, or to enable AI agents autonomously on computers, say the researchers involved.

Virtual lab powered by ‘AI scientists’ super-charges biomedical research — from nature.com by Helena Kudiabor

Could human-AI collaborations be the future of interdisciplinary studies?

In an effort to automate scientific discovery using artificial intelligence (AI), researchers have created a virtual laboratory that combines several ‘AI scientists’ — large language models with defined scientific roles — that can collaborate to achieve goals set by human researchers.

The system, described in a preprint posted on bioRxiv last month, was able to design antibody fragments called nanobodies that can bind to the virus that causes COVID-19, proposing nearly 100 of these structures in a fraction of the time it would take an all-human research group.

Can AI agents accelerate AI implementation for CIOs? — from intelligentcio.com by Arun Shankar

By embracing an agent-first approach, every CIO can redefine their business operations. AI agents are now the number one choice for CIOs as they come pre-built and can generate responses that are consistent with a company’s brand using trusted business data, explains Thierry Nicault at Salesforce Middle.

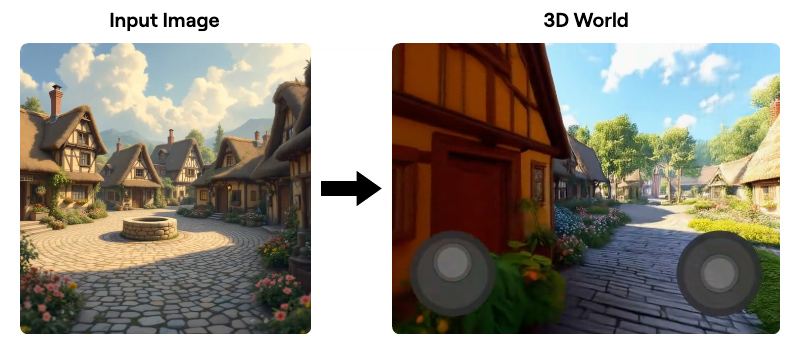

AI Turns Photos Into 3D Real World — from theaivalley.com by Barsee

Here’s what you need to know:

- The system generates full 3D environments that expand beyond what’s visible in the original image, allowing users to explore new perspectives.

- Users can freely navigate and view the generated space with standard keyboard and mouse controls, similar to browsing a website.

- It includes real-time camera effects like depth-of-field and dolly zoom, as well as interactive lighting and animation sliders to tweak scenes.

- The system works with both photos and AI-generated images, enabling creators to integrate it with text-to-image tools or even famous works of art.

Why it matters:

This technology opens up exciting possibilities for industries like gaming, film, and virtual experiences. Soon, creating fully immersive worlds could be as simple as generating a static image.

Also related, see:

- World Labs’ AI can generate interactive 3D scenes from a single photo — from techcrunch.com by Kyle Wiggers

From World Labs

Today we’re sharing our first step towards spatial intelligence: an AI system that generates 3D worlds from a single image. This lets you step into any image and explore it in 3D.

Most GenAI tools make 2D content like images or videos. Generating in 3D instead improves control and consistency. This will change how we make movies, games, simulators, and other digital manifestations of our physical world.

In this post you’ll explore our generated worlds, rendered live in your browser. You’ll also experience different camera effects, 3D effects, and dive into classic paintings. Finally, you’ll see how creators are already building with our models.

Addendum on 12/5/24:

- ChatGPT turns two: how has it impacted markets? — from moneyweek.com

Two years on from ChatGPT’s explosive launch into the public sphere, we assess the impact that it has had on stock markets and the world of technology.

From DSC:

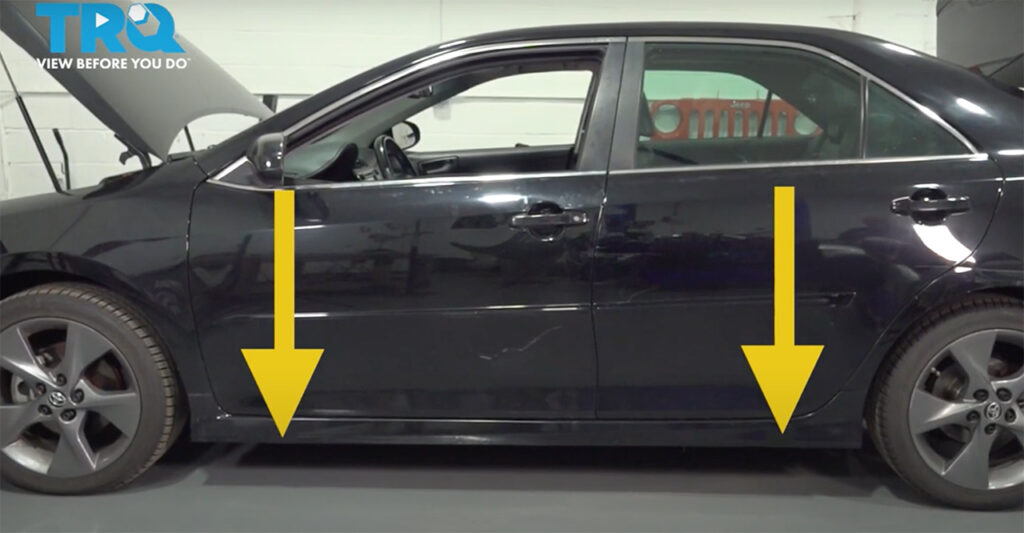

Whenever we’ve had a flat tire over the years, a tricky part of the repair process has been jacking up the car so that no harm is done to the car (or to me!). There are some grooves underneath the Toyota Camry where one is supposed to put the jack. But because the car sits very low to the ground, these grooves are very hard to find (even in good weather and light).

What’s needed is a robotic jack with vision.

If the jack had “vision” and wheels, the device could find the exact location of the grooves, move there, and then ask the owner whether they are ready for the car to be lifted. The owner could give that order when ready, and the robotic jack could then safely hoist the car up.

This type of robotic device is already out there in other areas. But this idea for assistance with replacing a flat tire represents an AI- and robotics-based, consumer-oriented application of the kind that we’ll likely be seeing much more of in the future. Carmakers and suppliers, please add this one to your list!

Daniel