I Was a University AI Czar. I’m Not Equipped to Teach in the Age of AI. — from jgellers.substack.com by Josh Gellers, PhD

The reason that I claim I am not well-suited to thrive as an instructor in the age of AI is because both AI Enthusiasts and AI Resisters put a lot of thought and energy into completely redesigning their classes in response to AI. This is the one takeaway that I don’t think the Exhausted Majority has fully accepted yet—to excel as a teacher in this AI era, you need to totally revise how you teach and how you assess what students learn in your classes.

I can say this much—whatever solution our industry comes up with, it’s likely to emerge from teaching and learning centers. Contrary to what Paul Schofield wrote in the Chronicle of Higher Education, pedagogy experts are the best hope we have to equip today’s faculty with the tools required to succeed in this uncertain educational environment. As I always tell my students, “I was trained for 7 years to become a researcher and 2 days to become a teacher.” The idea that only disciplinary experts know how to teach and have nothing to learn from so-called “nonscholars” is so laughable that one has to wonder whether an AI agent jokingly wrote that sad opinion piece to troll the whole academe.

Also from Dr. Gellers, see:

The Worst AI Policy in Higher Ed

How Berkeley Law Boalt-ed From Expertise in Favor of Abstinence

Last week, one of the top law schools in the United States, the University of California, Berkeley School of Law, released its final policy on artificial intelligence, effective summer 2026. In the span of a breezy 1.5 pages, the school outlined the challenge AI poses to legal education and how it plans to address this problem. Despite these intentions, this AI policy is, in my estimation, the worst AI policy in higher education I have seen.

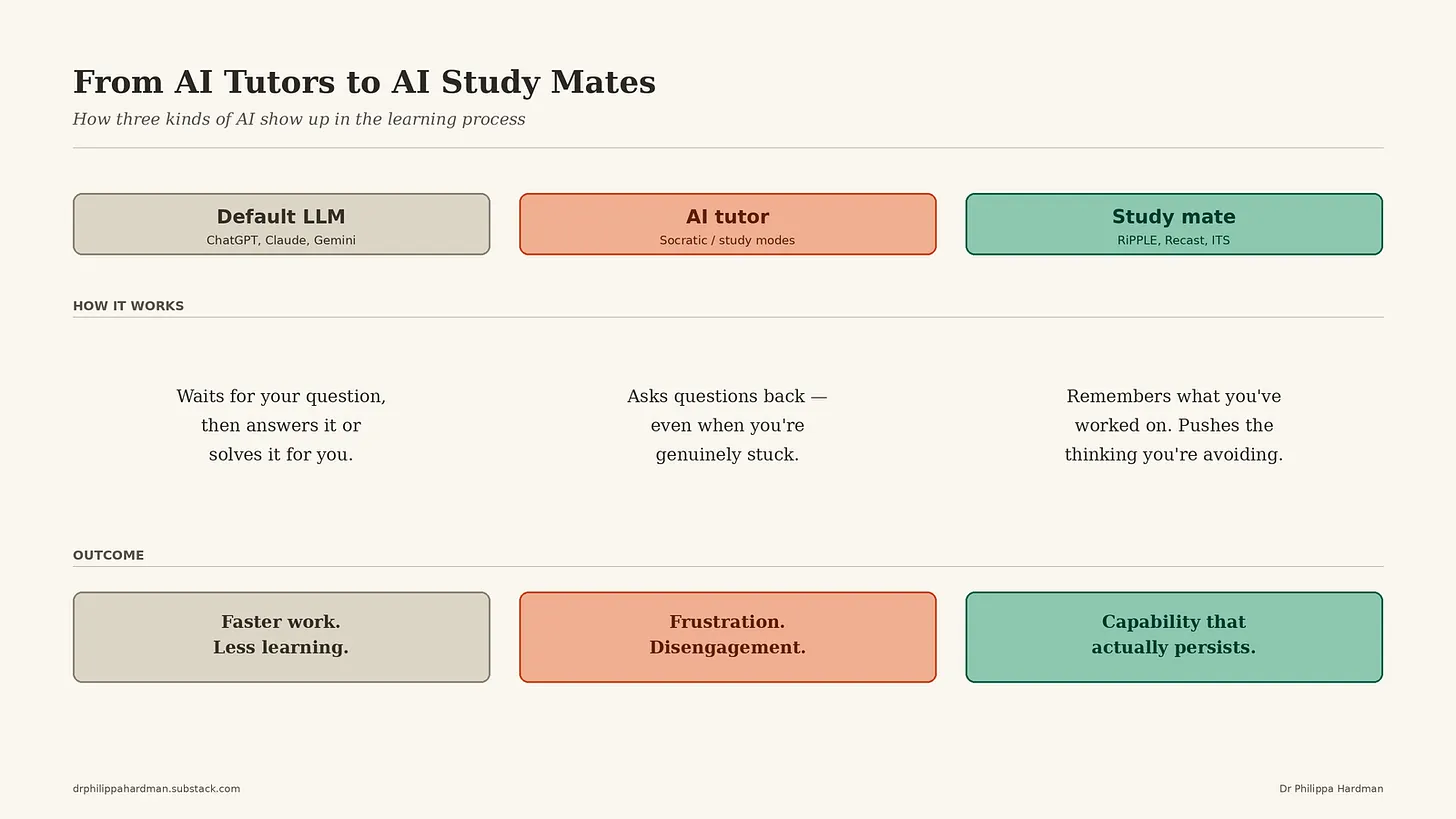

From AI Tutors to AI Study Mates — from drphilippahardman.substack.com by Dr Philippa Hardman

New research reveals how AI can enable real learning — not just productivity gains

The point isn’t that AI is inherently bad for learning — it’s that the meta-analyses showing that LLMs improve assignment and performance scores are measuring the wrong thing. They’re measuring performance with the AI present, not learning that persists once it’s gone.


From DSC:

Notice that when an AI-based learning system can remember what you’ve worked on and how you are doing (where you are struggling or doing well), it can have a positive impact on your longer-term learning. That, to me, is where long-term learner profiles come in.

Later in the article, Dr. Hardman points out that “if we want to deliver AI tooling which supports substantive learning, we need to intentionally create a new category of AI tool for ‘learning at work’ which prioritises learning and development over productivity.” While I agree with that, I do wonder whether businesses will care, so long as the work gets done and gets done well. But this calls to mind the word “experience,” something that has traditionally been hard-won in the corporate world. Yet the corporate realm often doesn’t like to pay for experience (beyond key AI-based jobs) when it perceives that experience is getting too expensive. Just ask anyone 50 or over who has had, or still has, a target on their back.
