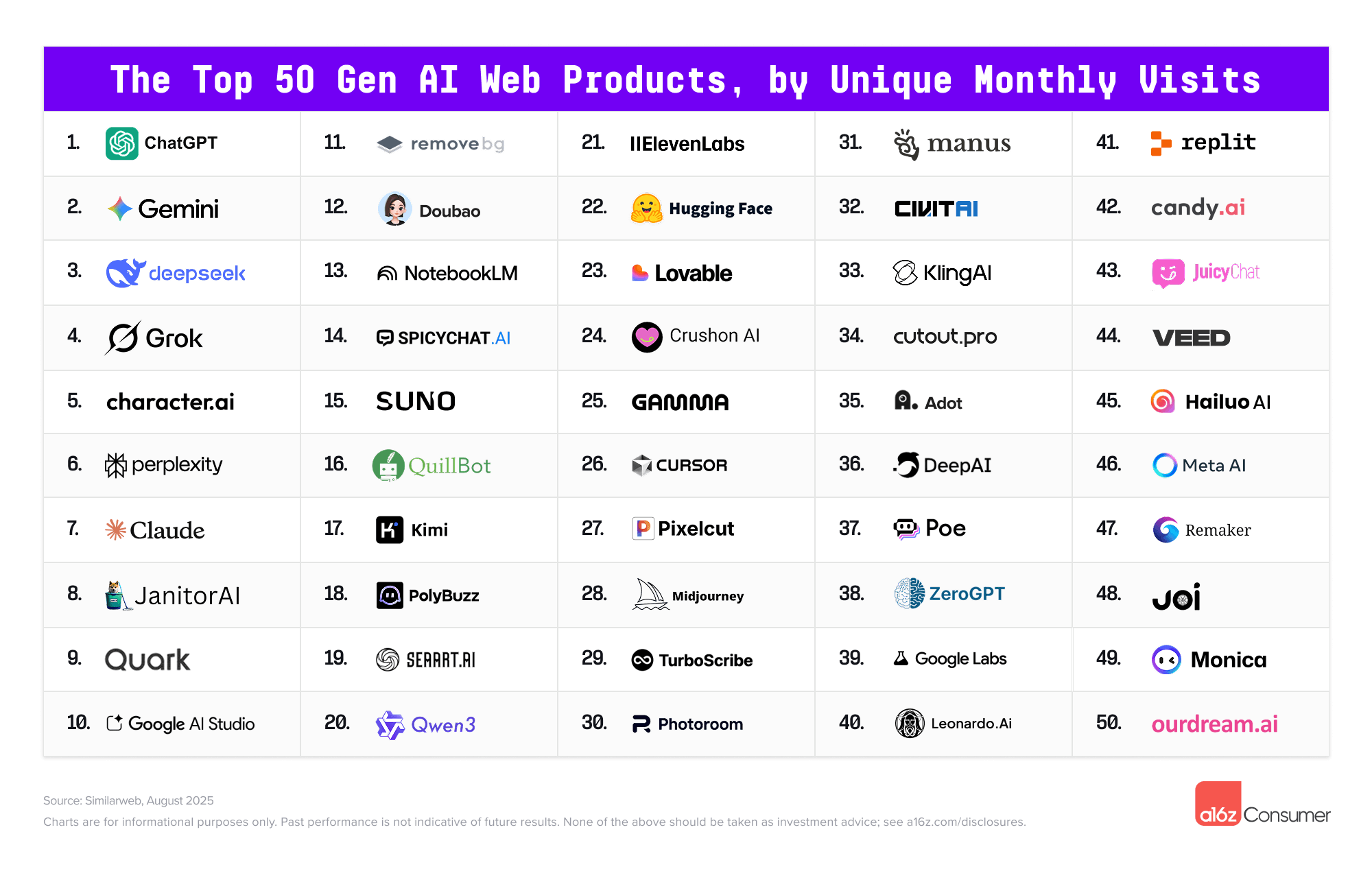

The Top 100 [Gen AI] Consumer Apps 5th edition — from a16z.com

And in an interesting move by Microsoft and Samsung:

A smarter way to talk to your TV: Microsoft Copilot launches on Samsung TVs and monitors — from microsoft.com

Voice-powered AI meets a visual companion for entertainment, everyday help, and everything in between.

Redmond, Wash., August 27—Today, we’re announcing the launch of Copilot on select Samsung TVs and monitors, transforming the biggest screen in your home into your most personal and helpful companion—and it’s free to use.

Copilot makes your TV easier and more fun to use with its voice-powered interface, friendly on-screen character, and simple visual cards. Now you can quickly find what you’re looking for and discover new favorites right from your living room.

Because it lives on the biggest screen in the home, Copilot is a social experience—something you can use together with family and friends to spark conversations, help groups decide what to watch, and turn the TV into a shared space for curiosity and connection.

Thoughts on thinking — from dcurt.is by Dustin Curtis

Intellectual rigor comes from the journey: the dead ends, the uncertainty, and the internal debate. Skip that, and you might still get the insight–but you’ll have lost the infrastructure for meaningful understanding. Learning by reading LLM output is cheap. Real exercise for your mind comes from building the output yourself.

The irony is that I now know more than I ever would have before AI. But I feel slightly dumber. A bit more dull. LLMs give me finished thoughts, polished and convincing, but none of the intellectual growth that comes from developing them myself.

Using AI Right Now: A Quick Guide — from oneusefulthing.org by Ethan Mollick

Which AIs to use, and how to use them

Every few months I put together a guide on which AI system to use. Since I last wrote my guide, however, there has been a subtle but important shift in how the major AI products work. Increasingly, it isn’t about the best model, it is about the best overall system for most people. The good news is that picking an AI is easier than ever and you have three excellent choices. The challenge is that these systems are getting really complex to understand. I am going to try and help a bit with both.

First, the easy stuff.

Which AI to Use

For most people who want to use AI seriously, you should pick one of three systems: Claude from Anthropic, Google’s Gemini, and OpenAI’s ChatGPT.

Also see:

Student Voice, Socratic AI, and the Art of Weaving a Quote — from elmartinsen.substack.com by Eric Lars Martinsen

How a custom bot helps students turn source quotes into personal insight—and share it with others

This summer, I tried something new in my fully online, asynchronous college writing course. These classes have no Zoom sessions. No in-person check-ins. Just students, Canvas, and a lot of thoughtful design behind the scenes.

One activity I created was called QuoteWeaver—a PlayLab bot that helps students do more than just insert a quote into their writing.

It’s a structured, reflective activity that mimics something closer to an in-person 1:1 conference or a small group quote workshop—but in an asynchronous format, available anytime. In other words, it’s using AI not to speed students up, but to slow them down.

…

The bot begins with a single quote that the student has found through their own research. From there, it acts like a patient writing coach, asking open-ended, Socratic questions such as:

What made this quote stand out to you?

How would you explain it in your own words?

What assumptions or values does the author seem to hold?

How does this quote deepen your understanding of your topic?

It doesn’t move on too quickly. In fact, it often rephrases and repeats, nudging the student to go a layer deeper.

The Disappearance of the Unclear Question — from jeppestricker.substack.com by Jeppe Klitgaard Stricker

New Piece for UNESCO Education Futures

On [6/13/25], UNESCO published a piece I co-authored with Victoria Livingstone at Johns Hopkins University Press. It’s called The Disappearance of the Unclear Question, and it’s part of the ongoing UNESCO Education Futures series – an initiative I appreciate for its thoughtfulness and depth on questions of generative AI and the future of learning.

Our piece raises a small but important red flag. Generative AI is changing how students approach academic questions, and one unexpected side effect is that unclear questions – for centuries a trademark of deep thinking – may be beginning to disappear. Not because they lack value, but because they don’t always work well with generative AI. Quietly and unintentionally, students (and teachers) may find themselves gradually avoiding them altogether.

Of course, that would be a mistake.

We’re not arguing against using generative AI in education. Quite the opposite. But we do propose that higher education needs a two-phase mindset when working with this technology: one that recognizes what AI is good at, and one that insists on preserving the ambiguity and friction that learning actually requires to be successful.

Leveraging GenAI to Transform a Traditional Instructional Video into Engaging Short Video Lectures — from er.educause.edu by Hua Zheng

By leveraging generative artificial intelligence to convert lengthy instructional videos into micro-lectures, educators can enhance efficiency while delivering more engaging and personalized learning experiences.

This AI Model Never Stops Learning — from link.wired.com by Will Knight

Researchers at Massachusetts Institute of Technology (MIT) have now devised a way for LLMs to keep improving by tweaking their own parameters in response to useful new information.

The work is a step toward building artificial intelligence models that learn continually—a long-standing goal of the field and something that will be crucial if machines are to ever more faithfully mimic human intelligence. In the meantime, it could give us chatbots and other AI tools that are better able to incorporate new information including a user’s interests and preferences.

The MIT scheme, called Self Adapting Language Models (SEAL), involves having an LLM learn to generate its own synthetic training data and update procedure based on the input it receives.

Edu-Snippets — from scienceoflearning.substack.com by Nidhi Sachdeva and Jim Hewitt

Why knowledge matters in the age of AI; What happens to learners’ neural activity with prolonged use of LLMs for writing

Highlights:

- Offloading knowledge to Artificial Intelligence (AI) weakens memory, disrupts memory formation, and erodes the deep thinking our brains need to learn.

- Prolonged use of ChatGPT in writing lowers neural engagement, impairs memory recall, and accumulates cognitive debt that isn’t easily reversed.

Essential AI tools for better work — from wondertools.substack.com by Jeremy Caplan

My favorite tactics for making the most of AI — a podcast conversation

AI tools I consistently rely on (areas covered mentioned below)

- Research and analysis

- Communication efficiency

- Multimedia creation

AI tactics that work surprisingly well

1. Reverse interviews

Instead of just querying AI, have it interview you. “Get the AI to interview you, rather than interviewing it. Give it a little context and what you’re focusing on and what you’re interested in, and then you ask it to interview you to elicit your own insights.”

This approach helps extract knowledge from yourself, not just from the AI. Sometimes we need that guide to pull ideas out of ourselves.
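The reverse-interview tactic can be sketched as a reusable prompt template. This is only an illustration: the function name, its parameters, and the exact prompt wording are hypothetical and do not come from the podcast.

```python
def build_reverse_interview_prompt(context: str, focus: str, num_questions: int = 5) -> str:
    """Build a prompt that asks an AI to interview the user.

    The wording is an illustrative assumption, not a quoted prompt.
    """
    return (
        f"Context: {context}\n"
        f"Focus: {focus}\n"
        f"Please interview me about this focus. Ask {num_questions} open-ended "
        "questions, one at a time, and wait for my answer before asking the next. "
        "Do not answer the questions yourself; your goal is to draw out my own insights."
    )


if __name__ == "__main__":
    # Example values are made up for demonstration.
    print(build_reverse_interview_prompt(
        context="I teach an online writing course.",
        focus="How I give feedback on student drafts",
    ))
```

Paste the resulting text into whichever chat assistant you use, then answer its questions in turn.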

OpenAI’s Deep Research Agent Is Coming for White-Collar Work — from wired.com by Will Knight

The research-focused agent shows how a new generation of more capable AI models could automate some office tasks.

Isla Fulford, a researcher at OpenAI, had a hunch that Deep Research would be a hit even before it was released.

Fulford had helped build the artificial intelligence agent, which autonomously explores the web, deciding for itself what links to click, what to read, and what to collate into an in-depth report. OpenAI first made Deep Research available internally; whenever it went down, Fulford says, she was inundated with queries from colleagues eager to have it back. “The number of people who were DMing me made us pretty excited,” says Fulford.

Since going live to the public on February 2, Deep Research has proven to be a hit with many users outside the company too.

Nvidia to open quantum computing research center in Boston — from seekingalpha.com by Ravikash Bakolia

Nvidia (NASDAQ:NVDA) will open a quantum computing research lab in Boston which is expected to start operations later this year.

The Nvidia Accelerated Quantum Research Center, or NVAQC, will integrate leading quantum hardware with AI supercomputers, enabling what is known as accelerated quantum supercomputing, said the company in a March 18 press release.

Nvidia’s CEO Jensen Huang also made this announcement on Thursday at the company’s first-ever Quantum Day at its annual GTC event.

French quantum computer firm Pasqal links up with NVIDIA — from reuters.com

PARIS, March 21 (Reuters) – Pasqal, a fast-growing French quantum computer start-up company, announced on Friday a partnership with chip giant Nvidia (NVDA.O) whereby Pasqal’s customers would gain access to more tools to develop quantum applications.

Pasqal said it would connect its quantum computing units and cloud platform onto NVIDIA’s open-source platform called CUDA-Q.

Introducing next-generation audio models in the API — from openai.com

A new suite of audio models to power voice agents, now available to developers worldwide.

Today, we’re launching new speech-to-text and text-to-speech audio models in the API—making it possible to build more powerful, customizable, and intelligent voice agents that offer real value. Our latest speech-to-text models set a new state-of-the-art benchmark, outperforming existing solutions in accuracy and reliability—especially in challenging scenarios involving accents, noisy environments, and varying speech speeds. These improvements increase transcription reliability, making the models especially well-suited for use cases like customer call centers, meeting note transcription, and more.

From DSC:

Look out Google, Amazon, and others! Nvidia is putting the pedal to the metal in terms of being innovative and visionary! They are leaving the likes of Apple in the dust.

The top talent out there is likely to go to Nvidia for a while. Engineers, programmers/software architects, network architects, product designers, data specialists, AI researchers, developers of robotics and autonomous vehicles, R&D specialists, computer vision specialists, natural language processing experts, and many more types of positions will be flocking to Nvidia to work for a company that has already changed the world and will likely continue to do so for years to come.

NVIDIA just shook the AI and Robotic world at NVIDIA GTC 2025.

CEO Jensen Huang announced jaw-dropping breakthroughs.

Here are the top 11 key highlights you can’t afford to miss: (wait till you see no 3) pic.twitter.com/domejuVdw5

— The AI Colony (@TheAIColony) March 19, 2025

NVIDIA’s AI Superbowl — from theneurondaily.com by Noah and Grant

PLUS: Prompt tips to make AI writing more natural

That’s despite a flood of new announcements (here’s a 16 min video recap), which included:

- A new architecture for massive AI data centers (now called “AI factories”).

- A physics engine for robot training built with Disney and DeepMind.

- A partnership with GM to develop next-gen vehicles, factories and robots.

- A new Blackwell chip with “Dynamo” software that makes AI reasoning 40x faster than previous generations.

- A new “Rubin” chip slated for 2026 and a “Feynman” chip set for 2028.

For enterprises, NVIDIA unveiled DGX Spark and DGX Station—Jensen’s vision of AI-era computing, bringing NVIDIA’s powerful Blackwell chip directly to your desk.

Nvidia Bets Big on Synthetic Data — from wired.com by Lauren Goode

Nvidia has acquired synthetic data startup Gretel to bolster the AI training data used by the chip maker’s customers and developers.

Nvidia, xAI to Join BlackRock and Microsoft’s $30 Billion AI Infrastructure Fund — from investopedia.com by Aaron McDade

Nvidia and xAI are joining BlackRock and Microsoft in an AI infrastructure group seeking $30 billion in funding. The group was first announced in September as BlackRock and Microsoft sought to fund new data centers to power AI products.

Nvidia CEO Jensen Huang says we’ll soon see 1 million GPU data centers visible from space — from finance.yahoo.com by Daniel Howley

Nvidia CEO Jensen Huang says the company is preparing for 1 million GPU data centers.

Nvidia stock stems losses as GTC leaves Wall Street analysts ‘comfortable with long term AI demand’ — from finance.yahoo.com by Laura Bratton

Nvidia stock reversed direction after a two-day slide that saw shares lose 5% as the AI chipmaker’s annual GTC event failed to excite investors amid a broader market downturn.

Microsoft, Google, and Oracle Deepen Nvidia Partnerships. This Stock Got the Biggest GTC Boost. — from barrons.com by Adam Clark and Elsa Ohlen

The 4 Big Surprises from Nvidia’s ‘Super Bowl of AI’ GTC Keynote — from barrons.com by Tae Kim; behind a paywall

AI Super Bowl. Hi everyone. This week, 20,000 engineers, scientists, industry executives, and yours truly descended upon San Jose, Calif. for Nvidia’s annual GTC developers’ conference, which has been dubbed the “Super Bowl of AI.”

DeepSeek R-1 Explained — from aieducation.substack.com by Claire Zau

A no-nonsense FAQ (for everyone drowning in DeepSeek headlines)

There is a good chance you’re exhausted by the amount of DeepSeek coverage flooding your inbox. Between the headlines and hot takes on X, it’s hard not to have questions: What is DeepSeek? Why is it special? Why is everyone freaking out? What does this mean for the AI ecosystem? Can you explain the tech? Am I allowed to use it?

Let’s break down why exactly it’s such a big deal with some straightforward FAQs:

AI Voice Agents: 2025 Update — from a16z.com (Andreessen Horowitz) by Olivia Moore

Voice is one of the most powerful unlocks for AI application companies. It is the most frequent (and most information-dense) form of human communication, made “programmable” for the first time due to AI.

…

For consumers, we believe voice will be the first — and perhaps the primary — way people interact with AI. This interaction could take the form of an always-available companion or coach, or by democratizing services, such as language learning, that were previously inaccessible.

The professions upskilling in AI — by Rachel Cromidas

The use of artificial intelligence at work continues to climb. Twice as many LinkedIn members in the U.S. say they are using AI on the job now compared to 2023, according to the latest Workforce Confidence survey. Meanwhile, at least half of workers say AI skills will help them progress in their careers. Product managers are the most likely to agree AI will give them a boost, while those in healthcare services roles are least likely.

Your AI Writing Partner: The 30-Day Book Framework — from aidisruptor.ai by Alex McFarland and Kamil Banc

How to Turn Your “Someday” Manuscript into a “Shipped” Project Using AI-Powered Prompts

With that out of the way, I prefer Claude.ai for writing. For larger projects like a book, create a Claude Project to keep all context in one place.

- Copy [the following] prompts into a document

- Use them in sequence as you write

- Adjust the word counts and specifics as needed

- Keep your responses for reference

- Use the same prompt template for similar sections to maintain consistency

Each prompt builds on the previous one, creating a systematic approach to helping you write your book.

Using NotebookLM to Boost College Reading Comprehension — from michellekassorla.substack.com by Michelle Kassorla and Eugenia Novokshanova

This semester, we are using NotebookLM to help our students comprehend and engage with scholarly texts

We were looking hard for a new tool when Google released NotebookLM. Not only does Google allow unfettered use of this amazing tool, it is also a much better tool for the work we require in our courses. So, this semester, we have scrapped our “old” tools and added NotebookLM as the primary tool for our English Composition II courses (and we hope, fervently, that Google won’t decide to severely limit its free tier before this semester ends!)

If you know next-to-nothing about NotebookLM, that’s OK. What follows is the specific lesson we present to our students. We hope this will help you understand all you need to know about NotebookLM, and how to successfully integrate the tool into your own teaching this semester.

Leadership & Generative AI: Hard-Earned Lessons That Matter — from jeppestricker.substack.com by Jeppe Klitgaard Stricker

Actionable Advice for Higher Education Leaders in 2025

After two years of working closely with leadership in multiple institutions, and delivering countless workshops, I’ve seen one thing repeatedly: the biggest challenge isn’t the technology itself, but how we lead through it. Here is some of my best advice to help you navigate generative AI with clarity and confidence:

- Break your own AI policies before you implement them. …

- Fund your failures. …

- Resist the pilot program. …

- Host Anti-Tech Tech Talks …

- …+ several more tips

While generative AI in higher education obviously involves new technology, it’s much more about adopting a curious and human-centric approach in your institution and communities. It’s about empowering learners in new, human-oriented and innovative ways. It is, in a nutshell, about people adapting to new ways of doing things.

Maria Anderson responded to Clay’s posting with this idea:

Here’s an idea: […] the teacher can use the [most advanced] AI tool to generate a complete solution to “the problem” — whatever that is — and demonstrate how to do that in class. Give all the students access to the document with the results.

And then grade the students on a comprehensive followup activity / presentation of executing that solution (no notes, no more than 10 words on a slide). So the students all have access to the same deep AI result, but have to show they comprehend and can iterate on that result.

Grammarly just made it easier to prove the sources of your text in Google Docs — from zdnet.com by Jack Wallen

If you want to be diligent about proving your sources within Google Documents, Grammarly has a new feature you’ll want to use.

In this age of distrust, misinformation, and skepticism, you may wonder how to demonstrate your sources within a Google Document. Did you type it yourself, copy and paste it from a browser-based source, copy and paste it from an unknown source, or did it come from generative AI?

You may not think this is an important clarification, but if writing is a critical part of your livelihood or life, you will definitely want to demonstrate your sources.

That’s where the new Grammarly feature comes in.

The new feature is called Authorship, and according to Grammarly, “Grammarly Authorship is a set of features that helps users demonstrate their sources of text in a Google doc. When you activate Authorship within Google Docs, it proactively tracks the writing process as you write.”

AI Agents Are Coming to Higher Education — from govtech.com

AI agents are customizable tools with more decision-making power than chatbots. They have the potential to automate more tasks, and some schools have implemented them for administrative and educational purposes.

Custom GPTs are on the rise in education. Google’s version, Gemini Gems, includes a premade version called Learning Coach, and Microsoft announced last week a new agent addition to Copilot featuring use cases at educational institutions.

Generative Artificial Intelligence and Education: A Brief Ethical Reflection on Autonomy — from er.educause.edu by Vicki Strunk and James Willis

Given the widespread impacts of generative AI, looking at this technology through the lens of autonomy can help equip students for the workplaces of the present and of the future, while ensuring academic integrity for both students and instructors.

The principle of autonomy stresses that we should be free agents who can govern ourselves and who are able to make our own choices. This principle applies to AI in higher education because it raises serious questions about how, when, and whether AI should be used in varying contexts. Although we have only begun asking questions related to autonomy and many more remain to be asked, we hope that this serves as a starting place to consider the uses of AI in higher education.

The Best of AI 2024: Top Winners Across 9 Categories — from aiwithallie.beehiiv.com by Allie Miller

2025 will be our weirdest year in AI yet. Read this so you’re more prepared.

Top AI Tools of 2024 — from ai-supremacy.com by Michael Spencer (behind a paywall)

Which AI tools stood out for me in 2024? My list.

Memorable AI Tools of 2024

Categories included:

- Useful

- Popular

- Captures the zeitgeist of AI product innovation

- Fun to try

- Personally satisfying

- NotebookLM

- Perplexity

- Claude

- …

New “best” AI tool? Really? — from theneurondaily.com by Noah and Grant

PLUS: A free workaround to the “best” new AI…

What is Google’s Deep Research tool, and is it really “the best” AI research tool out there?

…

Here’s how it works: Think of Deep Research as a research team that can simultaneously analyze 50+ websites, compile findings, and create comprehensive reports—complete with citations.

Unlike asking ChatGPT to research for you, Deep Research shows you its research plan before executing, letting you edit the approach to get exactly what you need.

…

It’s currently free for the first month (though it’ll eventually be $20/month) when bundled with Gemini Advanced. Then again, Perplexity is always free…just saying.

We couldn’t just take J-Cal’s word for it, so we rounded up some other takes:

- Income Stream Surfers compared it to Perplexity …

- Teacher’s Tech demo’d how prompt detail drastically affects results…

- Olivio Sarikas used it to analyze tweet performance …

Our take: We then compared Perplexity, ChatGPT Search, and Deep Research (which we’re calling DR, or “The Docta” for short) on robot capabilities from CES revealed:

An excerpt from today’s Morning Edition from Bloomberg

Global banks will cut as many as 200,000 jobs in the next three to five years—a net 3% of the workforce—as AI takes on more tasks, according to a Bloomberg Intelligence survey. Back, middle office and operations are most at risk. A reminder that Citi said last year that AI is likely to replace more jobs in banking than in any other sector. JPMorgan had a more optimistic view (from an employee perspective, at any rate), saying its AI rollout has augmented, not replaced, jobs so far.

NVIDIA’s Apple moment?! — from theneurondaily.com by Noah Edelman and Grant Harvey

PLUS: How to level up your AI workflows for 2025…

NVIDIA wants to put an AI supercomputer on your desk (and it only costs $3,000).

…

And last night at CES 2025, Jensen Huang announced phase two of this plan: Project DIGITS, a $3K personal AI supercomputer that runs 200B parameter models from your desk. Guess we now know why Apple recently developed an NVIDIA allergy…

…

But NVIDIA doesn’t just want its “Apple PC moment”… it also wants its OpenAI moment. NVIDIA also announced Cosmos, a platform for building physical AI (think: robots and self-driving cars)—which Jensen Huang calls “the ChatGPT moment for robotics.”

Jensen Huang’s latest CES speech: AI Agents are expected to become the next robotics industry, with a scale reaching trillions of dollars — from chaincatcher.com

NVIDIA is bringing AI from the cloud to personal devices and enterprises, covering all computing needs from developers to ordinary users.

At CES 2025, which opened this morning, NVIDIA founder and CEO Jensen Huang delivered a milestone keynote speech, revealing the future of AI and computing. From the core token concept of generative AI to the launch of the new Blackwell architecture GPU, and the AI-driven digital future, this speech will profoundly impact the entire industry from a cross-disciplinary perspective.

Also see:

NVIDIA Project DIGITS: The World’s Smallest AI Supercomputer. — from nvidia.com

A Grace Blackwell AI Supercomputer on your desk.

From DSC:

I’m posting this next item (involving Samsung) as it relates to how TVs continue to change within our living rooms. AI is finding its way into our TVs…the ramifications of this remain to be seen.

OpenAI ‘now knows how to build AGI’ — from therundown.ai by Rowan Cheung

PLUS: AI phishing achieves alarming success rates

The Rundown: Samsung revealed its new “AI for All” tagline at CES 2025, introducing a comprehensive suite of new AI features and products across its entire ecosystem — including new AI-powered TVs, appliances, PCs, and more.

The details:

- Vision AI brings features like real-time translation, the ability to adapt to user preferences, AI upscaling, and instant content summaries to Samsung TVs.

- Several of Samsung’s new Smart TVs will also have Microsoft Copilot built in, and Samsung teased a potential AI partnership with Google.

- Samsung also announced the new line of Galaxy Book5 AI PCs, with new capabilities like AI-powered search and photo editing.

- AI is also being infused into Samsung’s laundry appliances, art frames, home security equipment, and other devices within its SmartThings ecosystem.

Why it matters: Samsung’s web of products are getting the AI treatment — and we’re about to be surrounded by AI-infused appliances in every aspect of our lives. The edge will be the ability to sync it all together under one central hub, which could position Samsung as the go-to for the inevitable transition from smart to AI-powered homes.

***

“Samsung sees TVs not as one-directional devices for passive consumption but as interactive, intelligent partners that adapt to your needs,” said SW Yong, President and Head of Visual Display Business at Samsung Electronics. “With Samsung Vision AI, we’re reimagining what screens can do, connecting entertainment, personalization, and lifestyle solutions into one seamless experience to simplify your life.” — from Samsung

Understanding And Preparing For The 7 Levels Of AI Agents — from forbes.com by Douglas B. Laney

The following framework I offer for defining, understanding, and preparing for agentic AI blends foundational work in computer science with insights from cognitive psychology and speculative philosophy. Each of the seven levels represents a step-change in technology, capability, and autonomy. The framework expresses increasing opportunities to innovate, thrive, and transform in a data-fueled and AI-driven digital economy.

The Rise of AI Agents and Data-Driven Decisions — from devprojournal.com by Mike Monocello

Fueled by generative AI and machine learning advancements, we’re witnessing a paradigm shift in how businesses operate and make decisions.

AI Agents Enhance Generative AI’s Impact

Burley Kawasaki, Global VP of Product Marketing and Strategy at Creatio, predicts a significant leap forward in generative AI. “In 2025, AI agents will take generative AI to the next level by moving beyond content creation to active participation in daily business operations,” he says. “These agents, capable of partial or full autonomy, will handle tasks like scheduling, lead qualification, and customer follow-ups, seamlessly integrating into workflows. Rather than replacing generative AI, they will enhance its utility by transforming insights into immediate, actionable outcomes.”

Here’s what nobody is telling you about AI agents in 2025 — from aidisruptor.ai by Alex McFarland

What’s really coming (and how to prepare).

Everyone’s talking about the potential of AI agents in 2025 (and don’t get me wrong, it’s really significant), but there’s a crucial detail that keeps getting overlooked: the gap between current capabilities and practical reliability.

Here’s the reality check that most predictions miss: AI agents currently operate at about 80% accuracy (according to Microsoft’s AI CEO). Sounds impressive, right? But here’s the thing – for businesses and users to actually trust these systems with meaningful tasks, we need 99% reliability. That’s not just a 19% gap – it’s the difference between an interesting tech demo and a business-critical tool.

This matters because it completely changes how we should think about AI agents in 2025. While major players like Microsoft, Google, and Amazon are pouring billions into development, they’re all facing the same fundamental challenge – making them work reliably enough that you can actually trust them with your business processes.

Think about it this way: Would you trust an assistant who gets things wrong 20% of the time? Probably not. But would you trust one who makes a mistake only 1% of the time, especially if they could handle repetitive tasks across your entire workflow? That’s a completely different conversation.
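The gap between 80% and 99% is easier to see with a little arithmetic. The sketch below takes the two accuracy figures from the excerpt above. Everything else is an assumption made for illustration, in particular the idea that a workflow is a chain of independent steps that must all succeed.

```python
def error_rate(accuracy: float) -> float:
    """Fraction of tasks that go wrong at a given accuracy."""
    return 1.0 - accuracy


def workflow_success(accuracy: float, steps: int) -> float:
    """Chance that every step succeeds.

    Assumes each step succeeds independently with the same accuracy,
    which is a simplification not stated in the article.
    """
    return accuracy ** steps


if __name__ == "__main__":
    current, target = 0.80, 0.99  # figures quoted in the excerpt
    ratio = error_rate(current) / error_rate(target)
    print(f"Errors drop by a factor of about {ratio:.0f}")
    for steps in (1, 5, 10):
        print(
            f"{steps:>2} steps: "
            f"{workflow_success(current, steps):.1%} vs "
            f"{workflow_success(target, steps):.1%}"
        )
```

Under these assumptions, the move from 80% to 99% is 19 percentage points but a 20-fold drop in errors (from 20 in 100 to 1 in 100). Over a five-step workflow, the chance of a clean run is about 33% at 80% accuracy and about 95% at 99%.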

Why 2025 will be the year of AI orchestration — from venturebeat.com by Emilia David

In the tech world, we like to label periods as the year of (insert milestone here). This past year (2024) was a year of broader experimentation in AI and, of course, agentic use cases.

As 2025 opens, VentureBeat spoke to industry analysts and IT decision-makers to see what the year might bring. For many, 2025 will be the year of agents, when all the pilot programs, experiments and new AI use cases converge into something resembling a return on investment.

In addition, the experts VentureBeat spoke to see 2025 as the year AI orchestration will play a bigger role in the enterprise. Organizations plan to make management of AI applications and agents much more straightforward.

Here are some themes we expect to see more in 2025.

Predictions For AI In 2025: Entrepreneurs Look Ahead — from forbes.com by Jodie Cook

AI agents take charge

Jérémy Grandillon, CEO of TC9 – AI Allbound Agency, said “Today, AI can do a lot, but we don’t trust it to take actions on our behalf. This will change in 2025. Be ready to ask your AI assistant to book an Uber ride for you.” Start small with one agent handling one task. Build up to an army.

“If 2024 was agents everywhere, then 2025 will be about bringing those agents together in networks and systems,” said Nicholas Holland, vice president of AI at Hubspot. “Micro agents working together to accomplish larger bodies of work, and marketplaces where humans can ‘hire’ agents to work alongside them in hybrid teams. Before long, we’ll be saying, ‘there’s an agent for that.’”

…

Voice becomes default

Stop typing and start talking. Adam Biddlecombe, head of brand at Mindstream, predicts a shift in how we interact with AI. “2025 will be the year that people start talking with AI,” he said. “The majority of people interact with ChatGPT and other tools in the text format, and a lot of emphasis is put on prompting skills.”

Biddlecombe believes, “With Apple’s ChatGPT integration for Siri, millions of people will start talking to ChatGPT. This will make AI so much more accessible and people will start to use it for very simple queries.”

Get ready for the next wave of advancements in AI. AGI arrives early, AI agents take charge, and voice becomes the norm. Video creation gets easy, AI embeds everywhere, and one-person billion-dollar companies emerge.

These 4 graphs show where AI is already impacting jobs — from fastcompany.com by Brandon Tucker

With a 200% increase in two years, the data paints a vivid picture of how AI technology is reshaping the workforce.

To better understand the types of roles that AI is impacting, ZoomInfo’s research team looked to its proprietary database of professional contacts for answers. The platform, which detects more than 1.5 million personnel changes per day, revealed a dramatic increase in AI-related job titles since 2022. With a 200% increase in two years, the data paints a vivid picture of how AI technology is reshaping the workforce.

Why does this shift in AI titles matter for every industry?

This is wild.

Hume AI just announced a new AI voice model.

It’s like ChatGPT Advanced Voice Mode, Elevenlabs Voice Design, and Google NotebookLM in one.

It can create a voice with a whole personality from a description or 5-second clip. And more.

8 wild examples: pic.twitter.com/XOA779jSiE

— Min Choi (@minchoi) December 23, 2024

AI in 2024: Insights From our 5 Million Readers — from linkedin.com by Generative AI

Checking the Pulse: The Impact of AI on Everyday Lives

So, what exactly did our users have to say about how AI transformed their lives this year?

Top 2024 Developments in AI

- Video Generation…

- AI Employees…

- Open Source Advancements…

Getting ready for 2025: your AI team members (Gift lesson 3/3) — from flexos.com by Daan van Rossum

And that’s why today, I’ll tell you exactly which AI tools I’ve recommended for the top 5 use cases to almost 200 business leaders who took the Lead with AI course.

1. Email Management: Simplifying Communication with AI

- Microsoft Copilot for Outlook. …

- Gemini AI for Gmail. …

- Grammarly. …

2. Meeting Management: Maximize Your Time

- Otter.ai. …

- Copilot for Microsoft Teams. …

- Other AI Meeting Assistants. Zoom AI Companion, Granola, and Fathom

3. Research: Streamlining Information Gathering

- ChatGPT. …

- Perplexity. …

- Consensus. …

…plus several more items and tools that were mentioned by Daan.

Where to start with AI agents: An introduction for COOs — from fortune.com by Ganesh Ayyar

Picture your enterprise as a living ecosystem, where surging market demand instantly informs staffing decisions, where a new vendor’s onboarding optimizes your emissions metrics, where rising customer engagement reveals product opportunities. Now imagine if your systems could see these connections too! This is the promise of AI agents — an intelligent network that thinks, learns, and works across your entire enterprise.

Today, organizations operate in artificial silos. Tomorrow, they could be fluid and responsive. The transformation has already begun. The question is: will your company lead it?

The journey to agent-enabled operations starts with clarity on business objectives. Leaders should begin by mapping their business’s critical processes. The most pressing opportunities often lie where cross-functional handoffs create friction or where high-value activities are slowed by system fragmentation. These pain points become the natural starting points for your agent deployment strategy.

Create podcasts in minutes — from elevenlabs.io by ElevenLabs

Now anyone can be a podcast producer

Top AI tools for business — from theneuron.ai

This week in AI: 3D from images, video tools, and more — from heatherbcooper.substack.com by Heather Cooper

From 3D worlds to consistent characters, explore this week’s AI trends

Another busy AI news week, so I organized it into categories:

- Image to 3D

- AI Video

- AI Image Models & Tools

- AI Assistants / LLMs

- AI Creative Workflow: Luma AI Boards

Want to speak Italian? Microsoft AI can make it sound like you do. — this is a gifted article from The Washington Post

A new AI-powered interpreter is expected to simulate speakers’ voices in different languages during Microsoft Teams meetings.

Artificial intelligence has already proved that it can sound like a human, impersonate individuals and even produce recordings of someone speaking different languages. Now, a new feature from Microsoft will allow video meeting attendees to hear speakers “talk” in a different language with help from AI.

What Is Agentic AI? — from blogs.nvidia.com by Erik Pounds

Agentic AI uses sophisticated reasoning and iterative planning to autonomously solve complex, multi-step problems.

The next frontier of artificial intelligence is agentic AI, which uses sophisticated reasoning and iterative planning to autonomously solve complex, multi-step problems. And it’s set to enhance productivity and operations across industries.

Agentic AI systems ingest vast amounts of data from multiple sources to independently analyze challenges, develop strategies and execute tasks like supply chain optimization, cybersecurity vulnerability analysis and helping doctors with time-consuming tasks.

From DSC:

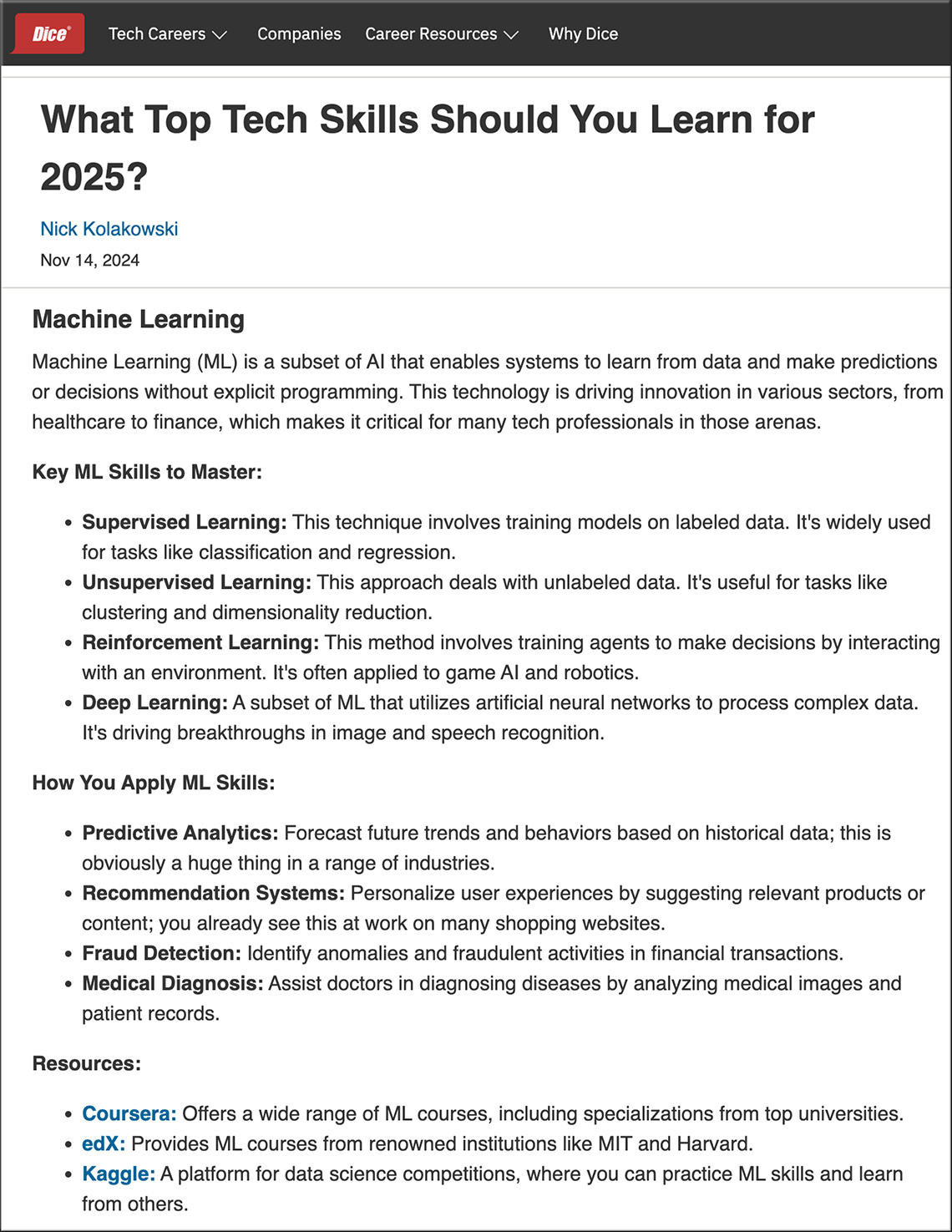

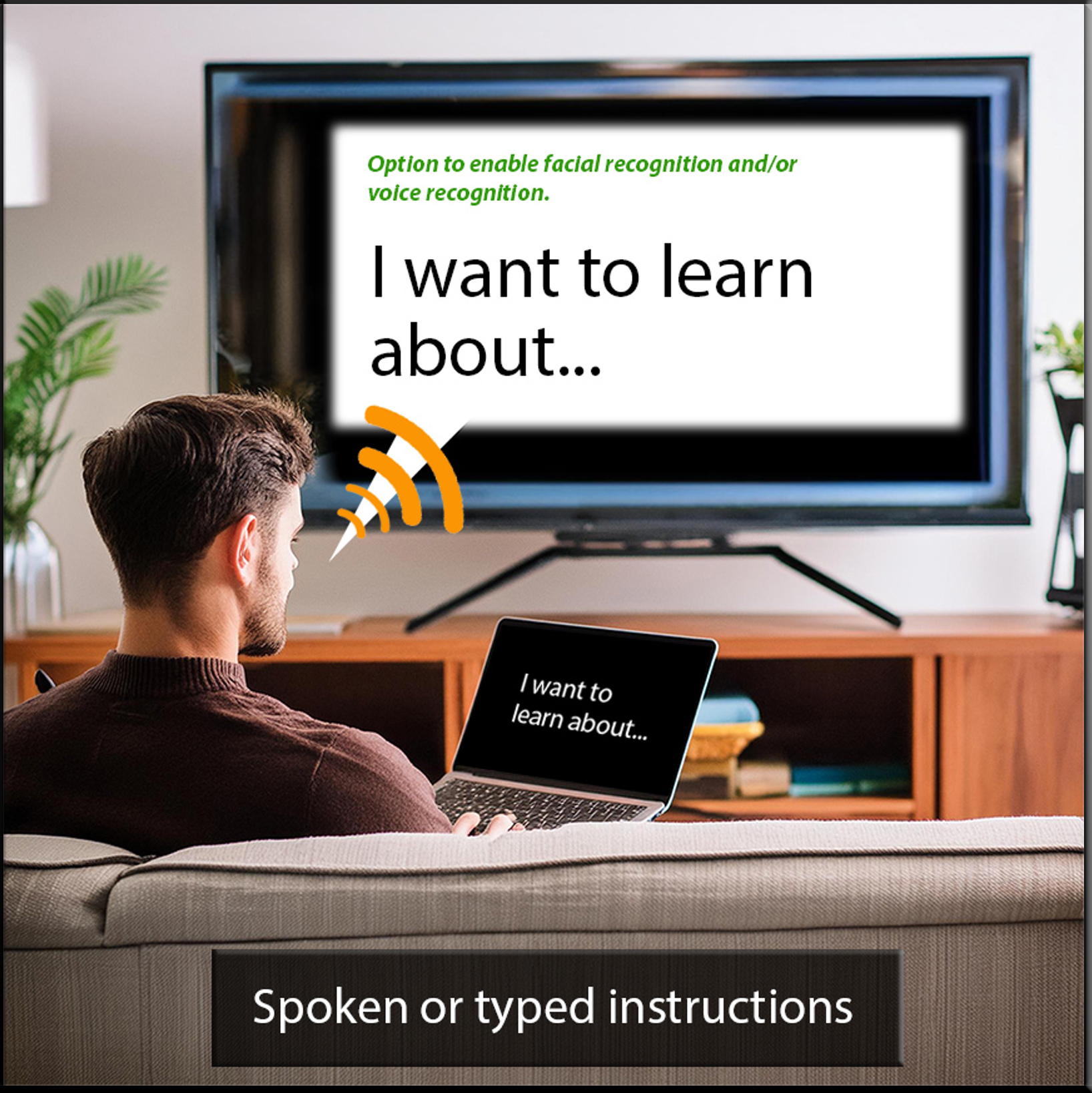

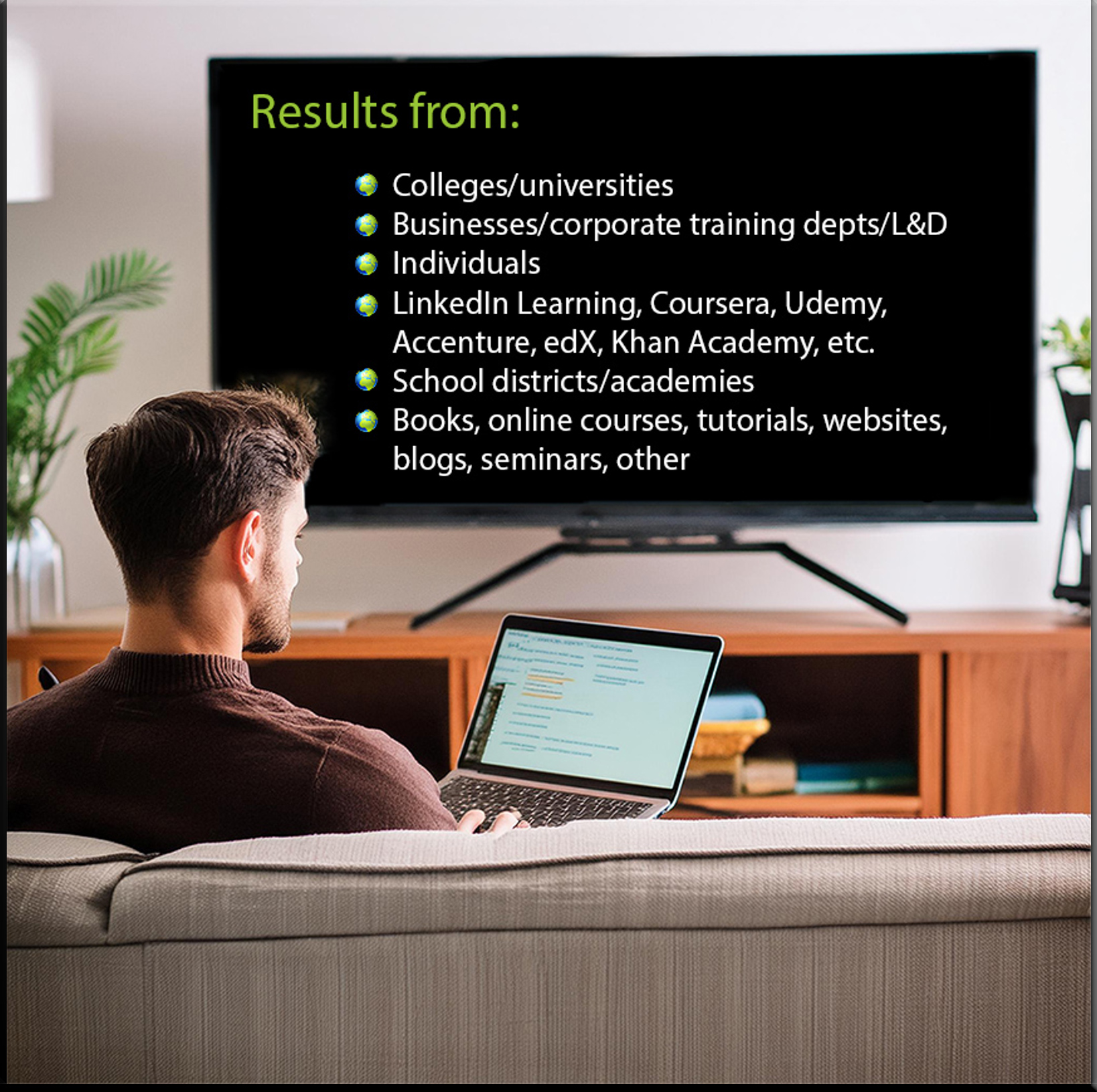

First of all, when you look at the following posting:

What Top Tech Skills Should You Learn for 2025? — from dice.com by Nick Kolakowski

…you will see that they outline which skills you should consider mastering in 2025 if you want to stay on top of the latest career opportunities. They then list more information about the skills, how you apply the skills, and WHERE to get those skills.

I assert that in the future, people will be able to see this information on a 24x7x365 basis.

- Which jobs are in demand?

- What skills do I need to do those jobs?

- WHERE do I get/develop those skills?

And that last part (WHERE do I develop those skills?) will pull from many different institutions, people, companies, etc.

BUT PEOPLE are the key! Oftentimes, we need to — and prefer to — learn with others!

Duolingo Introduces AI-Powered Innovations at Duocon 2024 — from investors.duolingo.com; via Claire Zau

Duolingo’s new Video Call feature represents a leap forward in language practice for learners. This AI-powered tool allows Duolingo Max subscribers to engage in spontaneous, realistic conversations with Lily, one of Duolingo’s most popular characters. The technology behind Video Call is designed to simulate natural dialogue and provides a personalized, interactive practice environment. Even beginner learners can converse in a low-pressure environment because Video Call is designed to adapt to their skill level. By offering learners the opportunity to converse in real-time, Video Call builds the confidence needed to communicate effectively in real-world situations. Video Call is available for Duolingo Max subscribers learning English, Spanish, and French.

And here’s another AI-based learning item:

AI reading coach startup Ello now lets kids create their own stories — from techcrunch.com by Lauren Forristal; via Claire Zau

Ello, the AI reading companion that aims to support kids struggling to read, launched a new product on Monday that allows kids to participate in the story-creation process.

Called “Storytime,” the new AI-powered feature helps kids generate personalized stories by picking from a selection of settings, characters, and plots. For instance, a story about a hamster named Greg who performed in a talent show in outer space.

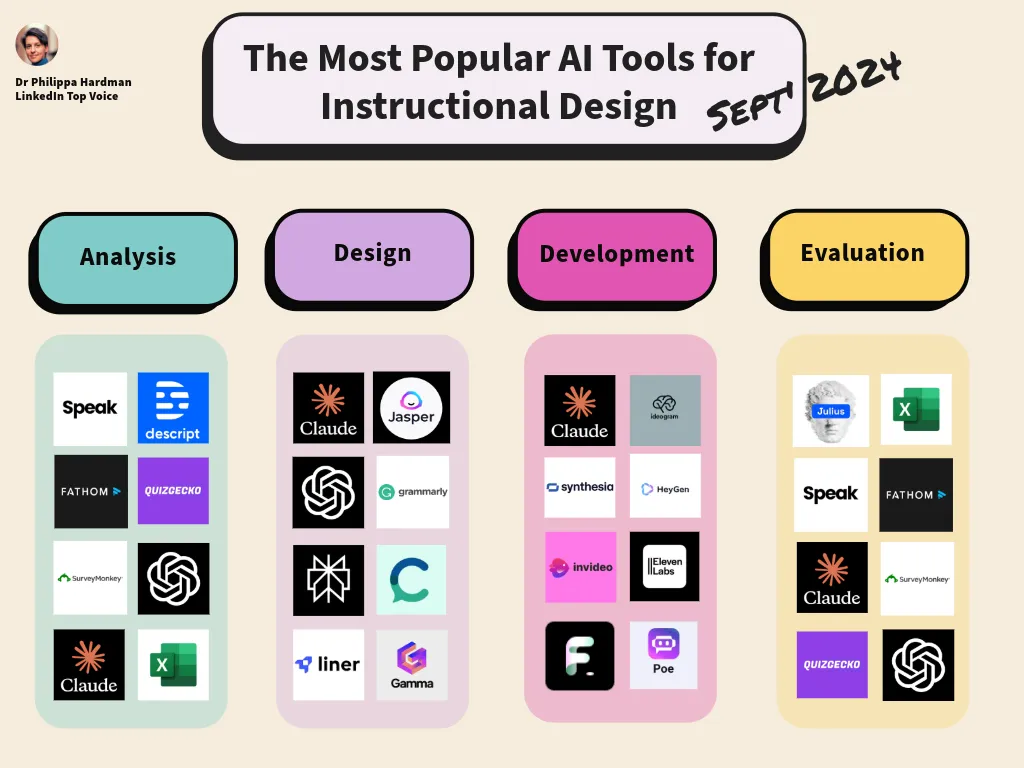

The Most Popular AI Tools for Instructional Design (September, 2024) — from drphilippahardman.substack.com by Dr. Philippa Hardman

The tools we use most, and how we use them

This week, as I kick off the 20th cohort of my AI-Learning Design bootcamp, I decided to do some analysis of the work habits of the hundreds of amazing AI-embracing instructional designers who I’ve worked with over the last year or so.

My goal was to answer the question: which AI tools do we use most in the instructional design process, and how do we use them?

Here’s where we are in September, 2024:

…

Developing Your Approach to Generative AI — from scholarlyteacher.com by Caitlin K. Kirby, Min Zhuang, Imari Cheyne Tetu, & Stephen Thomas (Michigan State University)

As generative AI becomes integrated into workplaces, scholarly work, and students’ workflows, we have the opportunity to take a broad view of the role of generative AI in higher education classrooms. Our guiding questions are meant to serve as a starting point to consider, from each educator’s initial reaction and preferences around generative AI, how their discipline, course design, and assessments may be impacted, and to have a broad view of the ethics of generative AI use.

The Impact of AI in Advancing Accessibility for Learners with Disabilities — from er.educause.edu by Rob Gibson

AI technology tools hold remarkable promise for providing more accessible, equitable, and inclusive learning experiences for students with disabilities.

Gemini makes your mobile device a powerful AI assistant — from blog.google

Gemini Live is available today to Advanced subscribers, along with conversational overlay on Android and even more connected apps.

Rolling out today: Gemini Live <– Google swoops in before OpenAI can get their Voice Mode out there

Gemini Live is a mobile conversational experience that lets you have free-flowing conversations with Gemini. Want to brainstorm potential jobs that are well-suited to your skillset or degree? Go Live with Gemini and ask about them. You can even interrupt mid-response to dive deeper on a particular point, or pause a conversation and come back to it later. It’s like having a sidekick in your pocket who you can chat with about new ideas or practice with for an important conversation.

Gemini Live is also available hands-free: You can keep talking with the Gemini app in the background or when your phone is locked, so you can carry on your conversation on the go, just like you might on a regular phone call. Gemini Live begins rolling out today in English to our Gemini Advanced subscribers on Android phones, and in the coming weeks will expand to iOS and more languages.

To make speaking to Gemini feel even more natural, we’re introducing 10 new voices to choose from, so you can pick the tone and style that works best for you.

We’re introducing Gemini Live, a more natural way to interact with Gemini. You can now have a free-flowing conversation, and even interrupt or change topics just like you might on a regular phone call. Available to Gemini Advanced subscribers. #MadeByGoogle pic.twitter.com/eNjlNKubsv

— Google (@Google) August 13, 2024

Beyond Social Media: Schmidt Predicts AI’s Earth-Shaking Impact — from wallstreetpit.com

The next wave of AI is coming, and if Schmidt is correct, it will reshape our world in ways we are only beginning to imagine.

In a recent Q&A session at Stanford, Eric Schmidt, former CEO and Chairman of search giant Google, offered a compelling vision of the near future in artificial intelligence. His predictions, both exciting and sobering, paint a picture of a world on the brink of a technological revolution that could dwarf the impact of social media.

Schmidt highlighted three key advancements that he believes will converge to create this transformative wave: very large context windows, agents, and text-to-action capabilities. These developments, according to Schmidt, are not just incremental improvements but game-changers that could reshape our interaction with technology and the world at large.

Eric Schmidt says in the next year, AI models will unite three key pillars: very large context windows, agents and text-to-action, and no-one understands what the impact will be but it will involve everyone having a fleet of AI agents at their command pic.twitter.com/roYSfZGQ5J

— Tsarathustra (@tsarnick) August 13, 2024

The rise of multimodal AI agents — from 11onze.cat

Technology companies are investing large amounts of money in creating new multimodal artificial intelligence models and algorithms that can learn, reason and make decisions autonomously after collecting and analysing data.

The future of multimodal agents

In practical terms, a multimodal AI agent can, for example, analyse a text while processing an image, spoken language, or an audio clip to give a more complete and accurate response, both through voice and text. This opens up new possibilities in various fields: from education and healthcare to e-commerce and customer service.

AI Change Management: 41 Tactics to Use (August 2024) — from flexos.work by Daan van Rossum

Future-proof companies are investing in driving AI adoption, but many don’t know where to start. The experts recommend these 41 tips for AI change management.

As Matt Kropp told me in our interview, BCG has a 10-20-70 rule for AI at work:

- 10% is the LLM or algorithm

- 20% is the software layer around it (like ChatGPT)

- 70% is the human factor

This 70% is exactly why change management is key in driving AI adoption.

But where do you start?

As I coach leaders at companies like Apple, Toyota, Amazon, L’Oréal, and Gartner in our Lead with AI program, I know that’s the question on everyone’s minds.

I don’t believe in gatekeeping this information, so here are 41 principles and tactics I share with our community members looking for winning AI change management principles.

Claude is so good

Prompt:

——–

I am using a video generator

Please give me a map of all the different types of shots and things I can enter for my prompt.

Output

——– pic.twitter.com/JSEo3qwg7v

— Riley Brown (@rileybrown_ai) June 29, 2024