ElevenLabs’ AI Voice Generator Can Now Fake Your Voice in 30 Languages — from gizmodo.com by Kyle Barr

ElevenLabs said its AI voice generator is out of beta, saying it would support video game and audiobook creators with cheap audio.

According to ElevenLabs, the new Multilingual v2 model promises it can produce “emotionally rich” audio in a total of 30 languages. The company offers two AI voice tools: one is a text-to-speech model, and the other is the “VoiceLab,” which lets paying users clone a voice by inputting fragments of their (or others’) speech into the model to create a kind of voice clone. With the v2 model, users can get these generated voices to start speaking in Greek, Malay, or Turkish.

…

Since then, ElevenLabs claims it has integrated new measures to ensure users can only clone their own voice. Users need to verify their speech with a text captcha prompt, which is then compared to the original voice sample.

From DSC:

I don’t care what they say regarding safeguards/proof of identity/etc. This technology has been abused and will be abused in the future. We can count on it. The question now is, how do we deal with it?

Google, Amazon, Nvidia and other tech giants invest in AI startup Hugging Face, sending its valuation to $4.5 billion — from cnbc.com by Kif Leswing

But Hugging Face produces a platform where AI developers can share code, models, and data sets, and use the company’s developer tools to get open-source artificial intelligence models running more easily. In particular, Hugging Face often hosts weights, or large files with lists of numbers, which are the heart of most modern AI models.

While Hugging Face has developed some models, like BLOOM, its primary product is its website platform, where users can upload models and their weights. It also develops a series of software tools called libraries that allow users to get models working quickly, to clean up large datasets, or to evaluate their performance. It also hosts some AI models in a web interface so end users can experiment with them.
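To make the "weights are lists of numbers" idea concrete, here is a minimal sketch in plain Python. The toy model, the `predict` helper, and the JSON storage format are all hypothetical illustrations and are not taken from the article or from Hugging Face's tooling; real model files typically use binary formats and hold millions or billions of values.

```python
import json

# Hypothetical toy "model": a single linear layer, y = w . x + b.
# Its weights are nothing more than a few numbers.
weights = {"w": [0.5, -1.0, 2.0], "b": 0.25}

# Saving the model means writing those numbers out (JSON here, for readability).
serialized = json.dumps(weights)


def predict(params, inputs):
    """Return the weighted sum of the inputs plus the bias."""
    return sum(w * x for w, x in zip(params["w"], inputs)) + params["b"]


# Anyone who loads the same numbers gets the same model behavior.
loaded = json.loads(serialized)
print(predict(loaded, [1.0, 2.0, 3.0]))
```

Sharing the serialized numbers is, in miniature, what uploading a model's weights to a hosting platform amounts to: the code describing the computation is small, and the numbers carry what the model has learned.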

The global semiconductor talent shortage — from www2.deloitte.com

How to solve semiconductor workforce challenges

Numerous skills are required to grow the semiconductor ecosystem over the next decade. Globally, we will need tens of thousands of skilled tradespeople to build new plants to increase and localize manufacturing capacity: electricians, pipefitters, welders; thousands more graduate electrical engineers to design chips and the tools that make the chips; more engineers of various kinds in the fabs themselves, but also operators and technicians. And if we grow the back end in Europe and the Americas, that equates to even more jobs.

Each of these job groups has distinct training and educational needs; however, the number of students in semiconductor-focused programs (for example, undergraduates in semiconductor design and fabrication) has dwindled. Skills are also evolving within these job groups, in part due to automation and increased digitization. Digital skills, such as cloud, AI, and analytics, are needed in design and manufacturing more than ever.

The chip industry has long partnered with universities and engineering schools. Going forward, they also need to work more with local tech schools, vocational schools, and community colleges; and other organizations, such as the National Science Foundation in the United States.

Our principles for partnering with the music industry on AI technology — from blog.youtube (Google) by Neal Mohan, CEO, YouTube

- Principle #1: AI is here, and we will embrace it responsibly together with our music partners.

- Principle #2: AI is ushering in a new age of creative expression, but it must include appropriate protections and unlock opportunities for music partners who decide to participate.

- Principle #3: We’ve built an industry-leading trust and safety organization and content policies. We will scale those to meet the challenges of AI.

Developers are now using AI for text-to-music apps — from techcrunch.com by Ivan Mehta

Brett Bauman, the developer of PlayListAI (previously LineupSupply), launched a new app called Songburst on the App Store this week. The app doesn’t have a steep learning curve. You just have to type in a prompt like “Calming piano music to listen to while studying” or “Funky beats for a podcast intro” to let the app generate a music clip.

If you can’t think of a prompt, the app offers suggestions in different categories, including video, lo-fi, podcast, gaming, meditation and sample.

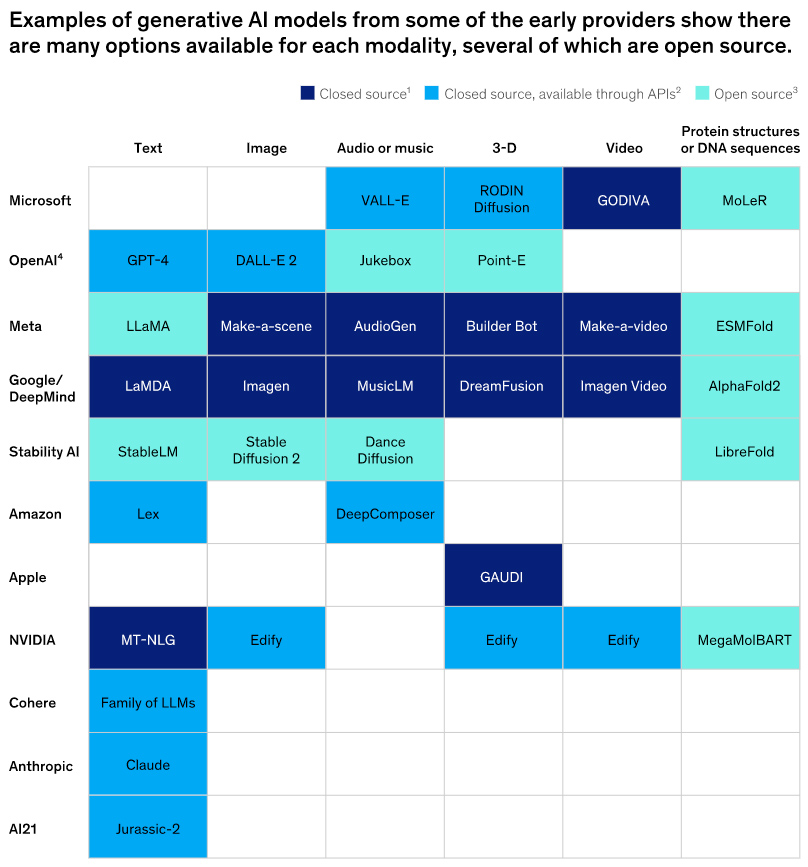

A Generative AI Primer — from er.educause.edu by Brian Basgen

Understanding the current state of technology requires understanding its origins. This reading list provides sources relevant to the form of generative AI that led to natural language processing (NLP) models such as ChatGPT.

Three big questions about AI and the future of work and learning — from workshift.opencampusmedia.org by Alex Swartsel

AI is set to transform education and work today and well into the future. We need to start asking tough questions right now, writes Alex Swartsel of JFF.

- How will AI reshape jobs, and how can we prepare all workers and learners with the skills they’ll need?

- How can education and workforce leaders equitably adopt AI platforms to accelerate their impact?

- How might we catalyze sustainable policy, practice, and investments in solutions that drive economic opportunity?

“As AI reshapes both the economy and society, we must collectively call for better data, increased accountability, and more flexible support for workers,” Swartsel writes.

The Current State of AI for Educators (August, 2023) — from drphilippahardman.substack.com by Dr. Philippa Hardman

A podcast interview with the University of Toronto on where we’re at & where we’re going.