5 influencers predict AI’s impact on business in 2019 — from martechadvisor.com by Christine Crandell

Excerpt:

With Artificial Intelligence (AI) already proving its worth to adopters, it’s not surprising that an increasing number of companies will implement and leverage AI in 2019. Now, it’s no longer a question of whether AI will take off. Instead, it’s a question of which companies will keep up. Here are five predictions from five influencers on the impact AI will have on businesses in 2019, writes Christine Crandell, President, New Business Strategies.

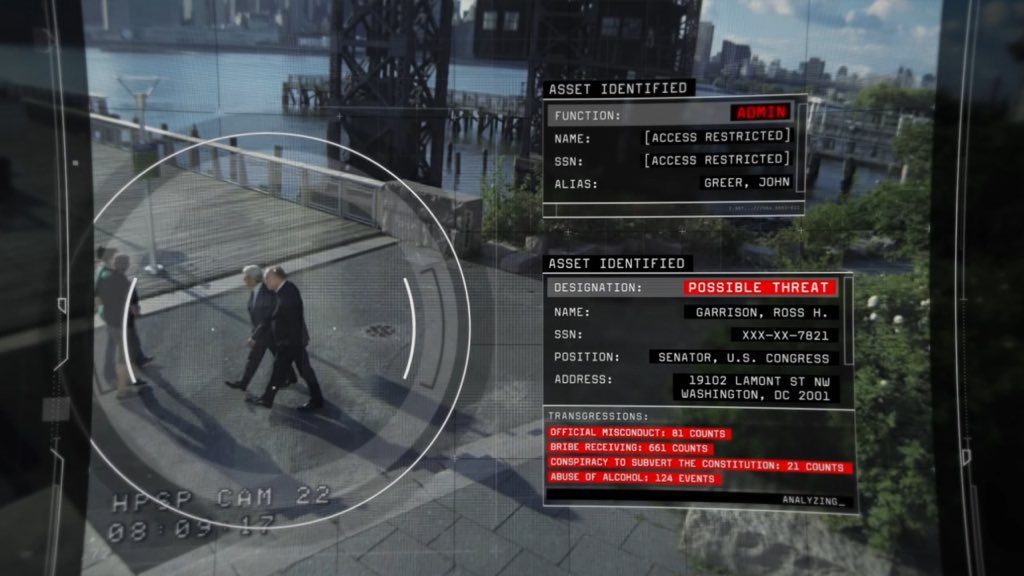

Should we be worried about computerized facial recognition? — from newyorker.com by David Owen

The technology could revolutionize policing, medicine, even agriculture—but its applications can easily be weaponized.

Facial-recognition technology is advancing faster than the people who worry about it have been able to think of ways to manage it. Indeed, in any number of fields the gap between what scientists are up to and what nonscientists understand about it is almost certainly greater now than it has been at any time since the Manhattan Project.

From DSC:

This is why law schools, legislatures, and the federal government need to become much more responsive to emerging technologies. The pace of technological change has accelerated. But have the other important institutions of our society adapted to this new pace?

Andrew Ng sees an eternal springtime for AI — from zdnet.com by Tiernan Ray

Former Google Brain leader and Baidu chief scientist Andrew Ng lays out the steps companies should take to succeed with artificial intelligence, and explains why there’s unlikely to be another “AI winter” like in times past.

Google Lens now recognizes over 1 billion products — from venturebeat.com by Kyle Wiggers, with thanks to Marie Conway for her tweet on this

Excerpt:

Google Lens, Google’s AI-powered analysis tool, can now recognize over 1 billion products from Google’s retail and price comparison portal, Google Shopping. That’s four times the number of objects Lens covered in October 2017, when it made its debut.

Aparna Chennapragada, vice president of Google Lens and augmented reality at Google, revealed the tidbit in a retrospective blog post about Google Lens’ milestones.

Amazon Customer Receives 1,700 Audio Files Of A Stranger Who Used Alexa — from npr.org by Sasha Ingber

Excerpt:

When an Amazon customer in Germany contacted the company to review his archived data, he wasn’t expecting to receive recordings of a stranger speaking in the privacy of a home.

The man requested to review his data in August under a European Union data protection law, according to a German trade magazine called c’t. Amazon sent him a download link to tracked searches on the website — and 1,700 audio recordings by Alexa that were generated by another person.

“I was very surprised about that because I don’t use Amazon Alexa, let alone have an Alexa-enabled device,” the customer, who was not named, told the magazine. “So I randomly listened to some of these audio files and could not recognize any of the voices.”