McKinsey: automation may wipe out 1/3 of America’s workforce by 2030 — from axios.com by Steve LeVine

Excerpt (emphasis DSC):

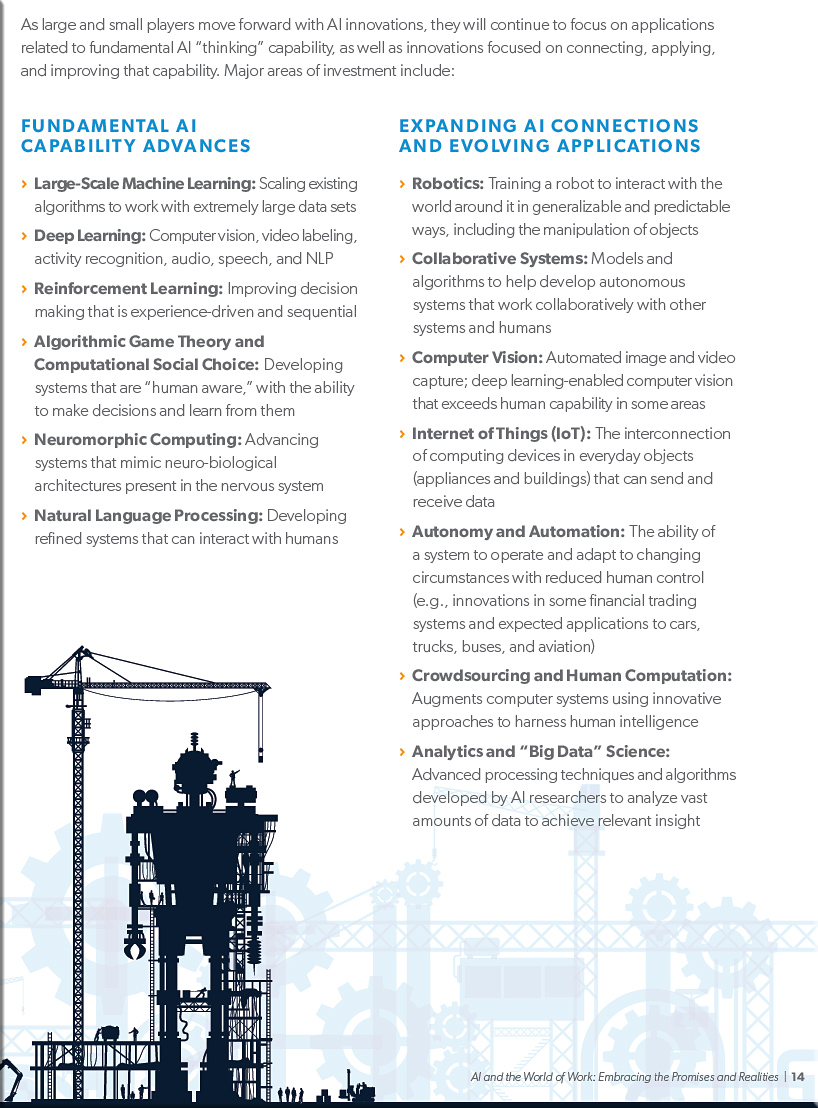

In a new study that is optimistic about automation yet stark in its appraisal of the challenge ahead, McKinsey says massive government intervention will be required to hold societies together against the ravages of labor disruption over the next 13 years. Up to 800 million people—including a third of the work force in the U.S. and Germany—will be made jobless by 2030, the study says.

The bottom line: The economy of most countries will eventually replace the lost jobs, the study says, but many of the unemployed will need considerable help to shift to new work, and salaries could continue to flatline. “It’s a Marshall Plan size of task,” Michael Chui, lead author of the McKinsey report, tells Axios.

In the eight-month study, the McKinsey Global Institute, the firm’s think tank, found that almost half of those thrown out of work—375 million people, comprising 14% of the global work force—will have to find entirely new occupations, since their old one will either no longer exist or need far fewer workers. Chinese will have the highest such absolute numbers—100 million people changing occupations, or 12% of the country’s 2030 work force.

I asked Chui what surprised him the most of the findings. “The degree of transition that needs to happen over time is a real eye opener,” he said.

The transition compares to the U.S. shift from a largely agricultural to an industrial-services economy in the early 1900s forward. But this time, it’s not young people leaving farms, but mid-career workers who need new skills.

From DSC:

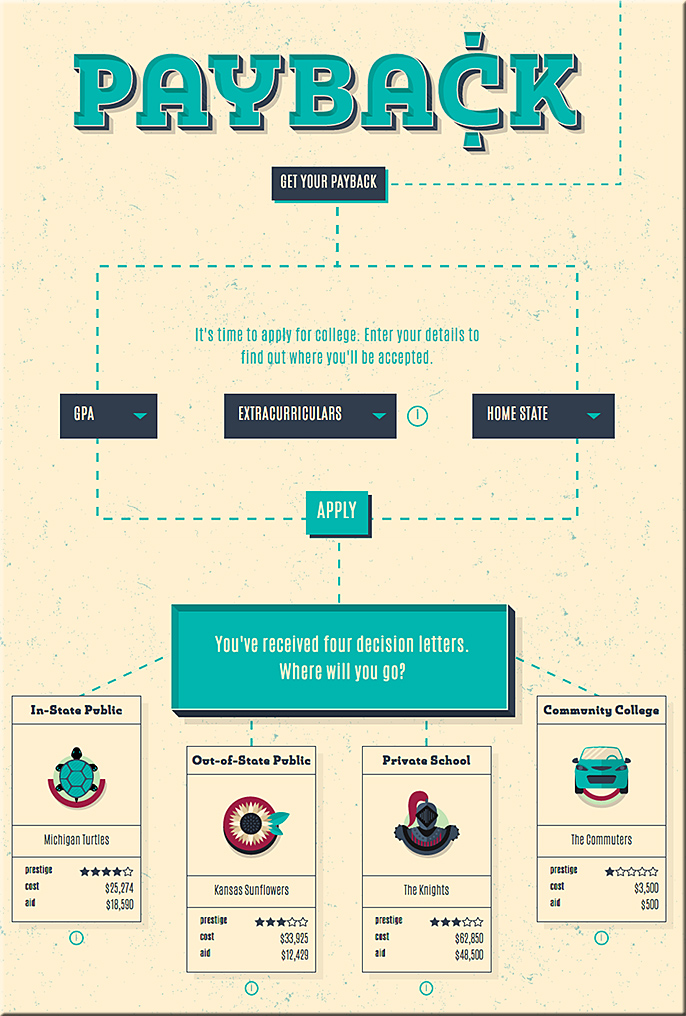

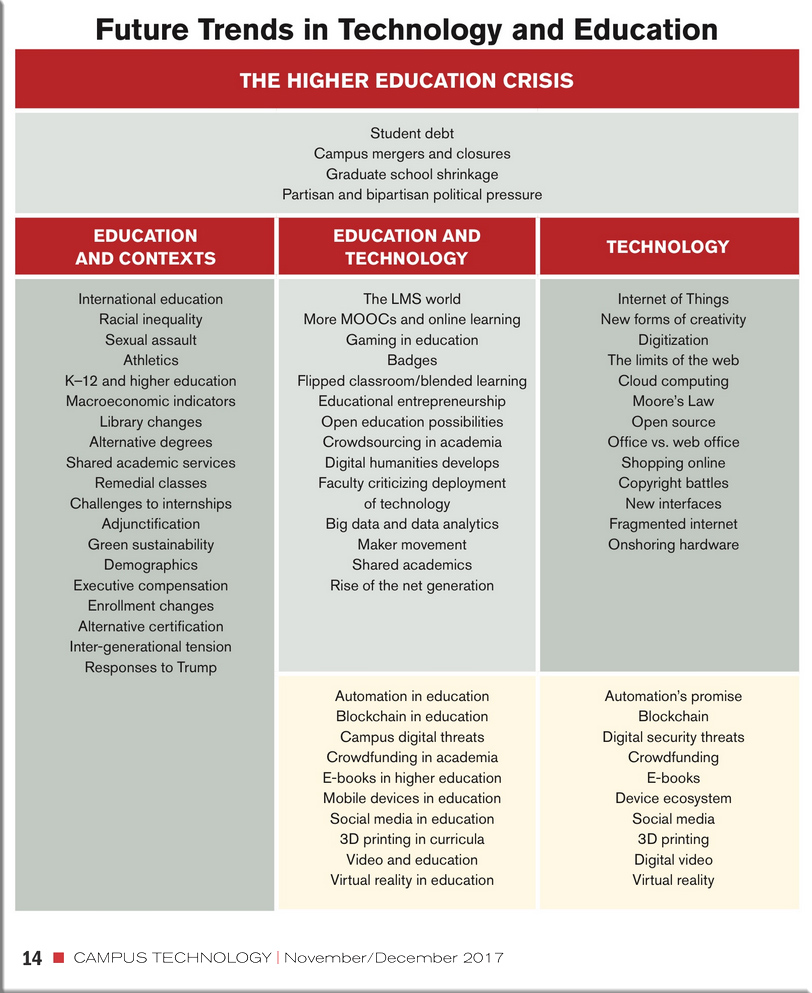

Higher education, and likely (strictly) vocational training outside of higher ed, is simply not ready for this! MAJOR reinvention will be necessary, and as soon as 2018, according to Forrester Research.

One of the key values that traditional institutions of higher education can bring to the table is helping people through this gut-wrenching transition: identifying which jobs are going to last for the next 5-10+ years and which ones won't, and then preparing the necessary programs quickly enough to meet the demands of the new economy.

Students and entrepreneurs out there: they say you should look around to see where the needs are and then develop products and/or services to meet those needs. Well, here you go!

As a member of the International Education Committee, at edX we are extremely aware of the changing nature of work and jobs. It is predicted that 50 percent of current jobs will disappear by 2030.

Anant Agarwal, CEO and Founder of edX, and Professor of Electrical Engineering and Computer Science at MIT

(source)

Addendum:

Automation threatens 800 million jobs, but technology could still save us, says report — from theverge.com by James Vincent

New analysis says governments need to act now to help a labor force in flux

Excerpt:

A new report predicts that by 2030, as many as 800 million jobs could be lost worldwide to automation. The study, compiled by the McKinsey Global Institute, says that advances in AI and robotics will have a drastic effect on everyday working lives, comparable to the shift away from agricultural societies during the Industrial Revolution. In the US alone, between 39 and 73 million jobs stand to be automated — making up around a third of the total workforce.

If a computer can do one-third of your job, what happens next? Do you get trained to take on new tasks, or does your boss fire you, or some of your colleagues? What if you just get a pay cut instead? Do you have the money to retrain, or will you be forced to take the hit in living standards?