A future-facing minister, a young inventor and a shared vision: An AI tutor for every student — from news.microsoft.com by Chris Welsch

The Ministry of Education and Pativada see what has become known as the U.A.E. AI Tutor as a way to provide students with 24/7 assistance as well as help level the playing field for those families who cannot afford a private tutor. At the same time, the AI Tutor would be an aid to teachers, they say. “We see it as a tool that will support our teachers,” says Aljughaiman. “This is a supplement to classroom learning.”

If everything goes according to plan, every student in the United Arab Emirates’ school system will have a personal AI tutor – that fits in their pockets.

It’s a story that involves an element of coincidence, a forward-looking education minister and a tech team led by a chief executive officer who still lives at home with his parents.

In February 2023, the U.A.E.’s education minister, His Excellency Dr. Ahmad Belhoul Al Falasi, announced that the ministry was embracing AI technology and pursuing the idea of an AI tutor to help Emirati students succeed. And he also announced that the speech he presented had been written by ChatGPT. “We should not demonize AI,” he said at the time.

Fostering deep learning in humans and amplifying our intelligence in an AI World — from stefanbauschard.substack.com by Stefan Bauschard

A free 288-page report on advancements in AI and related technology, their effects on education, and our practical support for AI-amplified human deep learning

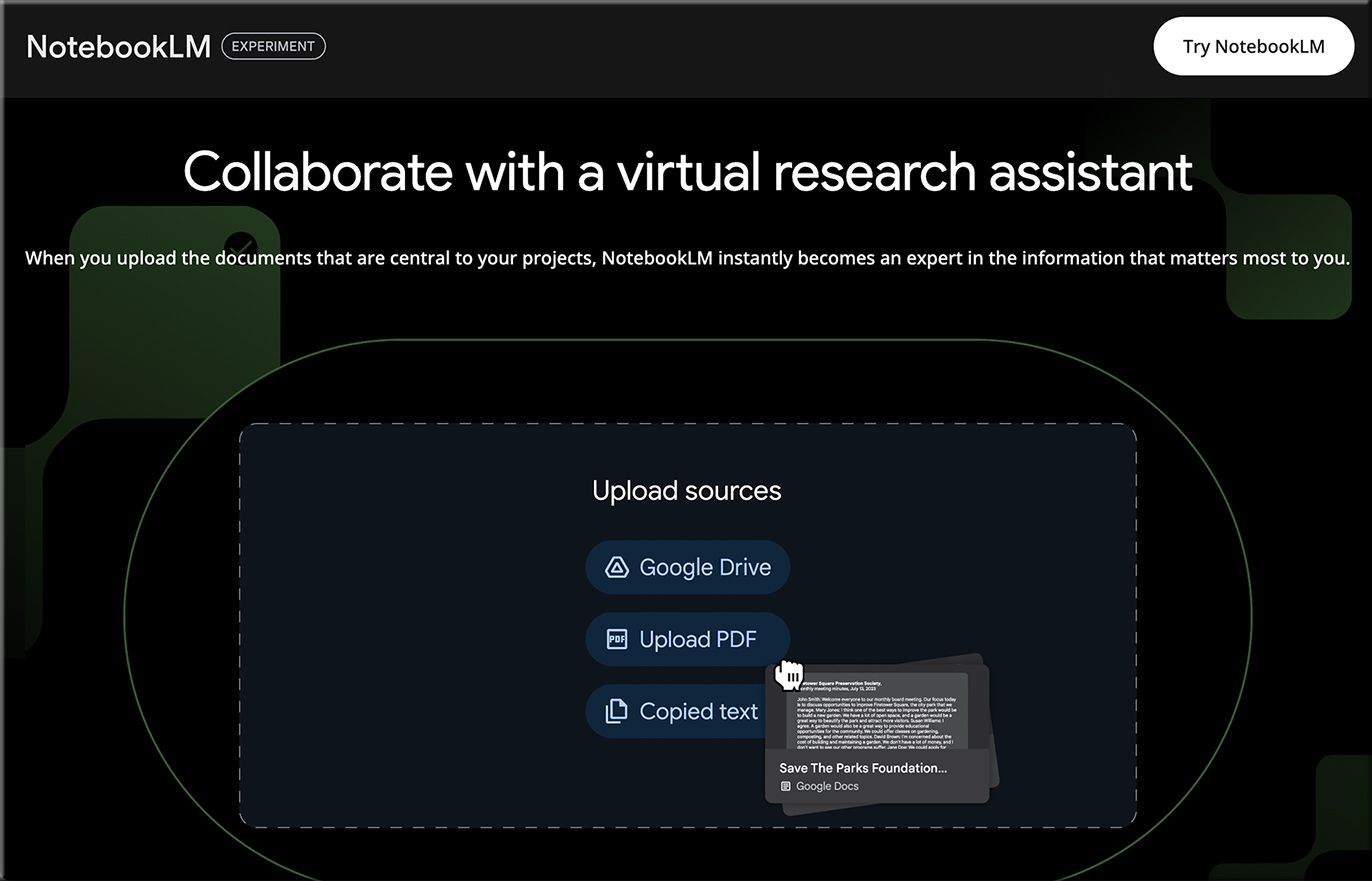

Six weeks ago, Dr. Sabba Quidwai and I accidentally stumbled upon an idea to compare the deep learning revolution in computer science to the mostly lacking deep learning efforts in education (Mehta & Fine). I started writing, and as these things often go with me, I thought there were many other things that would be useful to think through and for educators to know, and we ended up with this 288-page report.

***

Here’s an abstract from that report:

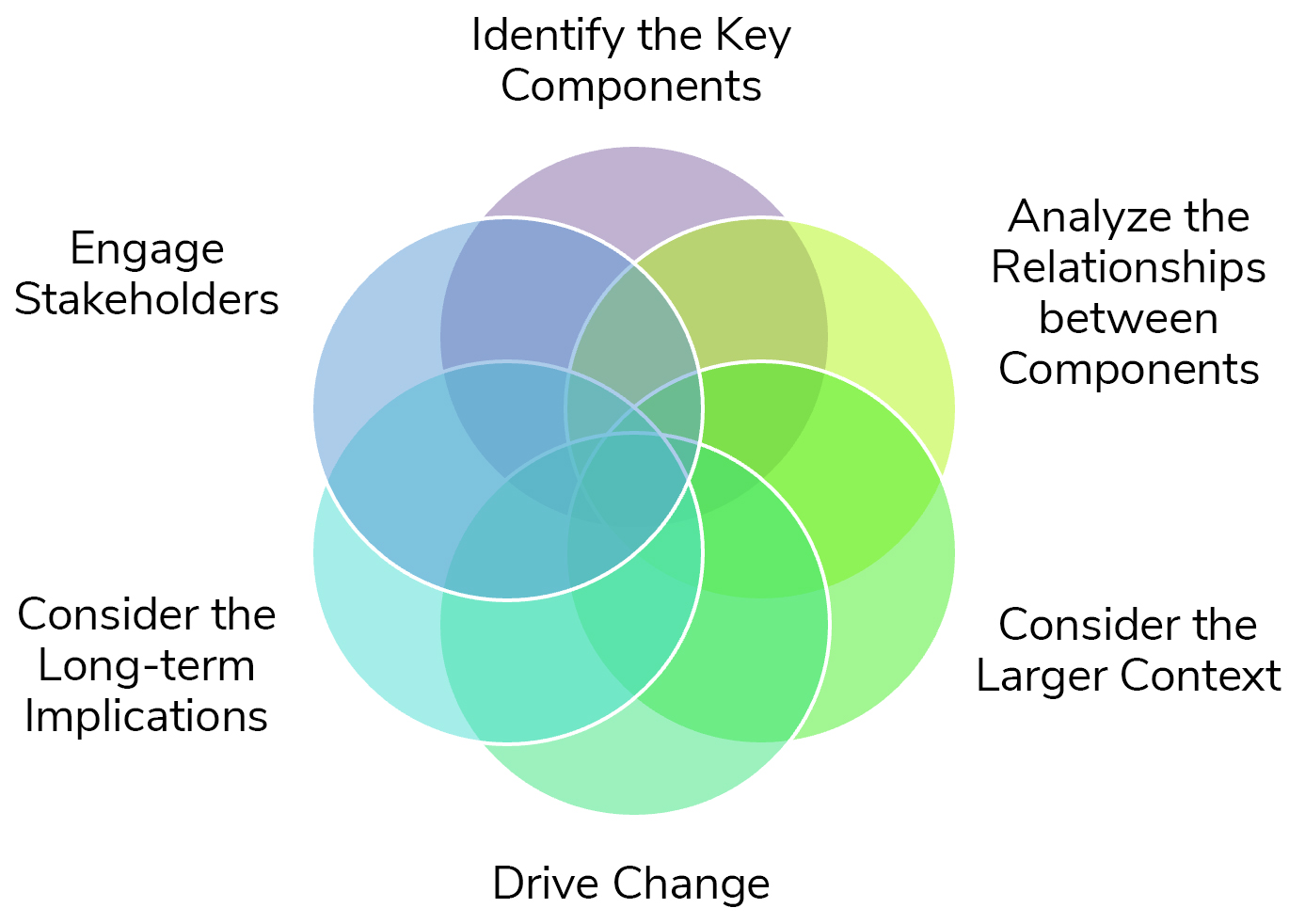

This report looks at the growing gap between the attention paid to the development of intelligence in machines and humans. While computer scientists have made great strides in developing human intelligence capacities in machines using deep learning technologies, including the abilities of machines to learn on their own, a significant part of the education system has not kept up with developing the intelligence capabilities in people that will enable them to succeed in the 21st century. Instead of fully embracing pedagogical methods that place primary emphasis on promoting collaboration, critical thinking, communication, creativity, and self-learning through experiential, interdisciplinary approaches grounded in human deep learning and combined with current technologies, a substantial portion of the educational system continues to heavily rely on traditional instructional methods and goals. These methods and goals prioritize knowledge acquisition and organization, areas in which machines already perform substantially better than people.

Also from Stefan Bauschard, see:

- Debating in the World of AI

Performative assessment, learning to collaborate with humans and machines, and developing special human qualities

13 Nuggets of AI Wisdom for Higher Education Leaders — from jeppestricker.substack.com by Jeppe Klitgaard Stricker

Actionable AI Guidance for Higher Education Leaders

Incentivize faculty AI innovation with AI.

Invest in people first, then technology.

On teaching, learning, and assessment. AI has captured the attention of all institutional stakeholders. Capitalize to reimagine pedagogy and evaluation. Rethink lectures, examinations, and assignments to align with workforce needs. Consider incorporating Problem-Based Learning, building portfolios and proof of work, and conducting oral exams. And use AI to provide individualized support and assess real-world skills.

Actively engage students.

Some thoughts from George Siemens re: AI:

Sensemaking, AI, and Learning (SAIL), a regular look at how AI is impacting learning.

Our education system has a uni-dimensional focus: learning things. Of course, we say we care about developing the whole learner, but the metrics that matter (grades, transcripts) that underpin the education system are largely focused on teaching students things that have long been Google-able but are now increasingly doable by AI. Developments in AI matter in ways that call into question large parts of what happens in our universities. This is not a statement that people don’t need to learn core concepts and skills. My point is that the fulcrum of learning has shifted. Knowing things will continue to matter less and less going forward as AI improves its capabilities. We’ll need to start intentionally developing broader and broader attributes of learners: metacognition, wellness, affect, social engagement, etc. Education will continue to shift toward human skills and away from primary assessment of knowledge gains disconnected from skills and practice and ways of being.

AI, the Next Chapter for College Librarians — from insidehighered.com by Lauren Coffey

Librarians have lived through the disruptions of fax machines, websites and Wikipedia, and now they are bracing to do it again as artificial intelligence tools go mainstream: “Maybe it’s our time to shine.”

A few months after ChatGPT launched last fall, faculty and students at Northwestern University had many questions about the building wave of new artificial intelligence tools. So they turned to a familiar source of help: the library.

“At the time it was seen as a research and citation problem, so that led them to us,” said Michelle Guittar, head of instruction and curriculum support at Northwestern University Libraries.

In response, Guittar, along with librarian Jeanette Moss, created a landing page in April, “Using AI Tools in Your Research.” At the time, the university itself had yet to put together a comprehensive resource page.

From Dr. Nick Jackson’s recent post on LinkedIn:

Last night the Digitech team of junior and senior teachers from Scotch College Adelaide showcased their 2023 experiments, innovation, successes and failures with technology in education. Accompanied by student digital leaders, we saw the following:

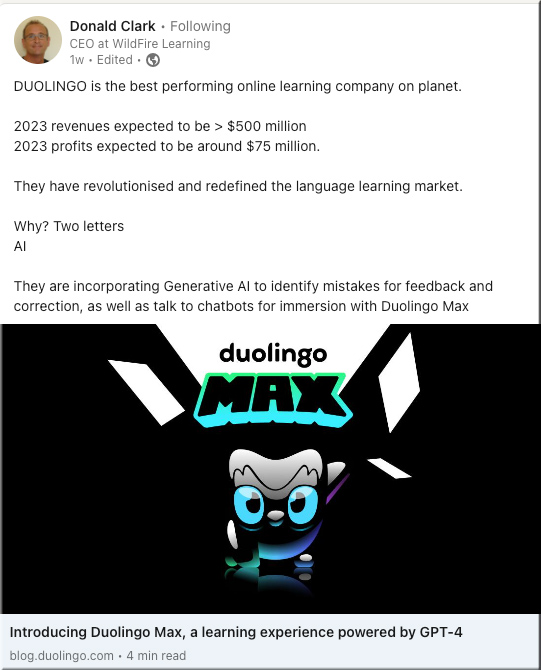

- AI used for language learning where avatars can help with accents

- Motion-capture suits being used in media studies

- AI used in assessment and automatic grading of work

- AR used in design technology

- VR used for immersive Junior school experiences

- A teacher’s AI toolkit that has changed teaching practice and workflow

- AR and the EyeJack app used by students to create dynamic artwork

- VR use in careers education in Senior school

- How ethics around AI is taught to Junior school students from Year 1

- Experiments with MyStudyWorks

Almost an Agent: What GPTs can do — from oneusefulthing.org by Ethan Mollick

What would a real AI agent look like? A simple agent that writes academic papers would, after being given a dataset and a field of study, read about how to compose a good paper, analyze the data, conduct a literature review, generate hypotheses, test them, and then write up the results, all without intervention. You put in a request, you get a Word document that contains a draft of an academic paper.

A process kind of like this one:

What I Learned From an Experiment to Apply Generative AI to My Data Course — from edsurge.com by Wendy Castillo

As an educator, I have a duty to remain informed about the latest developments in generative AI, not only to ensure learning is happening, but to stay on top of what tools exist, what benefits and limitations they have, and most importantly, how students might be using them.

However, it’s also important to acknowledge that the quality of work produced by students now requires higher expectations and potential adjustments to grading practices. The baseline is no longer zero, it is AI. And the upper limit of what humans can achieve with these new capabilities remains an unknown frontier.

Artificial Intelligence in Higher Education: Trick or Treat? — from tytonpartners.com by Kristen Fox and Catherine Shaw
