Thinking with Colleagues: AI in Education — from campustechnology.com by Mary Grush

A Q&A with Ellen Wagner

Wagner herself recently relied on the power of collegial conversations to probe the question: What’s on the minds of educators as they make ready for the growing influence of AI in higher education? CT asked her for some takeaways from the process.

We are in the very early days of seeing how AI is going to affect education. Some of us are going to need to stay focused on the basic research to test hypotheses. Others are going to dive into laboratory “sandboxes” to see if we can build some new applications and tools for ourselves. Still others will continue to scan newsletters like ProductHunt every day to see what kinds of things people are working on. It’s going to be hard to keep up, to filter out the noise on our own. That’s one reason why thinking with colleagues is so very important.

Mary and Ellen linked to “What Is Top of Mind for Higher Education Leaders about AI?” — from northcoasteduvisory.com. Below are some excerpts from those notes:

We are interested in how K-12 education will change in terms of foundational learning. With in-class, active learning designs, will younger students do a lot more intensive building of foundational writing and critical thinking skills before they get to college?

- The Human in the Loop: AI is built using math: think of applied statistics on steroids. Humans will be needed more than ever to manage, review and evaluate the validity and reliability of results. Curation will be essential.

- We will need to generate ideas about how to address AI factors such as privacy, equity, bias, copyright, intellectual property, accessibility, and scalability.

- Have other institutions experimented with AI detection and/or held off on emerging tools related to this? We have just recently adjusted guidance and paused some tools related to this, given the massive inaccuracies in detection (and related downstream issues in faculty-elevated conduct cases).

Even though we learn repeatedly that innovation has a lot to do with effective project management and a solid message that helps people understand what they can do to implement change, people really need innovation to be more exciting and visionary than that. This is the place where we all need to help each other stay the course of change.

Along these lines, also see:

What people ask me most. Also, some answers. — from oneusefulthing.org by Ethan Mollick

A FAQ of sorts

I have been talking to a lot of people about Generative AI, from teachers to business executives to artists to people actually building LLMs. In these conversations, a few key questions and themes keep coming up over and over again. Many of those questions are more informed by viral news articles about AI than by the real thing, so I thought I would try to answer a few of the most common, to the best of my ability.

I can’t blame people for asking because, for whatever reason, the companies actually building and releasing Large Language Models often seem allergic to providing any sort of documentation or tutorial besides technical notes. I was given much better documentation for the generic garden hose I bought on Amazon than for the immensely powerful AI tools being released by the world’s largest companies. So, it is no surprise that rumor has been the way that people learn about AI capabilities.

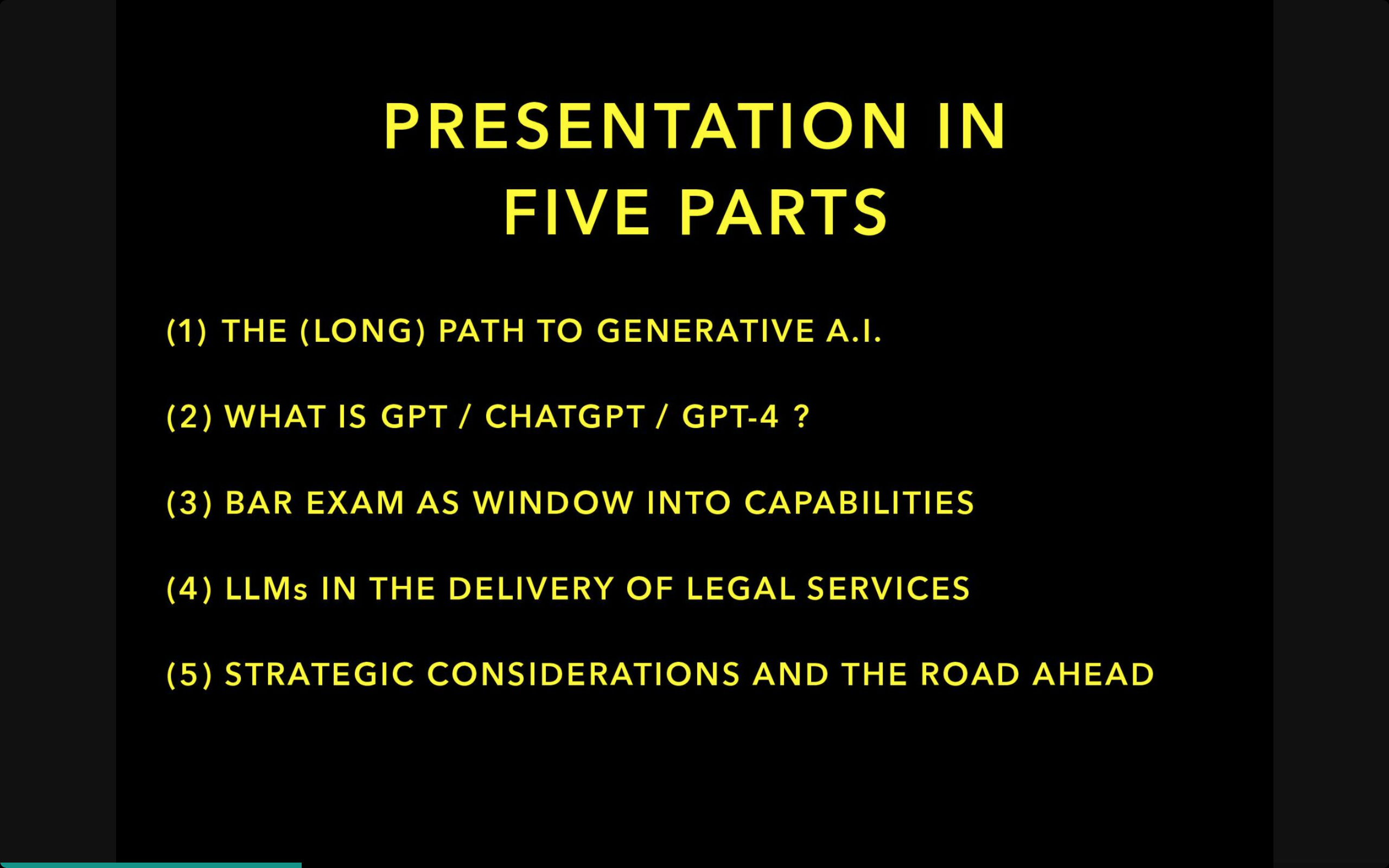

Currently, there are only really three AIs to consider: (1) OpenAI’s GPT-4 (which you can get access to with a Plus subscription or via Microsoft Bing in creative mode, for free), (2) Google’s Bard (free), or (3) Anthropic’s Claude 2 (free, but paid mode gets you faster access). As of today, GPT-4 is the clear leader, Claude 2 is second best (but can handle longer documents), and Google trails, but that will likely change very soon when Google updates its model, which is rumored to be happening in the near future.