The AI Education Revolution — from linkedin.com by Whitney Kilgore

We’re witnessing the biggest shift in education since the textbook—and most institutions are still deciding whether to allow it.

Ben Bernanke and Janet Yellen: The Fed Must Be Independent — an opinion from nytimes.com by Ben Bernanke and Janet Yellen; this is a gifted article

As former chairs of the Federal Reserve, we know from our experiences and our reading of history that the ability of the central bank to act independently is essential for its effective stewardship of the economy. Recent attempts to compromise that independence, including the president’s demands for a radical reduction in interest rates and his threats to fire its chair, Jerome Powell, if the Fed does not comply, risk lasting and serious economic harm. They undermine not only Mr. Powell but also all future chairs and, indeed, the credibility of the central bank itself.

Independence for the Federal Reserve to set interest rates does not imply a lack of democratic accountability. Congress has set in law the goals that the Fed must aim to achieve — maximum employment and stable prices — and Fed leaders report regularly to congressional committees on their progress toward those goals. Rather, independence means that monetary policymakers are permitted to use fact-based analysis and their best professional judgment in determining how best to reach their mandated goals, without regard to short-term political pressures.

Of course, Fed policymakers, being human, make mistakes. But an overwhelming amount of evidence, drawn from the experiences of both the United States and other countries, has shown that keeping politics out of monetary policy decisions leads to better economic outcomes.

9 Therefore God exalted him to the highest place

and gave him the name that is above every name,

10 that at the name of Jesus every knee should bow,

in heaven and on earth and under the earth,

11 and every tongue acknowledge that Jesus Christ is Lord,

to the glory of God the Father.

4 Show me your ways, Lord,

teach me your paths.

5 Guide me in your truth and teach me,

for you are God my Savior,

and my hope is in you all day long.

6 Remember, Lord, your great mercy and love,

for they are from of old.

11 For the sake of your name, Lord,

forgive my iniquity, though it is great.

12 Who, then, are those who fear the Lord?

He will instruct them in the ways they should choose.

In Iowa, Trump Begins Task of Selling His Bill to the American Public — from nytimes.com by Tyler Pager

President Trump has spent days cajoling Republicans to support his spending bill. He will also have to sell it to a skeptical public as Democrats focus on all the ways it helps the wealthy.

President Trump took a victory lap on Thursday night after the House passed his sprawling domestic policy bill, which he muscled through Congress even as many in his party fear it will leave them vulnerable to political attacks ahead of next year’s midterm elections. (From DSC: It likely will do just that, and very possibly well beyond the midterm elections.)

…

Just 29 percent of voters support the legislation, according to a recent Quinnipiac University poll. Roughly two-thirds of Republicans supported the bill in that poll, a relatively low figure from the president’s own party for his signature legislation, and independents opposed it overwhelmingly.

From DSC:

Did you get that? Just ***29%*** of voters supported the legislation. But it passed anyway. I’m left thinking…so much for democracy. And I’m also disheartened by the caving of the other two branches of our government. The lack of leadership is staggering. But I guess when you remove all leaders that oppose your way of thinking, you have only Yes men/followers and Yes women/followers left. It’s taken years for the Republican Party to carefully orchestrate the ownership of those other branches. (BTW, I celebrate the handful of Republican leaders in the Senate like Sen. Thom Tillis and in the House who did not cave to Trump and Johnson, but instead voted with their own hearts and minds. They showed true strength of conviction and courage. It will likely cost them, but they can look in the mirror and feel good about themselves and what they’ve done.)

Look out, Republicans (and I’ve voted for both Republican and Democratic presidents in the past). Perhaps July 4th, 2025 will mark the downfall of the Republican Party in America. Time will tell. But I’m hopeful that we can find more common ground.

Regardless, it says a lot about who we, as Americans, are these days — that he’s even in the presidency. I highly doubt he would have been there even a generation or two ago. We’re a nation in decline. It’s been hard to watch this through the years. I’m no saint, but I’m also not the President.

Speaking of matters of faith…I can’t help but wonder what the LORD is doing in this. Is He humbling America or is it something far worse…? He’s justified in whatever He has decided to do. Americans have been dissing Him for decades, while refusing to give Him the credit due His Name. Time will tell, my friends…time will tell.

Also see:

…

The House passed a sweeping bill to extend tax cuts and slash social safety net programs. The budget office reported the measure would increase U.S. national debt by at least $3.4 trillion over a decade.


Also see:

- Trump ignored GOP warnings to gamble on a politically risky bill — from washingtonpost.com by Liz Goodwin, Marianna Sotomayor, Theodoric Meyer, and Emily Davies

In the president’s eagerness to score a win and extend tax cuts, he walked away from a key campaign promise on Medicaid.

To get his bill over the line in time for a self-imposed Friday deadline, Trump pressured Republican lawmakers to set aside their concerns about the political consequences of yanking benefits from voters while adding trillions to the federal deficit.

How the national debt affects the U.S. — and you — in 10 charts — from washingtonpost.com by Jacob Bogage; this is a GIFTED article

The national debt already exceeds $36 trillion and is growing at historic rates. That has cascading consequences for the government and economy.

From DSC:

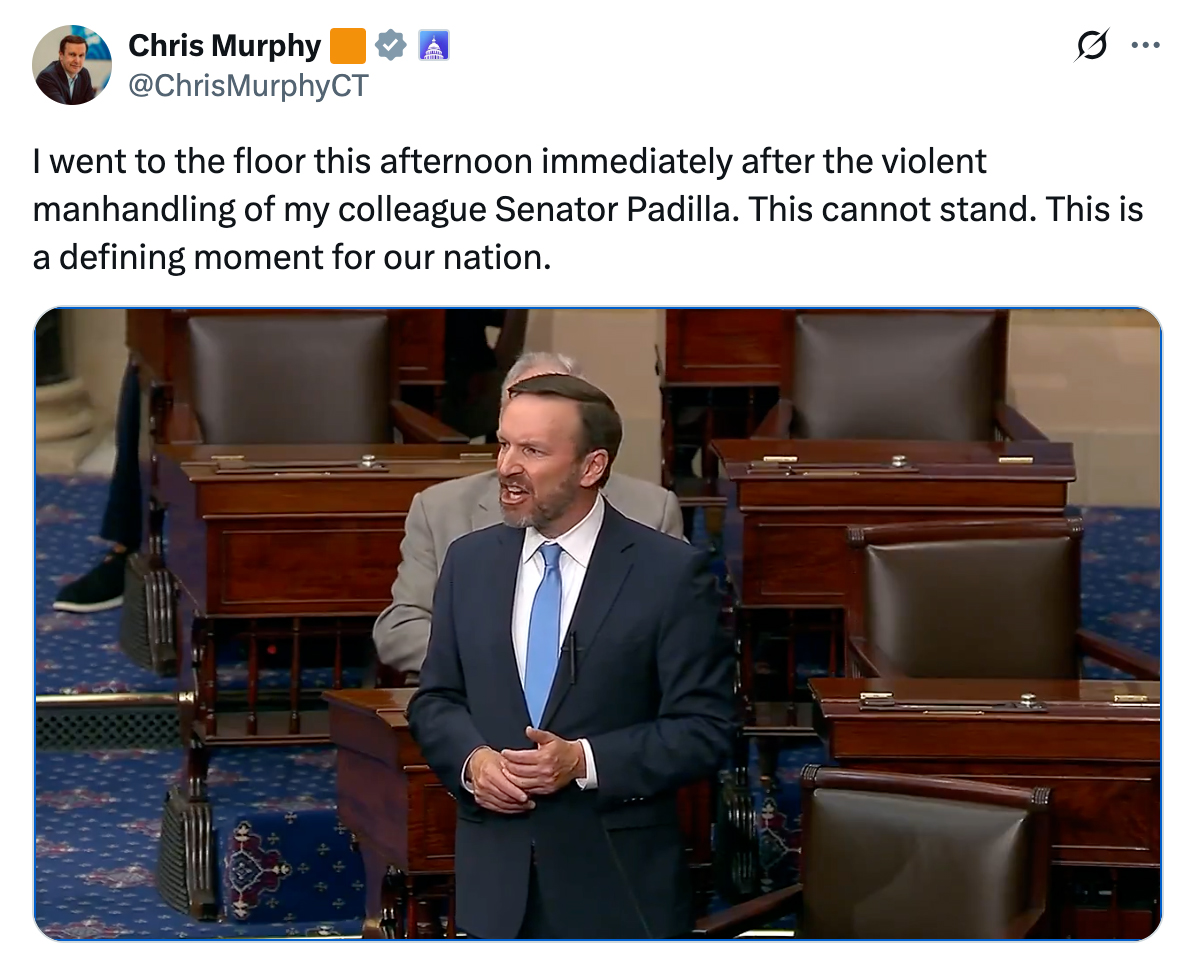

As you can see and hear below, Senator Alex Padilla had been trying for several weeks to get answers from Homeland Security, but wasn’t hearing much back. When he heard that the Secretary of Homeland Security, Kristi Noem, was holding a press conference down the hallway, he attended it to see if he could get some answers to his questions. And while I don’t have all the details on how this situation unfolded, there is NO WAY that a U.S. Senator should be pushed out of a conference room and then pushed to the ground and handcuffed for trying to get answers for his constituents! No way!

As others in the videos below assert, a line has been crossed in our country!

Let’s move to impeach Donald Trump and also rid his administration of these incompetent individuals who are destroying our democracy! If they don’t like the Constitution and how our country has been governed for over 200 years, then perhaps they should consider leaving this country.

The actions they are taking are NOT making America great again. They are making America the stench of the world.

And it’s not just Donald Trump and members of his administration that should be held accountable. Let’s also start holding Donald’s instruments of power — such as his ICE Agents and others who behave like them — accountable. To any ICE agents out there, take those damn masks off. You shouldn’t be hiding behind masks.

By the way, the silence from the Republicans is deafening.

If that’s what they do to a United States Senator with a question, imagine what they can do to any American that dares to speak up. We will hold this administration accountable. pic.twitter.com/ZMExvMmZfE

— Senator Alex Padilla (@SenAlexPadilla) June 12, 2025

BREAKING: Sen. Alex Padilla, D-Calif., speaks out after being forcibly removed and cuffed from DHS Sec. Noem’s press conference. pic.twitter.com/3yZWDPYICl

— MSNBC (@MSNBC) June 12, 2025

URGENT BREAKING: Democrat US Senator Alex Padilla was just thrown to the ground and arrested for trying to speak at a DHS Press Conference by Secretary Kristi Noem.

This is Trump’s America. This is so incredibly pathetic.

I am so sad for the state of this country. pic.twitter.com/yI9fKdoYoW

— Brian Krassenstein (@krassenstein) June 12, 2025

This is a United States Senator being violently removed from a public briefing. Many of us are reaching out to Senator Padilla right now and there are facts we need to gather, but this is a very, very serious moment. https://t.co/2a9Zb1cnTk

— Chris Murphy (@ChrisMurphyCT) June 12, 2025

If this is how this administration responds to a Senator with a question, you can only imagine what they’re doing to farmworkers, to cooks, and to day laborers throughout California and across the country.

We will hold this administration accountable. pic.twitter.com/ijy3FRVbWx

— Senator Alex Padilla (@SenAlexPadilla) June 12, 2025

The forcible removal of @SenAlexPadilla from a public press conference accelerates Trump’s authoritarian power grab.

The silence from Republicans is deafening.

Via @SenatorLujan pic.twitter.com/22X7BlainK

— Senate Democrats (@SenateDems) June 12, 2025

BREAKING: Adam Schiff just delivered one of the best speeches we’ve ever seen. This is MAGA’s worst nightmare. Every American needs to see this. pic.twitter.com/dr0Fpbc3fY

— Democratic Wins Media (@DemocraticWins) June 12, 2025

12 PM: I asked Democratic Governors what they would say if President Trump sent federal agents to arrest them.

2 PM: Sitting Senator @SenAlexPadilla was violently detained and handcuffed by federal agents.

You can’t make this stuff up. pic.twitter.com/yK0wLgKczX

— Congressman Maxwell Alejandro Frost (@RepMaxwellFrost) June 12, 2025

Today they have crossed a deep red line.

We, the people, must hold the president and his appointees accountable for this outrageous abuse against American liberty. pic.twitter.com/iPIlR4U5Fv

— Pete Buttigieg (@PeteButtigieg) June 12, 2025

Calif. Senator Forcibly Removed and Handcuffed After Interrupting Noem — from nytimes.com by Shawn Hubler, Jennifer Medina, and Jill Cowan (this is a gifted article)

Alex Padilla, Democrat of California, was shoved out of a room and handcuffed after he tried to question Kristi Noem, the homeland security secretary, during a news conference.

In the tense hyperpartisanship of the moment, the episode quickly swelled into a cause célèbre for both parties. Democratic senators, House members and governors rushed to denounce the treatment of a sitting senator, framing it as the latest escalation in authoritarian actions by the Trump administration. It followed the indictment on Tuesday of Representative LaMonica McIver of New Jersey and the arrest of Mayor Ras Baraka of Newark, after the officials, both Democrats, tried to visit a new immigration detention facility in the city.

Republicans just as eagerly tried to frame Mr. Padilla’s behavior as in line with what they have called the lawlessness of the political left as President Trump tries to combat illegal immigration.

From DSC:

My brother lost a lifelong friend recently and I’ve lost friends and family members waaaay too soon as well. It made me reflect, once again, on the brevity of our lives here on Earth.

Teach us to number our days, that we may gain a heart of wisdom.

Why, you do not even know what will happen tomorrow. What is your life? You are a mist that appears for a little while and then vanishes.

You have made my days a mere handbreadth; the span of my years is as nothing before you. Everyone is but a breath, even those who seem secure.

Lord, what are human beings that you care for them, mere mortals that you think of them? They are like a breath; their days are like a fleeting shadow.

Navigating Career Transitions — from er.educause.edu by Jay James, Mike Richichi, Sarah Buszka, and Wes Johnson

In this episode, we hear from professionals at different stages of their career journeys as they reflect on risk, resilience, and growth. They share advice on stepping into leadership roles, recognizing when it may be time for a change, and overcoming imposter syndrome.
