Learners’ Edition: AI-powered Coaching, Professional Certifications + Inspiring conversations about mastering your learning & speaking skills — from linkedin.com by Tomer Cohen

Excerpts:

1. Your own AI-powered coaching

Learners can go into LinkedIn Learning and ask a question or explain a challenge they are currently facing at work (we’re focusing on areas within Leadership and Management to start). AI-powered coaching will pull from the collective knowledge of our expansive LinkedIn Learning library and, instantaneously, offer advice, examples, or feedback that is personalized to the learner’s skills, job, and career goals.

What makes us so excited about this launch is we can now take everything we as LinkedIn know about people’s careers and how they navigate them and help accelerate them with AI.

…

3. Learn exactly what you need to know for your next job

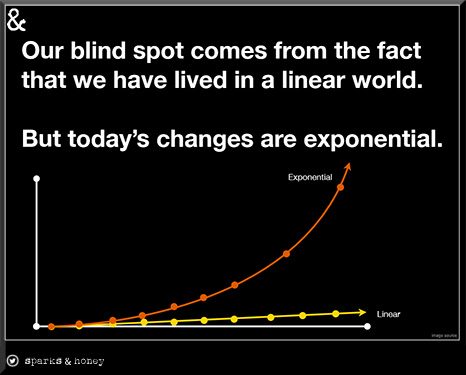

When looking for a new job, it’s often the time we think about refreshing our LinkedIn profiles. It’s also a time we can refresh our skills. And with skill sets for jobs having changed by 25% since 2015 – with the number expected to increase by 65% by 2030 – keeping our skills a step ahead is one of the most important things we can do to stand out.

There are a couple of ways we’re making it easier to learn exactly what you need to know for your next job:

When you set a job alert, in addition to being notified about open jobs, we’ll recommend learning courses and Professional Certificate offerings to help you build the skills needed for that role.

When you view a job, we recommend specific courses to help you build the required skills. If you have LinkedIn Learning access through your company or as part of a Premium subscription, you can follow the skills for the job; that way, we can let you know when we launch new courses for those skills and recommend you content on LinkedIn that better aligns to your career goals.

2024 Edtech Predictions from Edtech Insiders — from edtechinsiders.substack.com by Alex Sarlin, Ben Kornell, and Sarah Morin

Omni-modal AI, edtech funding prospects, higher ed wake up calls, focus on career training, and more!

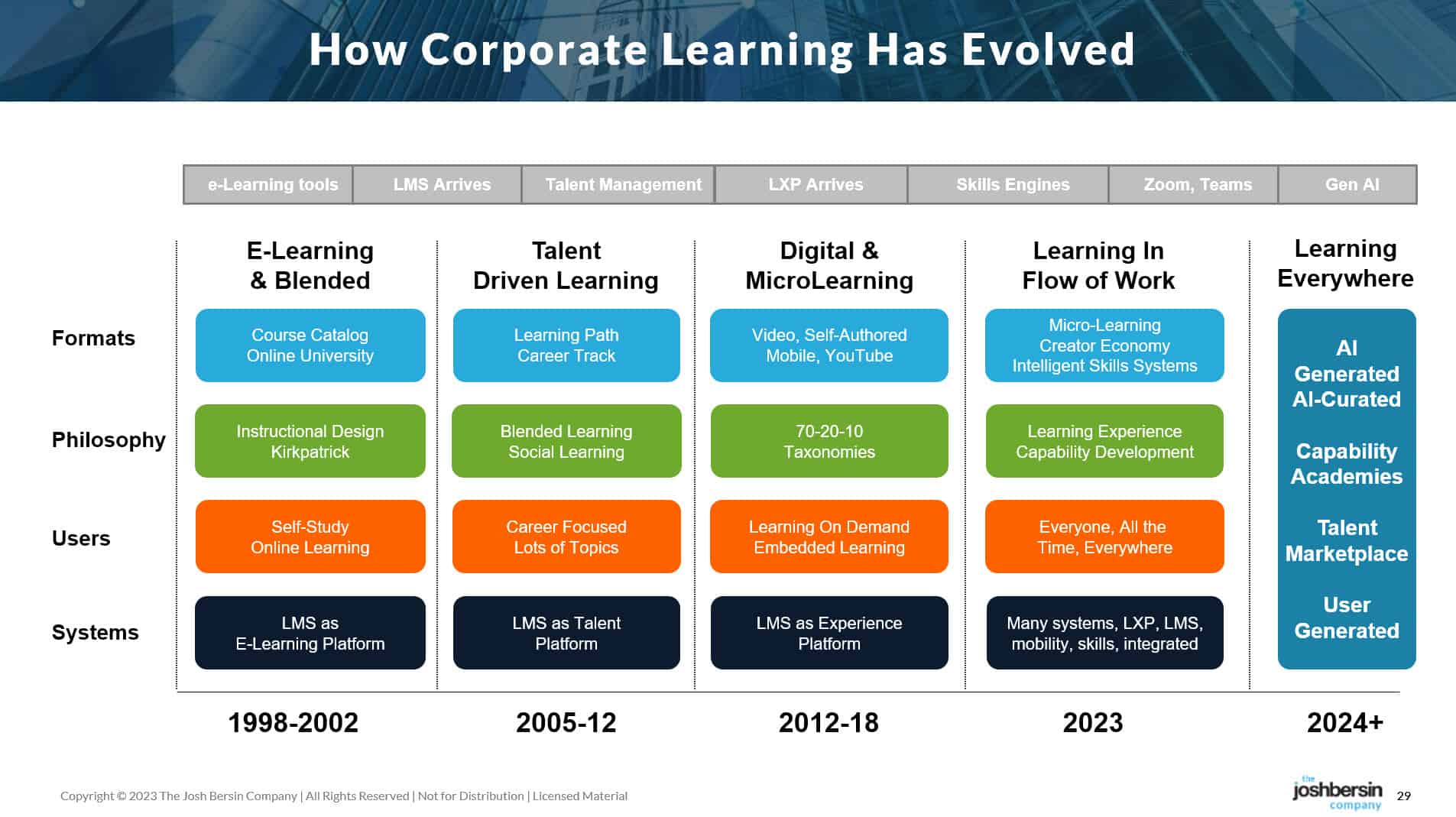

Alex: I talked to the 360 Learning folks at one point and they had this really interesting epiphany, which is basically that it’s been almost impossible for every individual company in the past to create a hierarchy of skills and a hierarchy of positions and actually organize what it looks like for people to move around and upskill within the company and get to new paths.

Until now. AI actually can do this very well. It can take not only job description data, but it can take actual performance data. It can actually look at what people do on a daily basis and back fit that to training, create automatic training based on it.

From DSC:

I appreciated how they addressed K-12, higher ed, and the workforce all in one posting. Nice work. We don’t need siloes. We need more overall design thinking re: our learning ecosystems — as well as more collaborations. We need more on-ramps and pathways in a person’s learning/career journey.