Artificial Intelligence in Education: Where It’s At, Where It’s Headed — from gettingsmart.com by Cameron Paterson

Excerpt:

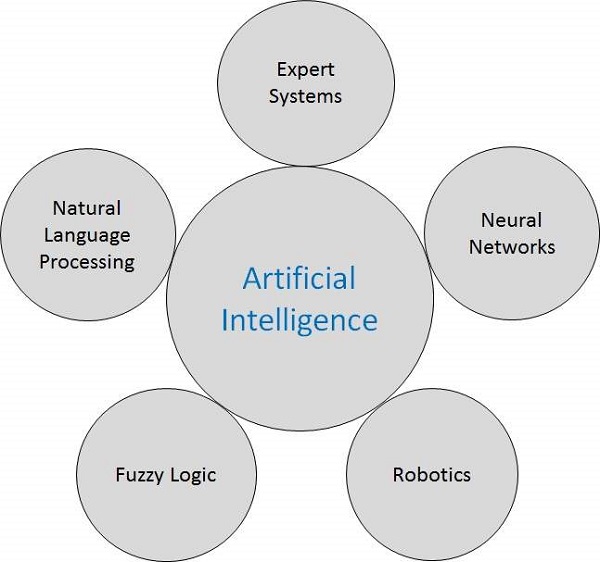

Artificial intelligence is predicted to fundamentally alter the nature of society by 2040. Investment in AI start-ups was estimated at $6-$9 billion in 2016, up from US$415 million four years earlier. While futurist Ray Kurzweil argues that AI will help us to address the grand challenges facing humanity, Elon Musk warns us that artificial intelligence will be our “biggest existential threat.” Others argue that artificial intelligence is the future of growth. Everything depends on how we manage the transition to this AI era.

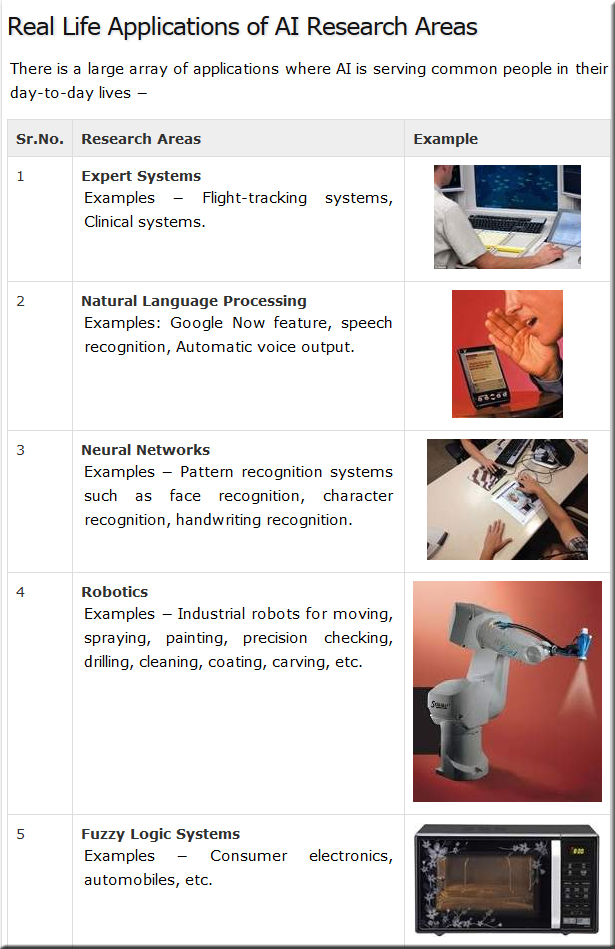

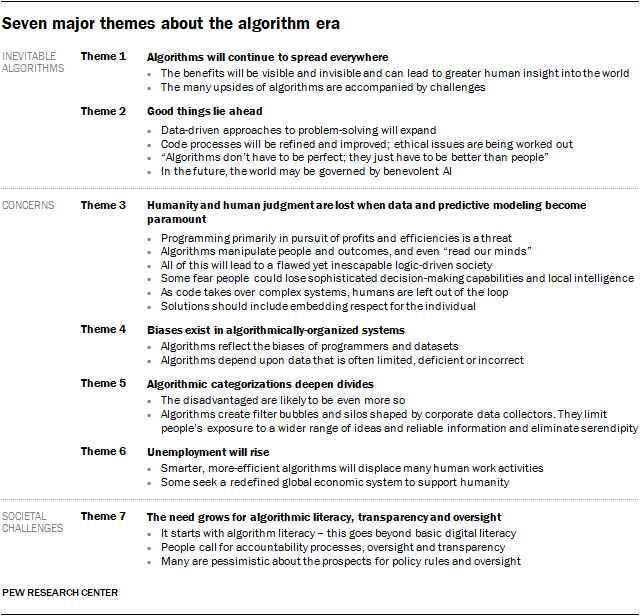

In 2016 the Obama administration released a national strategic plan for artificial intelligence and, while we do not all suddenly now need a plan for artificial intelligence, we do need to stay up to date on how AI is being implemented. Much of AI’s potential is yet to be realized, but AI is already running our lives, from Siri to Netflix recommendations to automated air traffic control. We all need to become more aware of how we are algorithmically shaped by our tools.

This Australian discussion paper on the implications of AI, automation and 21st-century skills shows how AI will affect not just blue-collar truck drivers and cleaners but also white-collar lawyers and doctors. Automated pharmacy systems with robots dispensing medication exist, Domino’s pizza delivery by drone has already occurred, and a fully automated farm is opening in Japan.

Education reformers need to plan for our AI-driven future and its implications for education, both in schools and beyond. The never-ending debate about the sorts of skills needed in the future and the role of schools in teaching and assessing them is becoming a whole lot more urgent and intense.

AI Experts Want to End ‘Black Box’ Algorithms in Government — from wired.com by Tom Simonite

Excerpt:

The right to due process was inscribed into the US Constitution with a pen. A new report from leading researchers in artificial intelligence cautions it is now being undermined by computer code.

Public agencies responsible for areas such as criminal justice, health, and welfare increasingly use scoring systems and software to steer or make decisions on life-changing events like granting bail, sentencing, enforcement, and prioritizing services. The report from AI Now, a research institute at NYU that studies the social implications of artificial intelligence, says too many of those systems are opaque to the citizens they hold power over.

The AI Now report calls for agencies to refrain from what it calls “black box” systems opaque to outside scrutiny. Kate Crawford, a researcher at Microsoft and cofounder of AI Now, says citizens should be able to know how systems making decisions about them operate and have been tested or validated. Such systems are expected to get more complex as technologies such as machine learning used by tech companies become more widely available.

“We should have equivalent due-process protections for algorithmic decisions as for human decisions,” Crawford says. She says it can be possible to disclose information about systems and their performance without disclosing their code, which is sometimes protected intellectual property.

UAE appoints first-ever Minister for Artificial Intelligence — from tribune.com.pk

“We announce the appointment of a minister for artificial intelligence. The next global wave is artificial intelligence and we want the UAE to be more prepared for it.”

Tech Giants Are Paying Huge Salaries for Scarce A.I. Talent — from nytimes.com by Cade Metz

Nearly all big tech companies have an artificial intelligence project, and they are willing to pay experts millions of dollars to help get it done.

Excerpt:

Tech’s biggest companies are placing huge bets on artificial intelligence, banking on things ranging from face-scanning smartphones and conversational coffee-table gadgets to computerized health care and autonomous vehicles. As they chase this future, they are doling out salaries that are startling even in an industry that has never been shy about lavishing a fortune on its top talent.

Typical A.I. specialists, including both Ph.D.s fresh out of school and people with less education and just a few years of experience, can be paid from $300,000 to $500,000 a year or more in salary and company stock, according to nine people who work for major tech companies or have entertained job offers from them. All of them requested anonymity because they did not want to damage their professional prospects.

With so few A.I. specialists available, big tech companies are also hiring the best and brightest of academia. In the process, they are limiting the number of professors who can teach the technology.

Where will AI play? By Mike Quindazzi.

Where will #AI play? #ArtificialIntelligence aims to drive #innovation and #disruption across every industry. #MachineLearning #Robotics pic.twitter.com/SdcxQwDifK

— Mike Quindazzi (@MikeQuindazzi) October 23, 2017