Dr. Hardman’s post on LinkedIn

and/or

See Dr. Hardman’s post on substack.com entitled:

- The Illusion of AI Productivity Gains

Why your AI tools aren’t delivering the ROI you were promised — and what to do about it

The TalentLMS 2026 Annual L&D Benchmark Report — from talentlms.com

From year-over-year training benchmarks to learner–leader gaps, see the data that defines the new era of learning. To turn insight into action, the report lays out 10 evidence-backed interventions to hardwire development. Plus, lift the lid on Learning Debt: What it is and how to spot it.

Executive summary

The skills economy is being rewritten in real time. AI is reshaping what people need to know, do, and deliver, faster than organizational structures can adapt. The result is a workplace caught between acceleration and inertia. Companies are racing to reskill for an AI-driven future while relying on structures built for yesterday’s world.

This TalentLMS 2026 L&D Benchmark Report captures that inflection point. Based on data collected through 2025, and compared with earlier findings from 2022 to 2024, it explores how learning is evolving and what’s holding it back.

Our research integrates two vantage points: HR leaders overseeing learning initiatives and employees receiving formal training. Together, they offer a dual perspective on how learning is managed and how it’s experienced.

The analysis also draws on insights from external research and leading L&D practitioners, anchoring the report in both evidence and practice.

Combined, the findings point to a structural fault line: Learning is expanding in scope but contracting in space. Organizations are multiplying programs, tools, and ambitions, yet the conditions for learning — time, focus, and cognitive bandwidth — keep shrinking.

The data from this report underscores this critical conflict: According to half of the surveyed employees and learning leaders, high workloads leave little room for training, even when it’s needed.

Employees work inside a permanent sprint, where attention is fragmented and reflection is sidelined. The space for learning is collapsing under the weight of doing. Sixty-five percent of employees say performance expectations have risen this year, yet lack of time remains the biggest barrier to learning.

The numbers confirm what employees and learning leaders both feel: Technology can advance overnight. But people and cultures can’t.

Which Jobs Are Most at Risk From AI? New Anthropic Data Offers Clues. — from builtin.com by Matthew Urwin

Anthropic set out in its latest study to predict how artificial intelligence could impact the labor market. Instead, its findings raise more questions than answers for tech workers as the U.S. government refuses to regulate the AI industry.

Summary:

In its latest labor market study, Anthropic found that artificial intelligence poses the greatest threat to software jobs, women and younger professionals. As the Trump administration takes a hands-off approach to AI, tech workers may be left to grapple with these findings on their own.

Matthew links to:

Labor market impacts of AI: A new measure and early evidence — from anthropic.com

Key findings

- We introduce a new measure of AI displacement risk, observed exposure, that combines theoretical LLM capability and real-world usage data, weighting automated (rather than augmentative) and work-related uses more heavily

- AI is far from reaching its theoretical capability: actual coverage remains a fraction of what’s feasible

- Occupations with higher observed exposure are projected by the BLS to grow less through 2034

- Workers in the most exposed professions are more likely to be older, female, more educated, and higher-paid

- We find no systematic increase in unemployment for highly exposed workers since late 2022, though we find suggestive evidence that hiring of younger workers has slowed in exposed occupations

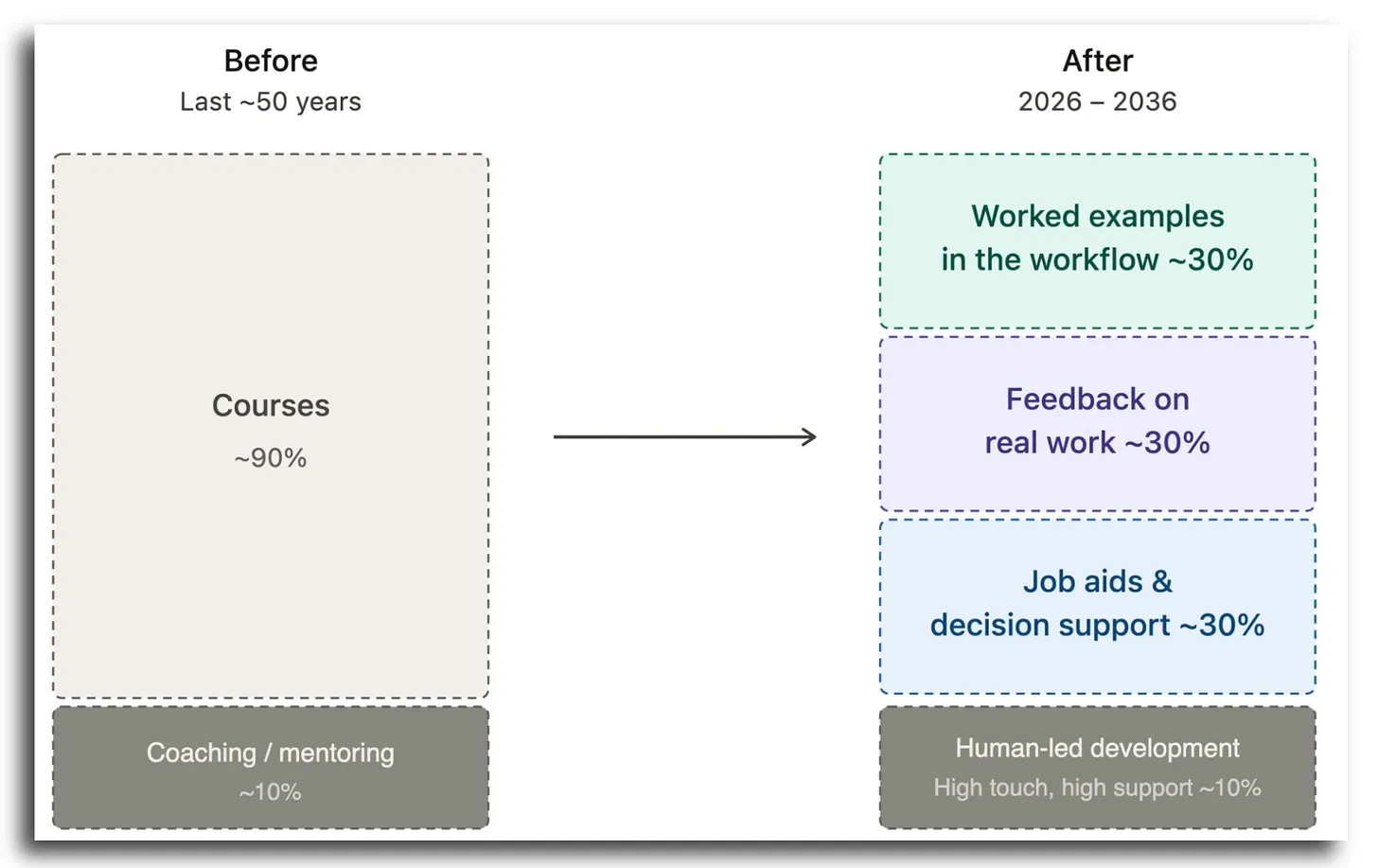

The Course Is Dying as the Unit of Learning — from drphilippahardman.substack.com by Dr Philippa Hardman

Here’s why, and what’s replacing it

What the Bleeding Edge Looks like in Practice

So what does “the new stack” actually look like when organisations lean into this? Here are four real patterns already in play.

Engineering: from engine courses to in-workflow AI coaching.

Product development: from courses to craft-specific agents.

Compliance: from annual course to nudge systems.

Enablement systems, not catalogues.

…

Here is Chris Martin’s posting on LinkedIn.com:

Here is Dominik Mate Kovacs’ posting on LinkedIn.com:

The AI ‘hivemind’: Why so many student essays sound alike — from hechingerreport.org by Jill Barshay

A study of more than 70 large language models found similar answers to brainstorming and creative writing prompts

The answers were frequently indistinguishable across different models by different companies that have different architectures and use different training data. The metaphors, imagery, word choices, sentence structures — even punctuation — often converged. Jiang’s team called this phenomenon “inter-model homogeneity” and quantified the overlaps and similarities. To drive the point home, Jiang titled her paper, the “Artificial Hivemind.” The study won a best paper award at the annual conference on Neural Information Processing Systems in December 2025, one of the premier gatherings for AI research.

AI Has No Moral Compass. Do You? — from michelleweise.substack.com by Michelle Weise & Dana Walsh

Why the Age of AI Demands We Take Character Formation Seriously

Here’s something to chew on:

Anthropic, the company behind Claude — a chatbot used by 30 million users per month — has exactly one person (whom we know of) working on AI ethics. One. A young Scottish philosopher is doing the vital work of training a large language model to discern right from wrong.

I don’t say this to shame Anthropic. In fact, Anthropic appears to be the only company (that we know of) being explicit about the moral foundations and reasoning of its chatbot. Hundreds of millions of users worldwide are leveraging tools from other LLMs that do not appear to have an explicit moral compass being cultivated from within.

I raise this because this is yet another example of where we are: extraordinary technical power advancing without an equally strong moral infrastructure to support it.

Why do we keep producing people who are skilled but not wise?

Here is Pradnya’s posting out on LinkedIn.com:

From DSC…note these excerpts from Pradnya’s posting:

- I’m not manually assigning 400 people anymore → They’re actually taking relevant courses now

- Shows me the data → Suggests courses to fix it → I look like a strategic genius

- Completion rates up 34% → Nobody’s “stuck” anymore

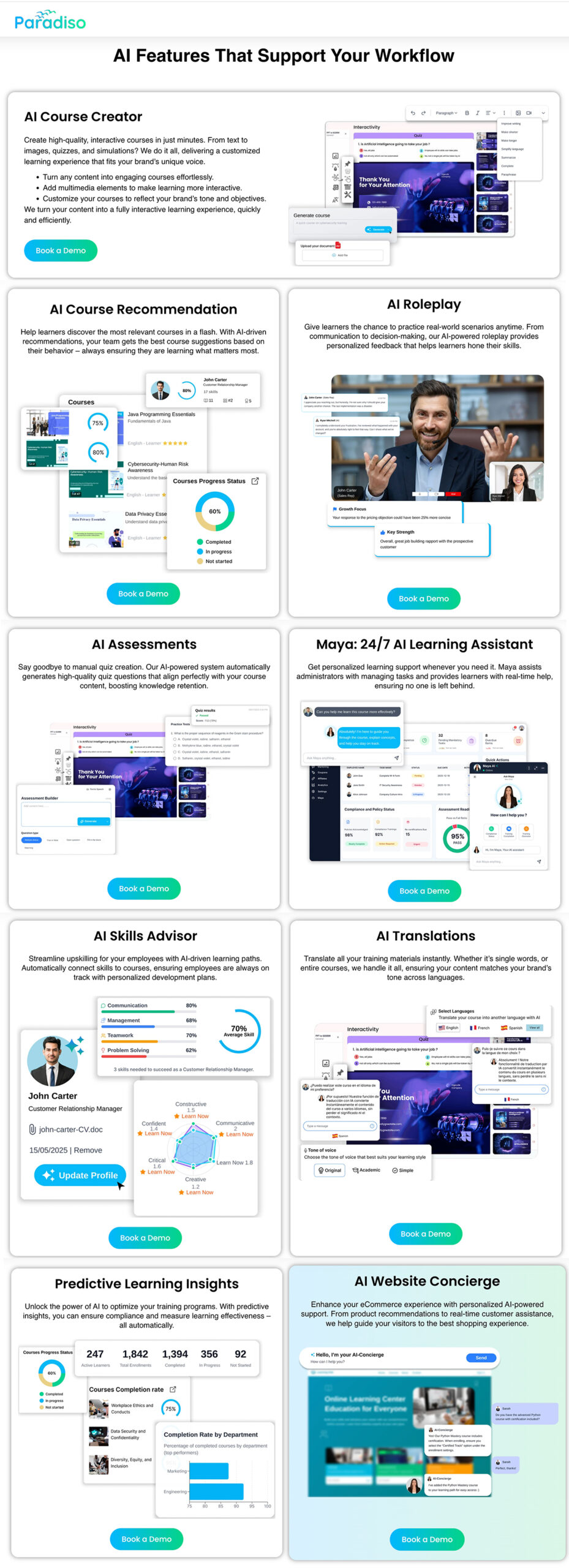

Pradnya links to a page out at ParadisoSolutions.com. Check out some of the functionality this AI-powered system provides:

How to Get Consistent, On-Brand Course Images from Any AI Image Tool — from drphilippahardman.substack.com by Dr. Philippa Hardman

A 3-step workflow that works every time — whatever AI tool you’re using

Most designers try to describe their way to an image. That’s the wrong approach. The goal is to show the tool the world it should be working in, then give it the minimum it needs to place your subject inside that world.

Every long, over-specified prompt is a sign that your visual inputs aren’t doing enough work.

The fix is a 3-step process which gives you superpowers in AI image generation…

How AI Could Transform, or Replace, the LMS — from futureupodcast.com by Jeff Selingo, Michael Horn, and Matthew Pittinsky

Tuesday, March 10, 2026 – For 30 years now, colleges have relied on the Learning Management System, or LMS, as a key portal for professors and students to teach and learn. It’s a tool that has helped colleges adapt to online learning and bring digital tools to classroom teaching. But generative AI seems poised to disrupt the LMS. And it’s unclear whether the LMS will evolve—or be replaced altogether. For this episode, Jeff and Michael talk with a pioneer of the technology, Matthew Pittinsky, about the lessons of past moments of tech disruption like the smartphone and cloud computing and about what could be different this time. This episode is made with support from Ascendium Education Group.

Gemini, Explained — from wondertools.substack.com by Jeremy Caplan

5 features worth your time — tested and compared

Google’s AI, Gemini, has quickly become one of the AI tools I rely on most. It builds dashboards and creates remarkable infographics. It spins out comprehensive research reports in minutes that would once have taken days to assemble.

It’s improving every month. On March 13, Google announced Ask Maps, so you can query Gemini about things like “Which nearby tennis courts are open with lights so I can play tonight?” On March 10, Gemini added new integrations to build, summarize, and analyze your Google Docs, Sheets, and Slides.

In today’s post below: catch up on the Gemini features worth your time, candid comparisons with other AI tools, and answers to the questions I hear most.

How we’re reimagining Maps with Gemini — from blog.google

Ask Maps answers your real-world questions with a conversation, and Immersive Navigation makes your route more intuitive.

Today, Google Maps is fundamentally changing what a map can do. By bringing together the world’s freshest map with our most capable Gemini models, we’re transforming exploration into a simple conversation and making driving more intuitive than ever with our biggest navigation upgrade in over a decade.

Ask anything about any place

We’re introducing Ask Maps, a new conversational experience that answers complex, real-world questions a map could never answer before. Now you can ask for things like, “My phone is dying — where can I charge it without having to wait in a long line for coffee?” or “Is there a public tennis court with lights on that I can play at tonight?” Previously, finding this information meant lots of research and sifting through reviews. But now, you can just tap the “Ask Maps” button and get your questions answered conversationally, with a customized map to help you visualize your options.

The Future of Learning Looks Like Workforce Infrastructure — from workshift.org by Bruno V. Manno

For years, “ed tech” was an umbrella term grouping schools, online platforms, courses, credentials, and software under one idea: technology applied to education. That shorthand made it easier for investors, policymakers, and institutions to talk about innovation without rethinking how learning fits into the economy. Today, it no longer explains what’s happening.

That’s the central insight of “The European Learning & Work Funding Report” by Brighteye Ventures, a research and advisory firm tracking investment at the intersection of learning, work, and productivity. The report’s seventh edition shows that learning is no longer funded primarily as education. It is increasingly funded as part of how work gets done.

Across Europe, and increasingly the U.S., capital is flowing not toward courses or credentials but toward systems that are closer to production, including hiring platforms, staffing firms, clinical decision tools, payroll systems, and compliance software. These are not educational products, though learning is embedded throughout them.

In these systems, learning is not the point. Outcomes are.

Build hybrid institutions that erase boundaries. Stop forcing learners to navigate disconnected systems. Create partnerships that blend K-12 schools, community colleges, training providers, and employers into one integrated system, so students move through one coherent system, not four separate bureaucracies.

Teach Smarter with AI — from wondertools.substack.com by Jeremy Caplan and Lance Eaton

10 tested strategies from two educators who actually use them

I recently talked with Lance Eaton, Senior Associate Director of AI and Teaching & Learning at Northeastern University and writer of AI + Education = Simplified. We traded ideas about what’s actually working. We came up with 10 specific, practical ways anyone who teaches, coaches, or leads can put AI to work.

Watch the full conversation above, or read highlights below.

Beyond Audio Summaries: How to Use NotebookLM to *Actually* Design Better Learning — from drphilippahardman.substack.com by Dr. Philippa Hardman

Five methods to maximise the value of NotebookLM’s features

In practice, what makes NotebookLM different for learning designers is four things:

- Answers grounded in your sources (with citations):

- Source toggling:

- Multi-format studio & multi-source summaries:

- Persistent workspace:

…

5 Evidence-Based Methods NotebookLM Operationalises…

Shadow AI Isn’t a Threat: It’s a Signal — from campustechnology.com by Damien Eversmann

Unofficial AI use on campus reveals more about institutional gaps than misbehavior.

Key Takeaways

- Shadow AI is widespread in higher education: Faculty, researchers, students, and staff are using AI tools outside official IT channels, including consumer platforms and public cloud services that may involve sensitive data.

- Unauthorized AI use creates data, compliance, and cost risks: Consumer AI tools may store or reuse user data, while uncoordinated adoption drives redundant licenses, unpredictable cloud costs, and weaker security oversight.

- Institutions are shifting from restriction to enablement: Some campuses are making approved paths easier by offering ready-to-use research environments, campus-managed AI tools, clear guidance on data and vendors, and streamlined approval processes.

How L&D Can Lead in the Age of AI Even If Your Company’s Not Ready — from learningguild.com

How to lead even when your company doesn’t allow AI

Even if your corporation isn’t ready for AI, you can still research tools personally to stay ahead of the curve, so when organizational restrictions lift, you are ready to use AI for learning right away. Here are some tools you can test at home if they’re restricted in your workplace:

- Content generation – Start testing text-based tools to get a taste of how AI can accelerate content creation. Then take it to the next level by exploring tools that generate voices, music, and sound effects.

- AI coaching tools – Have AI pose as a co-worker or customer to get a taste of what it’s like to use it as a conversation coach. Next, use the voice and video capabilities in an app like ChatGPT to explore how AI can coach someone through tasks.

- In-the-flow learning assistants – Test turning documents into a conversational avatar and interacting with it to see how it feels. Then think about how the technology could potentially transform static content into dynamic learning experiences for employees.

- Vibe-coded simulations – Experiment with this technology by creating a simple, fun game. Afterwards, brainstorm some ideas on how it could quickly create simulations for your learners in the future.

The Higher Ed Playbook for AI Affordability — from campustechnology.com by Jason Dunn-Potter

Key Takeaways

- Affordable AI adoption focuses on evolving existing systems: Universities are embedding AI into current devices, workflows, and legacy systems rather than rebuilding infrastructure or investing in new data centers.

- Edge AI reduces costs and improves access: Running AI models on local devices or networks lowers cloud processing costs, enhances security, and supports learning use cases such as tutoring, translation, transcription, and adaptive learning.

- Enterprise integration and governance drive impact: Institutions are applying AI across admissions, advising, facilities, and research workflows, supported by shared resource hubs, data governance, AI literacy, and outcome-driven implementation.

Claude is quietly becoming the go-to AI tool for learning designers. Here’s a 101 guide. — from linkedin.com by Dr. Philippa Hardman

L&D Global Sentiment Survey 2026 — from linkedin.com by Donald H. Taylor

“But what’s happening right now is exponential.” — from linkedin.com by Josh Cavalier

Excerpt:

I need to be honest with you. I’ve been running experiments this week with Claude Code and Opus 4.6, and we have reached the precipice in the collapse of time required to produce high-quality text-based ID outputs.

This includes performance consulting reports, learning needs analyses, action mapping, scripts, storyboards, facilitator guides, rubrics, and technical specs.

I just mapped the entire performance consulting process into a multimodal AI integration architecture (diagram image). Every phase. Entry and contracting. Performance analysis. Cause analysis. Solution design. Implementation. Evaluation. Thirty files. System specifications for each. The next step is to vet out each “skill” with an expert performance consultant.

Then I attempted a learning output: an 8-module course built with a cognitive scaffold that moves beyond content delivery to facilitate deliberate practice, meaning-making, and guided reflection within the learner’s own context.

The result:

AI and human-centered learning — from linkedin.com by Patrick Blessinger

Democratizing opportunities

AI adaptive learning can adapt learning in real time. These tools have the potential to provide a more personalized learning experience, but only if used properly.

The California State University system uses ChatGPT Edu (OpenAI, 2025). Students use it for AI-assisted tutoring, study aids, and writing support. These resources provide 24/7 availability of subject-matter expertise tailored to students’ learning needs. It is not a replacement for professors. Rather, it extends the reach of mentorship by reducing access barriers.

However, we must proceed with intellectual humility and ethical responsibility. Even though AI can customize messages, it cannot replace the encouragement of a teacher or professor, or the social and emotional aspects of learning. It’s at the intersection of humanistic values and knowledge development that education must find its balance.

Centering work-based learning on the 4 As—authenticity, aspiration, ability, agency — from explore.gpsed.org

In the rush to expand work-based learning (WBL), it is easy to focus on the “placement”—the logistics of getting a student into a workplace. But a placement alone isn’t a strategy. If an experience doesn’t help a student build the internal capacity to navigate their own future, we are simply checking a box.

At GPS Ed, we believe WBL is most powerful when viewed as a sequenced journey of career literacy. It starts with early awareness and exploration, giving students the chance to “try on” different roles, and scales up to intensive, hands-on experiences. By centering this journey on the 4 As—authenticity, aspiration, ability, agency—we ensure that the time invested by students, schools, and employers yields a lifelong return.

Also see:

Amid AI and Labor Market Changes, Companies Look to Grow Their Own Skilled Workers — from workshift.org by Colleen Connolly

The explosion of artificial intelligence, combined with slowing growth in the labor force, has many companies reconsidering how they hire and develop workers. Where they once relied on colleges and universities for training, a growing number of companies are now looking in-house.

Investment in developing employees and would-be hires is becoming a key differentiator for companies, according to a new report from the Learning Society, a collaborative effort led out of the Stanford Center on Longevity. And that’s true even as AI adoption grows.

The Big Idea: The report authors interviewed 15 human resources executives from major firms, which ranged in size from Hubbell, an electric and utility product manufacturer with about 17K employees, to Walmart with more than 2M employees. The authors asked about four topics: the impact of AI and technology on work, skill building and talent development, supporting workers over longer working lives, and new partnerships between businesses and higher education.