Let AI Interview You — from wondertools.substack.com by Jeremy Caplan & Jay Dixit

A smarter way to get past the blank page

There’s nothing wrong with using AI to get answers to your questions. But there’s another mode of interacting with AI that many people never consider — one I find much more useful for my creative process.

Here’s what I do instead: I flip the script and let the AI ask the questions. Instead of prompting AI, I get the AI to prompt me.

Dr. Hardman’s post on LinkedIn

and/or

See Dr. Hardman’s post on substack.com entitled:

- The Illusion of AI Productivity Gains

Why your AI tools aren’t delivering the ROI you were promised — and what to do about it

The TalentLMS 2026 Annual L&D Benchmark Report — from talentlms.com

From year-over-year training benchmarks to learner–leader gaps, see the data that defines the new era of learning. To turn insight into action, the report lays out 10 evidence-backed interventions to hardwire development. Plus, lift the lid on Learning Debt: What it is and how to spot it.

Executive summary

The skills economy is being rewritten in real time. AI is reshaping what people need to know, do, and deliver, faster than organizational structures can adapt. The result is a workplace caught between acceleration and inertia. Companies are racing to reskill for an AI-driven future while relying on structures built for yesterday’s world.

This TalentLMS 2026 L&D Benchmark Report captures that inflection point. Based on data collected through 2025, and compared with earlier findings from 2022 to 2024, it explores how learning is evolving and what’s holding it back.

Our research integrates two vantage points: HR leaders overseeing learning initiatives and employees receiving formal training. Together, they offer a dual perspective on how learning is managed and how it’s experienced.

The analysis also draws on insights from external research and leading L&D practitioners, anchoring the report in both evidence and practice.

Combined, the findings point to a structural fault line: Learning is expanding in scope but contracting in space. Organizations are multiplying programs, tools, and ambitions, yet the conditions for learning — time, focus, and cognitive bandwidth — keep shrinking.

The data from this report underscores this critical conflict: According to half of the surveyed employees and learning leaders, high workloads leave little room for training, even when it’s needed.

Employees work inside a permanent sprint, where attention is fragmented and reflection is sidelined. The space for learning is collapsing under the weight of doing. Sixty-five percent of employees say performance expectations have risen this year, yet lack of time remains the biggest barrier to learning.

The numbers confirm what employees and learning leaders both feel: Technology can advance overnight. But people and cultures can’t.

Why Sal Khan’s AI revolution hasn’t happened yet, according to Sal Khan — from chalkbeat.org by Matt Barnum

Three years ago, as Khan Academy founder Sal Khan rolled out an AI-powered tutoring chatbot, he predicted a revolution in learning.

So far, the revolution hasn’t happened, he acknowledges.

“For a lot of students, it was a non-event,” Khan told me recently about his eponymous chatbot, Khanmigo. “They just didn’t use it much.”

Khan gives this analogy: Imagine he walked into a class, sat in the back of the room, and waited for students to seek out help. “Some will; most won’t,” he said. That’s been the experience with AI tutoring, he said. It doesn’t necessarily make students motivated to learn or fill in gaps in knowledge needed to ask questions.

“AI is going to help,” said Khan of this reimagined Khan Academy. “But I think our biggest lever is really investing in the human systems.”

Recording at LegalWeek in New York, Zach sits down with Shlomo Klapper (founder of Learned Hand) and Bridget McCormack, former Chief Justice of the Michigan Supreme Court and now CEO of the American Arbitration Association, to challenge one of the biggest double standards in legal AI: “AI for me, but not for thee.” Lawyers are now widely using AI like #Harvey and #Legora — and now more than ever #claude — but the moment it touches judges or arbitrators, support drops off.

That hesitation comes as courts are under real strain, with judges handling thousands of cases a year and only minutes to decide each one, and no realistic way to keep up. Shlomo describes Learned Hand’s “AI law clerk,” built to support judicial research, analysis, and drafting, while Bridget brings the perspective of someone who has both made decisions on the bench and has pioneered the American Arbitration Association’s AI Arbitrator, a first of its kind. The conversation moves beyond AI as an assistant and into a harder shift: AI as part of decision-making itself, and whether the system can continue to function without it.

Also see:

Are Judges the Next To Adopt AI? Is That a Good Thing? — from legallydisrupted.com by Zach Abramowitz

Episode 46 of Legally Disrupted Has the Two Best Experts on the Topic

This brings us to an admitted, glaring double standard between lawyers and judges. Lawyers are totally fine with lawyers using AI, but those same lawyers become apoplectic at the thought of judges or arbitrators using AI. It is very much “AI for me, but not for thee.” A survey last year from White & Case and Queen Mary University of London School of Law showed that nearly 90% of lawyers were deeply supportive of AI for their own research and analytics, but that support drops to just 23% when it comes to a judge or arbitrator using it to make a decision.

…

Yet, despite that hullabaloo, there is a massive need for alternative forms of intelligence in our courts. Right now, the system is drowning. We have state court trial judges disposing of 2,500 cases a year, meaning they have barely half an hour to spend on a single case. We are simply not going to lawyer our way out of this 50-year backlog. If we just use humans, we have a massive demand for intelligence but a severely limited supply. AI could step in to give these judges the capacity they desperately need for the courts to actually function.

An Attack on Sam Altman Sends a Terrifying Message — from the nytimes.com; this is a gifted opinion article by Aaron Zamost

Lawless political violence landed on Silicon Valley’s doorstep this month when an attacker hurled a Molotov cocktail at the San Francisco compound of Sam Altman, OpenAI’s chief executive. The incident was a disturbing sign that simmering public anger about A.I. is spilling out of polling data and social media posts and into the real world.

The attack shook many tech employees, who in quiet conversations about safety wondered whether this was a watershed moment for the industry. I believe it should be — the whole thing is disturbing and jarring, but I’m hopeful it will change how some tech leaders deal with the societal consequences of their success.

…

If these companies sold food, cars, medicine or any other consumer goods, their products would almost certainly be recalled while federal regulators investigated the allegations.

…

You would think an industry creating this kind of outrage would reflect or recalibrate. Business experts teach us that companies facing customer backlash should acknowledge the failure, change their approach and earn back public trust. But the titans of tech no longer seem interested in convincing the public.

The foundation of Silicon Valley’s appeal has always been the implicit promise that great technology serves you, and that the people behind it understand your problems and want to solve them. That promise is starting to feel broken. Fixing it requires something much of Silicon Valley has forgotten how to do: listen and learn.

A Molotov cocktail is the absolute wrong way to send a message to tech. Its leaders need to hear it anyway.

What the Future of Learning Looks Like in the Era of AI — from the Center for Academic Innovation at the University of Michigan, by Sean Corp

AI & the Future of Learning Summit brings industry, education leaders together to discuss higher education’s opportunity to lead, what students need, and what partnerships are possible

As artificial intelligence rapidly reshapes the nature of work and learning, speakers at the University of Michigan’s AI & the Future of Learning Summit delivered a clear message: higher education must take a leading role in defining what comes next.

One CEO of a leading educational technology company put it like this: “The only bad thing would be universities standing still.”

Universities must embrace their roles as providers of continuous, lifelong learning that evolves alongside technological change.

…

This shift is already affecting early-career pathways. Employers are placing greater emphasis on experience, while traditional entry-level roles are becoming less accessible. There is often a gap between what a credential represents and the expectations of employers.

That gap is particularly evident in access to internships. Chris Parrish, co-founder and president of Podium, noted that millions of students compete for a limited number of internships each year, making it increasingly difficult to gain the experience employers demand.

“If you miss out on an internship, you’re twice as likely to be unemployed,” Parrish said.

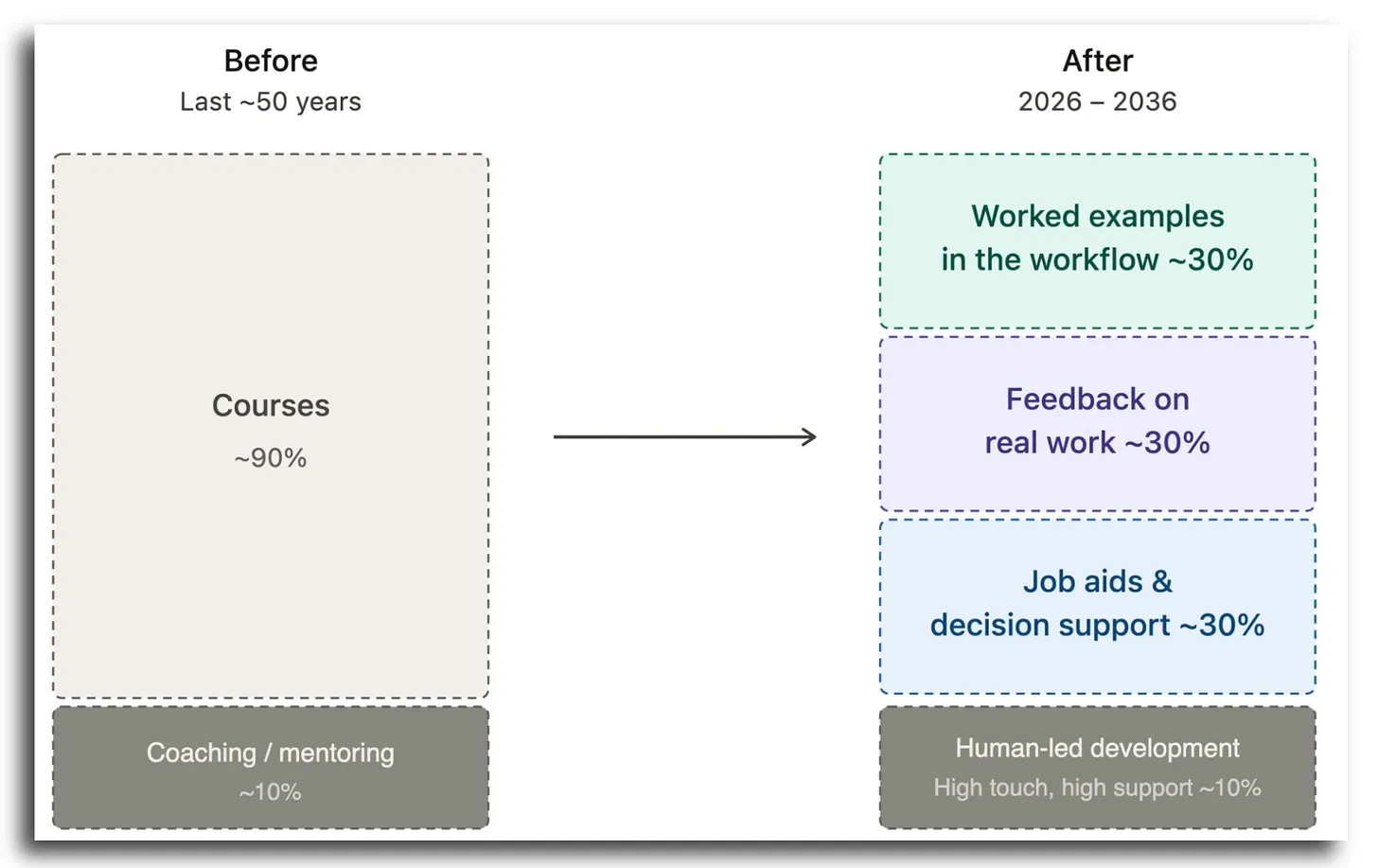

The Course Is Dying as the Unit of Learning — from drphilippahardman.substack.com by Dr Philippa Hardman

Here’s why, and what’s replacing it

What the Bleeding Edge Looks like in Practice

So what does “the new stack” actually look like when organisations lean into this? Here are four real patterns already in play.

Engineering: from engine courses to in-workflow AI coaching.

Product development: from courses to craft-specific agents.

Compliance: from annual course to nudge systems.

Enablement systems, not catalogues.

…

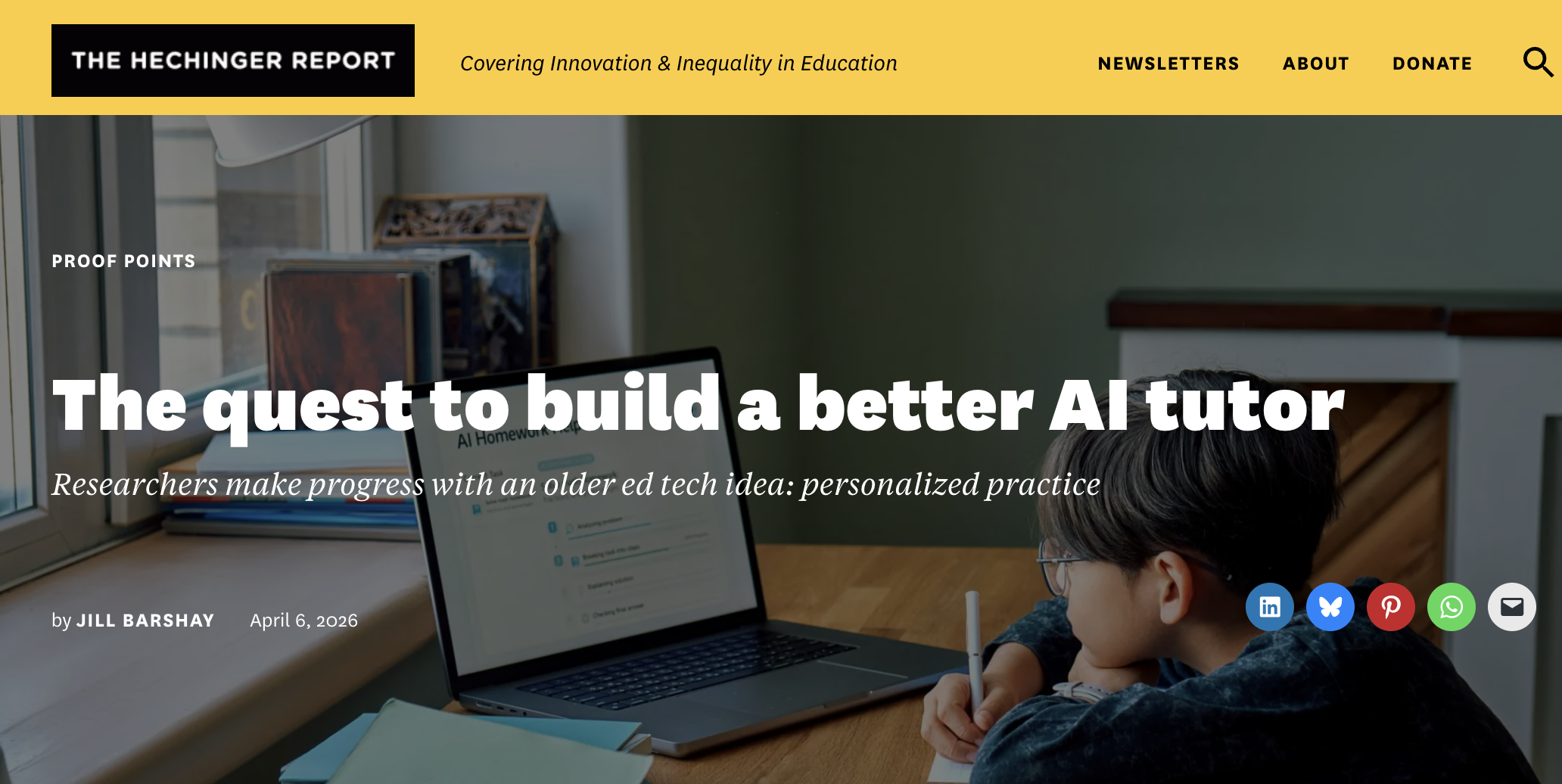

The quest to build a better AI tutor — from hechingerreport.org by Jill Barshay

Researchers make progress with an older ed tech idea: personalized practice

One promising idea has less to do with how an AI tutor explains concepts and more with what it asks students to practice next.

A team at the University of Pennsylvania, which included some AI skeptics, recently tested this approach in a study of close to 800 Taiwanese high school students learning Python programming. All the students used the same AI tutor, which was designed not to give away answers.

But there was one key difference. Half the students were randomly assigned to a fixed sequence of practice problems, progressing from easy to hard. The other half received a personalized sequence with the AI tutor continuously adjusting the difficulty of each problem based on how the student was performing and interacting with the chatbot.

The idea is based on what educators call the “zone of proximal development.” When problems are too easy, students get bored. When they’re too hard, students get frustrated. The goal is to keep students in a sweet spot: challenged, but not overwhelmed.

The researchers found that students in the personalized group did better on a final exam than students in the fixed problem group. The difference was characterized as the equivalent of 6 to 9 months of additional schooling, an eye-catching claim for an after-school online course that lasted only five months.

…

To address this, Chung’s team combined a large language model with a separate machine-learning algorithm that analyzes how students interact with the online course platform — how they answer the practice questions, how many times they revise or edit their coding, and the quality of their conversations with the chatbot — and uses that information to decide which problem to serve up next.
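
To make the idea of adjusting difficulty concrete, here is a minimal sketch in Python. It is not the researchers' algorithm: the study's system combines a large language model with a machine-learning model over several interaction signals, whereas this sketch uses only a simple success-rate rule. The function name `next_difficulty`, the 1-to-10 difficulty scale, and the 50% and 85% thresholds are all assumptions chosen for illustration.

```python
from typing import Sequence


def next_difficulty(
    current: int,
    recent_results: Sequence[bool],
    low: float = 0.5,    # assumed threshold: below this, problems are too hard
    high: float = 0.85,  # assumed threshold: above this, problems are too easy
    min_level: int = 1,  # assumed difficulty scale of 1 (easiest) to 10 (hardest)
    max_level: int = 10,
) -> int:
    """Pick the next problem's difficulty from recent correct/incorrect answers.

    Hypothetical rule: step up when the student succeeds too often,
    step down when the student fails too often, otherwise stay put.
    """
    if not recent_results:
        # No evidence yet, so keep the current level.
        return current

    success_rate = sum(recent_results) / len(recent_results)

    if success_rate > high:
        return min(current + 1, max_level)
    if success_rate < low:
        return max(current - 1, min_level)
    return current


# A student at level 3 who answered the last four problems correctly moves up.
level = next_difficulty(3, [True, True, True, True])
```

A fixed easy-to-hard sequence, like the one given to the comparison group, would ignore `recent_results` entirely. The personalized version differs only in that each next step depends on how the student is doing.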

AI and the Law: What Educators Need to Know About Responsible Use in a Rapidly Changing Landscape — from rdene915.com by Dr. Rachelle Dené Poth, JD

As both an attorney and educator who has spent more than eight years researching, teaching, presenting, and writing about AI, I have worked with schools across K–12 and higher education that are navigating these exact questions. The legal implications of AI are not barriers to innovation, but I consider them to serve as guardrails that assist schools with adopting technology responsibly. The key is protecting students, educators, and institutions and staying informed. Understanding the legal landscape and any potential legal implications as a result of the use of AI in classrooms helps schools move forward with confidence rather than hesitation.

Sections of Rachelle’s posting include:

- Why AI and the Law Matter in Education

- Key Laws That Shape AI Use in Schools

- Data Privacy and Vendor Responsibility

- Transparency Builds Trust With Students and Families

- Accessibility, Equity, and Emerging Legal Considerations

- Teaching Digital Citizenship With AI Literacy

- Supporting Schools and Organizations Through AI and Legal Guidance

- Moving Forward With Confidence

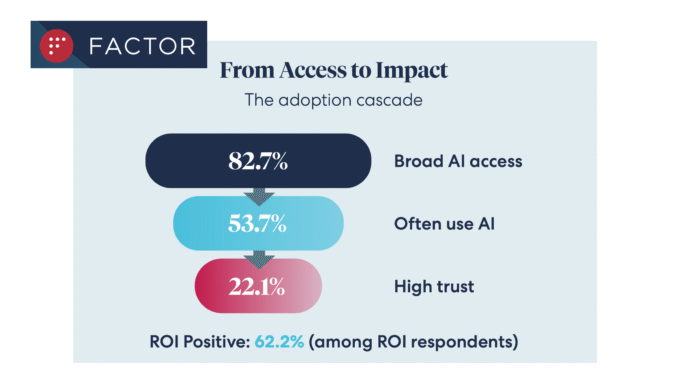

Legal AI Access at 83%, But Trust Issues Remain — from artificiallawyer.com

A new survey of over 200 in-house and law firm leaders provides solid evidence that while AI tools are now ‘standard’ across our sector, trust in AI outputs fundamentally drives usage, along with ROI – and vice versa.

The data, from ALSP Factor, shows that 83% had ‘broad AI access’, which is up from 61% in 2025, and in itself is a very positive development that tells us legal AI is now becoming ubiquitous for commercial lawyers, with around 54% using such tools ‘often’.

From DSC:

I have been proposing that the AI-based learning platform of the future will be constantly doing this — every single day. It will know what the in-demand skills are — at any given moment in time. It will then be able to direct you to resources that will help you gain those skills. Though in my vision, the system is querying actual/open job descriptions, not analyzing learning data from enterprise learners. Perhaps I should add that to the vision.

Coursera’s Job Skills Report 2026: Top skills for your students — from coursera.org

The Job Skills Report 2026 analyzes learning data from more than 6 million enterprise learners to identify the future job skills organizations need most. It’s designed for HR and L&D leaders; data, IT, and software & product development leaders; higher education administrators; and government agencies seeking actionable insights on workforce skills trends and AI-driven transformation.

…

Drawing on data from 6 million enterprise learners across nearly 7,000 organizations, the Job Skills Report 2026 guides you through the skills reshaping the global economy. This year’s analysis spans Data, IT, and Software & Product Development—and the Generative AI skills becoming essential for every role.

From DSC:

These types of postings/articles (such as the one below) make me ask: are we not shooting ourselves in the foot with AI and recent college graduates? If the bottom rungs continue to disappear, internships and apprenticeships can only go so far. There aren’t enough of them, especially valuable ones. So as this article points out, there will be threats to the long-term health of our talent pipelines unless we can take steps to thwart those impacts, and do so fairly soon.

To me…vocational training and jobs are looking better all the time — i.e., plumbers, carpenters, electricians, mechanics, and more.

Can New Graduates Compete With AI? — from builtin.com by Richard Johnson

The increasing adoption of AI automation is compressing early-career jobs. How should new graduates get a foothold in the economy now?

Summary: AI is hollowing out entry-level roles by automating routine tasks, eliminating a rung on the career ladder. New graduates face intense competition and a rising skill floor. While firms gain short-term productivity, they risk a long-term talent shortage by eliminating junior training grounds.

Conversations about AI have covered all grounds: hype, fear and slop. But while some roll their eyes at yet another automation headline, soon-to-be graduates are watching the labor market with a very different level of urgency. They’re entering a world where the old paradox of needing experience to get experience is colliding with a new reality: AI is absorbing the standardized, routine tasks that once defined entry-level work. The result isn’t just a shift in job descriptions or skill-requirements, but rather a structural reshaping of the career pipeline.

Entry-level workers face an outsized disruption to their long-term career trajectories. They have the least buffer to adapt given their lack of relevant job market experience and heightened financial pressure to secure a job quickly with the student-debt repayment periods for recent graduates looming.

Momentum early in one’s career matters, and the first job on a resume shapes future compensation bands and opportunities. It also serves as a signal for perceived specialization or, at minimum, interest. Losing that foothold has compounding effects to one’s career ladder.

Also relevant/see:

New Anthropic Institute to Study Risks and Economic Effects of Advanced AI — from campustechnology.com by John K. Waters

Key Takeaways

- Anthropic has launched the Anthropic Institute, a new research effort focused on the biggest societal challenges posed by more powerful AI systems.

- The institute will study how advanced AI could affect the economy, the legal system, public safety, and broader social outcomes.

- Anthropic co-founder Jack Clark will lead the institute in a new role as the company’s head of public benefit.

- The new unit brings together Anthropic’s existing red-teaming, societal impacts, and economic research work, while adding new hires and new research areas.