Law Firm AI Adoption: So Many Choices — from abovethelaw.com by Stephen Embry

Firms need to recognize reality, define what their legal professionals need, and then determine how to adopt and govern the use of AI tools.

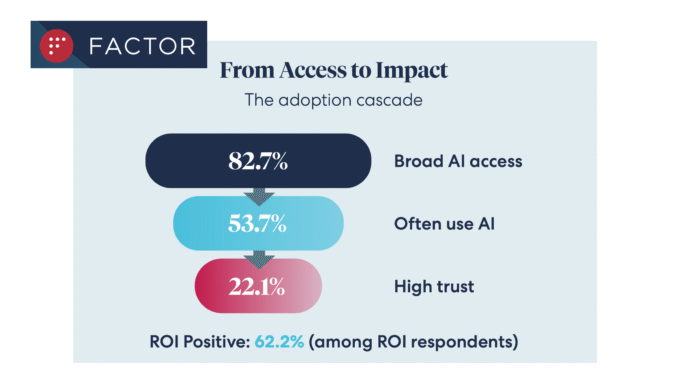

It’s tough to be a law firm managing partner in the age of AI. So many choices, so little time. It’s like the proverbial kid in the candy store who has so many choices that they either can’t pick out anything or reach for too much. We see evidence of the first option in 8am’s recent outstanding Legal Industry Report, authored by Niki Black.

8am’s Legal Industry Report

One thing that stood out in the report was the discrepancy between use of AI by individual legal professionals and what firms are doing when it comes to AI adoption and guidance. Almost 75% of those who responded said they were using general-purpose AI tools like ChatGPT and Claude for work purposes. That’s pretty significant.

Legalweek: It’s time to re-engineer how legal work is delivered — from legaltechnology.com by Caroline Hill

AI for good

While focusing on the risks of AI going wrong, it is only fair to mention the conversations I had around using AI for good. Two in particular stand out.

The first is the news from Everlaw that its Everlaw for Good Program has, over the past year, supported more than 675 active cases across 235 organisations, and expanded its support to a growing network of non-profit organisations.

The program extends Everlaw’s technology to organisations working to advance access to justice. In a recent survey by Everlaw, 88% of legal aid professionals said they are optimistic about AI’s potential to help narrow the justice gap.

“Mission-driven organizations are increasingly handling complex investigations and litigation with limited resources,” said Joanne Sprague, head of Everlaw for Good. “Expanding access to powerful, easy-to-use technology helps level the playing field so these teams can uncover critical evidence, take on more complex matters, and yield stronger results for the communities they serve.”

LawNext on Location: Visiting Everlaw’s Headquarters For A Conversation with AJ Shankar, Founder and CEO — from lawnext.com by Bob Ambrogi

The bulk of our conversation focuses on generative AI, and how Everlaw has approached it differently than much of the market. Rather than bolting on a chatbot, AJ says, Everlaw embedded AI deliberately throughout the platform — document summarization, coding suggestions, deposition analysis, fact extraction — always grounding responses in the actual documents at hand and citing sources so users can verify the work. The December launch of Deep Dive, which lets litigators pose a question and get a synthesized, cited answer drawn from an entire document corpus in about a minute, is the feature AJ calls a “new era” for discovery — one he genuinely believes represents a categorical shift.