Also see:

What the Future of Learning Looks Like in the Era of AI — from the Center for Academic Innovation at the University of Michigan, by Sean Corp

AI & the Future of Learning Summit brings industry, education leaders together to discuss higher education’s opportunity to lead, what students need, and what partnerships are possible

As artificial intelligence rapidly reshapes the nature of work and learning, speakers at the University of Michigan’s AI & the Future of Learning Summit delivered a clear message: higher education must take a leading role in defining what comes next.

One CEO of a leading educational technology company put it like this: “The only bad thing would be universities standing still.”

Universities must embrace their roles as providers of continuous, lifelong learning that evolves alongside technological change.

…

This shift is already affecting early-career pathways. Employers are placing greater emphasis on experience, while traditional entry-level roles are becoming less accessible. There is often a gap between what a credential represents and the expectations of employers.

That gap is particularly evident in access to internships. Chris Parrish, co-founder and president of Podium, noted that millions of students compete for a limited number of internships each year, making it increasingly difficult to gain the experience employers demand.

“If you miss out on an internship, you’re twice as likely to be unemployed,” Parrish said.

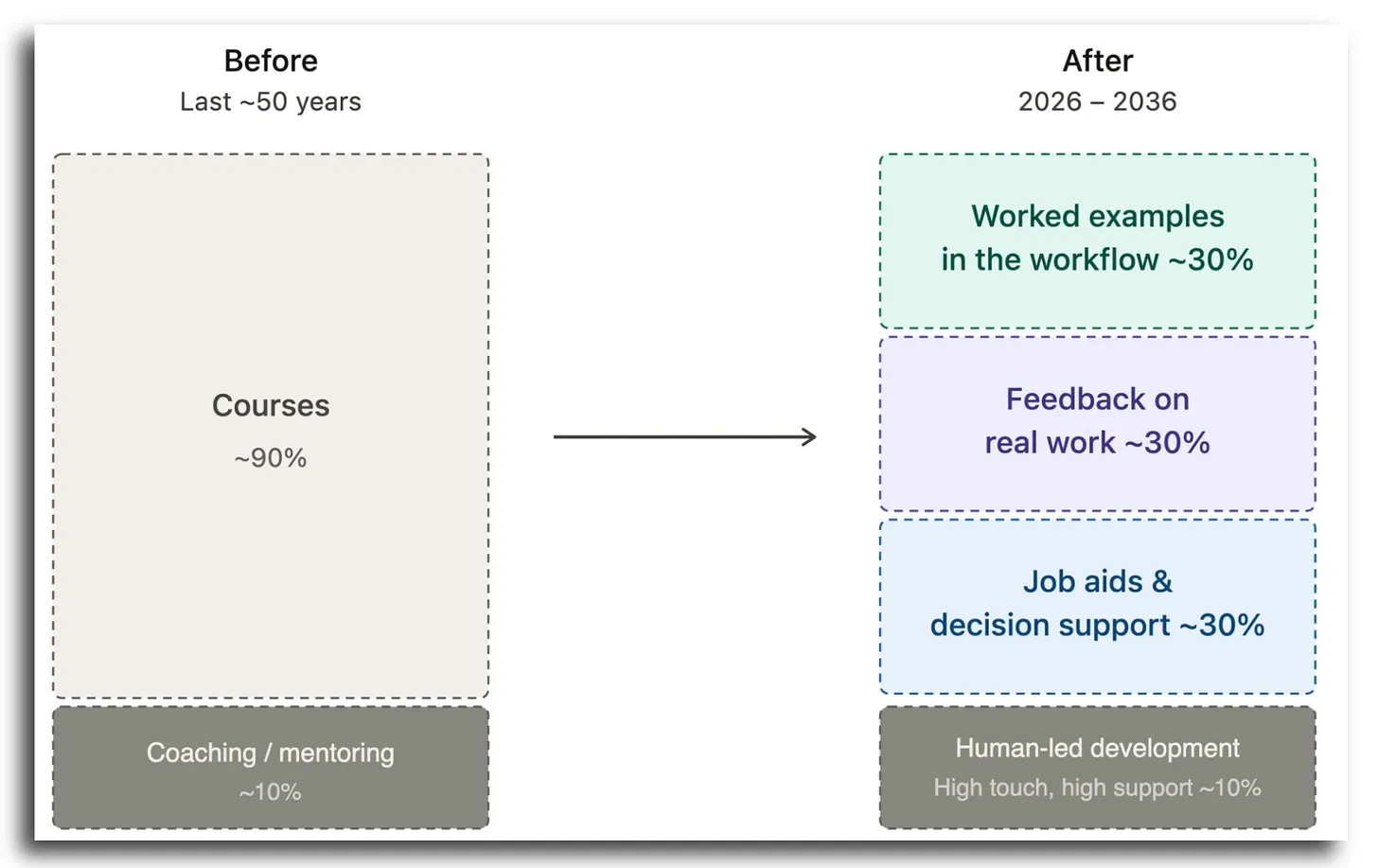

The Course Is Dying as the Unit of Learning — from drphilippahardman.substack.com by Dr Philippa Hardman

Here’s why, and what’s replacing it

What the Bleeding Edge Looks like in Practice

So what does “the new stack” actually look like when organisations lean into this? Here are four real patterns already in play.

Engineering: from engine courses to in-workflow AI coaching.

Product development: from courses to craft-specific agents.

Compliance: from annual course to nudge systems.

Enablement systems, not catalogues.

…

The quest to build a better AI tutor — from hechingerreport.org by Jill Barshay

Researchers make progress with an older ed tech idea: personalized practice

One promising idea has less to do with how an AI tutor explains concepts and more with what it asks students to practice next.

A team at the University of Pennsylvania, which included some AI skeptics, recently tested this approach in a study of close to 800 Taiwanese high school students learning Python programming. All the students used the same AI tutor, which was designed not to give away answers.

But there was one key difference. Half the students were randomly assigned to a fixed sequence of practice problems, progressing from easy to hard. The other half received a personalized sequence with the AI tutor continuously adjusting the difficulty of each problem based on how the student was performing and interacting with the chatbot.

The idea is based on what educators call the “zone of proximal development.” When problems are too easy, students get bored. When they’re too hard, students get frustrated. The goal is to keep students in a sweet spot: challenged, but not overwhelmed.

The researchers found that students in the personalized group did better on a final exam than students in the fixed problem group. The difference was characterized as the equivalent of 6 to 9 months of additional schooling, an eye-catching claim for an after-school online course that lasted only five months.

…

To address this, Chung’s team combined a large language model with a separate machine-learning algorithm that analyzes how students interact with the online course platform — how they answer the practice questions, how many times they revise or edit their coding, and the quality of their conversations with the chatbot — and uses that information to decide which problem to serve up next.

The Most Obvious Fix in Education — from michelleweise.substack.com by Michelle Weise

The No-Brainer Nobody’s Doing

We know what better learning looks like. We have known for a while.

Real problems. Real roles. Built-in conflict. Conditions that simulate the messiness of actual work. Reflection that asks not just what did you do but who are you becoming? These are not radical ideas. They are not untested theories. The research is clear, employers are asking for exactly this, and students consistently report that the closest they got to real work was the most valuable part of their education.

So why aren’t universities doing more of it?

That is the question worth sitting with — because the gap between what we know and what we do is not a knowledge problem. It is a design problem, an incentive problem, and if we’re being candid, a courage problem.

Because in the meantime, learners are paying the price. They graduate credentialed but untested. They enter labor markets that want proof of performance and experience, not transcripts. They lack the networks, the exposure, and the scar tissue that comes from navigating real work.

Also relevant, see:

The Apprenticeship (R)Evolution — from insidehighered.com by Sara Weissman and Colleen Flaherty

Once synonymous with hard hats and tool belts, apprenticeships are branching into health care, artificial intelligence, business services, advanced manufacturing and more.

Such programs also challenge stereotypes about apprenticeships—namely that they’re only in construction, an earn-and-learn catchall for traditionally apprenticeable occupations such as bricklayer, plumber, carpenter and electrician. In integrating robotics, automation, machining and logistics, the manufacturing development program is a bridge to understanding how apprenticeships are evolving to support some of the nation’s fastest-growing industries. These include advanced manufacturing, but also health care, information technology and other business services.

From DSC:

I have been proposing that the AI-based learning platform of the future will be constantly doing this — every single day. It will know what the in-demand skills are — at any given moment in time. It will then be able to direct you to resources that will help you gain those skills. Though in my vision, the system is querying actual/open job descriptions, not analyzing learning data from enterprise learners. Perhaps I should add that to the vision.

Coursera’s Job Skills Report 2026: Top skills for your students — from coursera.org

The Job Skills Report 2026 analyzes learning data from more than 6 million enterprise learners to identify the future job skills organizations need most. It’s designed for HR and L&D leaders; data, IT, and software & product development leaders; higher education administrators; and government agencies seeking actionable insights on workforce skills trends and AI-driven transformation.

…

Drawing on data from 6 million enterprise learners across nearly 7,000 organizations, the Job Skills Report 2026 guides you through the skills reshaping the global economy. This year’s analysis spans Data, IT, and Software & Product Development—and the Generative AI skills becoming essential for every role.

Is the eLearning authoring tool dead? — from linkedin.com by Melissa Milloway & Tim Slade

…which links to the video below:

Here is Chris Martin’s posting on LinkedIn.com:

Here is Dominik Mate Kovacs’ posting on LinkedIn.com:

The AI ‘hivemind’: Why so many student essays sound alike — from hechingerreport.org by Jill Barshay

A study of more than 70 large language models found similar answers to brainstorming and creative writing prompts

The answers were frequently indistinguishable across different models by different companies that have different architectures and use different training data. The metaphors, imagery, word choices, sentence structures, and even punctuation often converged. Jiang’s team called this phenomenon “inter-model homogeneity” and quantified the overlaps and similarities. To drive the point home, Jiang titled her paper “Artificial Hivemind.” The study won a best paper award in December 2025 at the annual Conference on Neural Information Processing Systems (NeurIPS), one of the premier gatherings for AI research.

AI Has No Moral Compass. Do You? — from michelleweise.substack.com by Michelle Weise & Dana Walsh

Why the Age of AI Demands We Take Character Formation Seriously

Here’s something to chew on:

Anthropic, the company behind Claude (a chatbot with 30 million monthly users), has exactly one person (whom we know of) working on AI ethics. One. A young Scottish philosopher is doing the vital work of training a large language model to discern right from wrong.

I don’t say this to shame Anthropic. In fact, Anthropic appears to be the only company (that we know of) being explicit about the moral foundations and reasoning of its chatbot. Hundreds of millions of users worldwide are leveraging tools from other LLMs that do not appear to have an explicit moral compass being cultivated from within.

I raise this because this is yet another example of where we are: extraordinary technical power advancing without an equally strong moral infrastructure to support it.

Why do we keep producing people who are skilled but not wise?

Across the divide: reimagining faculty-staff collaboration in higher education — from timeshighereducation.com by Saskia van de Gevel

Academic units do best when they harness different viewpoints – from field scientists and curriculum designers to extension professionals – to drive innovation and relevance. Saskia van de Gevel offers proactive advice.

Universities are not sustained by individual leaders or isolated units. They are sustained by teams of people who bring different kinds of expertise to a shared mission. When faculty and professional staff collaborate as genuine partners – aligned around outcomes, clear about roles and committed to mutual respect – institutions become more resilient, innovative and effective.

Also from timeshighereducation.com, see:

Again, we don’t send them 200 CVs. We might send 20, but they’re meticulously shortlisted. The employer saves time, the student feels they are being taken seriously and trust builds quickly on both sides.

And because we work closely with employers, we learn something universities often struggle to find out early enough: what the market is asking for now.

What academics need to know: we can’t do this without you

If I could say one thing to academic colleagues anywhere, it’s that employability can’t sit next to the curriculum. It has to live with it.

Here is Pradnya’s posting out on LinkedIn.com:

From DSC…note these excerpts from Pradnya’s posting:

Pradnya links to a page out at ParadisoSolutions.com. Check out some of the functionality this AI-powered system provides:

5 Tech Strategies to Enhance Student-Led Learning — from edutopia.org by Rachelle Dené Poth

While technology has the potential to distract students, it can also boost engagement and help them actively demonstrate their learning.

Over the years, I have learned that engagement doesn’t happen simply by adding technology. It increases when we give students more ownership by designing experiences that allow them to build, collaborate, reflect, and teach one another. Depending on how we use it, technology can either amplify engagement or distract from it. Technology can help build students’ confidence in learning, but it can also lead to passivity. When technology is used to amplify students’ voice, choice, and ownership in learning, their engagement will naturally increase.

Here are five strategies and some digital tools that can be used across grade levels and content areas to boost student engagement, build confidence, foster collaboration, and support meaningful learning experiences.

Project-Based Learning (PBL)

Implementing a PBL Design Challenge in Your School — from edutopia.org by Lisa Beck & Kim Mishkin

A weeklong, schoolwide project-based learning challenge encourages students to try to tackle meaningful problems.

For the past five years, Hudson Lab School (HLS), a K–8 progressive school committed to project-based learning (PBL), has kicked off each school year with an exciting tradition: Design Challenge Week. In five days, students take on a real-world problem, explore each phase of the design process, and present what they created and learned to an authentic audience. Design Challenge Week introduces concepts that students will revisit all year and offers a model for how any educational setting could experiment with PBL on a smaller scale. Even short, well-designed challenges can lead to deeply engaged learning experiences.

How to Give Students Directions They Actually Understand — from edutopia.org by Mary Davenport

Making small changes in your instructions can have a significant impact on students’ understanding and engagement.

No more than a minute after you’ve provided instruction on the day’s targeted content and given students directions for their next task, some brave soul utters the line that brings tired teachers to their knees: “What are we supposed to be doing?”

None of us want this. As teachers, we all want students to fully understand what they’re supposed to be doing so that they can be successful as they do it.

Good news: A few small changes in how we give directions can be the lever that boosts student understanding and engagement.

How to Get Consistent, On-Brand Course Images from Any AI Image Tool — from drphilippahardman.substack.com by Dr. Philippa Hardman

A 3-step workflow that works every time — whatever AI tool you’re using

Most designers try to describe their way to an image. That’s the wrong approach. The goal is to show the tool the world it should be working in, then give it the minimum it needs to place your subject inside that world.

Every long, over-specified prompt is a sign that your visual inputs aren’t doing enough work.

The fix is a 3-step process that gives you superpowers in AI image generation…

How AI Could Transform, or Replace, the LMS — from futureupodcast.com by Jeff Selingo, Michael Horn, and Matthew Pittinsky

Tuesday, March 10, 2026 – For 30 years now, colleges have relied on the Learning Management System, or LMS, as a key portal for professors and students to teach and learn. It’s a tool that has helped colleges adapt to online learning and bring digital tools to classroom teaching. But generative AI seems poised to disrupt the LMS. And it’s unclear whether the LMS will evolve—or be replaced altogether. For this episode, Jeff and Michael talk with a pioneer of the technology, Matthew Pittinsky, about the lessons of past moments of tech disruption like the smartphone and cloud computing and about what could be different this time. This episode is made with support from Ascendium Education Group.

Gemini, Explained — from wondertools.substack.com by Jeremy Caplan

5 features worth your time — tested and compared

Google’s AI, Gemini, has quickly become one of the AI tools I rely on most. It builds dashboards and creates remarkable infographics. It spins out comprehensive research reports in minutes that would once have taken days to assemble.

It’s improving every month. On March 13, Google announced Ask Maps, so you can query Gemini about things like “Which nearby tennis courts are open with lights so I can play tonight?” On March 10, Gemini added new integrations to build, summarize, and analyze your Google Docs, Sheets, and Slides.

In today’s post below: catch up on the Gemini features worth your time, candid comparisons with other AI tools, and answers to the questions I hear most.

How we’re reimagining Maps with Gemini — from blog.google

Ask Maps answers your real-world questions with a conversation, and Immersive Navigation makes your route more intuitive.

Today, Google Maps is fundamentally changing what a map can do. By bringing together the world’s freshest map with our most capable Gemini models, we’re transforming exploration into a simple conversation and making driving more intuitive than ever with our biggest navigation upgrade in over a decade.

Ask anything about any place

We’re introducing Ask Maps, a new conversational experience that answers complex, real-world questions a map could never answer before. Now you can ask for things like, “My phone is dying — where can I charge it without having to wait in a long line for coffee?” or “Is there a public tennis court with lights on that I can play at tonight?” Previously, finding this information meant lots of research and sifting through reviews. But now, you can just tap the “Ask Maps” button and get your questions answered conversationally, with a customized map to help you visualize your options.

U.S. Department of Labor Defines 5 Key Areas of AI Literacy — from campustechnology.com by Rhea Kelly

The Future of Learning Looks Like Workforce Infrastructure — from workshift.org by Bruno V. Manno

For years, “ed tech” was an umbrella term grouping schools, online platforms, courses, credentials, and software under one idea: technology applied to education. That shorthand made it easier for investors, policymakers, and institutions to talk about innovation without rethinking how learning fits into the economy. Today, it no longer explains what’s happening.

That’s the central insight of “The European Learning & Work Funding Report” by Brighteye Ventures, a research and advisory firm tracking investment at the intersection of learning, work, and productivity. The report’s seventh edition shows that learning is no longer funded primarily as education. It is increasingly funded as part of how work gets done.

Across Europe, and increasingly the U.S., capital is flowing not toward courses or credentials but toward systems that are closer to production, including hiring platforms, staffing firms, clinical decision tools, payroll systems, and compliance software. These are not educational products, though learning is embedded throughout them.

In these systems, learning is not the point. Outcomes are.

Build hybrid institutions that erase boundaries. Stop forcing learners to navigate disconnected systems. Create partnerships that blend K-12 schools, community colleges, training providers, and employers so students move through one coherent system, not four separate bureaucracies.

Teach Smarter with AI — from wondertools.substack.com by Jeremy Caplan and Lance Eaton

10 tested strategies from two educators who actually use them

I recently talked with Lance Eaton, Senior Associate Director of AI and Teaching & Learning at Northeastern University and writer of AI + Education = Simplified. We traded ideas about what’s actually working. We came up with 10 specific, practical ways anyone who teaches, coaches, or leads can put AI to work.

Watch the full conversation above, or read highlights below.

Beyond Audio Summaries: How to Use NotebookLM to *Actually* Design Better Learning — from drphilippahardman.substack.com by Dr. Philippa Hardman

Five methods to maximise the value of NotebookLM’s features

In practice, what makes NotebookLM different for learning designers is four things:

…

5 Evidence-Based Methods NotebookLM Operationalises…

Shadow AI Isn’t a Threat: It’s a Signal — from campustechnology.com by Damien Eversmann

Unofficial AI use on campus reveals more about institutional gaps than misbehavior.

How L&D Can Lead in the Age of AI Even If Your Company’s Not Ready — from learningguild.com

How to lead even when your company doesn’t allow AI

Even if your corporation isn’t ready for AI, you can still research tools personally to stay ahead of the curve, so when organizational restrictions lift, you are ready to use AI for learning right away. Here are some tools you can test at home if they’re restricted in your workplace:

The Higher Ed Playbook for AI Affordability — from campustechnology.com by Jason Dunn-Potter
