Let AI Interview You — from wondertools.substack.com by Jeremy Caplan & Jay Dixit

A smarter way to get past the blank page

There’s nothing wrong with using AI to get answers to your questions. But there’s another mode of interacting with AI that many people never consider — one I find much more useful for my creative process.

Here’s what I do instead: I flip the script and let the AI ask the questions. Instead of prompting AI, I get the AI to prompt me.

6 Reasons Universities Are Building Media Labs Now — from edtechmagazine.com by Brad Grimes

Digital production centers help institutions close the gap between academic training and professional practice.

Higher education is undergoing a significant transformation in how it prepares the next generation of media professionals. Across the country, universities are investing in state-of-the-art media labs — facilities built not around traditional classroom instruction, but around the tools, workflows and collaborative environments that define today’s professional production landscape. These spaces represent a fundamental rethinking of what it means to train students for careers in film, animation, gaming and digital storytelling.

Dr. Hardman’s post on LinkedIn

and/or

See Dr. Hardman’s post on substack.com entitled:

- The Illusion of AI Productivity Gains

Why your AI tools aren’t delivering the ROI you were promised — and what to do about it

Why Sal Khan’s AI revolution hasn’t happened yet, according to Sal Khan — from chalkbeat.org by Matt Barnum

Three years ago, as Khan Academy founder Sal Khan rolled out an AI-powered tutoring chatbot, he predicted a revolution in learning.

So far, the revolution hasn’t happened, he acknowledges.

“For a lot of students, it was a non-event,” Khan told me recently about his eponymous chatbot, Khanmigo. “They just didn’t use it much.”

Khan gives this analogy: Imagine he walked into a class, sat in the back of the room, and waited for students to seek out help. “Some will; most won’t,” he said. That’s been the experience with AI tutoring, he said. It doesn’t necessarily make students motivated to learn or fill in gaps in knowledge needed to ask questions.

“AI is going to help,” said Khan of this reimagined Khan Academy. “But I think our biggest lever is really investing in the human systems.”

Recording at LegalWeek in New York, Zach sits down with Shlomo Klapper (founder of Learned Hand) and Bridget McCormack, former Chief Justice of the Michigan Supreme Court and now CEO of the American Arbitration Association, to challenge one of the biggest double standards in legal AI: “AI for me, but not for thee.” Lawyers are now widely using AI tools like Harvey and Legora (and, more than ever, Claude), but the moment AI touches judges or arbitrators, support drops off.

That hesitation comes as courts are under real strain, with judges handling thousands of cases a year, only minutes to decide each one, and no realistic way to keep up. Shlomo describes Learned Hand’s “AI law clerk,” built to support judicial research, analysis, and drafting, while Bridget brings the perspective of someone who has both made decisions on the bench and pioneered the American Arbitration Association’s AI Arbitrator, a first of its kind. The conversation moves beyond AI as an assistant and into a harder shift: AI as part of decision-making itself, and whether the system can continue to function without it.

Also see:

Are Judges the Next To Adopt AI? Is That a Good Thing? — from legallydisrupted.com by Zach Abramowitz

Episode 46 of Legally Disrupted Has the Two Best Experts on the Topic

This brings us to an admitted, glaring double standard between lawyers and judges. Lawyers are totally fine with lawyers using AI, but those same lawyers become apoplectic at the thought of judges or arbitrators using AI. It is very much “AI for me, but not for thee.” A survey last year from White & Case and Queen Mary University of London School of Law showed that nearly 90% of lawyers were deeply supportive of AI for their own research and analytics, but that support drops to just 23% when it comes to a judge or arbitrator using it to make a decision.

…

Yet, despite that hullabaloo, there is a massive need for alternative forms of intelligence in our courts. Right now, the system is drowning. We have state court trial judges disposing of 2,500 cases a year, meaning they have barely half an hour to spend on a single case. We are simply not going to lawyer our way out of this 50-year backlog. If we just use humans, we have a massive demand for intelligence but a severely limited supply. AI could step in to give these judges the capacity they desperately need for the courts to actually function.

What the Future of Learning Looks Like in the Era of AI — from the Center for Academic Innovation at the University of Michigan, by Sean Corp

AI & the Future of Learning Summit brings industry, education leaders together to discuss higher education’s opportunity to lead, what students need, and what partnerships are possible

As artificial intelligence rapidly reshapes the nature of work and learning, speakers at the University of Michigan’s AI & the Future of Learning Summit delivered a clear message: higher education must take a leading role in defining what comes next.

One CEO of a leading educational technology company put it like this: “The only bad thing would be universities standing still.”

Universities must embrace their roles as providers of continuous, lifelong learning that evolves alongside technological change.

…

This shift is already affecting early-career pathways. Employers are placing greater emphasis on experience, while traditional entry-level roles are becoming less accessible. There is often a gap between what a credential represents and the expectations of employers.

That gap is particularly evident in access to internships. Chris Parrish, co-founder and president of Podium, noted that millions of students compete for a limited number of internships each year, making it increasingly difficult to gain the experience employers demand.

“If you miss out on an internship, you’re twice as likely to be unemployed,” Parrish said.

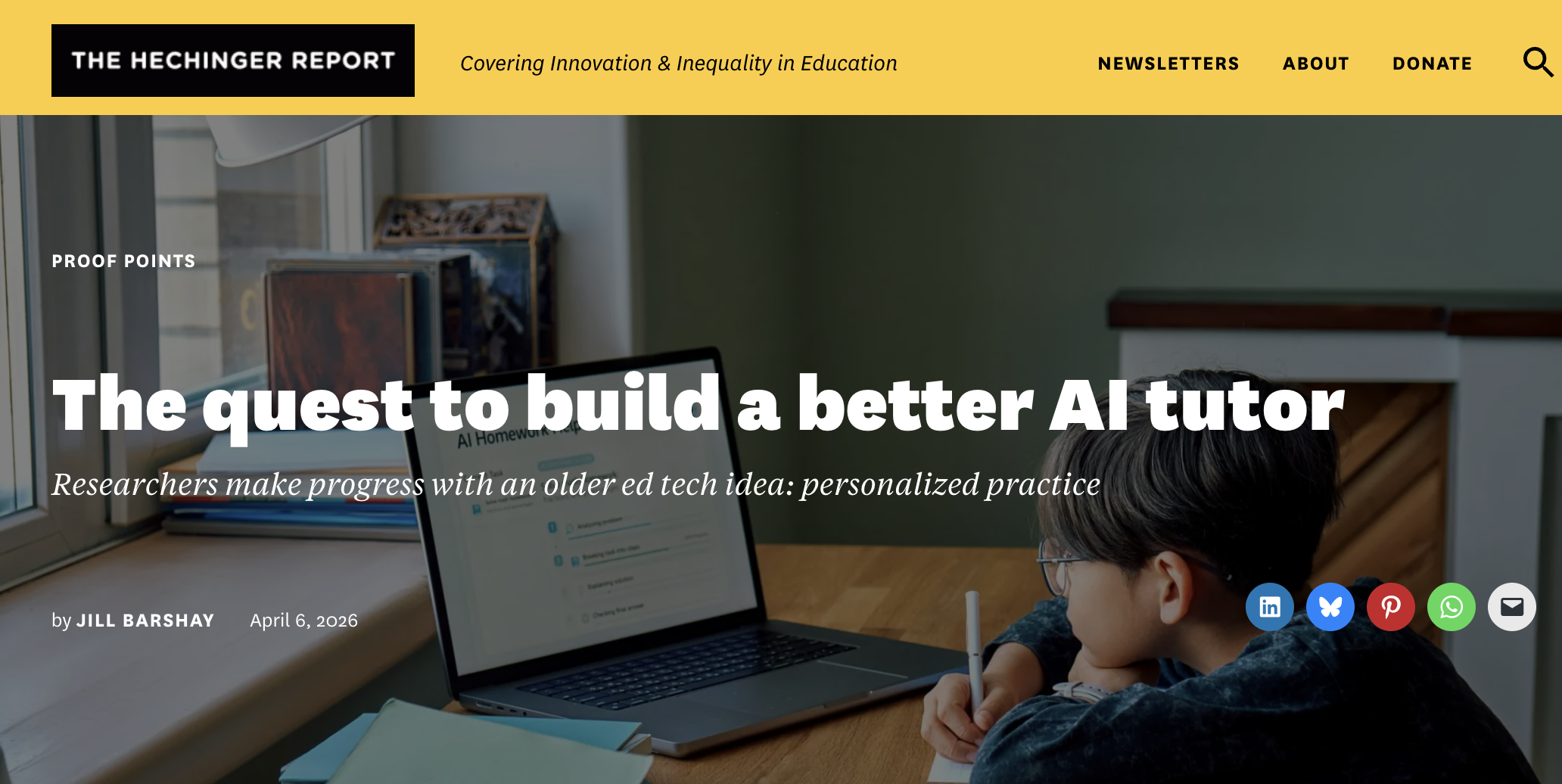

The quest to build a better AI tutor — from hechingerreport.org by Jill Barshay

Researchers make progress with an older ed tech idea: personalized practice

One promising idea has less to do with how an AI tutor explains concepts and more to do with what it asks students to practice next.

A team at the University of Pennsylvania, which included some AI skeptics, recently tested this approach in a study of close to 800 Taiwanese high school students learning Python programming. All the students used the same AI tutor, which was designed not to give away answers.

But there was one key difference. Half the students were randomly assigned to a fixed sequence of practice problems, progressing from easy to hard. The other half received a personalized sequence with the AI tutor continuously adjusting the difficulty of each problem based on how the student was performing and interacting with the chatbot.

The idea is based on what educators call the “zone of proximal development.” When problems are too easy, students get bored. When they’re too hard, students get frustrated. The goal is to keep students in a sweet spot: challenged, but not overwhelmed.

The researchers found that students in the personalized group did better on a final exam than students in the fixed problem group. The difference was characterized as the equivalent of 6 to 9 months of additional schooling, an eye-catching claim for an after-school online course that lasted only five months.

…

To address this, Chung’s team combined a large language model with a separate machine-learning algorithm that analyzes how students interact with the online course platform — how they answer the practice questions, how many times they revise or edit their coding, and the quality of their conversations with the chatbot — and uses that information to decide which problem to serve up next.
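For readers curious about the mechanics, here is a toy illustration of the general “zone of proximal development” idea described above: step the difficulty up when a student is coasting and down when they are struggling. This is a minimal sketch under stated assumptions, not the Penn team’s system. Their approach paired a large language model with a separate machine-learning model over richer signals (answers, revisions, chatbot conversations). Here the only signal is whether recent problems were solved, and the class name `AdaptiveSequencer`, the 1 to 5 difficulty scale, the window size, and the 0.8/0.5 thresholds are all hypothetical choices made for illustration.

```python
from collections import deque


class AdaptiveSequencer:
    """Hypothetical toy difficulty picker (not the Penn study's algorithm).

    Raises the level when recent accuracy is high (too easy), lowers it when
    recent accuracy is low (too hard), and otherwise holds steady.
    """

    def __init__(self, min_level=1, max_level=5, window=3,
                 too_easy=0.8, too_hard=0.5):
        self.level = min_level
        self.min_level = min_level
        self.max_level = max_level
        self.recent = deque(maxlen=window)  # last few outcomes (True = solved)
        self.too_easy = too_easy            # assumed threshold for stepping up
        self.too_hard = too_hard            # assumed threshold for stepping down

    def record(self, solved: bool) -> int:
        """Log one attempt and return the difficulty level for the next problem."""
        self.recent.append(solved)
        if len(self.recent) < self.recent.maxlen:
            return self.level  # not enough evidence yet; stay at current level
        accuracy = sum(self.recent) / len(self.recent)
        if accuracy >= self.too_easy and self.level < self.max_level:
            self.level += 1
            self.recent.clear()  # start a fresh window at the new level
        elif accuracy < self.too_hard and self.level > self.min_level:
            self.level -= 1
            self.recent.clear()
        return self.level


if __name__ == "__main__":
    seq = AdaptiveSequencer()
    # Three solved problems, then three misses.
    for outcome in [True, True, True, False, False, False]:
        print(seq.record(outcome))
```

Run as-is, the level climbs from 1 to 2 after three straight successes and drops back to 1 after three straight misses. Clearing the window after each change is one design choice among many; it forces the student to show fresh evidence at the new level before the difficulty moves again.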

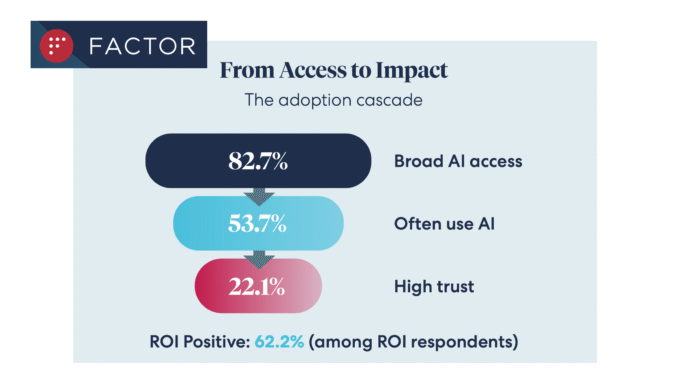

Legal AI Access at 83%, But Trust Issues Remain — from artificiallawyer.com

A new survey of over 200 in-house and law firm leaders provides solid evidence that, while AI tools are now ‘standard’ across our sector, trust in AI outputs fundamentally drives usage, along with ROI – and vice versa.

The data, from ALSP Factor, shows that 83% had ‘broad AI access’, which is up from 61% in 2025, and in itself is a very positive development that tells us legal AI is now becoming ubiquitous for commercial lawyers, with around 54% using such tools ‘often’.

From DSC:

I have been proposing that the AI-based learning platform of the future will be constantly doing this — every single day. It will know what the in-demand skills are — at any given moment in time. It will then be able to direct you to resources that will help you gain those skills. Though in my vision, the system is querying actual/open job descriptions, not analyzing learning data from enterprise learners. Perhaps I should add that to the vision.

Coursera’s Job Skills Report 2026: Top skills for your students — from coursera.org

The Job Skills Report 2026 analyzes learning data from more than 6 million enterprise learners to identify the future job skills organizations need most. It’s designed for HR and L&D leaders; data, IT, and software & product development leaders; higher education administrators; and government agencies seeking actionable insights on workforce skills trends and AI-driven transformation.

…

Drawing on data from 6 million enterprise learners across nearly 7,000 organizations, the Job Skills Report 2026 guides you through the skills reshaping the global economy. This year’s analysis spans Data, IT, and Software & Product Development—and the Generative AI skills becoming essential for every role.

Is the eLearning authoring tool dead? — from linkedin.com by Melissa Milloway & Tim Slade

…which links to the video below:

Here is Chris Martin’s posting on LinkedIn.com:

Here is Dominik Mate Kovacs’ posting on LinkedIn.com:

The AI ‘hivemind’: Why so many student essays sound alike — from hechingerreport.org by Jill Barshay

A study of more than 70 large language models found similar answers to brainstorming and creative writing prompts

The answers were frequently indistinguishable across different models by different companies that have different architectures and use different training data. The metaphors, imagery, word choices, sentence structures — even punctuation — often converged. Jiang’s team called this phenomenon “inter-model homogeneity” and quantified the overlaps and similarities. To drive the point home, Jiang titled her paper the “Artificial Hivemind.” The study won a best paper award at the annual conference on Neural Information Processing Systems in December 2025, one of the premier gatherings for AI research.

AI Has No Moral Compass. Do You? — from michelleweise.substack.com by Michelle Weise & Dana Walsh

Why the Age of AI Demands We Take Character Formation Seriously

Here’s something to chew on:

Anthropic, the company behind Claude — a chatbot used by 30 million people per month — has exactly one person (whom we know of) working on AI ethics. One. A young Scottish philosopher is doing the vital work of training a large language model to discern right from wrong.

I don’t say this to shame Anthropic. In fact, Anthropic appears to be the only company (that we know of) being explicit about the moral foundations and reasoning of its chatbot. Hundreds of millions of users worldwide are leveraging tools from other LLM makers that do not appear to have an explicit moral compass being cultivated from within.

I raise this because this is yet another example of where we are: extraordinary technical power advancing without an equally strong moral infrastructure to support it.

Why do we keep producing people who are skilled but not wise?

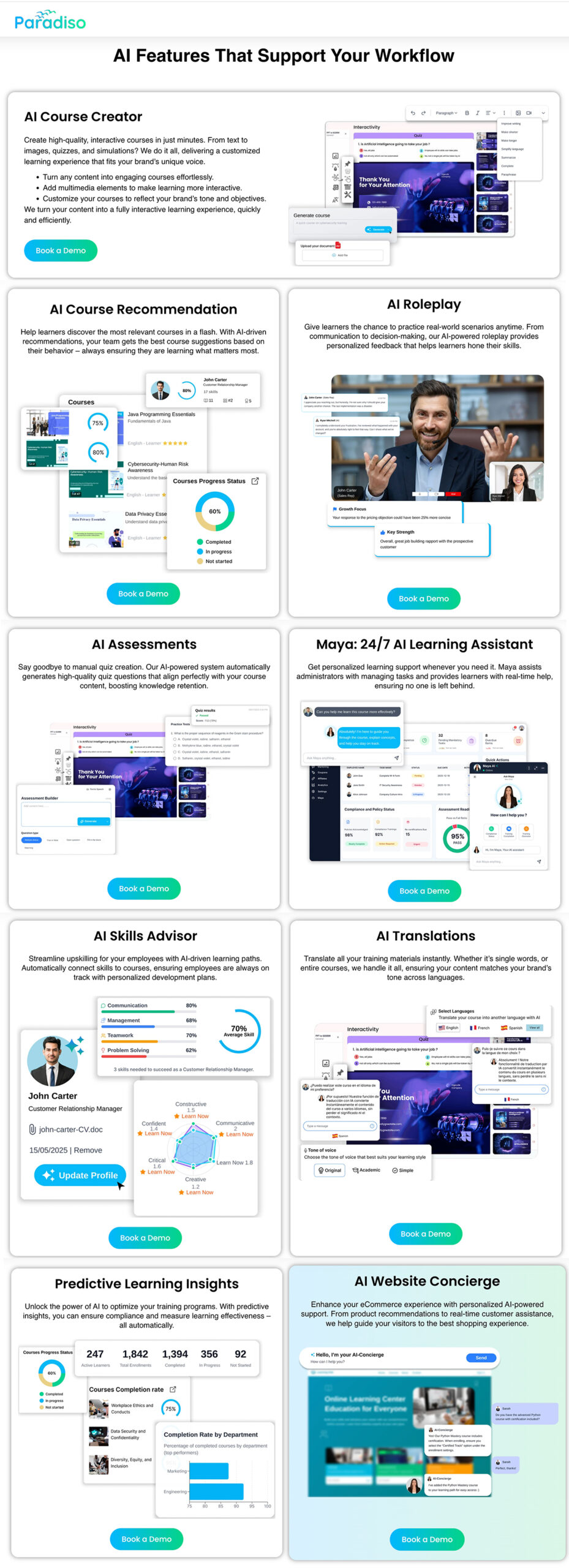

Here is Pradnya’s posting out on LinkedIn.com:

From DSC…note these excerpts from Pradnya’s posting:

- I’m not manually assigning 400 people anymore → They’re actually taking relevant courses now

- Shows me the data → Suggests courses to fix it → I look like a strategic genius

- Completion rates up 34% → Nobody’s “stuck” anymore

Pradnya links to a page out at ParadisoSolutions.com. Check out some of the functionality this AI-powered system provides:

The Future of College in an AI World — from linkedin.com by Jeff Selingo

In today’s issue: The tension over AI in higher ed; application inflation continues and testing is back; what’s the future of the original classroom technology, the learning management system.

Hundreds of higher ed and industry leaders gathered Tuesday for a summit on AI and the future of learning at the University of Michigan.

…

Conversations like the one we had at Michigan this week are necessary, but the action rarely matches the ambition.

- We say the humanities are the operating system of an AI world, yet students and parents don’t believe it. They’re voting with their feet toward STEM, business, and narrowly tailored majors they believe will lead to a job.

- Meanwhile, colleges are quietly eliminating the very humanities degrees the panelists were championing, employers are cutting the entry rungs off the career ladder for new graduates, and as Podium Education co-founder Christopher Parrish reminded us yesterday, there’s a yawning gap between demand for experience and the internships that actually exist.

AI Music Generators: Teaching With These Catchy AI Tools — from techlearning.com by Erik Ofgang

AI music generators are getting better and better, and there are more applications in the classroom as a result.

Are All AI Music Generators More Or Less The Same?

No. After experimenting with several free ones, I found a wide range of quality with the same prompts.

Gemini is the only one I’d currently recommend. It’s user-friendly but limited and only creates 30-second clips. Other music generators could potentially outperform Gemini with prompt adjustments. The ones I tried did better with the instrumentals but struggled more with the lyrics, and that kind of defeated the purpose of the tool for me.

ChatDOC: Teaching With The AI Summarizing Tool — from techlearning.com by Erik Ofgang

ChatDOC lets users turn any PDF into an AI chatbot that can summarize the text, answer questions, and generate quizzes.

What Is ChatDOC?

ChatDOC is an AI designed to help users interact with PDFs of various types, be it research papers, short stories, or chapters from larger works. Users upload a PDF and then have the opportunity to “chat” with that document, that is, speak with a chatbot that bases its answers on the uploaded text.

ChatDOC can perform tasks such as providing a short summary, searching for specific terms, explaining the overall theme if it’s a work of literature, or unpacking the science in a research paper.

Other similar tools are out there, but ChatDOC is definitely one of the better PDF readers I’ve used. Its free version is quick and easy to use, and delivers on its promise of providing an AI that can discuss a given document with users and even quiz them on it.

From AI access to workforce readiness — from chieflearningofficer.com by Johnny Hamilton, Amy Stratbucker, & Brad Bigelow

Is your workforce using the right tool with an outdated mindset and playbook? Why old playbooks fall short — and what learning leaders must do next.

The leadership opportunity

Organizations do not need to predict every future AI capability. They need systems that allow people to explore with curiosity, practice safely, reflect deeply and adapt continuously — starting with what they already have and extending as capabilities evolve.

For CLOs, this is a moment to lead from the center of change — designing workforce readiness that keeps pace with accelerating technology while making work more rewarding for employees and more valuable for the organization. That is how AI moves from the promise of transformation to demonstrated readiness and, ultimately, from promise to performance.

Addendums on 3/19/26:

How to Build Practice-Based Learning Activities with AI — from drphilippahardman.substack.com by Dr Philippa Hardman

Four evidence-based methods for designing, building & deploying active learning activities with your favourite LLM

Most L&D teams are using AI to make content faster. The real opportunity is using it as a practice engine.

The Synthesia 2026 AI in L&D Report found that the fastest-growing areas of planned AI adoption aren’t in content creation — they’re in assessments and simulations (36%), adaptive pathways (33%), and AI tutors (29%). In other words: L&D teams are starting to realise that the most powerful use of AI isn’t producing learning materials. It’s creating environments where learners actually practise.

And you can build these right now — no dev team, no custom platform, no code. Each method below includes a prompt you can paste into your preferred AI tool to generate a working interactive prototype: a self-contained practice activity with a briefing screen, a live AI interaction, and a debrief — all running in the browser, ready to share with stakeholders or deploy to learners.

OpenAI Adds Interactive Math and Science Learning Tools to ChatGPT — from campustechnology.com by Rhea Kelly

Key Takeaways

- ChatGPT adds interactive learning tools: OpenAI introduced interactive math and science visualizations that allow users to explore formulas, variables, and relationships in real time.

- The tool currently covers over 70 core math and science topics and is aimed initially at high school and college-level learners.

- Users can adjust variables, manipulate formulas, and immediately see how changes affect graphs and outcomes.

2026 Survey of College and University Presidents — from insidehighered.com, Liaison, & Jenzabar

Download and explore exclusive insights from the 2026 Survey of College and University Presidents to see how these campus leaders are responding to financial volatility, political interference, rapid advances in AI, and where they believe the biggest risks and opportunities lie as they look toward 2030.

In this year’s survey, presidents share perspectives on:

- How presidents assess the second Trump administration’s impact on higher education

- Which emerging or evolving educational models they plan to add or expand in the coming years

- How effective they believe higher education has been in shaping national conversations around AI

- The issues presidents expect will have the greatest impact on higher education by 2030