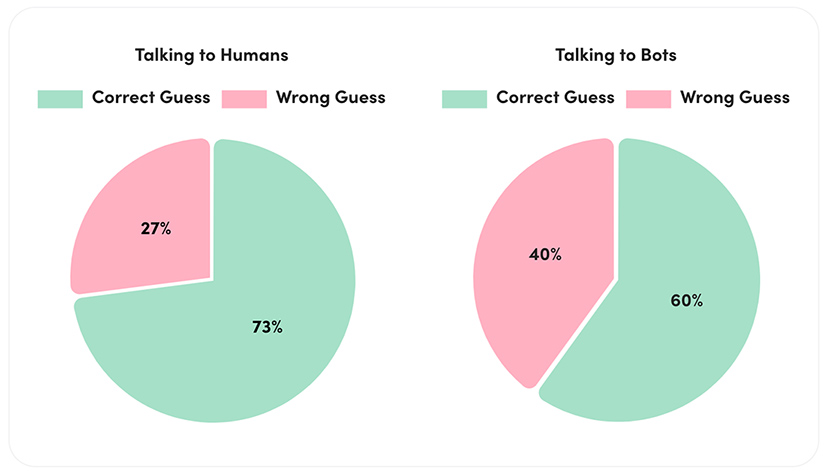

AI21 Labs concludes largest Turing Test experiment to date — from ai21.com

As part of an ongoing social and educational research project, AI21 Labs is thrilled to share the initial results of what has now become the largest Turing Test in history by scale.

So much has happened in the world of AI these past 7 days.

It’s going to change many industries you thought you knew.

12 recent AI developments that are not just reshaping the industry, but our everyday lives:

— Barsee (@heyBarsee) May 30, 2023

From DSC:

I also wanted to highlight the item below, which Barsee also mentioned above, as it will likely hit the world of education and training as well:

Last night, Jensen Huang of NVIDIA gave his very first live keynote in 4 years.

The most show-stopping moment from the event was when he showed off the real-time AI in video games. A human speaks, the NPC responds, in real time and the dialogue was generated with AI on the fly. pic.twitter.com/TDoUM1zSiy

— Matt Wolfe (@mreflow) May 29, 2023

Also relevant/see:

- Digital Renaissance: NVIDIA Neuralangelo Research Reconstructs 3D Scenes — from blogs.nvidia.com

The perils of consulting an Electric Monk — from jordanfurlong.substack.com by Jordan Furlong

Don’t blame ChatGPT for the infamous incident of the made-up cases. And don’t be too hard on the lawyer, either. We’re all susceptible to a machine that tells us exactly what we want to hear.

Excerpt:

But then the “ChatGPT Lawyer” story happened, and all hell broke loose on LawTwitter and LawLinkedIn, and I felt I needed to make three points, one of which involves an extra-terrestrial robot.

My first two points are pretty straightforward:

- The tsunami of gleeful overreaction from lawyers on social media, urging bans on the use of ChatGPT and predicting prison time for the hapless practitioner, speaks not only to their fear and loathing of generative AI, but also to their desperate hope that it’s all really nothing but hype and won’t disturb their happy status quo. Good luck with that.

- The condemnation and mockery of the lawyer himself, who made a bad mistake but who’s been buried by an utterly disproportionate avalanche of derision, speaks to the lack of compassion in this profession, whose members should pray that their worst day as a lawyer never makes it to the front page of The New York Times. There but for the grace of God.

Are you looking for evidence to support the side that’s hired you? Or are you looking for the truth? Choosing the first option has never been easier. It’s also never been more dangerous.

On the same topic that Jordan references above, also see:

A lawyer used ChatGPT to prepare a court filing. It went horribly awry. — from cbsnews.com by Megan Cerullo

What I learned at CLOC 2023 — from alexofftherecord.com by Alex Su

This week I attended the premier legal operations conference. Here’s what I heard.

Excerpt:

Theme 1: Generative AI isn’t going anywhere

This was a huge theme throughout the conference. Whether it was vendors announcing GPT integrations, or panels discussing how to use AI, there was just an enormous amount of attention on generative AI. I’m certainly no stranger to all this hype, but I’d always wondered if it was all from my Silicon Valley bubble. It wasn’t.

What was driving all this interest in AI? Well, the ubiquity of ChatGPT. Everyone’s talking about it and trying to figure out how to incorporate it into the business. And not just in the U.S. It’s a worldwide trend. Word on the street is that it’s a CEO-level priority. Everywhere. So naturally it trickles down to the legal department.

We need to talk about ChatGPT — from mnbar.org by Damien Riehl

Excerpt:

How well do LLMs perform on legal tasks?

Personal experience and anecdotal evidence indicate that LLMs’ current state provides impressive output in various legal tasks. Specifically, they provide extraordinary results on the following:

- Drafting counterarguments.

- Exploring client fact inquiries (e.g., “How did you lose money?”).

- Ideating voir dire questions (and rating responses).

- Summarizing statutes.

- Calculating works’ copyright expiration.

- Drafting privacy playbooks.

- Drafting motions to dismiss.

- Responding to cease-and-desist letters.

- Crafting decision trees.

- Creating chronologies.

- Drafting contracts.

- Extracting key elements from depositions.

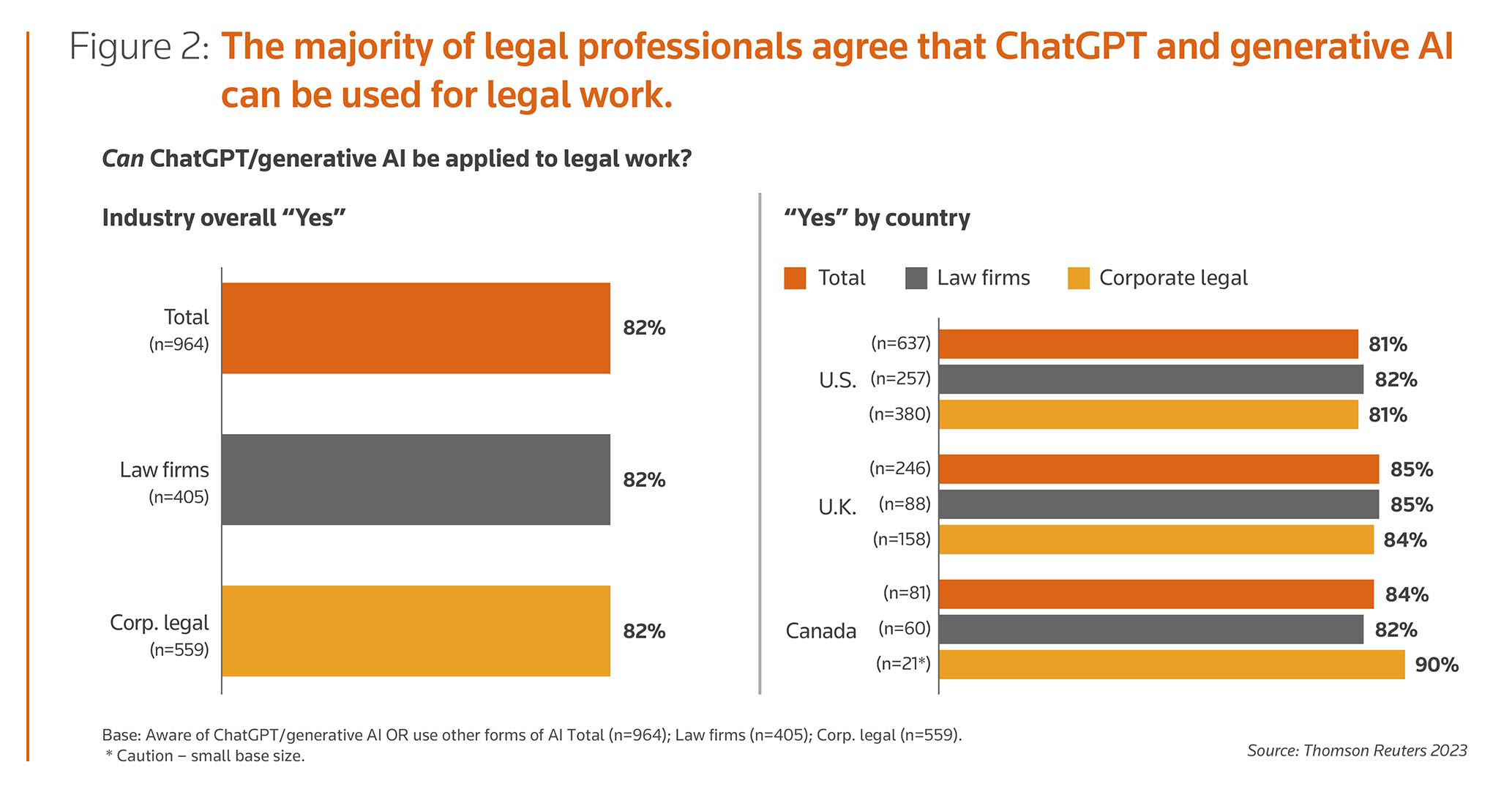

Corporate legal departments see use cases for generative AI & ChatGPT, new report finds — from thomsonreuters.com

New legal tech tools showcased at CLOC 2023 — from legaldive.com by Robert Freedman

Innovations include a better way to evaluate law firm proposals, centralize all in-house legal requests in a single intake function and analyze agreements.

Guest post: CLOC 2023 – Key insights into how to drive value during changing economic times — from legaltechnology.com by Valerie Chan

Excerpt:

Typically, Legalweek has always been focused on eDiscovery, while CLOC was focused on matter management and contracts management. This time I noticed more balance in the vendor hall and sessions, with a broader range of services providers than before, including staffing providers, contracts management vendors and other new entrants in addition to eDiscovery vendors.

One theme dominated the show floor conversations: Over and over, the legal operators I talked with said if their technologies and vendors were able to develop better workflows, achieve more cost savings and report on the metrics that mattered to their GC, the GC could function as more of a business advisor to the C-suite.

AI is already being used in the legal system—we need to pay more attention to how we use it — from phys.org by Morgiane Noel

Excerpt:

While ChatGPT and the use of algorithms in social media get lots of attention, an important area where AI promises to have an impact is law.

The idea of AI deciding guilt in legal proceedings may seem far-fetched, but it’s one we now need to give serious consideration to.

That’s because it raises questions about the compatibility of AI with conducting fair trials. The EU has enacted legislation designed to govern how AI can and can’t be used in criminal law.

Legal Innovation as a Service, Now Enhanced with AI — from denniskennedy.com by Dennis Kennedy

Excerpt:

Over the last semester, I’ve been teaching two classes at Michigan State University College of Law, one called AI and the Law and the other called New Technologies and the Law, and a class at University of Michigan Law School called Legal Technology Literacy and Leadership. All three classes pushed me to keep up-to-date with the nearly-daily developments in AI, ChatGPT, and LLMs. I also did quite a lot of experiments, primarily with ChatGPT, especially GPT-4, and with Notion AI.

Emerging Tech Trends: The rise of GPT tools in contract analysis — from abajournal.com by Nicole Black

Excerpt:

Below, you’ll learn about many of the solutions currently available. Keep in mind that this overview is not exhaustive. There are other similar tools currently available and the number of products in this category will undoubtedly increase in the months to come.

Politicians need to learn how AI works—fast — from link.wired.com

Excerpt:

This week we’ll hear from someone who has deep experience in assessing and regulating potentially harmful uses of automation and artificial intelligence—valuable skills at a moment when many people, including lawmakers, are freaking out about the chaos that the technology could cause.

This company adopted AI. Here’s what happened to its human workers — from npr.org by Greg Rosalsky

Excerpt:

What the economists found offers potentially great news for the economy, at least in one dimension that is crucial to improving our living standards: AI caused a group of workers to become much more productive. Backed by AI, these workers were able to accomplish much more in less time, with greater customer satisfaction to boot. At the same time, however, the study also shines a spotlight on just how powerful AI is, how disruptive it might be, and suggests that this new, astonishing technology could have economic effects that change the shape of income inequality going forward.

The article links to:

Generative AI at Work — from nber.org by Erik Brynjolfsson, Danielle Li & Lindsey R. Raymond

We study the staggered introduction of a generative AI-based conversational assistant using data from 5,179 customer support agents. Access to the tool increases productivity, as measured by issues resolved per hour, by 14 percent on average, with the greatest impact on novice and low-skilled workers, and minimal impact on experienced and highly skilled workers. We provide suggestive evidence that the AI model disseminates the potentially tacit knowledge of more able workers and helps newer workers move down the experience curve. In addition, we show that AI assistance improves customer sentiment, reduces requests for managerial intervention, and improves employee retention.

In a talk from the cutting edge of technology, OpenAI cofounder Greg Brockman explores the underlying design principles of ChatGPT and demos some mind-blowing, unreleased plug-ins for the chatbot that sent shockwaves across the world. After the talk, head of TED Chris Anderson joins Brockman to dig into the timeline of ChatGPT’s development and get Brockman’s take on the risks, raised by many in the tech industry and beyond, of releasing such a powerful tool into the world.

Also relevant/see:

- OpenAI’s CEO Says the Age of Giant AI Models Is Already Over — from wired.com by Will Knight

Sam Altman says the research strategy that birthed ChatGPT is played out and future strides in artificial intelligence will require new ideas.

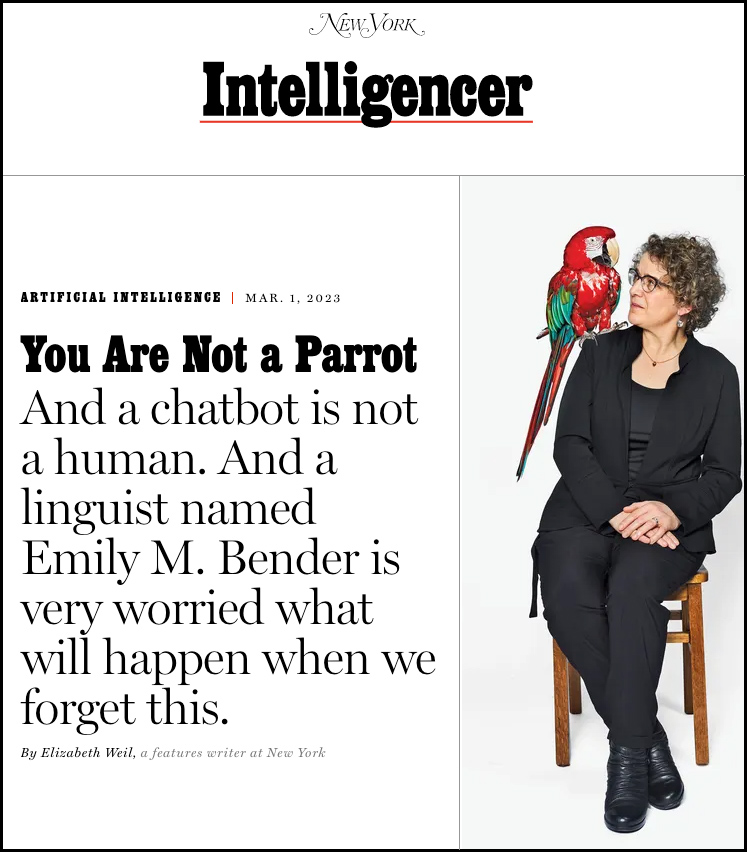

You are not a parrot — from nymag.com by Elizabeth Weil; a profile of linguist Emily M. Bender

Excerpts:

A handful of companies control what PricewaterhouseCoopers called a “$15.7 trillion game changer of an industry.” Those companies employ or finance the work of a huge chunk of the academics who understand how to make LLMs. This leaves few people with the expertise and authority to say, “Wait, why are these companies blurring the distinction between what is human and what’s a language model? Is this what we want?”

…

Bender knows she’s no match for a trillion-dollar game changer slouching to life. But she’s out there trying. Others are trying too. LLMs are tools made by specific people — people who stand to accumulate huge amounts of money and power, people enamored with the idea of the singularity. The project threatens to blow up what is human in a species sense. But it’s not about humility. It’s not about all of us. It’s not about becoming a humble creation among the world’s others. It’s about some of us — let’s be honest — becoming a superspecies. This is the darkness that awaits when we lose a firm boundary around the idea that humans, all of us, are equally worthy as is.

How ChatGPT is going to change the future of work and our approach to education — from livemint.com

From DSC:

I thought that the article made a good point when it asserted:

The pace of technological advancement is booming aggressively and conversations around ChatGPT snatching away jobs are becoming more and more frequent. The future of work is definitely going to change and that makes it clear that the approach toward education is also demanding a big shift.

…

A report from Dell suggests that 85% of jobs that will be around in 2030 do not exist yet. The fact becomes important as it showcases that the jobs are not going to vanish, they will just change and most of the jobs by 2030 will be new.

The Future of Human Agency — from pewresearch.org by Janna Anderson and Lee Rainie

Excerpt:

Thus the question: What is the future of human agency? Pew Research Center and Elon University’s Imagining the Internet Center asked experts to share their insights on this; 540 technology innovators, developers, business and policy leaders, researchers, academics and activists responded. Specifically, they were asked:

By 2035, will smart machines, bots and systems powered by artificial intelligence be designed to allow humans to easily be in control of most tech-aided decision-making that is relevant to their lives?

The results of this nonscientific canvassing:

- 56% of these experts agreed with the statement that by 2035 smart machines, bots and systems will not be designed to allow humans to easily be in control of most tech-aided decision-making.

- 44% said they agreed with the statement that by 2035 smart machines, bots and systems will be designed to allow humans to easily be in control of most tech-aided decision-making.

What are the things humans really want agency over? When will they be comfortable turning to AI to help them make decisions? And under what circumstances will they be willing to outsource decisions altogether to digital systems?

The next big threat to AI might already be lurking on the web — from zdnet.com by Danny Palmer; via Sam DeBrule

Artificial intelligence experts warn attacks against datasets used to train machine-learning tools are worryingly cheap and could have major consequences.

Excerpts:

Data poisoning occurs when attackers tamper with the training data used to create deep-learning models. This action means it’s possible to affect the decisions that the AI makes in a way that is hard to track.

By secretly altering the source information used to train machine-learning algorithms, data-poisoning attacks have the potential to be extremely powerful because the AI will be learning from incorrect data and could make ‘wrong’ decisions that have significant consequences.
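To make the mechanism above concrete, here is a minimal sketch of one simple form of data poisoning (label flipping). It is not taken from the ZDNet article: the one-dimensional data, the "benign"/"malicious" labels, and the nearest-centroid classifier are all hypothetical, chosen only to show how a few mislabeled training points can change a model's decision while the original data stays untouched.

```python
# Toy illustration of training-data poisoning via label flipping.
# The data, labels, and classifier here are hypothetical examples.

def train_centroids(samples):
    """Return {label: mean feature value} for 1-D (value, label) samples."""
    sums, counts = {}, {}
    for value, label in samples:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}

def predict(centroids, value):
    """Pick the label whose centroid is closest to the value."""
    return min(centroids, key=lambda label: abs(centroids[label] - value))

# Clean training set: low values are benign, high values are malicious.
clean = [(1.0, "benign"), (2.0, "benign"), (3.0, "benign"),
         (7.0, "malicious"), (8.0, "malicious"), (9.0, "malicious")]

# The attacker appends three high-valued points mislabeled as "benign".
# None of the original samples are modified.
poisoned = clean + [(9.0, "benign"), (10.0, "benign"), (11.0, "benign")]

clean_model = train_centroids(clean)
poisoned_model = train_centroids(poisoned)

# The same input is classified differently by the two models.
print("clean model:   ", predict(clean_model, 6.5))
print("poisoned model:", predict(poisoned_model, 6.5))
```

In this sketch the injected points drag the "benign" centroid toward the high values, so an input the clean model flags is accepted by the poisoned one. Real attacks target far larger datasets and models, but the principle is the same: the model faithfully learns from whatever data it is given.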

Why AI Won’t Cause Unemployment — from pmarca.substack.com by Marc Andreessen

Excerpt:

Normally I would make the standard arguments against technologically-driven unemployment — see good summaries by Henry Hazlitt (chapter 7) and Frédéric Bastiat (his metaphor directly relevant to AI). And I will come back and make those arguments soon. But I don’t even think the standard arguments are needed, since another problem will block the progress of AI across most of the economy first.

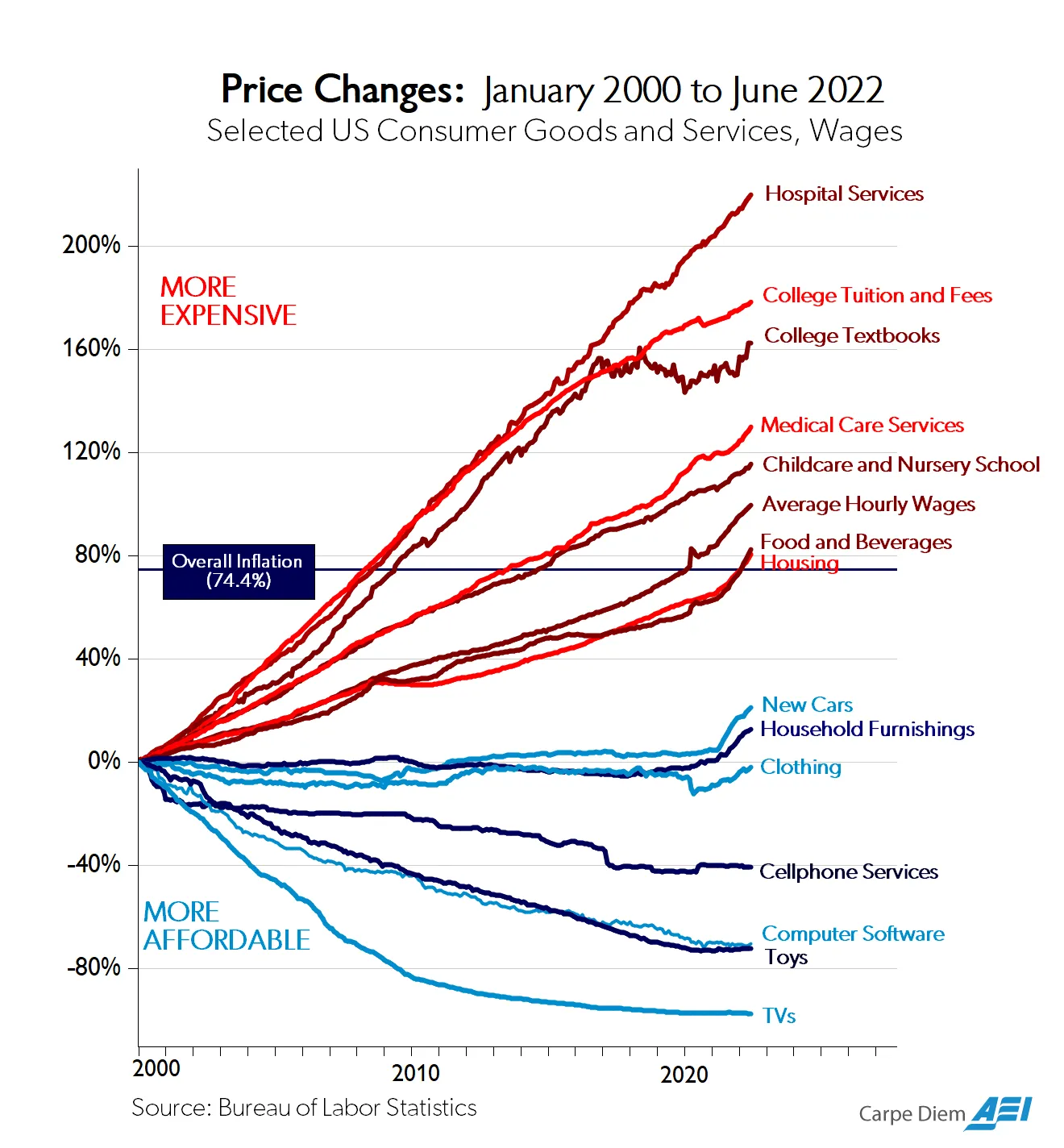

Which is: AI is already illegal for most of the economy, and will be for virtually all of the economy.

How do I know that? Because technology is already illegal in most of the economy, and that is becoming steadily more true over time.

How do I know that? Because:

From DSC:

And for me, it boils down to an inconvenient truth: What’s the state of our hearts and minds?

AI, ChatGPT, Large Language Models (LLMs), and the like are tools. How we use such tools depends on what’s going on in our hearts and minds. A fork can be used to eat food. It can also be used as a weapon. I don’t mean to be so blunt, but I can’t think of another way to say it right now.

- Do we care about one another…really?

- Has capitalism gone astray?

- Have our hearts, our thinking, and/or our mindsets gone astray?

- Do the products we create help or hurt others? It seems like too many times our perspective is, “We will sell whatever they will buy, regardless of its impact on others — as long as it makes us money and gives us the standard of living that we want.” Perhaps we could poll some former executives from Philip Morris on this topic.

- Or we will develop this new technology because we can develop this new technology. Who gives a rat’s tail about the ramifications of it?

Everything that happened in AI this January.

Ready for February? pic.twitter.com/dWwuIYmXB7

— Lior (@AlphaSignalAI) February 2, 2023

Also from Lior see:

This is a sneak peek into the future of medicine..

GlassAI launched an LLM-based tool capable of generating a diagnosis or clinical plan based on symptoms.

Also, ChatGPT recently passed the US Medical Licensing Exam.

Demo: https://t.co/Ij95Nx9Mo3 @GlassHealthHQ pic.twitter.com/SVi2TJhTIb

— Lior (@AlphaSignalAI) February 1, 2023

“After testing GPT3 within Ironclad for several months, it’s clear that this is much more than novelty; it’s a groundbreaking innovation.”

“Today I’m thrilled to announce Ironclad’s latest AI advancement, AI Assist.™” https://t.co/a8hbyahENn

— Adam.GPT (@TheRealAdamG) February 1, 2023

Contracts Company Ironclad Taps Into GPT-3 For Instant Document Redlining Based On A Company’s Playbook — from lawnext.com by Robert Ambrogi

Excerpt:

The contract lifecycle management company Ironclad has tapped into the power of OpenAI’s GPT-3 to introduce AI Assist, a beta feature that instantly redlines contracts based on a company’s playbook of approved clauses and language.

The redlines, made using GPT-3’s generative artificial intelligence, appear as tracked changes in Microsoft Word, where a user can then scan the recommended changes and either accept or reject them.

Addendum:

- Will Generative AI Expedite Legal Tech Adoption? (Zach Abramowitz – Killer Whale Strategies) — from tlpodcast.com by Chad Main