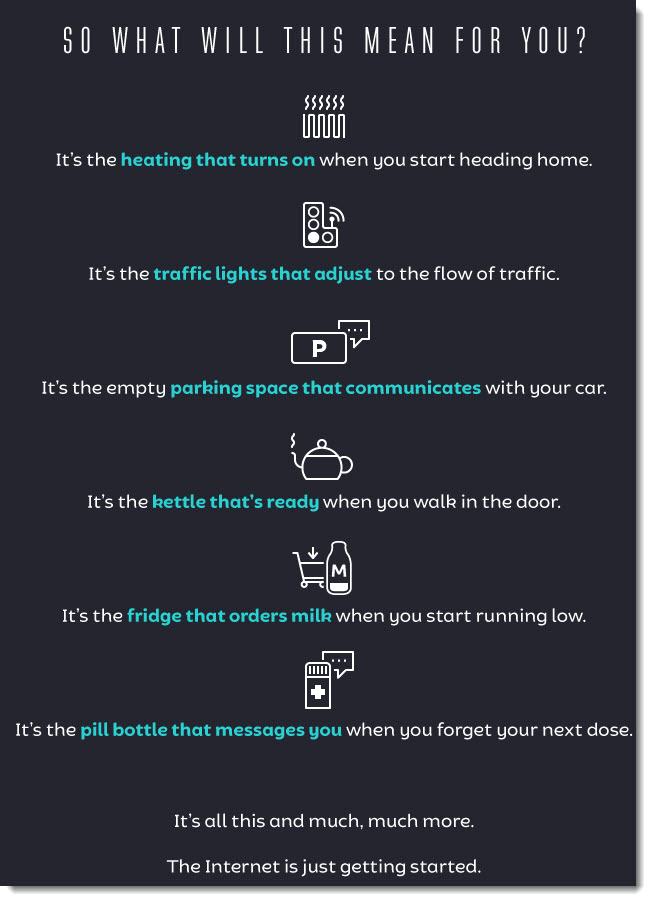

How the internet of things will change your life — from uk.rs-online.com

‘Internet of Things’ is transforming health care, Geneia president says — from unionleader.com by Kim Haas

What are the issues with Fog Computing? — from yourtechdiet.com by Brian Curtis

Advantages:

• It reduces the amount of data transferred to the cloud by processing data at an edge location.

• It supports mobility and improves system response time.

• It minimizes network latency and conserves network bandwidth.

• Data can still be processed when no bandwidth is available.

• It acts as an intermediary between IoT devices and cloud computing infrastructure.
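To make the first and fourth advantages concrete, here is a minimal sketch of a fog node that aggregates raw sensor readings locally and forwards only compact summaries to the cloud. It also queues those summaries when the uplink is down. Everything here is hypothetical and for illustration only: `FogNode`, `Summary`, `FakeCloud`, and the `batch_size` parameter are not from the article or any specific fog platform, and `FakeCloud.send` simply stands in for whatever cloud client a real deployment would use.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Callable, Dict, List


@dataclass
class Summary:
    """Compact record sent to the cloud instead of every raw reading."""
    sensor_id: str
    count: int
    minimum: float
    maximum: float
    average: float


@dataclass
class FogNode:
    """Hypothetical fog node: aggregates at the edge, uploads summaries.

    `uplink` returns True on success and False when no bandwidth is available.
    """
    uplink: Callable[[Summary], bool]
    batch_size: int = 10
    _raw: Dict[str, List[float]] = field(default_factory=dict, init=False)
    _pending: List[Summary] = field(default_factory=list, init=False)

    @property
    def pending_count(self) -> int:
        return len(self._pending)

    def ingest(self, sensor_id: str, value: float) -> None:
        # Buffer raw readings locally; nothing leaves the node yet.
        readings = self._raw.setdefault(sensor_id, [])
        readings.append(value)
        if len(readings) >= self.batch_size:
            # Replace a whole batch of readings with one summary record.
            self._pending.append(Summary(sensor_id, len(readings), min(readings),
                                         max(readings), mean(readings)))
            self._raw[sensor_id] = []
        self.flush()

    def flush(self) -> int:
        # Send queued summaries in order; stop (and keep them) if the link is down.
        sent = 0
        while self._pending:
            if not self.uplink(self._pending[0]):
                break
            self._pending.pop(0)
            sent += 1
        return sent


class FakeCloud:
    """Stand-in cloud endpoint; `online` simulates link availability."""
    def __init__(self) -> None:
        self.received: List[Summary] = []
        self.online = True

    def send(self, summary: Summary) -> bool:
        if not self.online:
            return False
        self.received.append(summary)
        return True


cloud = FakeCloud()
node = FogNode(uplink=cloud.send, batch_size=10)
for i in range(20):
    node.ingest("temp-1", 20.0 + i)    # 20 raw readings become 2 summaries
cloud.online = False
for _ in range(10):
    node.ingest("temp-1", 30.0)        # summarized and queued, not lost
print(len(cloud.received), node.pending_count)  # 2 1
cloud.online = True
node.flush()
print(len(cloud.received))  # 3
```

With a batch size of 10, the cloud receives one record per ten readings, and summaries produced while offline are delivered once the link returns. A real fog deployment would choose its own aggregation window and persistence strategy.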

Disadvantages:

• It raises wireless security issues and privacy concerns.

• It raises authentication issues and concerns about trusted certificates.

Fog computing helps in building smart, high-tech cities and buildings, vehicle networks, and software-defined networks (SDN).

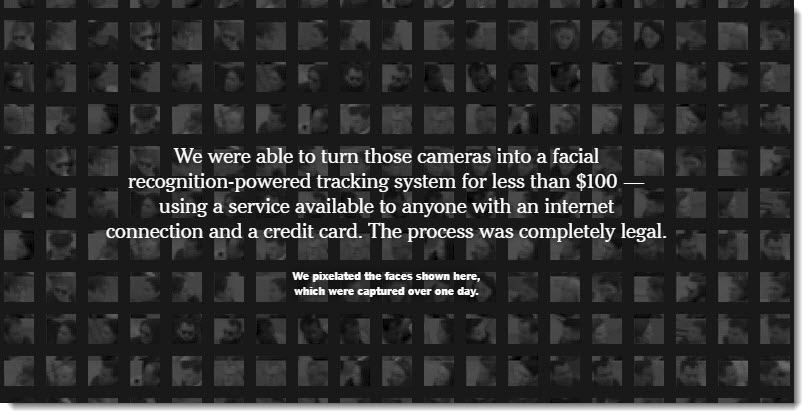

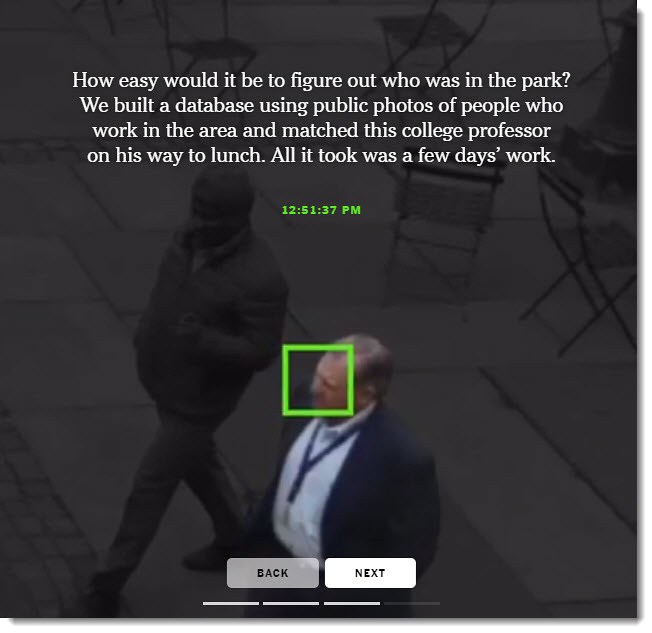

Smart building complexity is creating dangerous new hacking opportunities — from techradar.com by Ian Heritage

Complex IoT environments can spell bad news for IT professionals

How 5G will fast-track the internet of things — from inkstonenews.com by Zheping Huang

Excerpt:

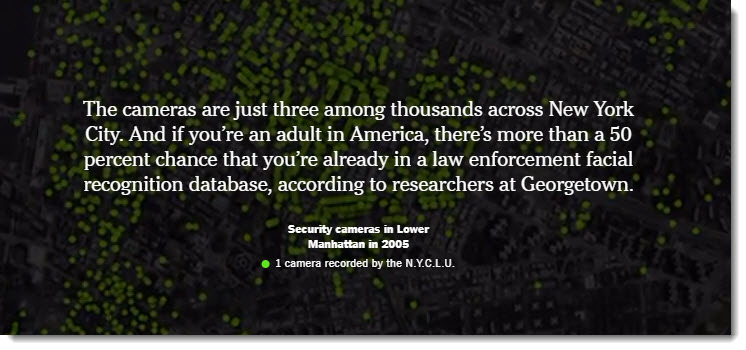

In Hangzhou, police officers are notified of major car accidents soon after they happen, traffic lights automatically adjust to changes in the volume of vehicles on the road and, in emergencies, fire trucks and ambulances are not stopped by a single red light until they arrive at the scene.

The city in eastern China’s Zhejiang province is one of the country’s major tech hubs. Its smart infrastructure powers the City Brain project, a cloud computing and AI-driven urban traffic-management system. It covers a total area of 162 square miles – that’s seven times the size of Manhattan.

When 5G mobile services start to roll out worldwide next year, smart cities such as Hangzhou will get even smarter as next-generation wireless technology helps industries realize the full potential of the internet of things (IoT).

“5G, from the beginning of its infrastructure design, has the internet of things in mind,” said Cui Kai, a Beijing-based IoT analyst with research firm International Data Corporation (IDC).

The stakes are high for industries around the world, as global spending on IoT is forecast to exceed $1 trillion in 2022, up from an estimated $745 billion this year, according to IDC.

With peak data rates up to 20 times faster than 4G, 5G will serve as “the connective tissue” for IoT, autonomous cars, smart cities and new mobile applications – providing the backbone for the industrial internet, according to a Deloitte report.

How the Internet of Things (IoT) can change the face of your business — from yourtechdiet.com by Brian Curtis

What is an IoT platform?

An IoT platform is the support software that connects hardware, data networks, and access points to the other parts of a system, i.e., to end-user applications. An IoT platform also helps users automate their environment, so you can think of it as a middleware layer between the data and the user.
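One way to picture this middleware role is a small topic-based publish/subscribe hub. Devices publish readings, end-user applications and automation rules subscribe, and a rule can publish commands back to devices. The sketch below is an in-process illustration only. `MiniPlatform`, the topic names, and the 26 °C threshold are hypothetical and do not correspond to any particular commercial IoT platform's API.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List

Handler = Callable[[str, Any], None]


class MiniPlatform:
    """Hypothetical in-process IoT middleware: topic-based publish/subscribe."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)
        self.latest: Dict[str, Any] = {}  # last value seen on each topic

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: Any) -> None:
        # Record the value, then deliver it to every subscriber of the topic.
        self.latest[topic] = payload
        for handler in list(self._subscribers[topic]):
            handler(topic, payload)


platform = MiniPlatform()

# End-user application: a dashboard that logs temperature readings.
dashboard: List[str] = []
platform.subscribe("home/temperature", lambda t, v: dashboard.append(f"{t}={v}"))

# Device side: a fan that obeys commands published by the platform.
fan_state = {"power": "off"}
platform.subscribe("home/fan/command", lambda t, v: fan_state.update(power=v))


def fan_rule(topic: str, celsius: float) -> None:
    # Automation rule: switch the fan on above an illustrative 26 °C threshold.
    platform.publish("home/fan/command", "on" if celsius > 26 else "off")


platform.subscribe("home/temperature", fan_rule)

platform.publish("home/temperature", 24)  # sensor report
platform.publish("home/temperature", 28)  # sensor report
print(dashboard)          # ['home/temperature=24', 'home/temperature=28']
print(fan_state["power"])  # on
```

Neither the sensor nor the dashboard knows about the other; the platform sits between the data and the user, which is the sense in which the excerpt calls it middleware.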

…

Here are the five reasons why your business needs IoT.

- It helps you know your customers better.

- It strengthens business operations.

- It can automate routine jobs so your people can focus on what matters most to your business.

- Supply-chain analytics: IoT can manage your inventory, saving you a great deal of time.
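As an illustration of the inventory point, the sketch below shows how stock counts reported by IoT shelf sensors could drive automatic reordering. It uses the common reorder-point rule of thumb (average daily demand × supplier lead time + safety stock). That rule is a standard textbook heuristic, not something the article specifies, and `Item`, `InventoryMonitor`, and the example figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Item:
    sku: str
    daily_demand: float    # average units sold per day
    lead_time_days: float  # supplier delivery time
    safety_stock: int      # buffer against demand spikes
    order_quantity: int    # units per purchase order

    @property
    def reorder_point(self) -> float:
        # Reorder-point rule: expected demand during lead time plus safety stock.
        return self.daily_demand * self.lead_time_days + self.safety_stock


class InventoryMonitor:
    """Hypothetical consumer of IoT shelf-sensor stock readings."""

    def __init__(self, items: List[Item]) -> None:
        self.items: Dict[str, Item] = {item.sku: item for item in items}
        self.open_orders: Dict[str, int] = {}

    def on_stock_reading(self, sku: str, units_on_hand: int) -> None:
        item = self.items[sku]
        # Raise at most one purchase order per SKU until it is received.
        if units_on_hand <= item.reorder_point and sku not in self.open_orders:
            self.open_orders[sku] = item.order_quantity

    def receive(self, sku: str) -> None:
        self.open_orders.pop(sku, None)


monitor = InventoryMonitor([
    Item("WIDGET-1", daily_demand=40, lead_time_days=3,
         safety_stock=30, order_quantity=200),
])
for reading in (300, 180, 150, 140):  # successive sensor reports
    monitor.on_stock_reading("WIDGET-1", reading)
print(monitor.open_orders)  # {'WIDGET-1': 200}
```

Here the reorder point is 40 × 3 + 30 = 150 units, so the reading of 150 triggers a single order and the later reading of 140 does not create a duplicate.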