Shares of two big online education stocks tank more than 10% as students use ChatGPT — from cnbc.com by Michelle Fox; via Robert Gibson on LinkedIn

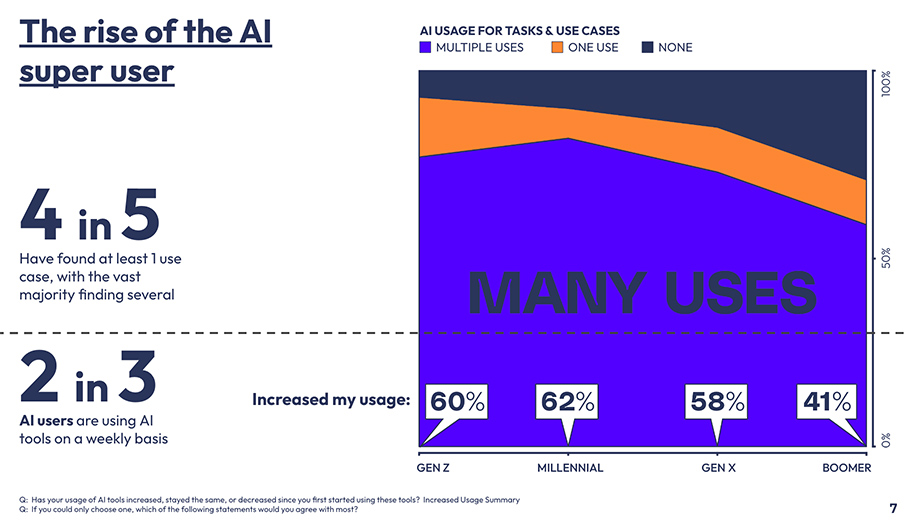

The rapid rise of artificial intelligence appears to be taking a toll on the shares of online education companies Chegg and Coursera.

Both stocks sank by more than 10% on Tuesday after issuing disappointing guidance in part because of students using AI tools such as ChatGPT from OpenAI.

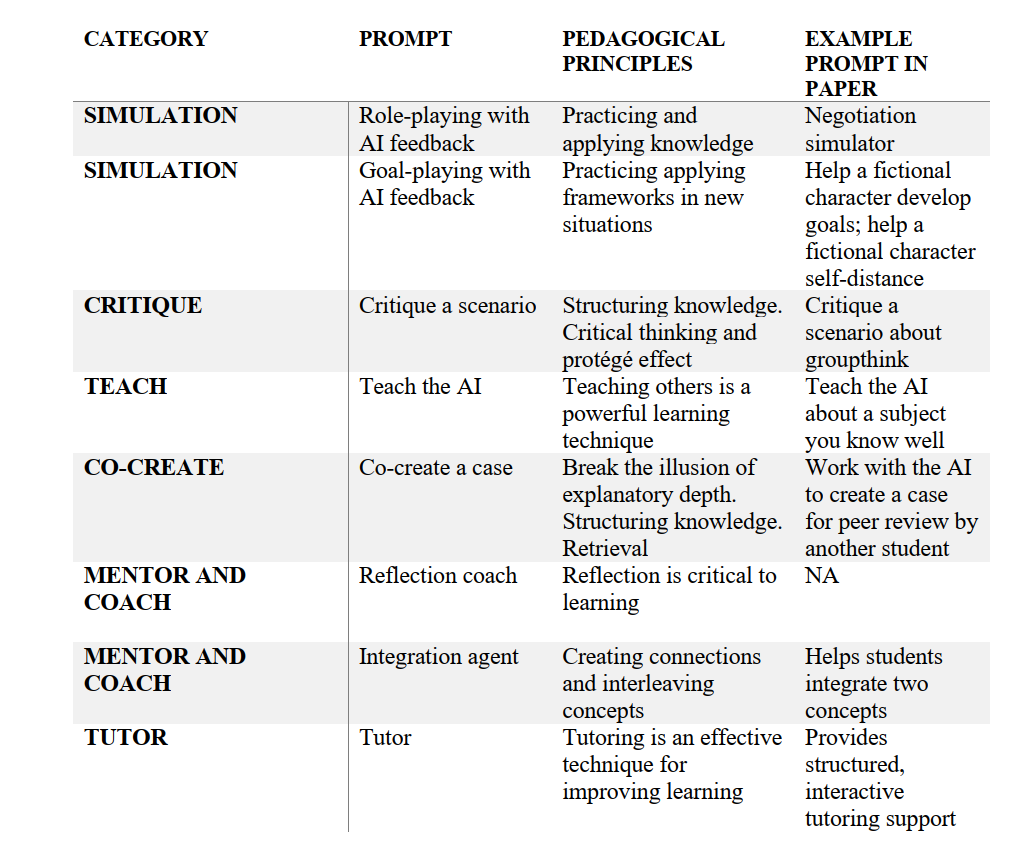

“Combining AI Literacy with Core Humanities Learning Goals: Practical, Critical, and Playful Approaches”

It was amazing to get to do an in-person keynote at @csunorthridge’s AI Pedagogy Showcase.

Sharing slides: https://t.co/LDIGAZ3ORO 1/2

— Anna Mills, annamillsoer.bsky.social, she/her (@AnnaRMills), May 3, 2024

Synthetic Video & AI Professors — from drphilippahardman.substack.com by Dr. Philippa Hardman

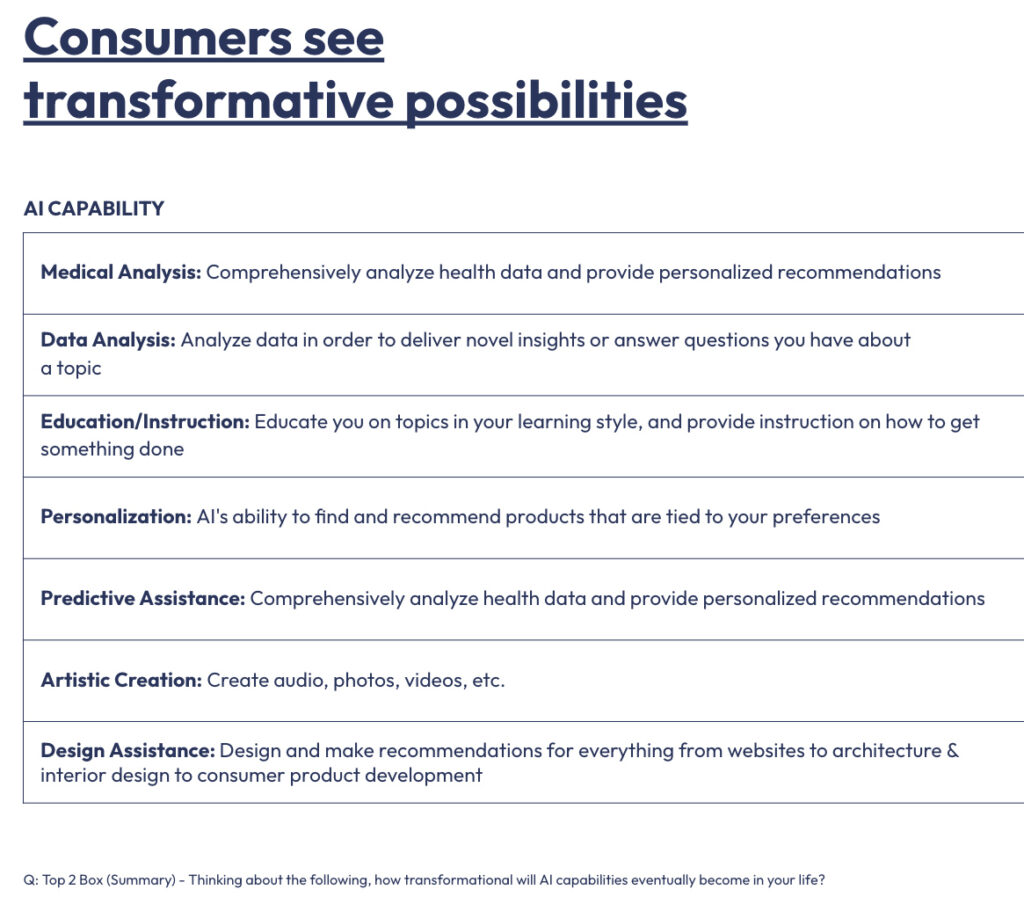

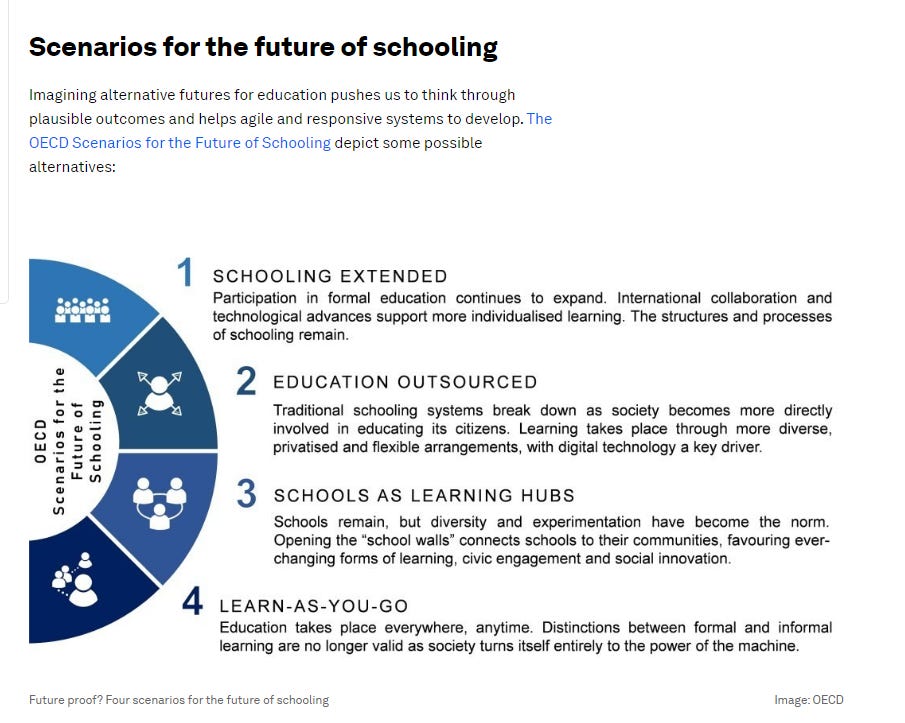

Are we witnessing the emergence of a new, post-AI model of async online learning?

TLDR: by effectively tailoring the learning experience to the learner’s comprehension levels and preferred learning modes, AI can enhance the overall learning experience, leading to increased “stickiness” and higher rates of performance in assessments.

…

TLDR: AI enables us to scale responsive, personalised “always on” feedback and support in a way that might help to solve one of the most wicked problems of online async learning – isolation and, as a result, disengagement.

…

In the last year we have also seen the rise of an unprecedented number of “always on” AI tutors, built to provide coaching and feedback how and when learners need it.

Perhaps the most well-known example is Khan Academy’s Khanmigo and its GPT sidekick Tutor Me. We’re also seeing similar tools emerge in K12 and Higher Ed where AI is being used to extend the support and feedback provided for students beyond the physical classroom.

Our Guidance on School AI Guidance document has been updated — from stefanbauschard.substack.com by Stefan Bauschard

We’ve updated the free 72-page document we wrote to help schools design their own AI guidance policies.

There are a few key updates.

- Inclusion of Oklahoma and significant updates from North Carolina and Washington.

- More specifics on implementation — thanks NC and WA!

- A bit more on instructional redesign. Thanks to NC for getting this party started!

Creating a Culture Around AI: Thoughts and Decision-Making — from er.educause.edu by Courtney Plotts and Lorna Gonzalez

Given the potential ramifications of artificial intelligence (AI) diffusion on matters of diversity, equity, inclusion, and accessibility, now is the time for higher education institutions to adopt culturally aware, analytical decision-making processes, policies, and practices around AI tools selection and use.
