Getting (and Keeping) Early Learners’ Attention — from edutopia.org by Heather Sanderell

These ideas for lesson hooks—like using songs, video clips, and picture walks—can motivate young students to focus on learning.

How do you grasp and maintain the attention of a room full of wide-eyed students with varying interests and abilities? Do you use visuals and games or interactive activities? Do you use art and sports and music or sounds? The answer is yes, to all!

When trying to keep the attention of your learners, it’s important to stimulate their senses and pique their diverse interests. Educational theorist and researcher Robert Gagné devised his nine events of instructional design, which include grabbing learners’ attention with a lesson hook. This is done first to set the tone for the remainder of the lesson.

3 Ways to Help Students Overcome the Forgetting Curve — from edutopia.org by Cathleen Beachboard

Our brains are wired to forget things unless we take active steps to remember. Here’s how you can help students hold on to what they learn.

You teach a lesson that lights up the room. Students are nodding and hands are flying up, and afterward you walk out thinking, “They got it. They really got it.”

And then, the next week, you ask a simple review question—and the room falls silent.

If that situation has ever made you question your ability to teach, take heart: You’re not failing, you’re simply facing the forgetting curve. Understanding why students forget—and how we can help them remember—can transform not just our lessons but our students’ futures.

The good news? You don’t have to overhaul your curriculum to beat the forgetting curve. You just need three small, powerful shifts in how you teach.

From DSC:

Along these same lines, also see:

7 Nature Experiments to Spark Student Curiosity — from edutopia.org by Donna Phillips

Encourage your students to ask questions about and explore the world around them with these hands-on lessons.

Children are natural scientists—they ask big questions, notice tiny details, and learn best through hands-on exploration. That’s why nature experiments are a classroom staple for me. From growing seeds to using the sun’s energy, students don’t just learn science, they experience it. Here are my favorite go-to nature experiments that spark curiosity.

Agentic AI use cases in the legal industry — from legal.thomsonreuters.com

What legal professionals need to know now with the rise of agentic AI

While GenAI can create documents or answer questions, agentic AI takes intelligence a step further by planning how to get multi-step work done, including tasks such as consuming information, applying logic, crafting arguments, and then completing them. This leaves legal teams more time for nuanced decision-making, creative strategy, and relationship-building with clients—work that machines can’t do.

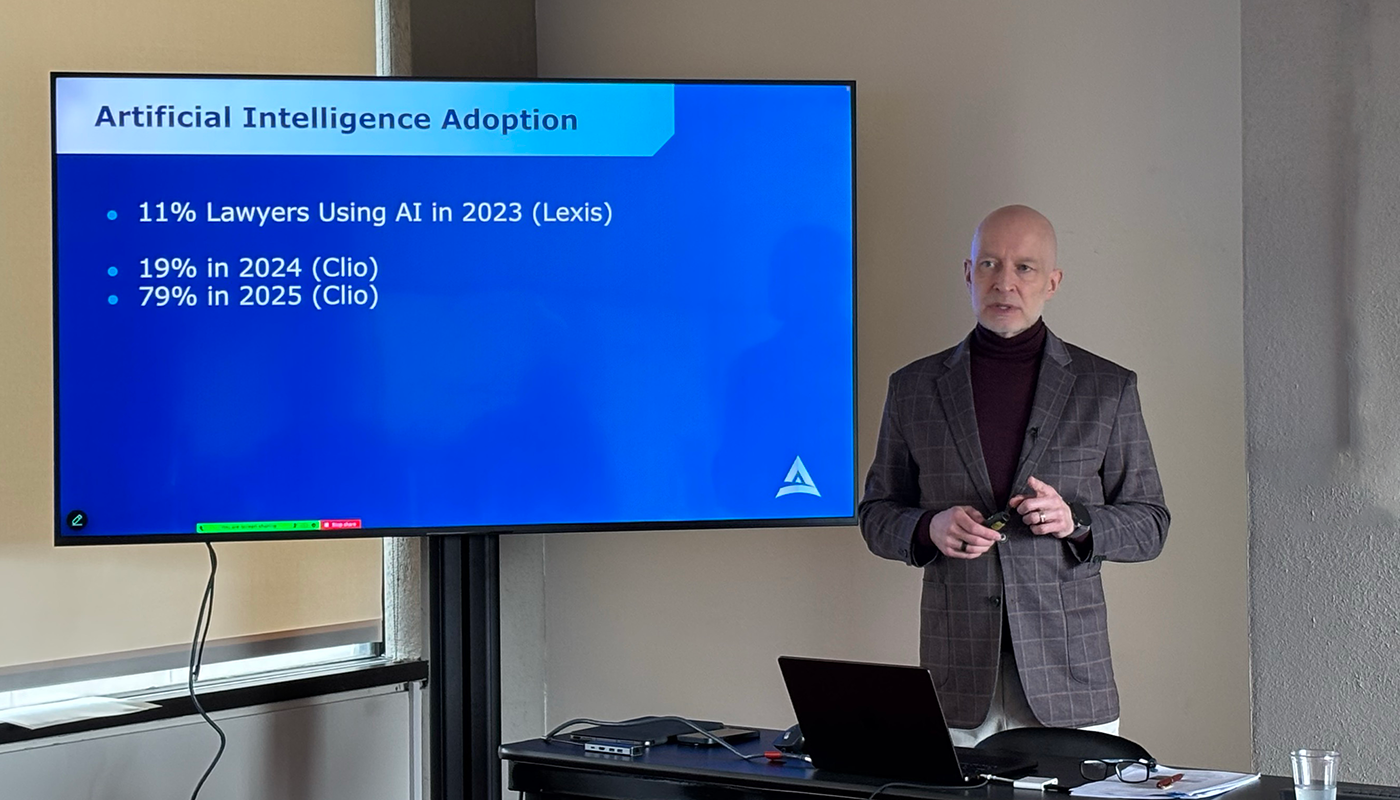

The AI Legal Landscape in 2025: Beyond the Hype — from akerman.com by Melissa C. Koch

What we’re witnessing is a profession in transition where specific tasks are being augmented or automated while new skills and roles emerge.

The data tells an interesting story: approximately 79% of law firms have integrated AI tools into their workflows, yet only a fraction have truly transformed their operations. Most implementations focus on pattern recognition tasks such as document review, legal research, contract analysis. These implementations aren’t replacing lawyers; they’re redirecting attention to higher-value work.

This technological shift doesn’t happen in isolation. It’s occurring amid client pressure for efficiency, competition from alternative providers, and the expectations of a new generation of lawyers who have never known a world without AI assistance.

LexisNexis and Harvey team up to revolutionize legal research with artificial intelligence — from abajournal.com by Danielle Braff

Lawyers using the Harvey artificial intelligence platform will soon be able to tap into LexisNexis’ vast legal research capabilities.

Thanks to a new partnership announced Wednesday, Harvey users will be able to ask legal questions and receive fast, citation-backed answers powered by LexisNexis case law, statutes and Shepard’s Citations, streamlining everything from basic research to complex motions. According to a press release, generated responses to user queries will be grounded in LexisNexis’ proprietary knowledge graphs and citation tools—making them more trustworthy for use in court or client work.

10 Legal Tech Companies to Know — from builtin.com

These companies are using AI, automation and analytics to transform how legal work gets done.

Four months after a $3B valuation, Harvey AI grows to $5B — from techcrunch.com by Marina Temkin

Harvey AI, a startup that provides automation for legal work, has raised $300 million in Series E funding at a $5 billion valuation, the company told Fortune. The round was co-led by Kleiner Perkins and Coatue, with participation from existing investors, including Conviction, Elad Gil, OpenAI Startup Fund, and Sequoia.

The billable time revolution — from jordanfurlong.substack.com by Jordan Furlong

Gen AI will bring an end to the era when lawyers’ value hinged on performing billable work. Grab the coming opportunity to re-prioritize your daily activities and redefine your professional purpose.

Because of Generative AI, lawyers will perform fewer “billable” tasks in future; but why is that a bad thing? Why not devote that incoming “freed-up” time to operating, upgrading, and flourishing your law practice? Because this is what you do now: You run a legal business. You deliver good outcomes, good experiences, and good relationships to clients. Humans do some of the work and machines do some of the work and the distinction that matters is not billable/non-billable, it’s which type of work is best suited to which type of performer.

Thoughts on thinking — from dcurt.is by Dustin Curtis

Intellectual rigor comes from the journey: the dead ends, the uncertainty, and the internal debate. Skip that, and you might still get the insight–but you’ll have lost the infrastructure for meaningful understanding. Learning by reading LLM output is cheap. Real exercise for your mind comes from building the output yourself.

The irony is that I now know more than I ever would have before AI. But I feel slightly dumber. A bit more dull. LLMs give me finished thoughts, polished and convincing, but none of the intellectual growth that comes from developing them myself.

Using AI Right Now: A Quick Guide — from oneusefulthing.org by Ethan Mollick

Which AIs to use, and how to use them

Every few months I put together a guide on which AI system to use. Since I last wrote my guide, however, there has been a subtle but important shift in how the major AI products work. Increasingly, it isn’t about the best model, it is about the best overall system for most people. The good news is that picking an AI is easier than ever and you have three excellent choices. The challenge is that these systems are getting really complex to understand. I am going to try and help a bit with both.

First, the easy stuff.

Which AI to Use

For most people who want to use AI seriously, you should pick one of three systems: Claude from Anthropic, Google’s Gemini, and OpenAI’s ChatGPT.

Also see:

Student Voice, Socratic AI, and the Art of Weaving a Quote — from elmartinsen.substack.com by Eric Lars Martinsen

How a custom bot helps students turn source quotes into personal insight—and share it with others

This summer, I tried something new in my fully online, asynchronous college writing course. These classes have no Zoom sessions. No in-person check-ins. Just students, Canvas, and a lot of thoughtful design behind the scenes.

One activity I created was called QuoteWeaver—a PlayLab bot that helps students do more than just insert a quote into their writing.

It’s a structured, reflective activity that mimics something closer to an in-person 1:1 conference or a small group quote workshop—but in an asynchronous format, available anytime. In other words, it’s using AI not to speed students up, but to slow them down.

…

The bot begins with a single quote that the student has found through their own research. From there, it acts like a patient writing coach, asking open-ended, Socratic questions such as:

What made this quote stand out to you?

How would you explain it in your own words?

What assumptions or values does the author seem to hold?

How does this quote deepen your understanding of your topic?

It doesn’t move on too quickly. In fact, it often rephrases and repeats, nudging the student to go a layer deeper.

The Disappearance of the Unclear Question — from jeppestricker.substack.com by Jeppe Klitgaard Stricker

New Piece for UNESCO Education Futures

On [6/13/25], UNESCO published a piece I co-authored with Victoria Livingstone at Johns Hopkins University Press. It’s called The Disappearance of the Unclear Question, and it’s part of the ongoing UNESCO Education Futures series – an initiative I appreciate for its thoughtfulness and depth on questions of generative AI and the future of learning.

Our piece raises a small but important red flag. Generative AI is changing how students approach academic questions, and one unexpected side effect is that unclear questions – for centuries a trademark of deep thinking – may be beginning to disappear. Not because they lack value, but because they don’t always work well with generative AI. Quietly and unintentionally, students (and teachers) may find themselves gradually avoiding them altogether.

Of course, that would be a mistake.

We’re not arguing against using generative AI in education. Quite the opposite. But we do propose that higher education needs a two-phase mindset when working with this technology: one that recognizes what AI is good at, and one that insists on preserving the ambiguity and friction that learning actually requires to be successful.

Leveraging GenAI to Transform a Traditional Instructional Video into Engaging Short Video Lectures — from er.educause.edu by Hua Zheng

By leveraging generative artificial intelligence to convert lengthy instructional videos into micro-lectures, educators can enhance efficiency while delivering more engaging and personalized learning experiences.

This AI Model Never Stops Learning — from link.wired.com by Will Knight

Researchers at Massachusetts Institute of Technology (MIT) have now devised a way for LLMs to keep improving by tweaking their own parameters in response to useful new information.

The work is a step toward building artificial intelligence models that learn continually—a long-standing goal of the field and something that will be crucial if machines are to ever more faithfully mimic human intelligence. In the meantime, it could give us chatbots and other AI tools that are better able to incorporate new information including a user’s interests and preferences.

The MIT scheme, called Self Adapting Language Models (SEAL), involves having an LLM learn to generate its own synthetic training data and update procedure based on the input it receives.

Edu-Snippets — from scienceoflearning.substack.com by Nidhi Sachdeva and Jim Hewitt

Why knowledge matters in the age of AI; What happens to learners’ neural activity with prolonged use of LLMs for writing

Highlights:

- Offloading knowledge to Artificial Intelligence (AI) weakens memory, disrupts memory formation, and erodes the deep thinking our brains need to learn.

- Prolonged use of ChatGPT in writing lowers neural engagement, impairs memory recall, and accumulates cognitive debt that isn’t easily reversed.

The Memory Paradox: Why Our Brains Need Knowledge in an Age of AI — from papers.ssrn.com by Barbara Oakley, Michael Johnston, Kenzen Chen, Eulho Jung, and Terrence Sejnowski; via George Siemens

Abstract

In an era of generative AI and ubiquitous digital tools, human memory faces a paradox: the more we offload knowledge to external aids, the less we exercise and develop our own cognitive capacities. This chapter offers the first neuroscience-based explanation for the observed reversal of the Flynn Effect—the recent decline in IQ scores in developed countries—linking this downturn to shifts in educational practices and the rise of cognitive offloading via AI and digital tools. Drawing on insights from neuroscience, cognitive psychology, and learning theory, we explain how underuse of the brain’s declarative and procedural memory systems undermines reasoning, impedes learning, and diminishes productivity. We critique contemporary pedagogical models that downplay memorization and basic knowledge, showing how these trends erode long-term fluency and mental flexibility. Finally, we outline policy implications for education, workforce development, and the responsible integration of AI, advocating strategies that harness technology as a complement to – rather than a replacement for – robust human knowledge.

Keywords

cognitive offloading, memory, neuroscience of learning, declarative memory, procedural memory, generative AI, Flynn Effect, education reform, schemata, digital tools, cognitive load, cognitive architecture, reinforcement learning, basal ganglia, working memory, retrieval practice, schema theory, manifolds

The AI-enhanced learning ecosystem: A case study in collaborative innovation — from chieflearningofficer.com by Kevin Jennings

How artificial intelligence can serve as a tool and collaborative partner in reimagining content development and management.

Learning and development professionals face unprecedented challenges in today’s rapidly evolving business landscape. According to LinkedIn’s 2025 Workplace Learning Report, 67 percent of L&D professionals report being “maxed out” on capacity, while 66 percent have experienced budget reductions in the past year.

Despite these constraints, 87 percent agree their organizations need to develop employees faster to keep pace with business demands. These statistics paint a clear picture of the pressure L&D teams face: do more, with less, faster.

This article explores how one L&D leader’s strategic partnership with artificial intelligence transformed these persistent challenges into opportunities, creating a responsive learning ecosystem that addresses the modern demands of rapid product evolution and diverse audience needs. With 71 percent of L&D professionals now identifying AI as a high or very high priority for their learning strategy, this case study demonstrates how AI can serve not merely as a tool but as a collaborative partner in reimagining content development and management.

How we use GenAI and AR to improve students’ design skills — from timeshighereducation.com by Antonio Juarez, Lesly Pliego and Jordi Rábago (professors of architecture at Monterrey Institute of Technology in Mexico); Tomas Pachajoa (professor of architecture at El Bosque University in Colombia); and Carlos Hinrichsen and Marietta Castro (educators at San Sebastián University in Chile)

Guidance on using generative AI and augmented reality to enhance student creativity, spatial awareness and interdisciplinary collaboration

Blend traditional skills development with AI use

For subjects that require students to develop drawing and modelling skills, have students create initial design sketches or models manually to ensure they practise these skills. Then, introduce GenAI tools such as Midjourney, Leonardo AI and ChatGPT to help students explore new ideas based on their original concepts. Using AI at this stage broadens their creative horizons and introduces innovative perspectives, which are crucial in a rapidly evolving creative industry.

Provide step-by-step tutorials, including both written guides and video demonstrations, to illustrate how initial sketches can be effectively translated into AI-generated concepts. Offer example prompts to demonstrate diverse design possibilities and help students build confidence using GenAI.

Integrating generative AI and AR consistently enhanced student engagement, creativity and spatial understanding on our course.

How Texas is Preparing Higher Education for AI — from the74million.org by Kate McGee

TX colleges are thinking about how to prepare students for a changing workforce and an already overburdened faculty for new challenges in classrooms.

“It doesn’t matter if you enter the health industry, banking, oil and gas, or national security enterprises like we have here in San Antonio,” Eighmy told The Texas Tribune. “Everybody’s asking for competency around AI.”

It’s one of the reasons the public university, which serves 34,000 students, announced earlier this year that it is creating a new college dedicated to AI, cyber security, computing and data science. The new college, which is still in the planning phase, would be one of the first of its kind in the country. UTSA wants to launch the new college by fall 2025.

But many state higher education leaders are thinking beyond that. As AI becomes a part of everyday life in new, unpredictable ways, universities across Texas and the country are also starting to consider how to ensure faculty are keeping up with the new technology and students are ready to use it when they enter the workforce.

In the Room Where It Happens: Generative AI Policy Creation in Higher Education — from er.educause.edu by Esther Brandon, Lance Eaton, Dana Gavin, and Allison Papini

To develop a robust policy for generative artificial intelligence use in higher education, institutional leaders must first create “a room” where diverse perspectives are welcome and included in the process.

Q&A: Artificial Intelligence in Education and What Lies Ahead — from usnews.com by Sarah Wood

Research indicates that AI is becoming an essential skill to learn for students to succeed in the workplace.

Q: How do you expect to see AI embraced more in the future in college and the workplace?

I do believe it’s going to become a permanent fixture for multiple reasons. I think the national security imperative associated with AI as a result of competing against other nations is going to drive a lot of energy and support for AI education. We also see shifts across every field and discipline regarding the usage of AI beyond college. We see this in a broad array of fields, including health care and the field of law. I think it’s here to stay and I think that means we’re going to see AI literacy being taught at most colleges and universities, and more faculty leveraging AI to help improve the quality of their instruction. I feel like we’re just at the beginning of a transition. In fact, I often describe our current moment as the ‘Ask Jeeves’ phase of the growth of AI. There’s a lot of change still ahead of us. AI, for better or worse, it’s here to stay.

AI-Generated Podcasts Outperform Textbooks in Landmark Education Study — from linkedin.com by David Borish

A new study from Drexel University and Google has demonstrated that AI-generated educational podcasts can significantly enhance both student engagement and learning outcomes compared to traditional textbooks. The research, involving 180 college students across the United States, represents one of the first systematic investigations into how artificial intelligence can transform educational content delivery in real-time.

What can we do about generative AI in our teaching? — from linkedin.com by Kristina Peterson

So what can we do?

- Interrogate the Process: We can ask ourselves if we built in enough checkpoints. Steps that can’t be faked. Things like quick writes, question floods, in-person feedback, revision logs.

- Reframe AI: We can let students use AI as a partner. We can show them how to prompt better, revise harder, and build from it rather than submit it. Show them the difference between using a tool and being used by one.

- Design Assignments for Curiosity, Not Compliance: Even the best of our assignments need to adapt. Mine needs more checkpoints, more reflective questions along the way, more explanation of why my students made the choices they did.

Teachers Are Not OK — from 404media.co by Jason Koebler

The response from teachers and university professors was overwhelming. In my entire career, I’ve rarely gotten so many email responses to a single article, and I have never gotten so many thoughtful and comprehensive responses.

One thing is clear: teachers are not OK.

…

In addition, universities are contracting with companies like Microsoft, Adobe, and Google for digital services, and those companies are constantly pushing their AI tools. So a student might hear “don’t use generative AI” from a prof but then log on to the university’s Microsoft suite, which then suggests using Copilot to sum up readings or help draft writing. It’s inconsistent and confusing.

I am sick to my stomach as I write this because I’ve spent 20 years developing a pedagogy that’s about wrestling with big ideas through writing and discussion, and that whole project has been evaporated by for-profit corporations who built their systems on stolen work. It’s demoralizing.

NAMLE 2025 Conference

Join us for the largest professional development conference dedicated to media literacy education in the U.S. on July 11-12, 2025.

From Pre-K to Higher Education, Community Education and Libraries, the conference provides valuable resources, technology, teacher practice and pedagogy, assessments, and core concepts of media literacy education.

How To Get Hired During the AI Apocalypse — from kathleendelaski.substack.com by Kathleen deLaski

And other discussions to have with your kids on the way to college graduation

A less temporary, more existential threat to the four-year degree: AI could hollow out the entry-level job market for knowledge workers (i.e. new college grads). And if 56% of families were saying college “wasn’t worth it” in 2023 (WSJ), what will that number look like in 2026 or beyond? The one of my kids who went to college ended up working in a bike shop for a year-ish after graduation. No regrets, but it came as a shock to them that they weren’t more employable with their neuroscience degree.

A colleague provided a great example: Her son, newly graduated, went for a job interview as an entry level writer last month and he was asked, as a test, to produce a story with AI and then use that story to write a better one by himself. He would presumably be judged on his ability to prompt AI and then improve upon its product. Is that learning how to DO? I think so. It’s using AI tools to accomplish a workplace task.

Also relevant in terms of the job search, see the following gifted article:

‘We Are the Most Rejected Generation’ — from nytimes.com by David Brooks; gifted article

David talks about admissions rates for selective colleges, ultra-hard-to-get summer internships, a tough entry into student clubs, and the job market.

Things get even worse when students leave school and enter the job market. They enter what I’ve come to think of as the seventh circle of Indeed hell. Applying for jobs online is easy, so you have millions of people sending hundreds of applications each into the great miasma of the internet, and God knows which impersonal algorithm is reading them. I keep hearing and reading stories about young people who applied to 400 jobs and got rejected by all of them.

It seems we’ve created a vast multilayered system that evaluates the worth of millions of young adults and, most of the time, tells them they are not up to snuff.

Many administrators and faculty members I’ve spoken to are mystified that students would create such an unforgiving set of status competitions. But the world of competitive exclusion is the world they know, so of course they are going to replicate it.

And in this column I’m not even trying to cover the rejections experienced by the 94 percent of American students who don’t go to elite schools and don’t apply for internships at Goldman Sachs. By middle school, the system has told them that because they don’t do well on academic tests, they are not smart, not winners. That’s among the most brutal rejections our society has to offer.

Fiverr CEO explains alarming message to workers about AI — from iblnews.org

Fiverr CEO Micha Kaufman recently warned his employees about the impact of artificial intelligence on their jobs.

The Great Career Reinvention, and How Workers Can Keep Up — from workshift.org by Michael Rosenbaum

A wide range of roles can or will quickly be replaced with AI, including inside sales representatives, customer service representatives, junior lawyers, junior accountants, and physicians whose focus is diagnosis.

Behind the Curtain: A white-collar bloodbath — from axios.com by Jim VandeHei and Mike Allen

Dario Amodei — CEO of Anthropic, one of the world’s most powerful creators of artificial intelligence — has a blunt, scary warning for the U.S. government and all of us:

- AI could wipe out half of all entry-level white-collar jobs — and spike unemployment to 10-20% in the next one to five years, Amodei told us in an interview from his San Francisco office.

- Amodei said AI companies and government need to stop “sugar-coating” what’s coming: the possible mass elimination of jobs across technology, finance, law, consulting and other white-collar professions, especially entry-level gigs.

Why it matters: Amodei, 42, who’s building the very technology he predicts could reorder society overnight, said he’s speaking out in hopes of jarring government and fellow AI companies into preparing — and protecting — the nation.

Skilling Up for AI Transformation — from learningguild.com by Lauren Milstid and Megan Torrance

Lately, I’ve been in a lot of conversations—some casual, some strategy-deep—about what it takes to skill up teams for AI. One pattern keeps emerging: The organizations getting the most out of generative AI are the ones doing the most to support their people. They’re not just training on a single tool. They’re building the capacity to work with AI as a class of technology.

So let’s talk about that. Not the hype, but the real work of helping humans thrive in an AI-enabled workplace.

If Leadership Training Isn’t Applied, It Hasn’t Happened — from learningguild.com by Tim Samuels

L&D leadership training sessions often “feel” successful. A program is designed, a workshop is delivered, and employees leave feeling informed and engaged. But if that training isn’t applied in the workplace, did it actually happen? If we focus entirely on the “learning” but not the “development,” we’re wasting huge amounts of time and money. So let’s take a look at the current situation first.

The reality is stark; according to Harvard Business Review:

- Only 12% of employees apply new skills learned in L&D programs

- Just 25% believe their training measurably improved performance

- We forget 75% of what we learn within six days unless we use it
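As a rough illustration of the last figure, the sketch below assumes a simple exponential retention model, R(t) = exp(-t / S), which is a common simplification of the forgetting curve and is not specified by the article. The function names are hypothetical, and the model is calibrated only to the single "75% forgotten within six days" data point quoted above.

```python
import math

def stability_from_point(days: float, retained: float) -> float:
    """Solve R(t) = exp(-t / S) for S, given one (days, retained) observation."""
    return -days / math.log(retained)

def retention(days: float, stability: float) -> float:
    """Fraction retained after `days` under the assumed exponential model."""
    return math.exp(-days / stability)

# Assumption: forgetting 75% in six days means 25% is retained at day 6.
S = stability_from_point(6, 0.25)

for day in (1, 2, 6, 14):
    print(f"day {day:>2}: about {retention(day, S):.0%} retained")
```

Under this assumed model, most of the loss happens in the first few days, which is one way to read the quoted point that training needs to be applied soon after it is delivered.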

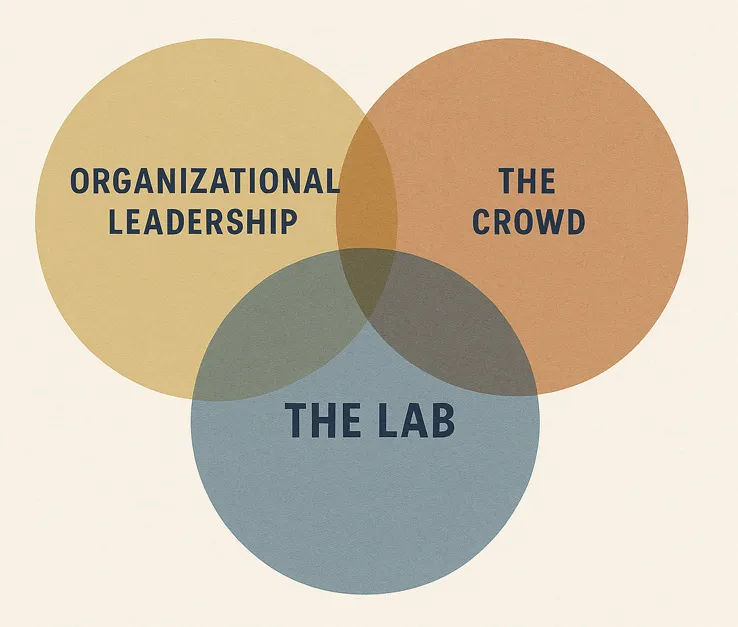

Making AI Work: Leadership, Lab, and Crowd — from oneusefulthing.org by Ethan Mollick

A formula for AI in companies

How do we reconcile the first three points with the final one? The answer is that AI use that boosts individual performance does not naturally translate to improving organizational performance. To get organizational gains requires organizational innovation, rethinking incentives, processes, and even the nature of work. But the muscles for organizational innovation inside companies have atrophied. For decades, companies have outsourced this to consultants or enterprise software vendors who develop generalized approaches that address the issues of many companies at once. That won’t work here, at least for a while. Nobody has special information about how to best use AI at your company, or a playbook for how to integrate it into your organization.

Galileo Learn™ – A Revolutionary Approach To Corporate Learning — from joshbersin.com

Today we are excited to launch Galileo Learn™, a revolutionary new platform for corporate learning and professional development.

…

How do we leverage AI to revolutionize this model, doing away with the dated “publishing” model of training?

The answer is Galileo Learn, a radically new and different approach to corporate training and professional development.

…

What Exactly is Galileo Learn™?

Galileo Learn is an AI-native learning platform which is tightly integrated into the Galileo agent. It takes content in any form (PDF, word, audio, video, SCORM courses, and more) and automatically (with your guidance) builds courses, assessments, learning programs, polls, exercises, simulations, and a variety of other instructional formats.

Designing an Ecosystem of Resources to Foster AI Literacy With Duri Long — from aialoe.org

Centering Public Understanding in AI Education

In a recent talk titled “Designing an Ecosystem of Resources to Foster AI Literacy,” Duri Long, Assistant Professor at Northwestern University, highlighted the growing need for accessible, engaging learning experiences that empower the public to make informed decisions about artificial intelligence. Long emphasized that as AI technologies increasingly influence everyday life, fostering public understanding is not just beneficial—it’s essential. Her work seeks to develop a framework for AI literacy across varying audiences, from middle school students to adult learners and journalists.

A Design-Driven, Multi-Context Approach

Drawing from design research, cognitive science, and the learning sciences, Long presented a range of educational tools aimed at demystifying AI. Her team has created hands-on museum exhibits, such as Data Bites, where learners build physical datasets to explore how computers learn. These interactive experiences, along with web-based tools and support resources, are part of a broader initiative to bridge AI knowledge gaps using the 4As framework: Ask, Adapt, Author, and Analyze. Central to her approach is the belief that familiar, tangible interactions and interfaces reduce intimidation and promote deeper engagement with complex AI concepts.

AI-Powered Lawyering: AI Reasoning Models, Retrieval Augmented Generation, and the Future of Legal Practice

Minnesota Legal Studies Research Paper No. 25-16; March 02, 2025; from papers.ssrn.com by:

- Daniel Schwarcz, University of Minnesota Law School

- Sam Manning, Centre for the Governance of AI

- Patrick Barry, University of Michigan Law School

- David R. Cleveland, University of Minnesota Law School

- J.J. Prescott, University of Michigan Law School

- Beverly Rich, Ogletree Deakins

Abstract

Generative AI is set to transform the legal profession, but its full impact remains uncertain. While AI models like GPT-4 improve the efficiency with which legal work can be completed, they can at times make up cases and “hallucinate” facts, thereby undermining legal judgment, particularly in complex tasks handled by skilled lawyers. This article examines two emerging AI innovations that may mitigate these lingering issues: Retrieval Augmented Generation (RAG), which grounds AI-powered analysis in legal sources, and AI reasoning models, which structure complex reasoning before generating output. We conducted the first randomized controlled trial assessing these technologies, assigning upper-level law students to complete six legal tasks using a RAG-powered legal AI tool (Vincent AI), an AI reasoning model (OpenAI’s o1-preview), or no AI. We find that both AI tools significantly enhanced legal work quality, a marked contrast with previous research examining older large language models like GPT-4. Moreover, we find that these models maintain the efficiency benefits associated with use of older AI technologies. Our findings show that AI assistance significantly boosts productivity in five out of six tested legal tasks, with Vincent yielding statistically significant gains of approximately 38% to 115% and o1-preview increasing productivity by 34% to 140%, with particularly strong effects in complex tasks like drafting persuasive letters and analyzing complaints. Notably, o1-preview improved the analytical depth of participants’ work product but resulted in some hallucinations, whereas Vincent AI-aided participants produced roughly the same amount of hallucinations as participants who did not use AI at all. These findings suggest that integrating domain-specific RAG capabilities with reasoning models could yield synergistic improvements, shaping the next generation of AI-powered legal tools and the future of lawyering more generally.
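To make the RAG idea in the abstract a little more concrete, here is a toy sketch of the "retrieve, then ground the prompt" pattern. It is not how Vincent AI or any tool named in the paper works: simple word-overlap scoring stands in for a real retriever, and the source snippets, function names, and prompt wording are all invented for illustration.

```python
import re

# Hypothetical mini-corpus; a real tool would index actual case law and statutes.
SOURCES = {
    "Statute A": "A written contract for the sale of land must be signed by the party to be charged.",
    "Case B": "The court held that an email exchange can satisfy the signature requirement.",
    "Case C": "Damages for breach of lease are limited to unpaid rent after mitigation.",
}

def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(question: str, k: int = 2) -> list[str]:
    """Return the names of the k sources sharing the most words with the question."""
    q = tokenize(question)
    ranked = sorted(
        SOURCES,
        key=lambda name: len(q & tokenize(SOURCES[name])),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(question: str, k: int = 2) -> str:
    """Assemble a prompt that restricts the model to the retrieved sources."""
    context = "\n".join(f"[{name}] {SOURCES[name]}" for name in retrieve(question, k))
    return (
        "Answer using only the sources below and cite them by name.\n\n"
        f"{context}\n\nQuestion: {question}"
    )

question = "Does an email count as a signed contract for the sale of land?"
print(build_prompt(question))
```

The point of the pattern is that the model is asked to answer from retrieved text rather than from memory alone, which is the grounding the abstract credits for the lower hallucination rate.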

Guest post: How technological innovation can boost growth — from legaltechnology.com by Caroline Hill

One key change is the growing adoption of technology within legal service providers, and this is transforming the way firms operate and deliver value to clients.

The legal services sector’s digital transformation is gaining momentum, driven by both client expectations and the potential for operational efficiency. With the right support, legal firms can innovate through tech adoption and remain competitive to deliver strong client outcomes and long-term growth.

AI Can Do Many Tasks for Lawyers – But Be Careful — from nysba.org by Rebecca Melnitsky

Artificial intelligence can perform several tasks to aid lawyers and save time. But lawyers must be cautious when using this new technology, lest they break confidentiality or violate ethical standards.

The New York State Bar Association hosted a hybrid program discussing AI’s potential and its pitfalls for the legal profession. More than 300 people watched the livestream.

For that reason, Unger suggests using legal AI tools, like LexisNexis AI, Westlaw Edge, and vLex Fastcase, for legal research instead of general generative AI tools. While legal-specific tools still hallucinate, they hallucinate much less. A legal tool will hallucinate 10% to 20% of the time, while a tool like ChatGPT will hallucinate 50% to 80%.

Fresh Voices on Legal Tech with Nikki Shaver — from legaltalknetwork.com by Dennis Kennedy, Tom Mighell, and Nikki Shaver

Determining which legal technology is best for your law firm can seem like a daunting task, so Legaltech Hub does the hard work for you! In another edition of Fresh Voices, Dennis and Tom talk with Nikki Shaver, CEO at Legaltech Hub, about her in-depth knowledge of technology and AI trends. Nikki shares what effective tech strategies should look like for attorneys and recommends innovative tools for maintaining best practices in modern law firms. Learn more at legaltechnologyhub.com.

AI for in-house legal: 2025 predictions — from deloitte.com

Our expectations for AI engagement and adoption in the legal market over the coming year.

AI will continue to transform in-house legal departments in 2025

As we enter 2025, over two-thirds of organisations plan to increase their Generative AI (GenAI) investments, providing legal teams with significant executive support and resources to further develop these capabilities. This presents a substantial opportunity for legal departments, particularly as GenAI technology continues to advance at an impressive pace. We make five predictions for AI engagement and adoption in the legal market over the coming year and beyond.

Navigating The Fine Line: Redefining Legal Advice In The Age Of Tech With Erin Levine And Quinten Steenhuis — from abovethelaw.com by Olga V. Mack

The definition of ‘practicing law’ is outdated and increasingly irrelevant in a tech-driven world. Should the line between legal advice and legal information even exist?

Practical Takeaways for Legal Leaders

- Use Aggregated Data: Providing consumers with benchmarks (e.g., “90% of users in your position accepted similar settlements”) empowers them without giving direct legal advice.

- Train and Supervise AI Tools: AI works best when it’s trained on reliable, localized data and supervised by legal professionals.

- Partner with Courts: As Quinten pointed out, tools built in collaboration with courts often avoid UPL pitfalls. They’re also more likely to gain the trust of both regulators and consumers.

- Embrace Transparency: Clear disclaimers like “This is not legal advice” go a long way in building consumer trust and meeting ethical standards.

I’m a LinkedIn Executive. I See the Bottom Rung of the Career Ladder Breaking. — from nytimes.com by Aneesh Raman; this is a gifted article

There are growing signs that artificial intelligence poses a real threat to a substantial number of the jobs that normally serve as the first step for each new generation of young workers. Uncertainty around tariffs and global trade is likely to only accelerate that pressure, just as millions of 2025 graduates enter the work force.

…

Breaking first is the bottom rung of the career ladder. In tech, advanced coding tools are creeping into the tasks of writing simple code and debugging — the ways junior developers gain experience. In law firms, junior paralegals and first-year associates who once cut their teeth on document review are handing weeks of work over to A.I. tools to complete in a matter of hours. And across retailers, A.I. chatbots and automated customer service tools are taking on duties once assigned to young associates.

‘What I learned when students walked out of my AI class’ — from timeshighereducation.com by Chris Hogg

Chris Hogg found the question of using AI to create art troubled his students deeply. Here’s how the moment led to deeper understanding for both student and educator

Teaching AI can be as thrilling as it is challenging. This became clear one day when three students walked out of my class, visibly upset. They later explained their frustration: after spending years learning their creative skills, they were disheartened to see AI effortlessly outperform them in the blink of an eye.

This moment stuck with me – not because it was unexpected, but because it encapsulates the paradoxical relationship we all seem to have with AI. As both an educator and a creative, I find myself asking: how do we engage with this powerful tool without losing ourselves in the process? This is the story of how I turned moments of resistance into opportunities for deeper understanding.

In the AI era, how do we battle cognitive laziness in students? — from timeshighereducation.com by Sean McMinn

With the latest AI technology now able to handle complex problem-solving processes, will students risk losing their own cognitive engagement? Metacognitive scaffolding could be the answer, writes Sean McMinn

The concern about cognitive laziness seems to be backed by Anthropic’s report that students use AI tools like Claude primarily for creating (39.8 per cent) and analysing (30.2 per cent) tasks, both considered higher-order cognitive functions according to Bloom’s Taxonomy. While these tasks align well with advanced educational objectives, they also pose a risk: students may increasingly delegate critical thinking and complex cognitive processes directly to AI, risking a reduction in their own cognitive engagement and skill development.

Make Instructional Design Fun Again with AI Agents — from drphilippahardman.substack.com by Dr. Philippa Hardman

A special edition practical guide to selecting & building AI agents for instructional design and L&D

Exactly how we do this has been less clear, but — fuelled by the rise of so-called “Agentic AI” — more and more instructional designers ask me: “What exactly can I delegate to AI agents, and how do I start?”

In this week’s post, I share my thoughts on exactly what instructional design tasks can be delegated to AI agents, and provide a step-by-step approach to building and testing your first AI agent.

Here’s a sneak peek…

AI Personality Matters: Why Claude Doesn’t Give Unsolicited Advice (And Why You Should Care) — from mikekentz.substack.com by Mike Kentz

First in a four-part series exploring the subtle yet profound differences between AI systems and their impact on human cognition

After providing Claude with several prompts of context about my creative writing project, I requested feedback on one of my novel chapters. The AI provided thoughtful analysis with pros and cons, as expected. But then I noticed what wasn’t there: the customary offer to rewrite my chapter.

…

Without Claude’s prompting, I found myself in an unexpected moment of metacognition. When faced with improvement suggestions but no offer to implement them, I had to consciously ask myself: “Do I actually want AI to rewrite this section?” The answer surprised me – no, I wanted to revise it myself, incorporating the insights while maintaining my voice and process.

The contrast was striking. With ChatGPT, accepting its offer to rewrite felt like a passive, almost innocent act – as if I were just saying “yes” to a helpful assistant. But with Claude, requesting a rewrite required deliberate action. Typing out the request felt like a more conscious surrender of creative agency.

Also re: metacognition and AI, see:

In the AI era, how do we battle cognitive laziness in students? — from timeshighereducation.com by Sean McMinn

By prompting students to articulate their cognitive processes, such tools reinforce the internalisation of self-regulated learning strategies essential for navigating AI-augmented environments.

EDUCAUSE Panel Highlights Practical Uses for AI in Higher Ed — from govtech.com by Abby Sourwine

A webinar this week featuring panelists from the education, private and nonprofit sectors attested to how institutions are applying generative artificial intelligence to advising, admissions, research and IT.

Many higher education leaders have expressed hope about the potential of artificial intelligence but uncertainty about where to implement it safely and effectively. According to a webinar Tuesday hosted by EDUCAUSE, “Unlocking AI’s Potential in Higher Education,” their answer may be “almost everywhere.”

Panelists at the event, including Kaskaskia College CIO George Kriss, Canyon GBS founder and CEO Joe Licata and Austin Laird, a senior program officer at the Gates Foundation, said generative AI can help colleges and universities meet increasing demands for personalization, timely communication and human-to-human connections throughout an institution, from advising to research to IT support.

Partly Cloudy with a Chance of Chatbots — from derekbruff.org by Derek Bruff

Here are the predictions, our votes, and some commentary:

- “By 2028, at least half of large universities will embed an AI ‘copilot’ inside their LMS that can draft content, quizzes, and rubrics on demand.” The group leaned toward yes on this one, in part because it was easy to see LMS vendors building this feature in as a default.

- “Discipline-specific ‘digital tutors’ (LLM chatbots trained on course materials) will handle at least 30% of routine student questions in gateway courses.” We leaned toward yes on this one, too, which is why some of us are exploring these tools today. We would like to be ready to use them well (or avoid their use) when they are commonly available.

- “Adaptive e-texts whose examples, difficulty, and media personalize in real time via AI will outsell static digital textbooks in the U.S. market.” We leaned toward no on this one, in part because the textbook market and what students want from textbooks has historically been slow to change. I remember offering my students a digital version of my statistics textbook maybe 6-7 years ago, and most students opted to print the whole thing out on paper like it was 1983.

- “AI text detectors will be largely abandoned as unreliable, shifting assessment design toward oral, studio, or project-based ‘AI-resilient’ tasks.” We leaned toward yes on this. I have some concerns about oral assessments (they certainly privilege some students over others), but more authentic assignments seem like what higher ed needs in the face of AI. Ted Underwood recently suggested a version of this: “projects that attempt genuinely new things, which remain hard even with AI assistance.” See his post and the replies for some good discussion on this idea.

- “AI will produce multimodal accessibility layers (live translation, alt-text, sign-language avatars) for most lecture videos without human editing.” We leaned toward yes on this one, too. This seems like another case where something will be provided by default, although my podcast transcripts are AI-generated and still need editing from me, so we’re not there quite yet.

‘We Have to Really Rethink the Purpose of Education’

The Ezra Klein Show

Description: I honestly don’t know how I should be educating my kids. A.I. has raised a lot of questions for schools. Teachers have had to adapt to the most ingenious cheating technology ever devised. But for me, the deeper question is: What should schools be teaching at all? A.I. is going to make the future look very different. How do you prepare kids for a world you can’t predict?

And if we can offload more and more tasks to generative A.I., what’s left for the human mind to do?

Rebecca Winthrop is the director of the Center for Universal Education at the Brookings Institution. She is also an author, with Jenny Anderson, of “The Disengaged Teen: Helping Kids Learn Better, Feel Better, and Live Better.” We discuss how A.I. is transforming what it means to work and be educated, and how our use of A.I. could revive — or undermine — American schools.

AI prompting secrets EXPOSED — from theneurondaily.com by Grant Harvey

Here are the three best prompting guides:

- Anthropic’s “Prompt Engineering Overview” is a free masterclass that’s worth its weight in gold. Their “constitutional AI prompting” section helped us create a content filter that actually works—unlike the one that kept flagging our coffee bean reviews as “inappropriate.” Apparently “rich body” triggered something…

- OpenAI’s “Cookbook” is like having a Michelin-star chef explain cooking—simple for beginners, but packed with pro techniques. Their JSON formatting examples saved us 3 hours of debugging last week…

- Google’s “Prompt Design Strategies” breaks down complex concepts with clear examples. Their before/after gallery showing how slight prompt tweaks improve results made us rethink everything we knew about getting quality outputs.

Pro tip: Save these guides as PDFs before they disappear behind paywalls. The best AI users keep libraries of these resources for quick reference.

My personal review of 10+ AI agents and what actually works — from aiwithallie.beehiiv.com by Allie K. Miller

The AI Agents Report Card you wish your boss gave you.

What you’ll learn in this newsletter:

- Which AI agents actually deliver value right now

- Where even the best agents still fall embarrassingly short

- The surprising truth about those sleek, impressive interfaces

- The economics of delegating to AI (and when it’s worth the premium)

- Five practical takeaways to guide your AI strategy

Employees Keep Their AI-Driven Productivity a Secret — from hrotoday.com; via The Neuron

“To address this, organizations should consider building a sustainable AI governance model, prioritizing transparency, and tackling the complex challenge of AI-fueled imposter syndrome through reinvention. Employers who fail to approach innovation with empathy and provide employees with autonomy run the risk of losing valuable staff and negatively impacting employee productivity.”

Key findings from the report include the following:

- Employees are keeping their productivity gains a secret from their employers. …

- In-office employees may still log in remotely after hours. …

- Younger workers are more likely to switch jobs to gain more flexibility.

AI discovers new math algorithms — by Zach Mink & Rowan Cheung

PLUS: Anthropic reportedly set to launch new Sonnet, Opus models

The Rundown: Google just debuted AlphaEvolve, a coding agent that harnesses Gemini and evolutionary strategies to craft algorithms for scientific and computational challenges — driving efficiency inside Google and solving historic math problems.

…

Why it matters: Yesterday, we had OpenAI’s Jakub Pachocki saying AI has shown “significant evidence” of being capable of novel insights, and today Google has taken that a step further. Math plays a role in nearly every aspect of life, and AI’s pattern and algorithmic strengths look ready to uncover a whole new world of scientific discovery.

AI agents are set to explode: Reports forecast 45% annual growth rate — from hrexecutive.com by Jill Barth

At the recent HR Executive and Future Talent Council event at Bentley University near Boston, I talked with Top 100 HR Tech Influencer Joey Price about what he’s hearing from HR leaders. Price is president and CEO of Jumpstart HR and executive analyst at Aspect43, Jumpstart HR’s HR-tech research division, and author of a valuable new book, The Power of HR: How to Make an Organizational Impact as a People Professional.

This puts him solidly at the center of HR’s most relevant conversations. Price described the curiosity he’s hearing from many HR leaders about AI agents, which have become increasingly prominent in recent months.