Stanford Law Unveils liftlab, a Groundbreaking AI Initiative Focused on the Legal Profession’s Future — from law.stanford.edu

September 15, 2025 — Stanford, CA — Stanford Law School today announced the launch of the Legal Innovation through Frontier Technology Lab, or liftlab, to explore how artificial intelligence can reshape legal services—not just to make them faster and cheaper, but better and more widely accessible.

Led by Professor Julian Nyarko and Executive Director Megan Ma, liftlab is among the first academic efforts in legal AI to unite research, prototyping, and real-time collaboration with industry. While much of AI innovation in law has so far focused on streamlining routine tasks, liftlab is taking a broader and more ambitious approach. The goal is to tap AI’s potential to fundamentally change the way legal work serves society.

The divergence of law firms from lawyers — from jordanfurlong.substack.com by Jordan Furlong

LLMs’ absorption of legal task performance will drive law firms towards commoditized service hubs while raising lawyers to unique callings as trustworthy legal guides — so long as we do this right.

Generative AI is going to weaken and potentially dissolve that relationship. Law firms will become capable of generating output that can be sold to clients with no lawyer involvement at all.

Right now, it’s possible for an ordinary person to obtain from an LLM like ChatGPT-5 the performance of a legal task — the provision of legal analysis, the production of a legal instrument, the delivery of legal advice — that previously could only be acquired from a human lawyer.

I’m not saying a person should do that. The LLM’s output might be effective and reliable, or it might prove disastrously off-base. But many people are already using LLMs in this way, and in the absence of other accessible options for legal assistance, they will continue to do so.

Why legal professionals need purpose-built agentic AI — from legal.thomsonreuters.com by Frank Schilder with Thomson Reuters Labs

Highlights

- Professional-grade agentic AI systems are architecturally distinct from consumer chatbots, utilizing domain-specific data and robust verification mechanisms to deliver the high accuracy and reliability essential for legal work, whereas consumer tools prioritize conversational flow using unvetted web data.

- True agentic AI for legal professionals offers transparent, multi-agent workflows, integrates with authoritative legal databases for verification, and applies domain-specific reasoning to understand legal nuances, unlike traditional chatbots that lack this complexity and autonomy.

- When evaluating legal AI, professionals should avoid solutions that lack workflow transparency, offer no human checkpoints for oversight, and cannot integrate with professional databases, ensuring the chosen tool enhances, rather than replaces, expert judgment.

How I Left Corporate Law to Become a Legal Tech Entrepreneur — from news.bloomberglaw.com by Adam Nguyen; behind a paywall

If you’re a lawyer wondering whether to take the leap into entrepreneurship, I understand the apprehension that comes with leaving a predictable path. Embracing the fear, uncertainty, challenges, and constant evolution integral to an entrepreneur’s journey has been worth it for me.

Lawyering In The Age Of AI: Why Artificial Intelligence Might Make Lawyers More Human — from abovethelaw.com by Lisa Lang and Joshua Horenstein

AI could rehumanize the legal profession.

AI is already adept at doing what law school trained us to do — identifying risks, spotting issues, and referencing precedent. What it’s not good at is nuance, trust, or judgment — skills that define great lawyering.

When AI handles some of the drudgery — like contract clause spotting and formatting — it gives us something precious back: time. That time forces lawyers to stop hiding behind legalese and impractical analysis. It allows — and even demands — that we communicate like leaders.

Imagine walking into a business meeting and, instead of delivering a 20-page memo, offering a single slide with a recommendation tied directly to company goals. That’s not just good lawyering; that’s leadership. And AI may be the catalyst that gets us there.

AI changes the game. When generative tools can translate clauses into plain English, the old value proposition of complexity begins to crumble. The playing field shifts — from who can analyze the most thoroughly to who can communicate the most clearly.

That’s not a threat. It’s an opportunity — one for lawyers to become better partners to the business by focusing on what matters most: sound judgment delivered in plain language.

Miro and GenAI as drivers of online student engagement — from timeshighereducation.com by Jaime Eduardo Moncada Garibay

A set of practical strategies for transforming passive online student participation into visible, measurable and purposeful engagement through the use of Miro, enhanced by GenAI

To address this challenge, I shifted my focus from requesting participation to designing it. This strategic change led me to integrate Miro, a visual digital workspace, into my classes. Miro enables real-time visualisation and co-creation of ideas, whether individually or in teams.

…

The transition from passive attendance to active engagement in online classes requires deliberate instructional design. Tools such as Miro, enhanced by GenAI, enable educators to create structured, visually rich learning environments in which participation is both expected and documented.

While technology provides templates, frames, timers and voting features, its real pedagogical value emerges through intentional facilitation, where the educator’s role shifts from delivering content to orchestrating collaborative, purposeful learning experiences.

Benchmarking Online Education with Bruce Etter and Julie Uranis — from buzzsprout.com by Derek Bruff

Here are some that stood out to me:

- In the past, it was typical for faculty to teach online courses as an “overload” of some kind, but BOnES data show that 92% of online programs feature courses taught as part of faculty members’ standard teaching responsibilities. Online teaching has become one of multiple modalities in which faculty teach regularly.

- Three-quarters of chief online officers surveyed said they plan to have a greater market share of online enrollments in the future, but only 23% said their current marketing is better than their competitors. The rising tide of online enrollments won’t lift all boats–some institutions will fare better than others.

- Staffing at online education units is growing, with the median staff size increasing from 15 last year to 20 this year. Julie pointed out that successful online education requires investment of resources. You might not need as many buildings as onsite education does, but you need people and you need technology.

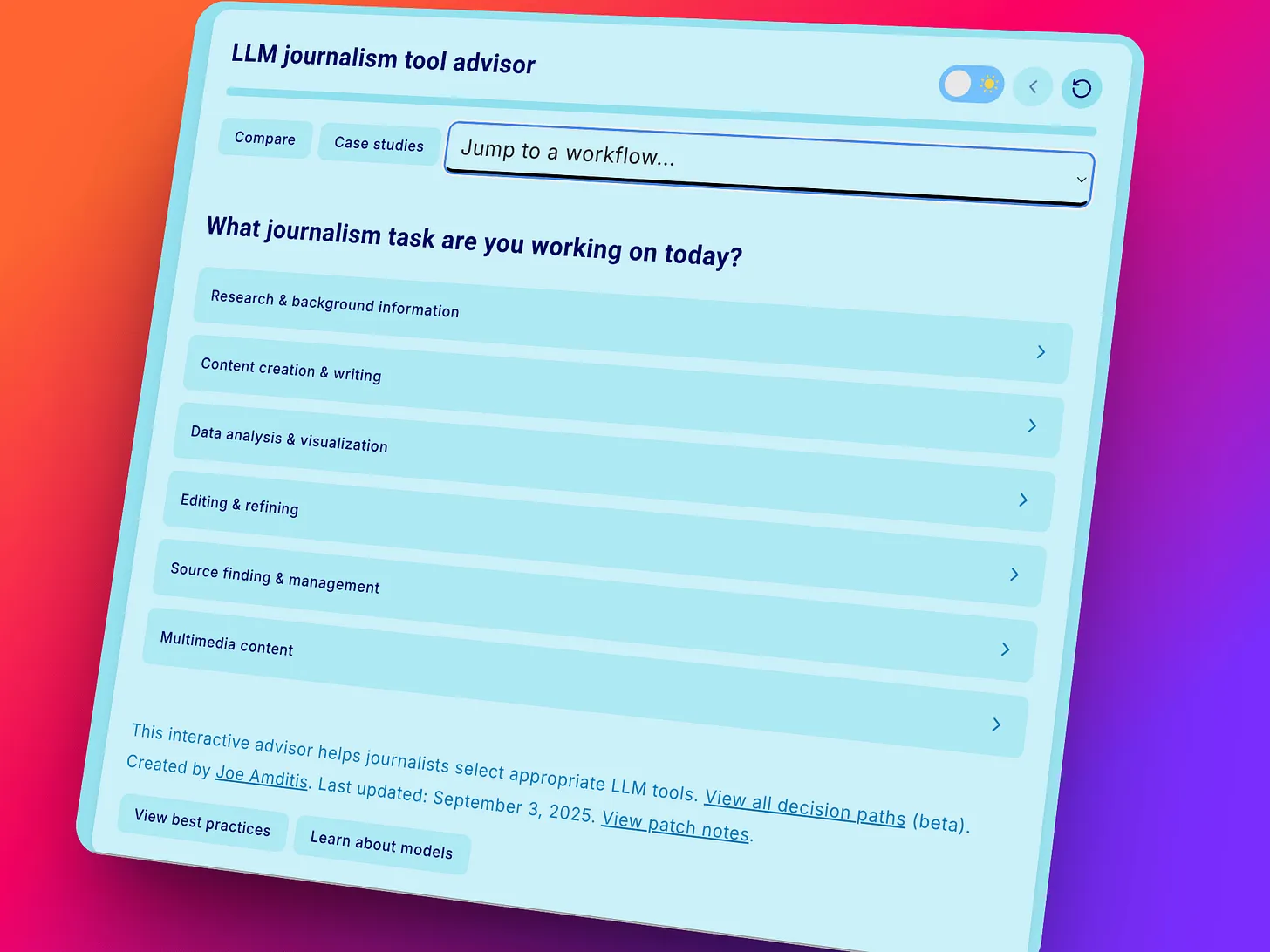

A Journalist’s Toolkit for the AI Era — from wondertools.substack.com by Jeremy Caplan and Joe Amditis

A guest expert shares his practical tools

As news organizations scramble to update their digital toolkits, I invited one of the most tech savvy journalism advisors I know to share his guidance.

In the guest post below, Joe Amditis shares a bunch of useful resources. A former CUNY student of mine, Joe now serves as associate director of operations at the Center for Cooperative Media at Montclair State University.

The 2025 Changing Landscape of Online Education (CHLOE) 10 Report — from qualitymatters.org; emphasis below from DSC

Notable findings from the 73-page report include:

- Online Interest Surges Across Student Populations: …

- Institutional Preparedness Falters Amid Rising Demand: Despite accelerating demand, institutional readiness has stagnated—or regressed—in key areas. …

- The Online Education Marketplace Is Increasingly Competitive: …

- Alternative Credentials Take Center Stage: …

- AI Integration Lacks Strategic Coordination: …

Just 28% of faculty are considered fully prepared for online course design, and 45% for teaching. Alarmingly, only 28% of institutions report having fully developed academic continuity plans for future emergency pivots to online.

Also relevant, see:

Great Expectations, Fragile Foundations — from onedtech.philhillaa.com by Glenda Morgan

Lessons about growth from the CHLOE & BOnES reports

Cultural resistance remains strong. Many [Chief Online Learning Officers] COLOs say faculty and deans still believe in-person learning is “just better,” creating headwinds even for modest online growth. As one respondent at a four-year institution with a large online presence put it:

Supportive departments [that] see the value in online may have very different levels of responsiveness compared to academic departments [that] are begrudgingly online. There is definitely a growing belief that students “should” be on-ground and are only choosing online because it’s easy/convenient. Never mind the very real and growing population of nontraditional learners who can only take online classes, and the very real and growing population of traditional-aged learners who prefer online classes; many faculty/deans take a paternalistic, “we know what’s best” approach.

…

Ultimately, what we need is not just more ambition but better ambition. Ambition rooted in a realistic understanding of institutional capacity, a shared strategic vision, investments in policy and infrastructure, and a culture that supports online learning as a core part of the academic mission, not an auxiliary one. It’s time we talked about what it really takes to grow online learning, and where ambition needs to be matched by structure.

From DSC:

Yup. Culture is at the breakfast table again…boy, those strategies taste good.

I’d like to take some of this report — like the graphic below — and share it with former faculty members and members of a couple of my past job families’ leadership. They strongly didn’t agree with us when we tried to advocate for the development of online-based learning/programs at our organizations…but we were right. We were right all along. And we were LEADING all along. No doubt about it — even if the leadership at the time said that we weren’t leading.

The cultures of those organizations hurt us at the time. But our cultivating work eventually led to the development of online programs — unfortunately, after our groups were disbanded, they had to outsource those programs to OPMs.

Arizona State University — with its dramatic growth in online-based enrollments.

I Teach Creative Writing. This Is What A.I. Is Doing to Students. — from nytimes.com by Meghan O’Rourke; this is a gifted article.

We need a coherent approach grounded in understanding how the technology works, where it is going and what it will be used for.

From DSC:

I almost feel like Meghan should write the words “this week” or “this month” after the above sentence. Whew! Things are moving fast.

For example, we’re now starting to see more agents hitting the scene — software that can DO things. But that can open up a can of worms too.

Students know the ground has shifted — and that the world outside the university expects them to shift with it. A.I. will be part of their lives regardless of whether we approve. Few issues expose the campus cultural gap as starkly as this one.

From DSC:

Universities and colleges have little choice but to integrate AI into their programs and offerings. There’s enough pressure on institutions of traditional higher education to prove their worth/value. Students and their families want solid ROIs. Students know that they are going to need AI-related skills (see the link immediately below for example), or they are going to be left out of the competitive job search process.

A relevant resource here:

- New Lightcast Report: AI Skills Command 28% Salary Premium as Demand Shifts Beyond Tech Industry — from lightcast.io; via Paul Fain

First-of-its-kind analysis reveals specific AI skills employers need most, enabling targeted workforce training strategies across all career areas

From DSC:

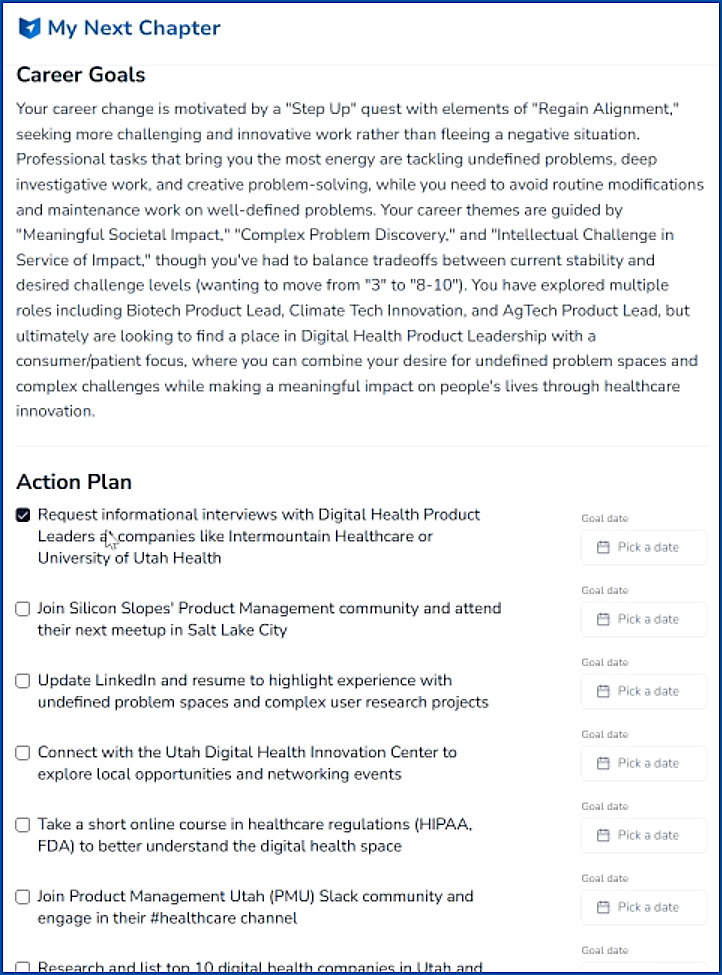

In looking at MyNextChapter.ai — THIS TYPE OF FUNCTIONALITY of an AI-based chatbot talking to you re: good fits for a future job — is the kind of thing that could work well in this type of vision/learning platform. The AI asks you relevant career-oriented questions, comes up with some potential job fits, and then gives you resources about how to gain those skills, who to talk with, organizations to join, next steps to get your foot in the door somewhere, etc.

The next gen learning platform would provide links to online-based courses, blogs, people’s names on LinkedIn, courses from L&D organizations or from institutions of higher education or from other entities/places to obtain those skills (similar to the “Action Plan” below from MyNextChapter.ai).

From DSC:

Read through the article below. It’s an excellent example of a learning ecosystem, one that has been developed and practiced by Tiago Forte.

My 4-Stage System for Learning Anything New — from fortelabs.com by Tiago Forte

- Stage 1: Immersion – Get Maximum Exposure

- Stage 2: Building – Make Something Real

- Stage 3: Structured Learning – Find Your Mentors

- Stage 4: Connection – Build Real Relationships

- The Secret Ingredient: Cultivating Play in Learning

Teach business students to write like executives — from timeshighereducation.com by José Ignacio Sordo Galarza

Many business students struggle to communicate with impact. Teach them to pitch ideas on a single page to build clarity, confidence and work-ready communication skills

Many undergraduate business students transition into the workforce equipped with communication habits that, while effective in academic settings, prove ineffective in professional environments. At university, students are trained to write for professors, not executives. This becomes problematic in the workplace where lengthy reports and academic jargon often obscure rather than clarify intent. Employers seek ideas they can absorb in seconds. This is where the one-pager – a single-page, high-impact document that helps students develop clarity of thought, concise expression and strategic communication – proves effective.

Also from Times Higher Education, see:

Is the dissertation dead? If so, what are the alternatives? — from timeshighereducation.com by Rushana Khusainova, Sarah Sholl, & Patrick Harte

Dissertation alternatives, such as capstone projects and applied group-based projects, could better prepare graduates for their future careers. Discover what these might look like

The traditional dissertation, a longstanding pillar of higher education, is facing increasing scrutiny. Concerns about its relevance to contemporary career paths, limitations in fostering practical skills and the changing nature of knowledge production in the GenAI age have fuelled discussions about its continued efficacy. So, is the dissertation dead?

The dissertation is facing a number of challenges. It can be perceived as having little relevance to career aspirations in increasingly competitive job markets. According to The Future of Jobs Report 2025 by the World Economic Forum, employers demand and indeed prioritise skills such as collaborative problem-solving in diverse and complex contexts, which a dissertation might not demonstrate.

On blogging (again) — by Martin Weller

I also pondered what functions blogging has provided for me over the years.

- Continuity – as an individual you persist across multiple organisations, roles and jobs. Although I stayed in one institution, I had many roles and the blog wasn’t associated with one specific project. Now I have left it continues.

- Holistic – you can blog about one topic, but over time I think some personality will creep in. You are not just one thing, you have a personal life, tastes, interests etc which will all feed into what you do. A blog allows this more rounded representation.

- Experimentation – there is relatively low cost and risk for much of it (this may not be the case for many people online, we need to acknowledge), so you can try things, and if they don’t work, so what? Also you can try formats that conventional outlets might not be appropriate for.

- Development – the blog has been both an intentional and unintentional vehicle for working up ideas, documenting the process and getting feedback, which have led to more substantial outputs, such as books, project proposals and papers. Most importantly though it has been the means through which I have continually developed writing.

- Connecting – particularly in those halcyon early days, it was a good way of finding others, working on ideas together, sharing something of yourself. A lot of my career related personal friendships have resulted from blogging.

- Publicity – I became at one point (the OU crisis of 2018) something of a public voice of the OU, and have often used the blog for projects such as GO-GN.

That’s not a bad return for a lil’ ol’ blog. I couldn’t say the same for academic journals.

The unexpected benefits of academic blogging | THE Campus Learn, Share, Connect

DC: I wish more faculty and staff members would blog and share their knowledge with the public. I greatly appreciate those staff and faculty members that DO blog! https://t.co/CHfHbxICcL

— Daniel S. Christian (@dchristian5) July 4, 2025

Transform Public Speaking with Yoodli: Your AI Coach — from rdene915.com by Paula Johnson

Yoodli is an AI tool designed to help users improve their public speaking skills. It analyzes your speech in real-time or after a recording and gives you feedback on things like:

- Filler words (“um,” “like,” “you know”)

- Pacing (Are you sprinting or sedating your audience?)

- Word choice and sentence complexity

- Eye contact and body language (with video)

- And yes, even your “uhhh” to actual word ratio

Yoodli gives you a transcript and a confidence score, plus suggestions that range from helpful to brutally honest. It’s basically Simon Cowell with AI ethics and a smiley face interface.

[What’s] going on with AI and education? — from theneuron.ai by Grant Harvey

With students and teachers alike using AI, schools are facing an “assessment crisis” where the line between tool and cheating has blurred, forcing a shift away from a broken knowledge economy toward a new focus on building human judgment through strategic struggle.

What to do about it: The future belongs to the “judgment economy,” where knowledge is commoditized but taste, agency, and learning velocity become the new human moats. Use the “Struggle-First” principle: wrestle with problems for 20-30 minutes before turning to AI, then use AI as a sparring partner (not a ghostwriter) to deepen understanding. The goal isn’t to avoid AI, but to strategically choose when to embrace “desirable difficulties” that build genuine expertise versus when to leverage AI for efficiency.

…

The Alpha-School Program in brief:

- Students complete core academics in just 2 hours using AI tutors, freeing up 4+ hours for life skills, passion projects, and real-world experiences.

- The school claims students learn at least 2x faster than their peers in traditional school.

- The top 20% of students show 6.5x growth. Classes score in the top 1-2% nationally across the board.

- Claims are based on NWEA’s Measures of Academic Progress (MAP) assessments… with data only available to the school. Hmm…

Austen Allred shared a story about the school, which put it on our radar.

Featured Report: Teaching for Tomorrow: Unlocking Six Weeks a Year With AI — from gallup.com

In the latest installment of Gallup and the Walton Family Foundation’s research on education, K-12 teachers reveal how AI tools are transforming their workloads, instructional quality and classroom optimism. The report finds that 60% of teachers used an AI tool during the 2024–25 school year. Weekly AI users report reclaiming nearly six hours per week — equivalent to six weeks per year — which they reinvest in more personalized instruction, deeper student feedback and better parent communication.

Despite this emerging “AI dividend,” adoption is uneven: 40% of teachers aren’t using AI at all, and only 19% report their school has a formal AI policy. Teachers with access to policies and support save significantly more time.

Educators also say AI improves their work. Most report higher-quality lesson plans, assessments and student feedback. And teachers who regularly use AI are more optimistic about its benefits for student engagement and accessibility — mirroring themes from the Voices of Gen Z: How American Youth View and Use Artificial Intelligence report, which found students hesitant but curious about AI’s classroom role. As AI tools grow more embedded in education, both teachers and students will need the training and support to use them effectively.

Also see:

- 2-Hour Learning

- What if children could crush academics in 2 hours, 2x faster?

- What if children could get back their most valuable resource, which is time?

- What if children could pursue the things they want during their afternoons and develop life skills?

Amira Learning: Teaching With The AI-Powered Reading Tool — from techlearning.com by Erik Ofgang

Amira Learning is a research-backed AI reading tutor that incorporates the science of reading into its features.

What Is Amira Learning?

Amira Learning’s system is built upon research led by Jack Mostow, a professor at Carnegie Mellon who helped pioneer AI literacy education. Amira uses Claude AI to power its AI features, but these features are different than many other AI tools on the market. Instead of focusing on chat and generative response, Amira’s key feature is its advanced speech recognition and natural language processing capabilities, which allow the app to “hear” when a student is struggling and tailor suggestions to that student’s particular mistakes.

Though it’s not meant to replace a teacher, Amira provides real-time feedback and also helps teachers pinpoint where a student is struggling. For these reasons, Amira Learning is a favorite of education scientists and advocates for science of reading-based literacy instruction. The tool currently is used by more than 4 million students worldwide and across the U.S.

‘What I learned when students walked out of my AI class’ — from timeshighereducation.com by Chris Hogg

Chris Hogg found the question of using AI to create art troubled his students deeply. Here’s how the moment led to deeper understanding for both student and educator

Teaching AI can be as thrilling as it is challenging. This became clear one day when three students walked out of my class, visibly upset. They later explained their frustration: after spending years learning their creative skills, they were disheartened to see AI effortlessly outperform them in the blink of an eye.

This moment stuck with me – not because it was unexpected, but because it encapsulates the paradoxical relationship we all seem to have with AI. As both an educator and a creative, I find myself asking: how do we engage with this powerful tool without losing ourselves in the process? This is the story of how I turned moments of resistance into opportunities for deeper understanding.

In the AI era, how do we battle cognitive laziness in students? — from timeshighereducation.com by Sean McMinn

With the latest AI technology now able to handle complex problem-solving processes, will students risk losing their own cognitive engagement? Metacognitive scaffolding could be the answer, writes Sean McMinn

The concern about cognitive laziness seems to be backed by Anthropic’s report that students use AI tools like Claude primarily for creating (39.8 per cent) and analysing (30.2 per cent) tasks, both considered higher-order cognitive functions according to Bloom’s Taxonomy. While these tasks align well with advanced educational objectives, they also pose a risk: students may increasingly delegate critical thinking and complex cognitive processes directly to AI, risking a reduction in their own cognitive engagement and skill development.

Make Instructional Design Fun Again with AI Agents — from drphilippahardman.substack.com by Dr. Philippa Hardman

A special edition practical guide to selecting & building AI agents for instructional design and L&D

Exactly how we do this has been less clear, but — fuelled by the rise of so-called “Agentic AI” — more and more instructional designers ask me: “What exactly can I delegate to AI agents, and how do I start?”

In this week’s post, I share my thoughts on exactly what instructional design tasks can be delegated to AI agents, and provide a step-by-step approach to building and testing your first AI agent.

Here’s a sneak peek…

AI Personality Matters: Why Claude Doesn’t Give Unsolicited Advice (And Why You Should Care) — from mikekentz.substack.com by Mike Kentz

First in a four-part series exploring the subtle yet profound differences between AI systems and their impact on human cognition

After providing Claude with several prompts of context about my creative writing project, I requested feedback on one of my novel chapters. The AI provided thoughtful analysis with pros and cons, as expected. But then I noticed what wasn’t there: the customary offer to rewrite my chapter.

…

Without Claude’s prompting, I found myself in an unexpected moment of metacognition. When faced with improvement suggestions but no offer to implement them, I had to consciously ask myself: “Do I actually want AI to rewrite this section?” The answer surprised me – no, I wanted to revise it myself, incorporating the insights while maintaining my voice and process.

The contrast was striking. With ChatGPT, accepting its offer to rewrite felt like a passive, almost innocent act – as if I were just saying “yes” to a helpful assistant. But with Claude, requesting a rewrite required deliberate action. Typing out the request felt like a more conscious surrender of creative agency.

Also re: metacognition and AI, see:

In the AI era, how do we battle cognitive laziness in students? — from timeshighereducation.com by Sean McMinn

By prompting students to articulate their cognitive processes, such tools reinforce the internalisation of self-regulated learning strategies essential for navigating AI-augmented environments.

EDUCAUSE Panel Highlights Practical Uses for AI in Higher Ed — from govtech.com by Abby Sourwine

A webinar this week featuring panelists from the education, private and nonprofit sectors attested to how institutions are applying generative artificial intelligence to advising, admissions, research and IT.

Many higher education leaders have expressed hope about the potential of artificial intelligence but uncertainty about where to implement it safely and effectively. According to a webinar Tuesday hosted by EDUCAUSE, “Unlocking AI’s Potential in Higher Education,” their answer may be “almost everywhere.”

Panelists at the event, including Kaskaskia College CIO George Kriss, Canyon GBS founder and CEO Joe Licata and Austin Laird, a senior program officer at the Gates Foundation, said generative AI can help colleges and universities meet increasing demands for personalization, timely communication and human-to-human connections throughout an institution, from advising to research to IT support.

Partly Cloudy with a Chance of Chatbots — from derekbruff.org by Derek Bruff

Here are the predictions, our votes, and some commentary:

- “By 2028, at least half of large universities will embed an AI ‘copilot’ inside their LMS that can draft content, quizzes, and rubrics on demand.” The group leaned toward yes on this one, in part because it was easy to see LMS vendors building this feature in as a default.

- “Discipline-specific ‘digital tutors’ (LLM chatbots trained on course materials) will handle at least 30% of routine student questions in gateway courses.” We leaned toward yes on this one, too, which is why some of us are exploring these tools today. We would like to be ready to use them well (or avoid their use) when they are commonly available.

- “Adaptive e-texts whose examples, difficulty, and media personalize in real time via AI will outsell static digital textbooks in the U.S. market.” We leaned toward no on this one, in part because the textbook market and what students want from textbooks has historically been slow to change. I remember offering my students a digital version of my statistics textbook maybe 6-7 years ago, and most students opted to print the whole thing out on paper like it was 1983.

- “AI text detectors will be largely abandoned as unreliable, shifting assessment design toward oral, studio, or project-based ‘AI-resilient’ tasks.” We leaned toward yes on this. I have some concerns about oral assessments (they certainly privilege some students over others), but more authentic assignments seem like what higher ed needs in the face of AI. Ted Underwood recently suggested a version of this: “projects that attempt genuinely new things, which remain hard even with AI assistance.” See his post and the replies for some good discussion on this idea.

- “AI will produce multimodal accessibility layers (live translation, alt-text, sign-language avatars) for most lecture videos without human editing.” We leaned toward yes on this one, too. This seems like another case where something will be provided by default, although my podcast transcripts are AI-generated and still need editing from me, so we’re not there quite yet.

‘We Have to Really Rethink the Purpose of Education’

The Ezra Klein Show

Description: I honestly don’t know how I should be educating my kids. A.I. has raised a lot of questions for schools. Teachers have had to adapt to the most ingenious cheating technology ever devised. But for me, the deeper question is: What should schools be teaching at all? A.I. is going to make the future look very different. How do you prepare kids for a world you can’t predict?

And if we can offload more and more tasks to generative A.I., what’s left for the human mind to do?

Rebecca Winthrop is the director of the Center for Universal Education at the Brookings Institution. She is also an author, with Jenny Anderson, of “The Disengaged Teen: Helping Kids Learn Better, Feel Better, and Live Better.” We discuss how A.I. is transforming what it means to work and be educated, and how our use of A.I. could revive — or undermine — American schools.

How to Use AI and Universal Design to Empower Diverse Thinkers with Susan Tanner — from legaltalknetwork.com by Zack Glaser, Stephanie Everett, and Susan Tanner

What if the key to better legal work isn’t just smarter tools but more inclusive ones? Susan Tanner, Associate Professor at the University of Louisville Brandeis School of Law, joins Zack Glaser to explore how AI and universal design can improve legal education and law firm operations. Susan shares how tools like generative AI can support neurodiverse thinkers, enhance client communication, and reduce anxiety for students and professionals alike. They also discuss the importance of inclusive design in legal tech and how law firms can better support their teams by embracing different ways of thinking to build a more accessible, future-ready practice. The conversation emphasizes the need for educators and legal professionals to adapt to the evolving landscape of AI, ensuring that they leverage its capabilities to better serve their clients and students.

Maximizing Microsoft Copilot in Your Legal Practice — from legaltalknetwork.com by Tom Mighell, Dennis Kennedy, and Ben Schorr

Copilot is a powerful tool for lawyers, but are you making the most of it within your Microsoft apps? Tom Mighell is flying solo at ABA TECHSHOW 2025 and welcomes Microsoft’s own Ben Schorr to the podcast. Ben shares expert insights into how lawyers can use Copilot’s AI assistance to work smarter, not harder. From drafting documents to analyzing spreadsheets to streamlining communication, Copilot can handle the tedious tasks so you can focus on what really matters. Ben shares numerous use cases and capabilities for attorneys and later gives a sneak peek at Copilot’s coming enhancements.

What does ‘age appropriate’ AI literacy look like in higher education? — from timeshighereducation.com by Fun Siong Lim

As AI literacy becomes an essential work skill, universities need to move beyond developing these competencies at ‘primary school’ level in their students. Here, Fun Siong Lim reflects on frameworks to support higher-order AI literacies.

Like platforms developed at other universities, Project NALA offers a front-end interface (known as the builder) for faculty to create their own learning assistant. An idea we have is to open the builder up to students to allow them to create their own GenAI assistant as part of our AI literacy curriculum. As they design, configure and test their own assistant, they will learn firsthand how generative AI works. They get to test performance-enhancement approaches beyond prompt engineering, such as grounding the learning assistant with curated materials (retrieval-augmented generation) and advanced ideas such as incorporating knowledge graphs.

They should have the opportunity to analyse, evaluate and create responsible AI solutions. Offering students the opportunity to build their own AI assistants could be a way forward to develop these much-needed skills.
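
To make the idea of grounding an assistant in curated materials more concrete, here is a minimal sketch of the retrieval step in retrieval-augmented generation. It is not Project NALA's implementation: the function names, the sample course notes, and the simple word-overlap scoring are all assumptions chosen for illustration, and a real system would typically use embeddings and then send the assembled prompt to a language model.

```python
import math
import re
from collections import Counter

# Hypothetical curated materials a student might load into their assistant.
COURSE_NOTES = [
    "Photosynthesis converts light energy into chemical energy stored in glucose.",
    "The mitochondria is where cellular respiration produces ATP.",
    "Office hours are held on Tuesdays at 3 pm in Room 204.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, documents, k=2):
    """Return up to k documents that share vocabulary with the question."""
    query = Counter(tokenize(question))
    scored = [(cosine(query, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def build_prompt(question, documents, k=2):
    """Assemble a grounded prompt: retrieved passages first, then the question."""
    context = "\n".join(f"- {p}" for p in retrieve(question, documents, k))
    return (
        "Answer using only the course materials below.\n\n"
        f"Materials:\n{context}\n\n"
        f"Question: {question}"
    )


print(build_prompt("When are office hours?", COURSE_NOTES, k=1))
```

The design choice worth noticing is that the model never sees the whole library, only the passages that score highest against the question, which is what lets students experiment with how the choice of curated materials changes the assistant's answers.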

How to Use ChatGPT 4o’s Update to Turn Key Insights Into Clear Infographics (Prompts Included) — from evakeiffenheim.substack.com by Eva Keiffenheim

This 3-step workflow helps you break down books, reports, or slide-decks into professional visuals that accelerate understanding.

This article shows you how to find core ideas, prompt GPT-4o3 for a design brief, and generate clean, professional images that stick. These aren’t vague “creative visuals”—they’re structured for learning, memory, and action.

If you’re a lifelong learner, educator, creator, or just someone who wants to work smarter, this process is for you.

You’ll spend less time re-reading and more time understanding. And maybe—just maybe—you’ll build ideas that not only click in your brain, but also stick in someone else’s.

SchoolAI Secures $25 Million to Help Teachers and Schools Reach Every Student — from globenewswire.com

The Classroom Experience platform gives every teacher and student their own AI tools for personalized learning

SchoolAI’s Classroom Experience platform combines AI assistants for teachers that help with classroom preparation and other administrative work, and Spaces: personalized AI tutors, games, and lessons that can adapt to each student’s unique learning style and interests. Together, these tools give teachers actionable insights into how students are doing, and how the teacher can deliver targeted support when it matters most.

“Teachers and schools are navigating hard challenges with shrinking budgets, teacher shortages, growing class sizes, and ongoing recovery from pandemic-related learning gaps,” said Caleb Hicks, founder and CEO of SchoolAI. “It’s harder than ever to understand how every student is really doing. Teachers deserve powerful tools to help extend their impact, not add to their workload. This funding helps us double down on connecting the dots for teachers and students, and later this year, bringing school administrators and parents at home onto the platform as well.”

AI in Education, Part 3: Looking Ahead – The Future of AI in Learning — from rdene915.com by Dr. Rachelle Dené Poth

In the first and second parts of my AI series, I focused on where we see AI in classrooms. Benefits range from personalized learning and accessibility tools to AI-driven grading and support of a teaching assistant. In Part 2, I chose to focus on some of the important considerations related to ethics that must be part of the conversation. Schools need to focus on data privacy, bias, overreliance, and the equity divide. I wanted to focus on the future for this last part in the current AI series. Where do we go from here?

Anthropic Education Report: How University Students Use Claude — from anthropic.com

The key findings from our Education Report are:

- STEM students are early adopters of AI tools like Claude, with Computer Science students particularly overrepresented (accounting for 36.8% of students’ conversations while comprising only 5.4% of U.S. degrees). In contrast, Business, Health, and Humanities students show lower adoption rates relative to their enrollment numbers.

- We identified four patterns by which students interact with AI, each of which was present in our data at approximately equal rates (each 23-29% of conversations): Direct Problem Solving, Direct Output Creation, Collaborative Problem Solving, and Collaborative Output Creation.

- Students primarily use AI systems for creating (using information to learn something new) and analyzing (taking apart the known and identifying relationships), such as creating coding projects or analyzing law concepts. This aligns with higher-order cognitive functions on Bloom’s Taxonomy. This raises questions about ensuring students don’t offload critical cognitive tasks to AI systems.
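
The overrepresentation claim in the first finding can be checked with simple arithmetic. The sketch below divides a field's share of conversations by its share of degrees, using only the two Computer Science figures quoted above. The function name is hypothetical, and this ratio is an illustrative reading of the numbers, not necessarily the report's own methodology.

```python
def representation_ratio(share_of_conversations, share_of_degrees):
    """Ratio above 1 means the field is overrepresented relative to degrees."""
    return share_of_conversations / share_of_degrees


# Figures quoted in the report excerpt: 36.8% of conversations, 5.4% of U.S. degrees.
cs_ratio = representation_ratio(36.8, 5.4)
print(f"Computer Science: {cs_ratio:.1f}x its share of degrees")
```

On these figures, Computer Science students account for roughly 6.8 times as many conversations as their share of degrees alone would predict.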

From the Kuali Days 2025 Conference: A CEO’s View of Planning for AI — from campustechnology.com by Mary Grush

A Conversation with Joel Dehlin

How can a company serving higher education navigate the changes AI brings to the ed tech marketplace? What will customers expect in this dynamic? Here, CT talks with Kuali CEO Joel Dehlin, who shared his company’s AI strategies in a featured plenary session, “Sneak Peek of AI in Kuali Build,” at Kuali Days 2025 in Anaheim.

How students can use generative AI — from aliciabankhofer.substack.com by Alicia Bankhofer

Part 4 of 4 in my series on Teaching and Learning in the AI Age

This article is the culmination of a series exploring AI’s impact on education.

Part 1: What Educators Need outlined essential AI literacy skills for teachers, emphasizing the need to move beyond basic ChatGPT exploration to understand the full spectrum of AI tools available in education.

Part 2: What Students Need addressed how students require clear guidance to use AI safely, ethically, and responsibly, with emphasis on developing critical thinking skills alongside AI literacy.

Part 3: How Educators Can Use GenAI presented ten practical use cases for teachers, from creating differentiated resources to designing assessments, demonstrating how AI can reclaim 5-7 hours weekly for meaningful student interactions.

Part 4: How Students Can Use GenAI (this article) provides frameworks for guiding student AI use based on Joscha Falck’s dimensions: learning about, with, through, despite, and without AI.

Mapping a Multidimensional Framework for GenAI in Education — from er.educause.edu by Patricia Turner

Prompting careful dialogue through incisive questions can help chart a course through the ongoing storm of artificial intelligence.

The goal of this framework is to help faculty, educational developers, instructional designers, administrators, and others in higher education engage in productive discussions about the use of GenAI in teaching and learning. As others have noted, theoretical frameworks will need to be accompanied by research and teaching practice, each reinforcing and reshaping the others to create understandings that will inform the development of approaches to GenAI that are both ethical and maximally beneficial, while mitigating potential harms to those who engage with it.

Instructional Design Isn’t Dying — It’s Specialising — from drphilippahardman.substack.com by Dr. Philippa Hardman

Aka, how AI is impacting the role & purpose of Instructional Design

Together, these developments have revealed something important: despite widespread anxiety, the instructional design role isn’t dying—it’s specialising.

What we’re witnessing isn’t the automation of instructional design and the death of the instructional designer, but rather the evolution of the ID role into multiple distinct professional pathways.

The generalist “full stack” instructional designer is slowly but decisively fracturing into specialised roles that reflect both the capabilities of generative AI and the strategic imperatives facing modern organisations.

In this week’s blog post, I’ll share what I’ve learned about how our field is transforming, and what it likely means for you and your career path.

Those instructional designers who cling to traditional generalist models risk being replaced, but those who embrace specialisation, data fluency, and AI collaboration will excel and lead the next evolution of the field. Similarly, those businesses that continue to view L&D as a cost centre and focus on automating content delivery will be outperformed, while those that invest in building agile, AI-enabled learning ecosystems will drive measurable performance gains and secure their competitive advantage.

Adding AI to Every Step in Your eLearning Design Workflow — from learningguild.com by George Hanshaw

We know that eLearning is a staple of training and development. The expectations of the learners are higher than ever: They expect a dynamic, interactive, and personalized learning experience. As instructional designers, we are tasked with meeting these expectations by creating engaging and effective learning solutions.

The integration of Artificial Intelligence (AI) into our eLearning design process is a game-changer that can significantly enhance the quality and efficiency of our work.

No matter if you use ADDIE or rapid prototyping, AI has a fit in every aspect of your workflow. By integrating AI, you can ensure a more efficient and effective design process that adapts to the unique needs of your learners. This not only saves time and resources but also significantly enhances the overall learning experience. We will explore the needs analysis and the general design process.